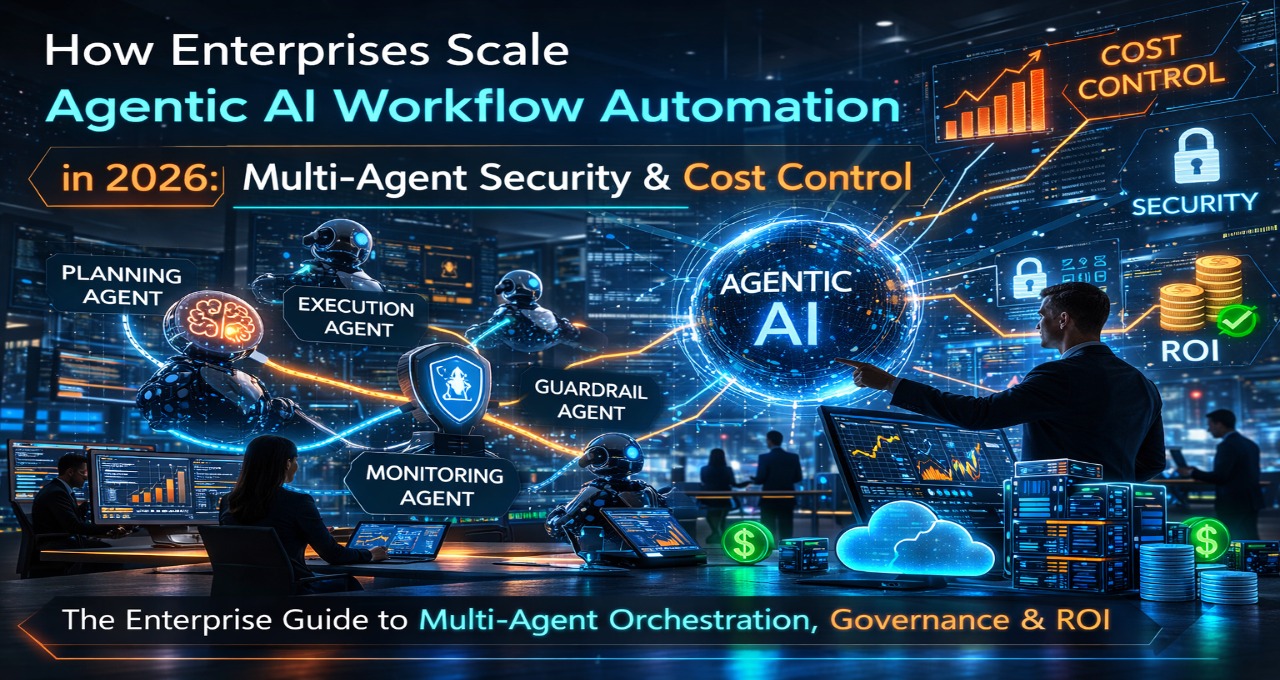

How Enterprises Secure Multi-Agent AI in 2026: mTLS-A, MCP & EU AI Act Governance

In 2026, enterprises secure multi-agent AI systems by implementing mTLS-A identity verification, Model Context Protocol (MCP) gateway enforcement, and deterministic governance layers aligned with EU AI Act Article 50 traceability requirements. Autonomous agents must operate under cryptographically verifiable intent, scoped permissions, and auditable semantic tracing to prevent cascade failures. Enterprise architects, CISOs, and governance leads …