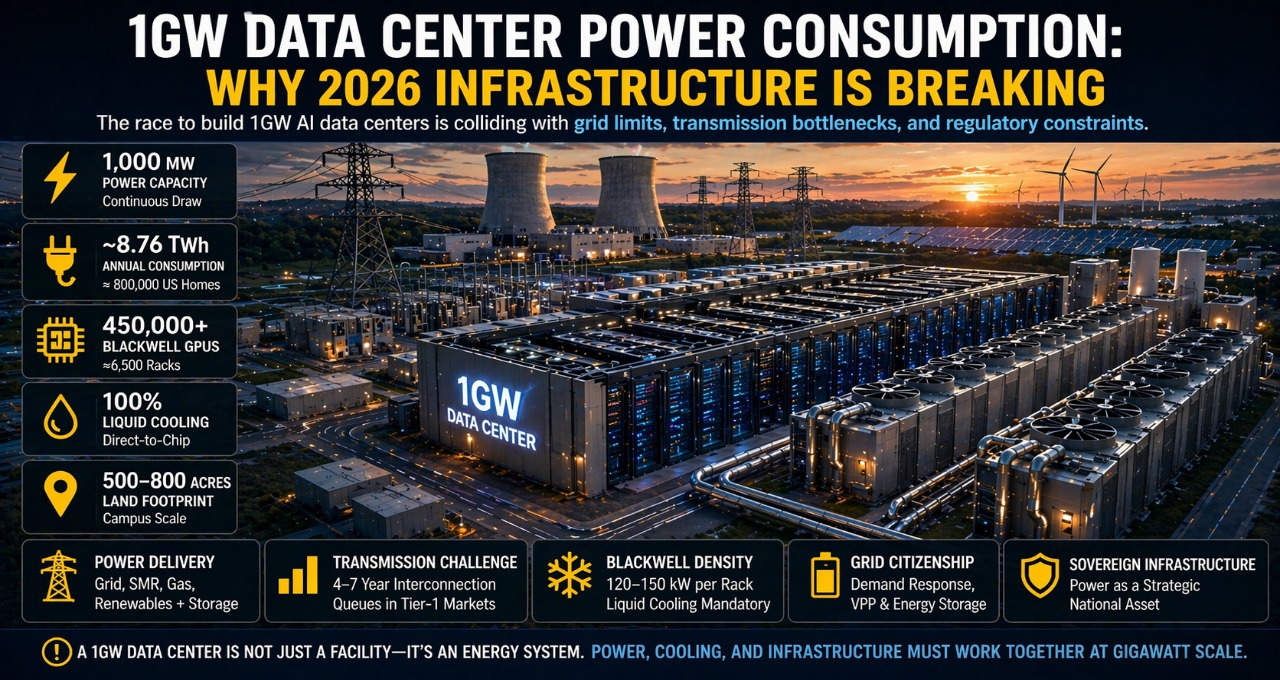

A 1GW data center consumes ~8.76 TWh annually, requires 450,000+ Blackwell GPUs, and demands direct access to generation and liquid cooling infrastructure. This guide covers everything infrastructure teams need to plan at gigawatt scale in 2026.

Gartner estimates global data center electricity demand will exceed 1,000 TWh by 2026, more than the total annual electricity consumption of Japan. That scale is no longer theoretical. The race to build 1GW AI data centers is now colliding with grid limits, transmission bottlenecks, and regulatory constraints. This guide breaks down exactly what a 1GW facility requires, and why most projects fail before the first GPU is installed.

Key Takeaway

A 1GW data center in 2026 is not just a large facility — it is an energy system. It requires 450,000+ GPUs, consumes approximately 8.76 TWh annually, and demands direct access to generation, transmission, and liquid cooling infrastructure. The organizations that succeed at this scale are those who treat power delivery as their primary engineering constraint — not compute capacity.

What Is a 1GW Data Center — And Why It Matters in 2026

A 1GW data center refers to a facility capable of continuously drawing 1,000 megawatts of electrical power to support AI workloads at scale.

This matters in 2026 because AI infrastructure has crossed a structural threshold. Hyperscale is no longer 50–100 MW. Frontier model training and global inference now demand gigawatt-scale deployments — and the gap between planned capacity and deliverable power is widening fast.

The first wave of genuine 1GW campuses is already under construction, and the infrastructure constraints are becoming visible in real time.

The shift is structural. Training used to dominate compute budgets; by widely cited industry estimates, inference now accounts for 80–90% of total AI compute load across major vendor deployments. That means constant, globally distributed demand, not periodic burst workloads that allow grid recovery between jobs.

Who this directly affects:

- Hyperscalers building frontier AI training and inference clusters

- Governments investing in sovereign AI infrastructure at national scale

- Infrastructure investors funding multi-billion-dollar campus developments

- Enterprises scaling private AI deployments beyond 100 MW

As inference workloads scale, architectural decisions at the facility level increasingly intersect with application-layer efficiency. Organizations comparing enterprise AI search and RAG system architectures will find that workload design directly determines how much compute — and therefore how much power — each query consumes.

1GW Data Center: What the Numbers Actually Look Like

Before any infrastructure planning begins, the scale must be made concrete. Here is what a 1GW facility actually requires:

| Metric | 1GW Benchmark (2026) |
|---|---|
| Power Capacity | 1,000 MW continuous draw |
| Annual Consumption | ~8.76 TWh |
| GPU Capacity | 450,000–500,000 Blackwell GPUs |
| Rack Density | 120–150 kW per rack |
| Cooling Requirement | 100% liquid cooling |
| Land Footprint | 500–800 acres |
| Estimated CAPEX (facility, excluding GPUs) | $10B–$15B |
| Water Usage (DLC) | 500M–1B gallons/year |

To put the power numbers into perspective: 1GW equals the output of a large nuclear reactor, the electricity consumption of approximately 800,000 US homes, and roughly 10× the scale of traditional hyperscale facilities built before 2022.
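Both the 8.76 TWh figure and the 800,000-home figure come from simple arithmetic: continuous power multiplied by the 8,760 hours in a year. The Python sketch below reproduces them. The per-home consumption value of about 10,800 kWh per year is an assumption chosen to approximate recent US averages. It is not a figure from this guide, so substitute your own benchmark.

```python
HOURS_PER_YEAR = 8_760  # 365 days x 24 hours (leap years ignored)


def annual_energy_twh(load_mw: float, utilization: float = 1.0) -> float:
    """Energy in TWh for a load of `load_mw` drawn for `utilization` of the year."""
    mwh = load_mw * utilization * HOURS_PER_YEAR
    return mwh / 1_000_000  # 1 TWh = 1,000,000 MWh


def homes_equivalent(twh: float, kwh_per_home: float = 10_800) -> int:
    """Number of average homes that use the same energy in a year.

    ASSUMPTION: ~10,800 kWh per home per year is an illustrative default.
    """
    kwh = twh * 1_000_000_000  # 1 TWh = 1e9 kWh
    return round(kwh / kwh_per_home)


energy = annual_energy_twh(1_000)  # 1GW at continuous draw
print(f"{energy:.2f} TWh/year")
print(f"~{homes_equivalent(energy):,} US homes")
```

Under that assumption, 8.76 TWh corresponds to roughly 811,000 homes, which is in line with the ~800,000 figure above. The `utilization` parameter exists because a real facility rarely draws its full nameplate power every hour of the year.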

For a full breakdown of how these power requirements scale across different deployment types — from edge clusters to enterprise facilities — see our complete AI data center power requirements guide for 2026.

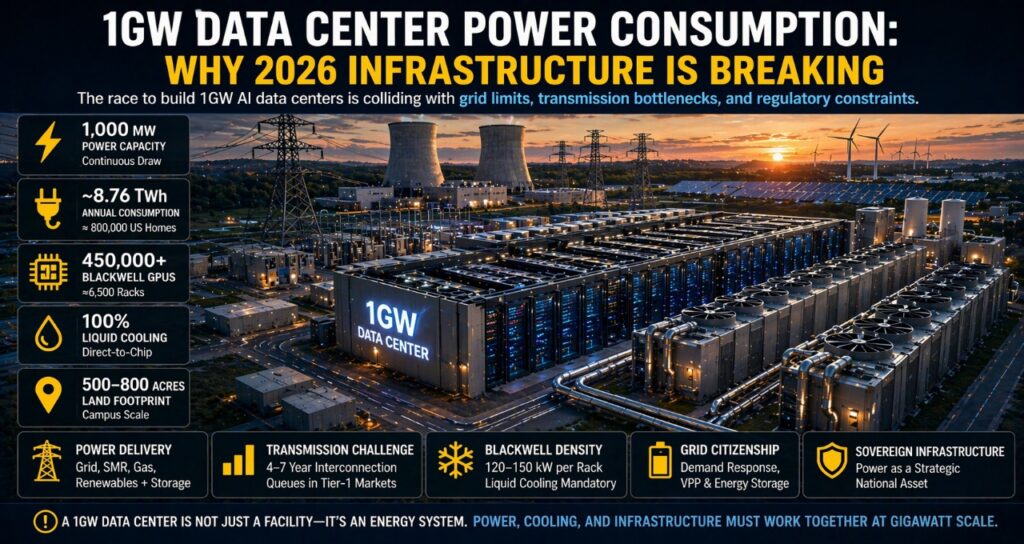

The Transmission Bottleneck: Why 1GW Rarely Reaches the Rack

Here is the uncomfortable truth about gigawatt-scale planning: securing 1GW of power generation is not the hardest problem. Delivering that power to your facility is.

Transmission infrastructure is now the binding constraint in every major data center market:

- US interconnection queues (generation and storage projects awaiting grid connection) exceeded 1,500 GW as of 2025 (Lawrence Berkeley National Laboratory)

- New high-capacity grid connections in Tier-1 markets (Northern Virginia, Dublin, Singapore) take 4–7 years

- Substation capacity frequently caps usable delivery at 250–500 MW, regardless of generation availability

- Transformer lead times have extended to 2–3 years due to global manufacturing bottlenecks

This means a facility designed for 1GW may be physically limited to 400–600 MW of actual delivered power for years after opening. The gap between nameplate capacity and operational capacity is where most gigawatt-scale projects fail.
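The gap between nameplate and delivered power behaves like a chain of series constraints. Power has to pass through generation, transmission, the substation, and on-site distribution in turn, so the smallest link determines what reaches the racks. The minimal sketch below illustrates the idea. The `PowerChain` class and all capacity values are hypothetical. The 450 MW substation figure was picked from the 250–500 MW range cited above.

```python
from dataclasses import dataclass


@dataclass
class PowerChain:
    """Capacity (MW) at each stage between generation and the racks.

    HYPOTHETICAL structure and values, for illustration only."""
    generation_mw: float
    transmission_mw: float
    substation_mw: float
    onsite_distribution_mw: float

    def stages(self) -> dict[str, float]:
        return {
            "generation": self.generation_mw,
            "transmission": self.transmission_mw,
            "substation": self.substation_mw,
            "on-site distribution": self.onsite_distribution_mw,
        }

    def deliverable_mw(self) -> float:
        # Power flows through every stage in series, so the smallest
        # capacity is what actually reaches the facility.
        return min(self.stages().values())

    def binding_stage(self) -> str:
        stages = self.stages()
        return min(stages, key=lambda name: stages[name])


site = PowerChain(generation_mw=1_000, transmission_mw=800,
                  substation_mw=450, onsite_distribution_mw=1_000)
stranded = site.generation_mw - site.deliverable_mw()
print(f"Deliverable: {site.deliverable_mw():.0f} MW "
      f"(binding: {site.binding_stage()}, stranded: {stranded:.0f} MW)")
```

In this example, 550 MW of contracted generation stays stranded until the substation is upgraded. That is why adding generation alone does not close the gap.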

Regulatory constraints add another layer. Policies like Texas SB6 allow grid operators to curtail industrial loads during demand emergencies. For AI inference workloads running at continuous global scale, curtailment is not a minor operational inconvenience — it is a failure event with direct downstream impact on service availability.

This is precisely why hyperscalers have pivoted toward three alternative models:

1. Direct-to-wire nuclear connections: bypassing the transmission queue entirely by co-locating compute with generation.

2. Behind-the-meter generation: on-site gas turbines or small modular reactors (SMRs) that never touch the public grid.

3. Distributed campus architecture: splitting the 1GW load across multiple 250–300 MW sites in different markets, reducing single-point grid dependency.

The constraint is no longer compute. It is electricity delivery.

Power Strategies at 1GW Scale: A Full Comparison

No single power source adequately addresses the availability, cost, and carbon requirements of a 1GW facility. The 2026 model is hybrid by necessity.

| Strategy | Best For | Key Strength | Key Limitation | 2026 Verdict |
|---|---|---|---|---|
| Grid Power (Utility) | Existing hubs with contracts | Established, regulated | 4–7-year connection delays | Limited scalability above 500 MW |
| SMRs (Nuclear) | Hyperscalers, 10+ year horizons | 24/7 carbon-free baseload | High capex, regulatory complexity | Future-proof, not near-term |
| Natural Gas On-Site | Fast deployment needs | Firm, dispatchable | Carbon liability, fuel exposure | Transitional bridge only |
| Renewables + Storage | EU compliance markets | Low carbon, mandate-ready | Intermittency at 1GW scale | Viable with deep storage |
| Hybrid Portfolio | All 1GW operators | Redundancy + flexibility | Operational complexity | Recommended baseline |
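One way to see why a hybrid portfolio is the recommended baseline is to size the nameplate capacity each source would need to cover a share of a constant 1GW load on an annual-energy basis. The capacity factors in the sketch below are assumed placeholders, not values from the table. The 50/30/20 mix is purely hypothetical.

```python
HOURS_PER_YEAR = 8_760
FACILITY_MW = 1_000  # constant 1GW load

# ASSUMED capacity factors (average output / nameplate); placeholders only.
ASSUMED_CAPACITY_FACTORS = {"nuclear": 0.90, "wind": 0.35, "solar": 0.25}


def nameplate_needed_mw(share: float, capacity_factor: float,
                        facility_mw: float = FACILITY_MW) -> float:
    """Nameplate MW whose annual output covers `share` of the facility's energy."""
    energy_needed_mwh = share * facility_mw * HOURS_PER_YEAR
    # A plant's annual output is nameplate * capacity factor * hours.
    return energy_needed_mwh / (capacity_factor * HOURS_PER_YEAR)


hypothetical_mix = {"nuclear": 0.5, "wind": 0.3, "solar": 0.2}  # energy shares
for source, share in hypothetical_mix.items():
    mw = nameplate_needed_mw(share, ASSUMED_CAPACITY_FACTORS[source])
    print(f"{source:>7}: {mw:,.0f} MW nameplate for {share:.0%} of annual energy")
```

Under these assumptions, the variable sources need far more nameplate than their energy share suggests: about 857 MW of wind to supply 30% of the energy. Annual energy matching also says nothing about hour-by-hour coverage of a constant load, and that is the gap deep storage has to fill.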

In regulated European markets, the power strategy question is inseparable from regulatory compliance. This aligns directly with the EU sovereign AI infrastructure stack, where member states treat energy sovereignty as a prerequisite for compute sovereignty — meaning your power source is also a geopolitical decision.

How Many GPUs Can a 1GW Data Center Support?

The GPU math at 1GW scale is significant enough to drive facility design decisions. Here is the breakdown using current-generation Blackwell hardware:

Input assumptions:

- GB200 NVL72 rack: 140 kW per rack, 72 GPUs per rack

- Facility PUE: 1.1 (achievable with full direct liquid cooling)

- Usable compute power: 1,000 MW ÷ 1.1 = ~910 MW

Output:

- Total racks: 910,000 kW ÷ 140 kW = ~6,500 racks

- Total GPUs: 6,500 × 72 = ~468,000 B200 GPUs

- Annual energy consumption: ~8.76 TWh for the whole facility at continuous operation, of which roughly 7.97 TWh (910 MW × 8,760 hours) reaches the IT equipment

At H100 density (40 kW/rack, 8 GPUs/rack) the same facility would support approximately 22,750 racks and 182,000 GPUs — but deliver a fraction of the inference throughput per megawatt. The density difference between generations is what makes Blackwell both the most powerful and most infrastructure-demanding option available.
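The rack and GPU arithmetic above can be wrapped in a small function, which makes it easy to test other PUE, rack-power, and GPUs-per-rack assumptions. Like the list above, this sketch implicitly assumes that all IT power goes to GPU racks, with no separate budget for storage or networking. It also counts only whole racks.

```python
import math

HOURS_PER_YEAR = 8_760


def gpu_capacity(facility_mw: float, pue: float, rack_kw: float,
                 gpus_per_rack: int) -> dict:
    """Estimate how many racks and GPUs a facility can power at a given PUE."""
    it_mw = facility_mw / pue                      # power left after facility overhead
    racks = math.floor(it_mw * 1_000 / rack_kw)    # whole racks only
    return {
        "it_mw": it_mw,
        "racks": racks,
        "gpus": racks * gpus_per_rack,
        "it_twh_per_year": it_mw * HOURS_PER_YEAR / 1_000_000,
    }


blackwell = gpu_capacity(1_000, pue=1.1, rack_kw=140, gpus_per_rack=72)
h100 = gpu_capacity(1_000, pue=1.1, rack_kw=40, gpus_per_rack=8)
print(blackwell["racks"], blackwell["gpus"])
print(h100["racks"], h100["gpus"])
```

The function floors to whole racks and does not round IT power up to 910 MW. As a result it returns 6,493 racks and 467,496 GPUs for Blackwell, and 22,727 racks and 181,816 GPUs at H100 density, both slightly below the rounded figures in the text. The `it_twh_per_year` value of about 7.96 TWh is the share of the facility's 8.76 TWh that reaches IT equipment at PUE 1.1.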

These density dynamics are explored in depth in our analysis of Blackwell infrastructure deployments across Europe, where facilities are being redesigned from the ground up around power delivery limits rather than floor area.

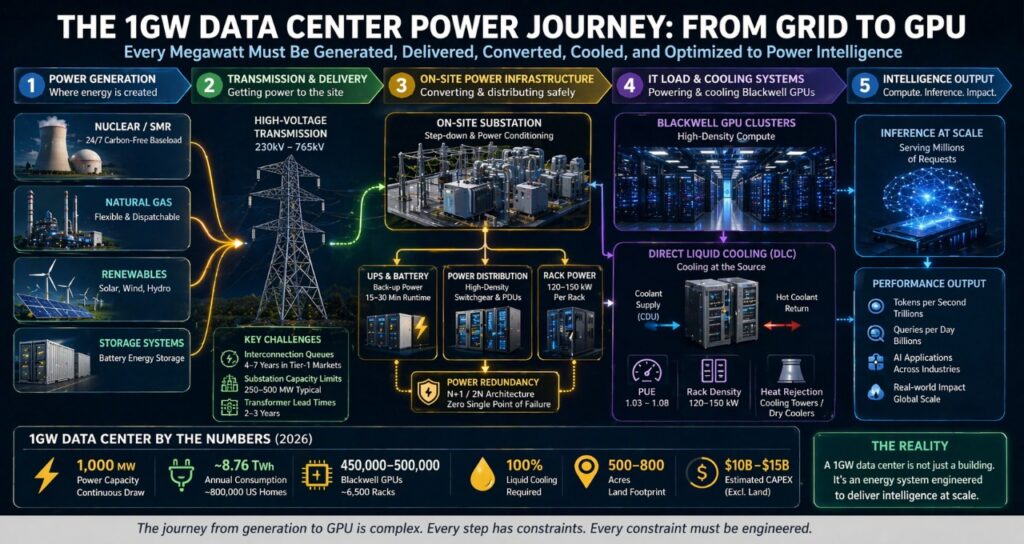

The Blackwell Density Problem: Legacy Infrastructure Cannot Adapt

Blackwell has not just raised the bar for GPU performance — it has broken the assumptions underlying every data center built before 2023.

| Generation | Rack Power Draw | Cooling Requirement | Viable in Legacy Facility? |
|---|---|---|---|
| V100 (2018) | 10–15 kW/rack | Air cooling | Yes |
| A100 (2020) | 20–30 kW/rack | Air or hybrid | Partially |
| H100 (2022) | 35–45 kW/rack | Air or hybrid | With upgrades |
| Blackwell B200 (2024) | 120–150 kW/rack | Liquid cooling mandatory | No |

The 10× increase in rack density from legacy to Blackwell is not an incremental upgrade problem. It is a structural incompatibility. Deploying Blackwell in a facility designed for 15 kW racks creates three immediate failure modes:

Power distribution failure — existing PDUs, busbars, and cabling are not rated for 140 kW per cabinet. Overloading causes thermal failure at the distribution layer before the GPU even throttles.

Cooling failure — air handlers designed for 15 kW/rack cannot dissipate 140 kW. GPU junction temperatures exceed safe operating limits within minutes, triggering thermal throttling that reduces effective compute by 40–60%.

Structural failure — a 140 kW Blackwell rack with liquid cooling infrastructure weighs significantly more than legacy racks. Raised floors rated for 150–200 lbs/sq ft cannot support the load without reinforcement.
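A first-pass structural screen divides rack weight by footprint and compares the result with the floor rating. In the sketch below, the 3,000 lb rack weight and the 2 ft × 4 ft footprint are hypothetical placeholders, not NVIDIA specifications. For a real check, use the vendor's published weight including coolant and manifolds.

```python
def floor_load_psf(rack_weight_lb: float, width_ft: float, depth_ft: float) -> float:
    """Average load in lb per square foot, spread evenly over the rack footprint."""
    return rack_weight_lb / (width_ft * depth_ft)


def needs_reinforcement(load_psf: float, floor_rating_psf: float) -> bool:
    """True if the average rack load exceeds the floor's rated capacity."""
    return load_psf > floor_rating_psf


# HYPOTHETICAL rack: 3,000 lb on a 2 ft x 4 ft footprint.
load = floor_load_psf(rack_weight_lb=3_000, width_ft=2.0, depth_ft=4.0)
for rating in (150, 200):  # raised-floor ratings cited above
    print(f"{load:.0f} psf vs {rating} psf rating -> reinforce: "
          f"{needs_reinforcement(load, rating)}")
```

At 375 lb/sq ft, the hypothetical rack exceeds both the 150 and 200 lb/sq ft ratings. A uniform-load average is also optimistic, because real checks consider concentrated loads at casters and leveling feet. Treat this as a screen for when to involve a structural engineer, not a substitute for one.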

The practical conclusion: any organization planning a 1GW Blackwell deployment must treat it as a greenfield infrastructure project, not an upgrade of existing capacity.

Cooling at 1GW Scale: Liquid Cooling Is the Only Option

At gigawatt scale with Blackwell density, cooling is not an engineering optimization — it is an existential constraint. Facilities that cannot cool at 140 kW per rack cannot run Blackwell GPUs at rated performance. Full stop.

| Cooling Type | Max Rack Density | PUE Range | Status at 1GW Scale |
|---|---|---|---|
| Traditional air cooling | Up to 20 kW/rack | 1.3–1.6 | Obsolete for AI |
| Rear-door heat exchangers | 20–40 kW/rack | 1.2–1.4 | Transitional only |
| Direct liquid cooling (DLC) | 40–150 kW/rack | 1.03–1.1 | Current standard |
| Full immersion cooling | 100–200+ kW/rack | 1.02–1.05 | Advanced, growing |

Direct Liquid Cooling (DLC) is the 2026 baseline for any 1GW AI facility. It works by running coolant directly to cold plates mounted on GPU die packages, removing heat at the source rather than relying on air movement through the rack.

At 1GW scale, the infrastructure implications of DLC are significant:

- Coolant distribution units (CDUs) must be sized for the full rack load — at 1GW, that means thousands of CDUs across the campus

- Leak detection and containment systems are safety-critical — a coolant leak in a live rack can cause catastrophic hardware failure

- Water usage at scale is substantial — a 1GW DLC facility may consume 500 million to 1 billion gallons of water annually depending on climate and cooling tower design

- Heat rejection infrastructure (cooling towers, dry coolers) must be designed for the full thermal load from day one

The efficiency gain justifies the complexity. A well-designed DLC system achieves PUE of 1.03–1.08 at scale, compared to 1.3–1.5 for air-cooled equivalents. At 1GW, the difference in overhead power — and therefore operating cost — runs to hundreds of millions of dollars annually.
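The "hundreds of millions" claim can be checked directly from the definition of PUE. Because PUE is total facility power divided by IT power, overhead equals IT power × (PUE − 1). The sketch below holds an assumed 900 MW IT load constant and compares a DLC PUE of 1.08 with an air-cooled PUE of 1.4. It uses the $0.07–$0.12/kWh US price range quoted elsewhere in this guide.

```python
HOURS_PER_YEAR = 8_760


def overhead_mw(it_mw: float, pue: float) -> float:
    """Non-IT power (cooling, power conversion, etc.) implied by a PUE."""
    # PUE = total facility power / IT power, so overhead = IT * (PUE - 1).
    return it_mw * (pue - 1)


def annual_cost_gap_usd(it_mw: float, pue_low: float, pue_high: float,
                        usd_per_kwh: float) -> float:
    """Extra yearly energy cost of running at pue_high instead of pue_low."""
    delta_mw = overhead_mw(it_mw, pue_high) - overhead_mw(it_mw, pue_low)
    delta_kwh = delta_mw * 1_000 * HOURS_PER_YEAR  # MW -> kW, times hours
    return delta_kwh * usd_per_kwh


IT_LOAD_MW = 900  # ASSUMPTION: same IT load for both cooling designs
for price in (0.07, 0.12):
    gap = annual_cost_gap_usd(IT_LOAD_MW, pue_low=1.08, pue_high=1.4,
                              usd_per_kwh=price)
    print(f"${price:.2f}/kWh: ~${gap / 1e6:,.0f}M more per year at PUE 1.4")
```

The overhead gap comes to about 288 MW, or roughly $177M to $303M per year across that price range, which is consistent with the claim above.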

The Rise of Virtual Power Plants: 1GW Facilities as Grid Assets

One of the most significant and underreported developments in 2026 is the regulatory shift treating large AI data centers as active grid participants rather than passive consumers.

Under emerging frameworks in the US, EU, and India, facilities above 500 MW are increasingly required to:

- Participate in demand response programs — reducing load on grid operator request during peak demand events

- Maintain on-site storage sufficient to cover at least 15–30 minutes of full load

- Provide grid stabilization services including frequency response and reactive power support
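The 15–30 minute storage requirement converts directly to battery energy, since MWh equals MW multiplied by hours. The sketch below applies that at full 1GW load. The `usable_fraction` derating for depth-of-discharge and conversion losses is an added assumption. It defaults to 1.0, which models an ideal battery.

```python
def storage_mwh(load_mw: float, minutes: float, usable_fraction: float = 1.0) -> float:
    """Battery energy (MWh) needed to carry `load_mw` for `minutes`.

    `usable_fraction` is an ASSUMED derating for depth-of-discharge and
    conversion losses; 1.0 models an ideal battery."""
    hours = minutes / 60
    return load_mw * hours / usable_fraction


for minutes in (15, 30):
    print(f"{minutes} min at 1,000 MW: {storage_mwh(1_000, minutes):,.0f} MWh")
```

At 1,000 MW this gives 250 MWh for 15 minutes and 500 MWh for 30 minutes, or hundreds of MWh of on-site storage. Any realistic derating pushes the number higher.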

This creates a new operational model: the AI Data Center as Virtual Power Plant (VPP).

The key components of a VPP-capable 1GW facility:

Battery storage systems — sufficient capacity to bridge demand response events without interrupting inference workloads. At 1GW, this requires hundreds of MWh of on-site battery storage.

Thermal buffering via liquid cooling — DLC systems with thermal mass can absorb load fluctuations for short periods, effectively acting as a storage buffer without requiring battery discharge.

Intelligent load management — AI-driven systems that can shed non-critical inference workloads within seconds of a grid signal, maintaining SLA commitments on priority traffic while reducing facility draw.
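At its core, intelligent load management is a prioritization problem. The sketch below shows one simple approach: a greedy shed plan that pauses the lowest-priority workloads first and never touches workloads marked as SLA-protected. The `Workload` type, the priority scale, and the example fleet are all hypothetical. A production system would also need to weigh restart costs and ramp rates.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    name: str
    load_mw: float
    priority: int  # higher = more critical; HYPOTHETICAL scale


def plan_shed(workloads: list[Workload], target_reduction_mw: float,
              protected_priority: int) -> tuple[list[str], float]:
    """Greedy shed plan: pause the lowest-priority workloads first until the
    grid operator's reduction target is met. Workloads at or above
    `protected_priority` (e.g. SLA-bound traffic) are never shed."""
    shed, reduced = [], 0.0
    for w in sorted(workloads, key=lambda w: w.priority):
        if reduced >= target_reduction_mw:
            break
        if w.priority >= protected_priority:
            break  # sorted ascending, so every remaining workload is protected
        shed.append(w.name)
        reduced += w.load_mw
    return shed, reduced


fleet = [  # HYPOTHETICAL workloads
    Workload("batch-eval", 60, priority=1),
    Workload("embedding-backfill", 90, priority=2),
    Workload("free-tier-chat", 120, priority=3),
    Workload("enterprise-api", 400, priority=9),
]
shed, reduced = plan_shed(fleet, target_reduction_mw=150, protected_priority=5)
print(shed, reduced)
```

If protected load prevents the target from being met, the returned reduction falls short. For example, a 400 MW request against this fleet yields only 270 MW. The remainder then has to come from battery discharge or thermal buffering.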

The VPP model also creates a revenue opportunity. Grid stabilization services in markets like Texas, California, and Germany command significant capacity payments — partially offsetting the operating cost of the storage infrastructure required to participate.

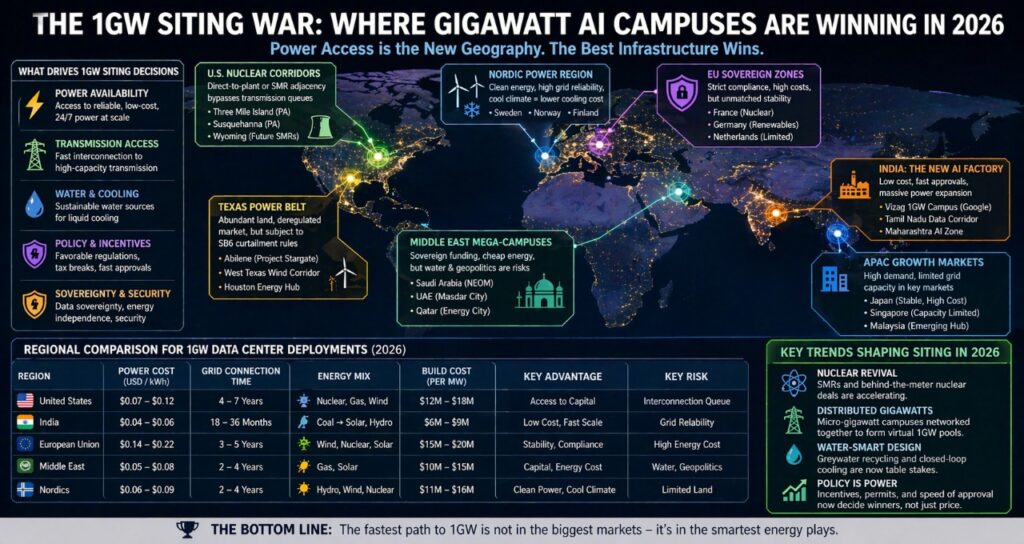

The 1GW Siting War: Power Access Is the New Geography

The era of siting data centers near population centers for latency reasons is ending. At 1GW scale, the dominant siting criterion is proximity to power — not proximity to users.

Traditional hubs are under severe pressure. Northern Virginia, the world’s largest data center market, now faces 7-year connection delays and active moratoriums on new large-scale connections in some counties. Dublin and Singapore have implemented capacity limits. Amsterdam has imposed a de facto ban on new hyperscale development.

The new siting map follows three vectors:

1. Nuclear Adjacency

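Locating 1GW facilities adjacent to existing nuclear plants, or building SMRs on-site, bypasses the transmission queue entirely. Microsoft’s Three Mile Island deal and Amazon’s Susquehanna agreement are the best-known templates for this model, though they are structured differently: Microsoft’s deal is a long-term power purchase agreement for the restarted plant’s output rather than a co-located campus. In a fully co-located design, the facility connects directly to the generator bus, eliminating transmission losses and avoiding the standard interconnection wait.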

2. Emerging Market Arbitrage

India is emerging as the most significant new 1GW market due to three structural advantages: build cost of $6–9M per MW versus $12–18M in the US, government approval timelines of 18–36 months versus 4–7 years, and rapidly expanding grid capacity driven by national electrification programs. The risk is grid reliability — India’s grid still experiences significant volatility in many regions, requiring substantial on-site backup.

3. Sovereign Infrastructure Programs

Multiple governments — including France, Saudi Arabia, UAE, and Japan — are treating 1GW AI campuses as national strategic assets, funding them through sovereign wealth vehicles and providing dedicated grid connections outside normal interconnection queues. These programs offer the fastest path to power access but come with significant operational constraints around data residency, security, and procurement.

Regional Risk Matrix: Where to Build a 1GW Facility

| Factor | United States | India | European Union |
|---|---|---|---|
| Power Cost | $0.07–0.12/kWh | $0.04–0.06/kWh | $0.14–0.22/kWh |
| Grid Connection Time | 4–7 years | 18–36 months | 3–5 years |
| Primary Energy Mix | Nuclear, gas, wind | Coal transitioning to solar | Wind, nuclear, gas |
| Regulatory Complexity | Moderate | High (improving) | Very high |
| Build Cost per MW | $12M–$18M | $6M–$9M | $15M–$20M |
| Grid Reliability | High | Moderate | High |
| Sovereignty Risk | Low | Moderate | Low (within EU) |
| Carbon Compliance Pressure | Moderate | Low | Very high |

The EU’s high cost and compliance burden are partially offset by grid reliability, political stability, and access to renewable energy at scale. For operators with EU-based customers and data residency requirements, the premium is not optional.

The 1GW Intelligence Factory: A Layered Planning Framework

Infrastructure failures at gigawatt scale are almost never caused by the layer teams focus on most. They happen in the layers assumed to be solved. Use this framework to audit where your real constraint sits before committing capital:

Layer 1 — Generation: Where is your power produced? Is it firm (nuclear, gas) or variable (wind, solar)? Do you have contractual certainty over capacity for the facility lifetime?

Layer 2 — Transmission: Can that power physically reach your site? What is your interconnection queue position? What is your substation headroom? This is where most 1GW projects stall — and where the gap between paper capacity and operational reality lives.

Layer 3 — Facility: Is your cooling architecture rated for 140 kW racks? Is your power distribution infrastructure (switchgear, PDUs, busbars) rated for the full load? Is your structural loading adequate for DLC infrastructure?

Layer 4 — Compute: What GPU generation are you deploying? What is your actual utilization target? Are you optimizing rack layout for thermal efficiency or just density?

Layer 5 — Inference: What is your tokens-per-watt ratio? Are you running batched inference? Have you evaluated model quantization or distillation to reduce per-token compute cost? This is the layer with the most headroom for efficiency gains — and the one most teams ignore until the power bill arrives.

Most 1GW project failures occur at Layer 2. Most optimization opportunities sit at Layer 5. Teams that audit both before breaking ground have a fundamentally different outcome profile than those that focus solely on Layer 4.
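Once throughput and power are measured, the Layer 5 metric is easy to compute. A watt is one joule per second, so tokens per second divided by watts gives tokens per joule. The sketch below reports tokens per kWh. It can optionally multiply IT power by PUE, so that each token carries its share of facility overhead. The throughput numbers are hypothetical and serve only to show how an optimization such as batching or quantization would register in the metric.

```python
JOULES_PER_KWH = 3.6e6  # 1 kWh = 1,000 W * 3,600 s


def tokens_per_kwh(tokens_per_second: float, it_watts: float, pue: float = 1.0) -> float:
    """Useful tokens generated per kWh of facility energy.

    Watts are joules per second, so tokens/s divided by watts is tokens per
    joule. Multiplying IT power by PUE charges each token its share of
    cooling and other facility overhead."""
    facility_watts = it_watts * pue
    return tokens_per_second / facility_watts * JOULES_PER_KWH


# HYPOTHETICAL throughput for one 140 kW rack serving the same model two ways.
RACK_WATTS = 140_000
baseline = tokens_per_kwh(50_000, RACK_WATTS, pue=1.1)
optimized = tokens_per_kwh(200_000, RACK_WATTS, pue=1.1)  # e.g. after batching
print(f"baseline:  {baseline:,.0f} tokens/kWh")
print(f"optimized: {optimized:,.0f} tokens/kWh ({optimized / baseline:.1f}x)")
```

Measured this way, any Layer 5 improvement at constant power shows up one-for-one in energy efficiency. Any Layer 3 improvement in PUE shows up as well, which is why the metric cuts across the framework.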

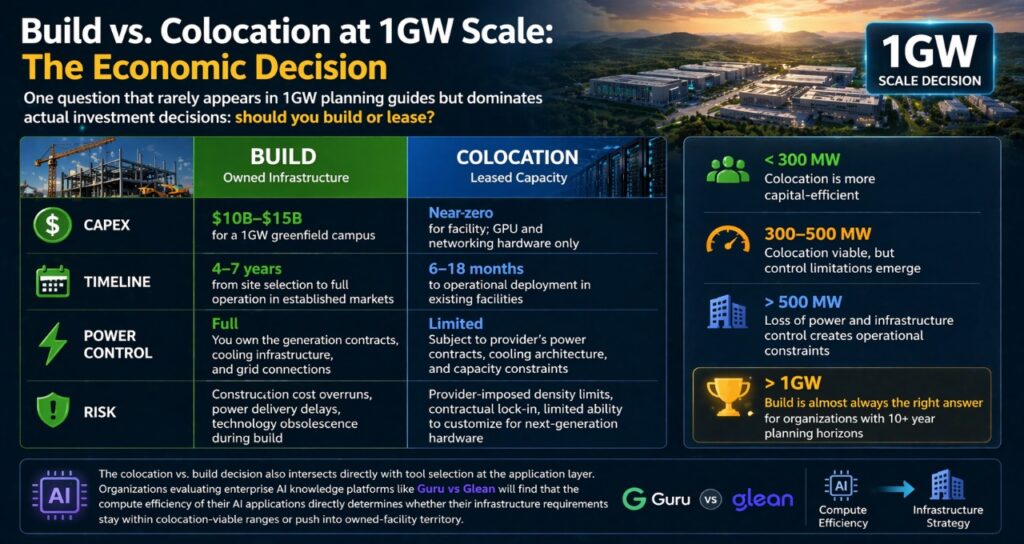

Build vs. Colocation at 1GW Scale: The Economic Decision

One question that rarely appears in 1GW planning guides but dominates actual investment decisions: should you build or lease?

At gigawatt scale, the economics shift significantly compared to smaller deployments.

Build (owned infrastructure):

- CAPEX: $10B–$15B for a 1GW greenfield campus

- Timeline: 4–7 years from site selection to full operation in established markets

- Power control: Full — you own the generation contracts, cooling infrastructure, and grid connections

- Risk: Construction cost overruns, power delivery delays, technology obsolescence during build

Colocation (leased capacity):

- CAPEX: Near-zero for facility; GPU and networking hardware only

- Timeline: 6–18 months to operational deployment in existing facilities

- Power control: Limited — subject to provider’s power contracts, cooling architecture, and capacity constraints

- Risk: Provider-imposed density limits, contractual lock-in, limited ability to customize for next-generation hardware

For most organizations — including many that self-identify as hyperscalers — the colocation model remains more capital-efficient below 300 MW. Above 500 MW, the loss of power and infrastructure control starts to create operational constraints that outweigh the CAPEX savings. Above 1GW, build is almost always the right answer for organizations with 10+ year planning horizons.
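The build-versus-lease thresholds above can be encoded as a simple screening rule. The 300 MW, 500 MW, 1GW, and 10-year thresholds come from this guide. How the sketch treats the 300–500 MW band, and 1GW+ projects with shorter horizons, is an assumption.

```python
def build_or_colo(capacity_mw: float, planning_horizon_years: int) -> str:
    """Screening heuristic based on this guide's thresholds.

    Handling of the 300-500 MW band and of short-horizon 1GW+ projects
    is an ASSUMPTION, not guidance from the article."""
    if capacity_mw < 300:
        return "colocation"              # more capital-efficient below ~300 MW
    if capacity_mw >= 1_000 and planning_horizon_years >= 10:
        return "build"                   # 1GW+ with a 10+ year horizon
    if capacity_mw > 500:
        return "lean build"              # loss of control starts to outweigh CAPEX savings
    return "evaluate case by case"       # 300-500 MW: no clear default


for mw, years in [(200, 5), (400, 20), (700, 5), (1_200, 15)]:
    print(f"{mw} MW, {years}-year horizon: {build_or_colo(mw, years)}")
```

A rule like this is only a first filter. Power contracts, hardware roadmaps, and provider density limits still decide the final answer.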

The colocation vs. build decision also intersects directly with tool selection at the application layer. Organizations evaluating enterprise AI knowledge platforms such as Guru and Glean will find that the compute efficiency of their AI applications directly determines whether their infrastructure requirements stay within colocation-viable ranges or push into owned-facility territory.

The Contrarian View: Scale Is Not the Goal

The conventional narrative is that bigger AI data centers are more efficient, more competitive, and more future-proof.

The reality in 2026 is more nuanced.

A poorly optimized 1GW facility can waste more energy — and generate less useful AI output — than three well-designed 200 MW clusters running efficient inference workloads. Scale creates economic efficiency in procurement and operations, but it does not automatically create efficiency in compute output.

The metric that separates successful 1GW operators from expensive ones is not megawatts under management. It is intelligence per megawatt — the amount of useful AI output generated per unit of energy consumed. This is a function of hardware generation, workload design, inference optimization, and cooling efficiency working together.

Organizations that treat the 1GW facility as the goal will build impressive infrastructure. Organizations that treat tokens per watt as the goal will build competitive businesses.

For a detailed breakdown of how inference workload design affects power consumption at the facility level, see our AI data center power requirements analysis.

FAQ

What is a 1GW data center?

A 1GW data center is a facility designed to continuously draw 1,000 megawatts of electrical power to support large-scale AI workloads, primarily frontier model training and global inference serving. At this scale, the facility functions as an energy system: it requires dedicated generation or transmission infrastructure, full liquid cooling, and active grid management capabilities. The first wave of genuine 1GW campuses entered construction between 2024 and 2026, driven by hyperscaler demand for frontier AI compute.

How much electricity does a 1GW data center consume annually?

A 1GW facility operating continuously consumes approximately 8.76 TWh per year (1,000 MW × 8,760 hours). That is equivalent to the annual electricity usage of around 800,000 US homes, or roughly the total electricity consumption of a mid-sized country like Lebanon. At current US commercial electricity rates of $0.07–$0.12 per kWh, the annual power bill ranges from $613 million to $1.05 billion before any efficiency optimizations.

How many GPUs can a 1GW data center support?

Using NVIDIA Blackwell GB200 NVL72 racks (140 kW/rack, 72 GPUs/rack) and assuming a facility PUE of 1.1, a 1GW data center delivers approximately 910 MW to compute, supporting roughly 6,500 racks and 468,000 B200 GPUs. At H100 density (40 kW/rack, 8 GPUs/rack), the same facility supports more racks (about 22,750) but only about 182,000 GPUs, and it delivers substantially less inference throughput per megawatt. That is why Blackwell is the economically preferred option despite its higher infrastructure requirements.

Why is transmission a bigger problem than generation at 1GW scale?

At 1GW, generation capacity is often available: the US alone has hundreds of gigawatts of approved but unbuilt renewable and gas generation. The problem is delivery. US interconnection queues, which Lawrence Berkeley National Laboratory tracks for generation and storage projects seeking grid connection, now exceed 1,500 GW, and new high-capacity connections take 4–7 years in major markets. Substation capacity frequently caps delivery at 250–500 MW regardless of generation availability. A facility that has secured 1GW of power purchase agreements may still receive only 400 MW of delivered power for the first several years of operation.

Are SMRs necessary for 1GW AI data centers?

SMRs are not strictly necessary, but they are increasingly preferred by operators with 10+ year planning horizons. They provide firm, carbon-free baseload power that bypasses transmission queues, which is the most significant advantage at 1GW scale. The constraints are real: SMR projects require 5–10 years from permitting to first power, and upfront capital commitments range from hundreds of millions to billions of dollars per project. For most enterprises and even mid-tier hyperscalers, SMRs are not viable within current planning cycles. For Tier-1 hyperscalers with sovereign-scale infrastructure ambitions, they are becoming a strategic necessity.

What cooling system is required for a 1GW Blackwell facility?

Direct liquid cooling (DLC) is the mandatory baseline. At 120–150 kW per Blackwell rack, air cooling cannot physically dissipate the thermal load; without active liquid cooling, GPU temperatures exceed safe operating limits within minutes. A 1GW DLC installation requires thousands of coolant distribution units (CDUs), a campus-scale coolant distribution network, leak detection systems throughout, and heat rejection infrastructure (cooling towers or dry coolers) sized for the full 1GW thermal load. Water consumption at this scale typically runs 500 million to 1 billion gallons annually.

What is the total cost to build a 1GW AI data center in 2026?

Greenfield construction of a 1GW AI data center in the US currently runs $10B–$15B in total CAPEX, including land, civil works, power infrastructure, cooling systems, and building construction, before any compute hardware. In India, the same facility costs $6–9B due to lower labor and materials costs. In the EU, costs run $15–20B due to regulatory requirements and higher construction costs. GPU hardware adds another $10–15B for a full Blackwell deployment, bringing total facility-plus-compute investment to $20–30B for a fully equipped 1GW campus.

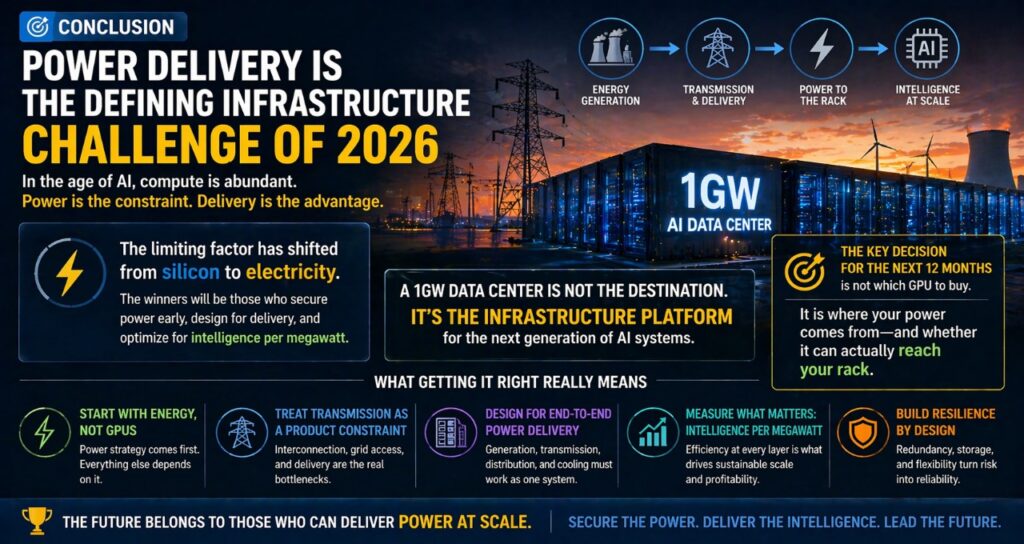

Conclusion: Power Delivery Is the Defining Infrastructure Challenge of 2026

A 1GW data center in 2026 represents a turning point in the history of computing infrastructure. It is the moment when AI compute requirements crossed from large industrial to utility-scale — when the limiting factor shifted permanently from silicon to electricity.

The organizations that will define the next decade of AI infrastructure are not those with the best GPU procurement deals or the most aggressive construction timelines. They are the ones who understood, years in advance, that power delivery was the real constraint — and built their strategies around securing it.

The 1GW facility is not the destination. It is the infrastructure platform on which the next generation of AI systems runs. Getting it right means starting with energy, not GPUs. It means treating transmission as a product development constraint, not a facilities problem. And it means measuring success in intelligence per megawatt — not megawatts under management.

If you are planning at this scale, the most important decision you will make in the next 12 months is not which GPU to buy.

It is where your power comes from — and whether it can actually reach your rack.

Sources & References

This article is based on a combination of third-party research, public infrastructure data, and aggregated industry benchmarks from leading organizations in energy, AI infrastructure, and data center operations.

Primary Research & Industry Reports

- Gartner — Global data center energy demand forecasts (2025–2026)

- Lawrence Berkeley National Laboratory — Interconnection queue data and grid capacity analysis

- International Energy Agency — Global electricity consumption and infrastructure trends

- McKinsey & Company — AI infrastructure scaling and energy demand projections

Infrastructure & AI Hardware Benchmarks

- NVIDIA — Blackwell architecture specifications and performance benchmarks (B200, GB200 NVL72 systems)

- Microsoft — Data center cooling and energy optimization disclosures

- Amazon Web Services — Hyperscale infrastructure and energy procurement strategies

- Google — AI infrastructure expansion and energy sourcing strategies

Energy & Grid Data

- U.S. Energy Information Administration — Electricity consumption benchmarks and residential comparisons

- European Commission — Energy efficiency directives and data center regulation frameworks

- Electric Reliability Council of Texas — Demand response and grid curtailment policies (SB6 context)

Author bio: Saameer is a technology journalist and infrastructure analyst covering AI systems, data center architecture, and EU digital policy. His work focuses on the gap between AI vendor claims and real-world enterprise deployment.