Enterprise Knowledge Is Broken — AI Is Rebuilding It from Scratch

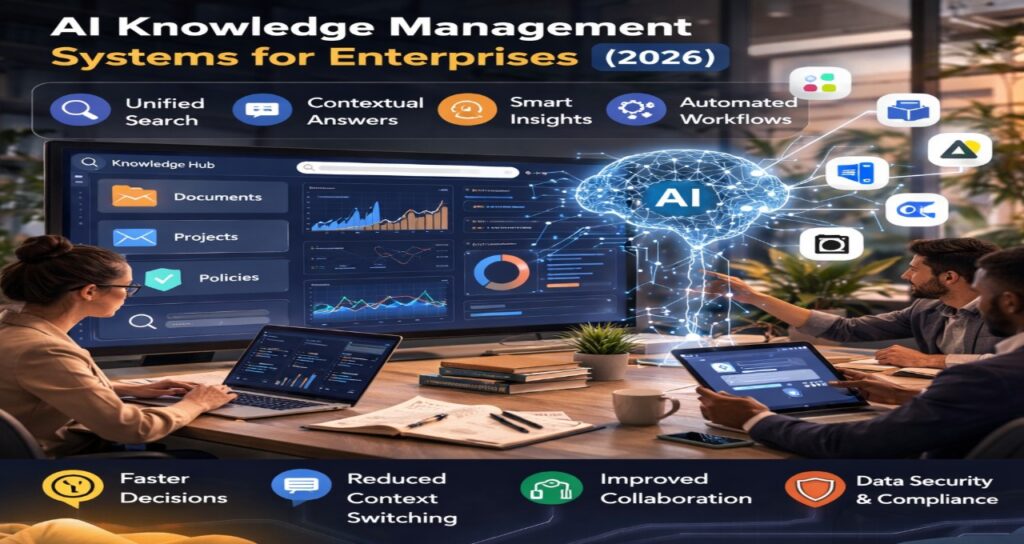

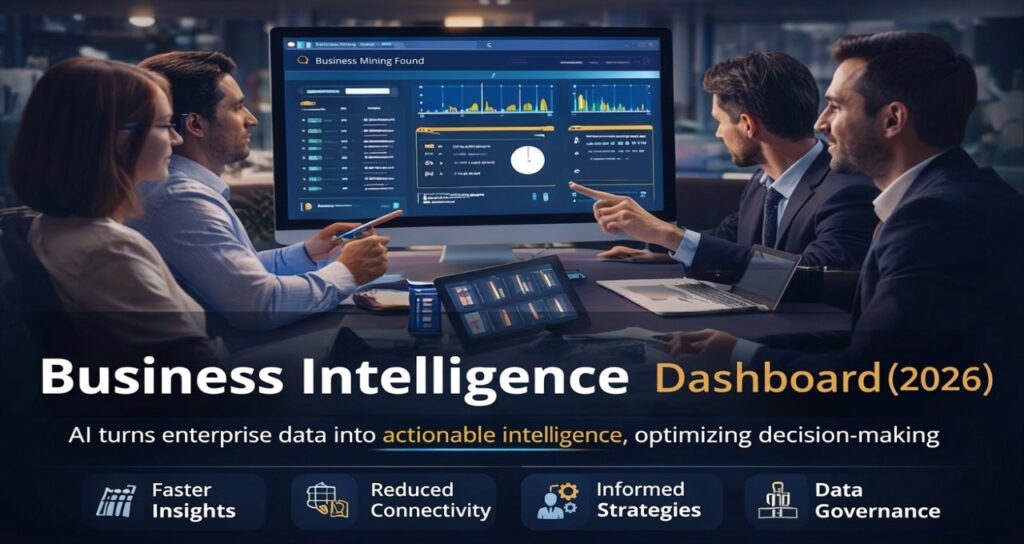

AI knowledge management systems are transforming how enterprises store, retrieve, and use internal data. Instead of static knowledge bases, organizations now rely on AI-powered memory layers that continuously update, enforce permissions, and deliver context-aware insights—making enterprise knowledge searchable, secure, and actionable in real time.

For years, enterprise knowledge lived in silos—Slack threads, Google Docs, Jira tickets, and forgotten wikis. Finding the right information often meant searching five tools, asking three people, and still getting an outdated answer.

That model is collapsing.

In 2026, companies aren’t searching for knowledge anymore. They’re querying memory.

AI knowledge management systems are emerging as the backbone of modern organizations, quietly powering decisions, workflows, and automation. And as AI agents begin to execute work autonomously, the quality of that underlying knowledge layer is becoming a competitive advantage.

Core Technology: From Search to Memory

Traditional knowledge systems were built around keyword search. They relied on humans to organize, tag, and maintain content.

AI changes that completely.

Modern systems use embeddings, vector databases, and large language models to create what can be described as:

The Enterprise Memory Layer

The Enterprise Memory Layer is a persistent AI system that continuously ingests, organizes, and retrieves company knowledge across tools—without requiring manual updates.

Instead of asking:

“Where is this document?”

Users now ask:

“What did we decide about this feature last quarter?”

And the system responds with context, not just links.

This shift—from retrieval to reasoning—is the defining change in enterprise knowledge systems.

Why It Matters Now

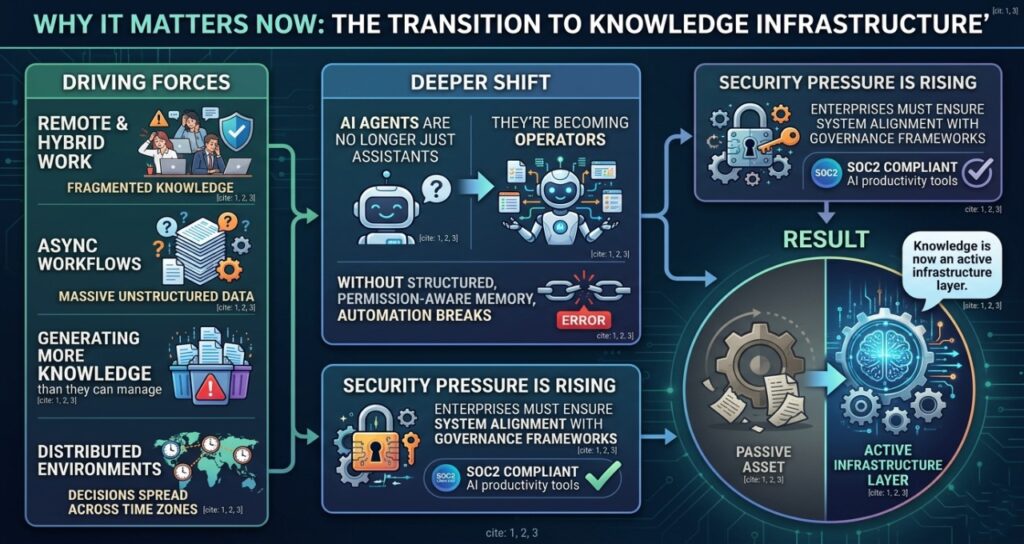

Several forces are driving this transition.

Remote and hybrid work fragmented knowledge across dozens of tools. At the same time, asynchronous workflows created massive volumes of unstructured data. Teams now generate more knowledge than they can realistically manage.

This challenge becomes more visible in distributed environments shaped by async AI tools for global teams, where decisions are spread across time zones and communication layers.

There’s also a deeper shift happening.

AI agents are no longer just assistants—they’re becoming operators. But agents are only as effective as the knowledge they can access. Without structured, permission-aware memory, automation breaks.

Security pressure is rising too. Enterprises must ensure that internal knowledge systems align with governance frameworks, particularly those explored in SOC2 compliant AI productivity tools.

The result?

Knowledge is no longer a passive asset. It’s now an active infrastructure layer.

Architecture: How AI Knowledge Systems Work

Under the surface, these systems rely on a multi-layered architecture.

1. Data Ingestion Layer

Connects to tools like Slack, Google Drive, Notion, and Jira. Continuously pulls structured and unstructured data.
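To make "continuously pulls" concrete, here is a minimal Python sketch of incremental syncing. It assumes each connector can return records with an `id`, `text`, `updated_at` timestamp, and a list of permitted `groups`. The `Document` and `IncrementalIngestor` names and that record shape are hypothetical; real Slack, Drive, Notion, and Jira connectors rely on each tool's own change feeds and pagination cursors.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Iterable


@dataclass(frozen=True)
class Document:
    """One record normalized into a common shape, whichever tool it came from."""
    source: str                    # hypothetical labels such as "slack" or "jira"
    doc_id: str
    text: str
    updated_at: datetime
    allowed_groups: frozenset[str]


class IncrementalIngestor:
    """Ingests only records changed since the previous sync of each source."""

    def __init__(self) -> None:
        self.cursors: dict[str, datetime] = {}            # newest timestamp seen per source
        self.index: dict[tuple[str, str], Document] = {}  # (source, doc_id) -> stored version

    def sync(self, source: str, records: Iterable[dict]) -> int:
        cursor = self.cursors.get(source, datetime.min.replace(tzinfo=timezone.utc))
        newest, changed = cursor, 0
        for rec in records:
            if rec["updated_at"] <= cursor:
                continue  # already ingested on an earlier sync
            doc = Document(source, rec["id"], rec["text"], rec["updated_at"],
                           frozenset(rec.get("groups", ())))
            self.index[(source, doc.doc_id)] = doc  # upsert: replaces any stored version
            newest = max(newest, doc.updated_at)
            changed += 1
        self.cursors[source] = newest
        return changed
```

Keeping one cursor per source is what lets the index stay fresh without re-crawling everything. One simplification to note: records sharing the cursor's exact timestamp are skipped, which production connectors avoid by using opaque, tool-issued cursors.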

2. Embedding Layer

Transforms text, conversations, and documents into vector representations for semantic understanding.
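The embedding step usually involves two moves: splitting long documents into passages, then turning each passage into a fixed-length vector. The sketch below shows both in plain Python. `chunk` is a common word-window approach (the 40-word window and 10-word overlap are arbitrary assumptions), while `toy_embed` is a hashing-trick placeholder, not a semantic model; in a real deployment that function would call a learned embedding model.

```python
import hashlib
import math
import re


def chunk(text: str, size: int = 40, overlap: int = 10) -> list[str]:
    """Split text into overlapping windows of `size` words so each vector covers one passage."""
    if not 0 <= overlap < size:
        raise ValueError("overlap must satisfy 0 <= overlap < size")
    words = text.split()
    if not words:
        return []
    step = size - overlap
    return [" ".join(words[i:i + size])
            for i in range(0, max(len(words) - overlap, 1), step)]


def toy_embed(text: str, dim: int = 256) -> list[float]:
    """Hashed bag-of-words vector, L2-normalized.

    A stand-in for a learned embedding model: it captures shared words, not meaning.
    """
    vec = [0.0] * dim
    for token in re.findall(r"[a-z0-9]+", text.lower()):
        # Stable hash; the built-in hash() is salted per process, so it is avoided here.
        digest = hashlib.blake2b(token.encode(), digest_size=8).digest()
        vec[int.from_bytes(digest, "big") % dim] += 1.0
    norm = math.sqrt(sum(v * v for v in vec))
    return [v / norm for v in vec] if norm else vec
```

The overlap means a sentence cut at one window boundary still appears whole in the neighbouring passage, which tends to help retrieval.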

3. Vector Database

Stores embeddings and enables fast similarity search across massive datasets.
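A vector database's core operation is nearest-neighbour search. The minimal in-memory sketch below ranks stored vectors by cosine similarity using a linear scan. The class name is hypothetical, and production vector databases replace the scan with an approximate index such as HNSW so queries stay fast across millions of vectors.

```python
import heapq
import math


class InMemoryVectorStore:
    """Brute-force cosine-similarity search over normalized vectors."""

    def __init__(self) -> None:
        self._ids: list[str] = []
        self._vecs: list[list[float]] = []

    @staticmethod
    def _normalize(v: list[float]) -> list[float]:
        n = math.sqrt(sum(x * x for x in v))
        if n == 0:
            raise ValueError("a zero vector has no direction to compare")
        return [x / n for x in v]

    def add(self, doc_id: str, vector: list[float]) -> None:
        self._ids.append(doc_id)
        self._vecs.append(self._normalize(vector))

    def search(self, query: list[float], k: int = 3) -> list[tuple[str, float]]:
        q = self._normalize(query)
        # On unit vectors, cosine similarity reduces to a dot product.
        scored = ((sum(a * b for a, b in zip(q, v)), i) for i, v in enumerate(self._vecs))
        return [(self._ids[i], score) for score, i in heapq.nlargest(k, scored)]
```

For example, after adding a few vectors, `store.search(query_vector, k=2)` returns the two closest `(doc_id, score)` pairs, highest similarity first.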

4. Retrieval Engine (RAG)

Uses Retrieval-Augmented Generation to fetch relevant context before generating answers.
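Here is a minimal sketch of the "retrieve, then generate" step, assuming a `retrieve(question, k)` function that returns `(source_id, text)` pairs best-first (for example, backed by a vector store). The function name, prompt wording, and character budget are illustrative assumptions rather than any vendor's implementation; the resulting prompt would then be sent to whichever LLM the system uses.

```python
from collections.abc import Callable

Passage = tuple[str, str]  # (source_id, text)


def build_rag_prompt(question: str,
                     retrieve: Callable[[str, int], list[Passage]],
                     k: int = 4,
                     max_context_chars: int = 2000) -> tuple[str, list[str]]:
    """Retrieve first, then pack the best passages into a numbered, citable prompt."""
    context_lines: list[str] = []
    used_sources: list[str] = []
    remaining = max_context_chars  # rough budget: counts characters, not model tokens
    for source_id, text in retrieve(question, k):
        line = f"[{len(used_sources) + 1}] ({source_id}) {text}"
        if len(line) > remaining:
            break  # passages arrive best-first, so stop once the budget is spent
        context_lines.append(line)
        used_sources.append(source_id)
        remaining -= len(line)
    prompt = (
        "Answer using only the numbered context below and cite it like [1]. "
        "If the context does not contain the answer, say so.\n\n"
        "Context:\n" + "\n".join(context_lines)
        + f"\n\nQuestion: {question}\nAnswer:"
    )
    return prompt, used_sources
```

Returning `used_sources` alongside the prompt lets the interface show users which documents an answer drew on.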

5. Permission Layer

Enforces access control dynamically. The system only retrieves information the user is allowed to see.
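One common way to enforce this is to filter retrieved candidates against access-control lists copied from each source system, before any text reaches the model. The sketch below assumes ACLs are expressed as group names; `Chunk` and `filter_by_permission` are hypothetical names. Because filtering can discard results, callers typically over-fetch candidates (several times `k`), and many vector databases can run the same check as a metadata filter inside the query itself.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Chunk:
    chunk_id: str
    text: str
    allowed_groups: frozenset[str]  # ACL mirrored from the source system at ingest time


def filter_by_permission(candidates: list[tuple[Chunk, float]],
                         user_groups: set[str],
                         k: int) -> list[tuple[Chunk, float]]:
    """Keep only chunks the user could already open in the original tool.

    Runs before generation, so restricted text never enters the prompt.
    """
    visible = [(c, score) for c, score in candidates if c.allowed_groups & user_groups]
    visible.sort(key=lambda pair: pair[1], reverse=True)  # highest relevance first
    return visible[:k]
```

Filtering before generation matters: once restricted text is in the prompt, the model may paraphrase it no matter what the interface later hides.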

6. LLM Inference Layer

Generates human-like responses based on retrieved context.
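Grounding can also be checked after generation. Assuming the model was instructed to cite passages as `[1]`, `[2]`, and so on, this small hypothetical guardrail flags answers that cite sources which were never in the prompt, or that cite nothing at all. It only verifies that citations point somewhere valid; it cannot prove that a cited passage actually supports the claim.

```python
import re


def check_citations(answer: str, num_sources: int) -> dict[str, object]:
    """Flag [n] markers that do not match a passage actually given to the model."""
    cited = {int(n) for n in re.findall(r"\[(\d+)\]", answer)}
    invalid = sorted(n for n in cited if not 1 <= n <= num_sources)
    return {
        "cited": sorted(cited),
        "invalid": invalid,
        # Grounded here means: cites at least one source, and every citation is valid.
        "grounded": bool(cited) and not invalid,
    }
```

An answer that fails the check can be regenerated, or shown with a warning instead of being presented as authoritative.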

This architecture mirrors coordination patterns emerging in agentic AI workflow automation systems, where agents must reason across multiple data sources to execute tasks.

Traditional vs AI Knowledge Systems

| Feature | Traditional Knowledge Base | AI Knowledge System |
|---|---|---|
| Search Type | Keyword-based | Semantic + contextual |
| Updates | Manual | Continuous, automatic |
| Context Awareness | Limited | Deep contextual reasoning |
| Permissions | Static roles | Dynamic enforcement |
| Integration | Fragmented | Unified across tools |
| Usability | Search-heavy | Answer-driven |

Leading Tools in 2026

The market is evolving fast, but a few platforms stand out.

Glean

Often considered the gold standard, Glean provides enterprise-wide search with permission-aware AI. Its “permissions mirroring” is designed so users only see content they can already access in the source tools, keeping data from leaking across teams.

Notion AI

Notion has evolved into a unified knowledge + workflow system, automatically updating documentation based on team activity.

Microsoft Copilot

Deeply integrated into the Microsoft ecosystem, Copilot leverages organizational data across Outlook, Teams, and SharePoint.

Guru AI

Focuses on verified knowledge delivery, ensuring that answers are both accurate and trusted.

Slite AI

Designed for async teams, Slite emphasizes simplicity and real-time collaboration.

Many organizations first encounter these tools through curated stacks like AI productivity tools for remote teams, before expanding into full knowledge infrastructure.

Strategic Industry Implications

The shift toward AI knowledge systems is reshaping the entire tech stack.

- Microsoft is embedding AI memory into its ecosystem via Copilot and Graph.

- OpenAI is driving advances in embeddings and retrieval systems powering enterprise AI.

- Nvidia provides the GPU infrastructure enabling real-time inference at scale.

This transformation also impacts coordination layers. Knowledge systems increasingly integrate with scheduling and planning architectures similar to those described in AI scheduling agents for remote teams.

At a broader level, governance is becoming inseparable from knowledge. As AI systems access more sensitive data, organizations must align with frameworks like those discussed in conversational AI governance systems.

Real-World Enterprise Deployments

Real-World Examples of AI Knowledge Management Systems (2026)

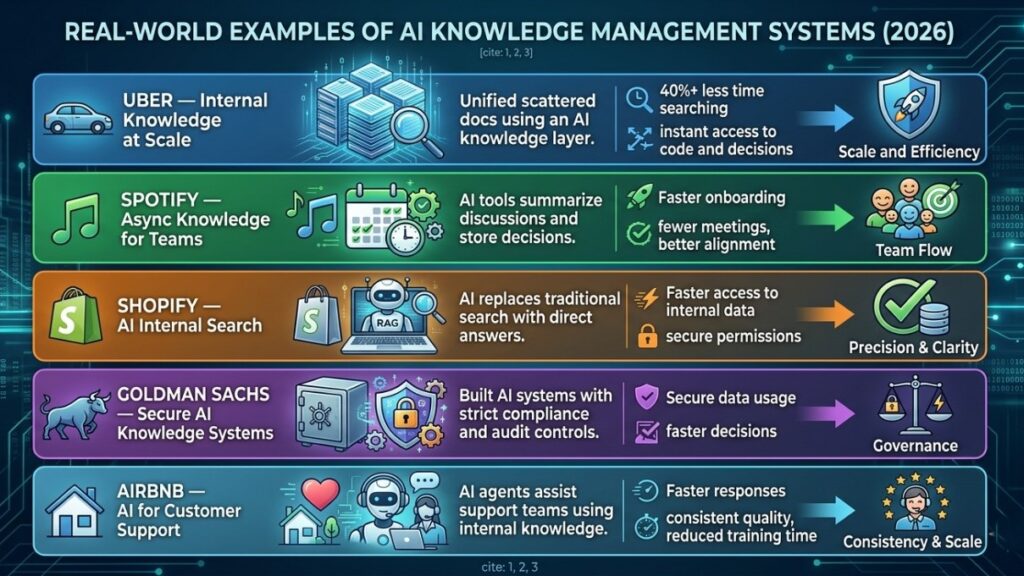

1. Uber — Internal Knowledge Unification at Scale

Uber operates across thousands of microservices, teams, and regions. Historically, engineers struggled to locate internal documentation spread across tools like Google Docs, Slack, and internal wikis.

What Changed:

Uber implemented an AI-powered knowledge layer (similar to Glean) to unify internal data.

Impact:

- Engineers reduced time spent searching for documentation by 40%+

- AI surfaces relevant code, past decisions, and service dependencies instantly

- Context-aware answers replaced manual searching across tools

This is a textbook example of the Enterprise Memory Layer in action.

2. Spotify — Async Knowledge for Distributed Teams

Spotify’s global engineering teams operate across Europe and the US. Knowledge fragmentation became a major bottleneck for product velocity.

What They Did:

They adopted AI-driven documentation systems integrated with Slack and internal tools.

Key Innovation:

- AI summarizes engineering discussions automatically

- Decisions are stored as structured knowledge

- Teams can query past product decisions conversationally

This aligns directly with the architectures seen in async AI tools for global teams.
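Storing decisions as structured records is what turns a question like "What did we decide about this feature last quarter?" into a simple lookup. The sketch below is a hypothetical schema and query, not Spotify's actual system; it assumes an LLM has already summarized each discussion into a `Decision` with a topic, date, and link back to the original thread.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Decision:
    topic: str         # the feature or service the decision concerns
    summary: str       # one-line outcome, assumed to be drafted by an LLM from the thread
    decided_on: date
    source_url: str    # link back to the original discussion


def quarter_bounds(today: date, quarters_back: int = 1) -> tuple[date, date]:
    """Return [start, end) of the calendar quarter `quarters_back` before today's quarter."""
    q_index = today.year * 4 + (today.month - 1) // 3 - quarters_back
    year, q = divmod(q_index, 4)
    end_year, end_q = divmod(q_index + 1, 4)
    return date(year, q * 3 + 1, 1), date(end_year, end_q * 3 + 1, 1)


def decisions_about(log: list[Decision], topic: str, today: date) -> list[Decision]:
    """Answer 'What did we decide about <topic> last quarter?' from structured records."""
    start, end = quarter_bounds(today)
    matches = (d for d in log
               if d.topic.lower() == topic.lower() and start <= d.decided_on < end)
    return sorted(matches, key=lambda d: d.decided_on)
```

In a conversational interface, an LLM would map the user's phrasing to the `topic` and time window, then summarize the matching records with links back to their sources.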

Result:

- Faster onboarding for new engineers

- Reduced dependency on meetings

- Improved cross-team alignment

3. Shopify — AI-Powered Internal Search

Shopify faced a classic enterprise challenge: too much internal knowledge, not enough discoverability.

Solution:

They implemented AI search systems that:

- Index internal tools, tickets, and documents

- Provide instant answers instead of search results

- Maintain strict permission controls

Why It Matters:

Shopify’s system demonstrates how AI replaces traditional enterprise search entirely.

This model mirrors platforms like Microsoft Copilot.

4. Goldman Sachs — Secure Knowledge Systems (High Compliance)

In finance, knowledge systems must meet strict regulatory requirements.

Implementation:

Goldman Sachs uses AI knowledge systems with:

- Private cloud (VPC) deployment

- Zero data retention policies

- Full audit logs for compliance
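Audit logs for compliance are often made tamper-evident by chaining entries with hashes, so altering any past record invalidates the chain from that point on. This is a minimal illustrative sketch, not a description of Goldman Sachs' implementation: `AuditLog` is a hypothetical class, and a production system would also write entries to write-once storage and sign them.

```python
import hashlib
import json
from datetime import datetime, timezone


class AuditLog:
    """Append-only, hash-chained log: each entry commits to the one before it."""

    GENESIS = "0" * 64  # placeholder "previous hash" for the first entry

    def __init__(self) -> None:
        self.entries: list[dict] = []

    @staticmethod
    def _digest(body: dict) -> str:
        return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()

    def record(self, user: str, query: str, sources: list[str]) -> dict:
        body = {
            "ts": datetime.now(timezone.utc).isoformat(),
            "user": user,
            "query": query,
            "sources": sources,  # which documents were retrieved for this query
            "prev": self.entries[-1]["hash"] if self.entries else self.GENESIS,
        }
        entry = {**body, "hash": self._digest(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash; any edited or removed entry breaks the chain."""
        prev = self.GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev"] != prev or entry["hash"] != self._digest(body):
                return False
            prev = entry["hash"]
        return True
```

Note that this makes tampering detectable, not impossible; that is why such logs are usually paired with storage that cannot be rewritten.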

Strategic Insight:

This is where AI knowledge systems intersect with governance models like those covered in SOC2 compliant AI productivity tools.

Result:

- Secure AI usage across sensitive financial data

- Reduced compliance risk

- Faster internal decision-making

5. Airbnb — Customer Support Knowledge AI

Airbnb uses AI knowledge systems not just internally but also for customer operations.

What They Built:

- AI agents trained on the company’s internal knowledge base

- Real-time support suggestions for human support agents

- Automated response generation

Outcome:

- Faster customer response times

- Consistent support quality globally

- Reduced training time for new employees

Future Outlook (2026–2028)

The next phase of evolution is already visible.

AI knowledge systems will become:

- Autonomous — agents will update and manage knowledge without human input

- Predictive — systems will surface insights before users ask

- Contextual at scale — memory will span years of organizational history

- Regulated by design — compliance will be embedded into every query

We’re moving toward a world where companies don’t just store knowledge—they operate on top of it.

And in that world, the Enterprise Memory Layer becomes as critical as cloud infrastructure itself.

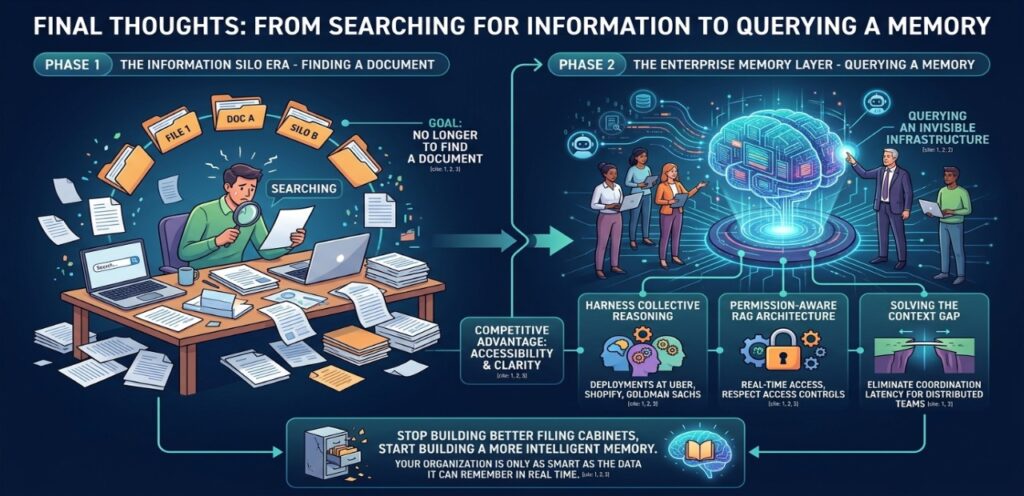

Final Thoughts: From Searching for Information to Querying a Memory

The transition from traditional enterprise search to AI knowledge management marks the end of the “Information Silo” era. In 2026, the competitive advantage of a firm is no longer just the talent it employs, but the accessibility and clarity of its Enterprise Memory Layer.

As we have seen through the deployments at Uber, Shopify, and Goldman Sachs, the goal is no longer to find a document, but to harness the collective reasoning of the entire organization. By implementing a permission-aware, RAG-driven architecture, enterprises are finally solving the “Context Gap” that has plagued distributed teams for decades.

We are moving toward a future where knowledge is not a destination you visit, but an invisible infrastructure that powers every AI agent, every decision, and every meeting. For the modern leader, the mandate is clear: Stop building better filing cabinets and start building a more intelligent memory. In the age of agentic work, your organization is only as smart as the data it can remember in real time.

FAQ — AI Knowledge Systems in 2026

1. What is an AI knowledge management system?

Ans: An AI knowledge management system uses machine learning and language models to organize and retrieve company knowledge. It goes beyond search by delivering context-aware answers based on internal data and conversations.

These systems rely on embeddings, vector databases, and retrieval pipelines to understand meaning rather than keywords.

2. How is AI knowledge different from traditional knowledge bases?

Ans: Traditional systems rely on manual input and keyword search. AI systems automatically ingest and understand data across tools.

They provide answers instead of links, significantly reducing time spent searching for information.

3. Are AI knowledge systems secure for enterprises?

Ans: Yes, leading platforms use permission-aware architectures and comply with enterprise standards. They ensure users only access data they are authorized to see.

Many systems also align with compliance frameworks similar to those required in regulated AI environments.

4. What is the Enterprise Memory Layer?

Ans: The Enterprise Memory Layer is a system that continuously stores and retrieves organizational knowledge across tools.

It acts as a centralized intelligence layer that powers AI agents, workflows, and decision-making processes.

5. Which companies lead this space in 2026?

Ans: Companies like Glean, Microsoft, and OpenAI are leading innovation in enterprise knowledge systems.

They are shaping how organizations move from search-based workflows to AI-driven memory systems.

Sources

European Commission – Artificial Intelligence Act (EU AI Act)

https://digital-strategy.ec.europa.eu/en/policies/european-ai-act

European Commission – Digital Strategy and AI Governance

https://digital-strategy.ec.europa.eu/

OECD – Artificial Intelligence Policy Observatory

https://oecd.ai/

Stanford University – AI Index Report

https://aiindex.stanford.edu/

Nvidia – Enterprise AI Infrastructure and Agentic Systems

https://www.nvidia.com/en-us/ai/

Microsoft – AI Productivity and Copilot Research

https://www.microsoft.com/en-us/ai

McKinsey Global Institute – The Future of Work in the Age of AI

https://www.mckinsey.com/mgi

Gartner – AI-Augmented Workforce and Autonomous Systems Research

https://www.gartner.com/en/artificial-intelligence

Author Bio

Saameer is the founder of Tech Plus Trends and an AI infrastructure strategist specializing in distributed systems, enterprise AI platforms, and digital governance. His research focuses on how organizations deploy agentic workflows, knowledge systems, and secure AI architectures to scale globally. Saameer analyzes emerging trends across AI infrastructure, compliance frameworks, and technology economics, helping enterprises understand the systems shaping the future of intelligent work.

AI Transparency & Editorial Disclosure

Editorial Process & Integrity

This industry analysis was developed by Tech Plus Trends using a collaborative “Human-in-the-Loop” (HITL) AI workflow. While advanced agentic systems and Retrieval-Augmented Generation (RAG) were used to synthesize cross-platform data, case studies, and architectural frameworks, the final strategic conclusions and the “Enterprise Memory Layer” concept were verified and finalized by Saameer, our founder and lead analyst. We prioritize technical insight over automated volume.

EU AI Act Compliance (Article 50)

In accordance with global transparency requirements for AI-assisted content, be advised that the analysis of “Autonomous Knowledge Systems” and “Semantic Search Layers” described herein involves interaction with generative AI models. This content is intended to provide an objective, expert-led evaluation of how these technologies function within a modern enterprise infrastructure.

Data Privacy & Security

The discussion of “Enterprise Memory” and “Unified Knowledge Ingestion” refers to emerging standards for private, encrypted enterprise AI environments. Tech Plus Trends advocates for Privacy-by-Design. We recommend that organizations implementing these systems prioritize VPC-hosted solutions and local data residency to ensure that internal intellectual property is never used for training public foundation models.
