The Shift From “Finding Data” to “Reasoning with Data” Is Redefining Enterprise AI

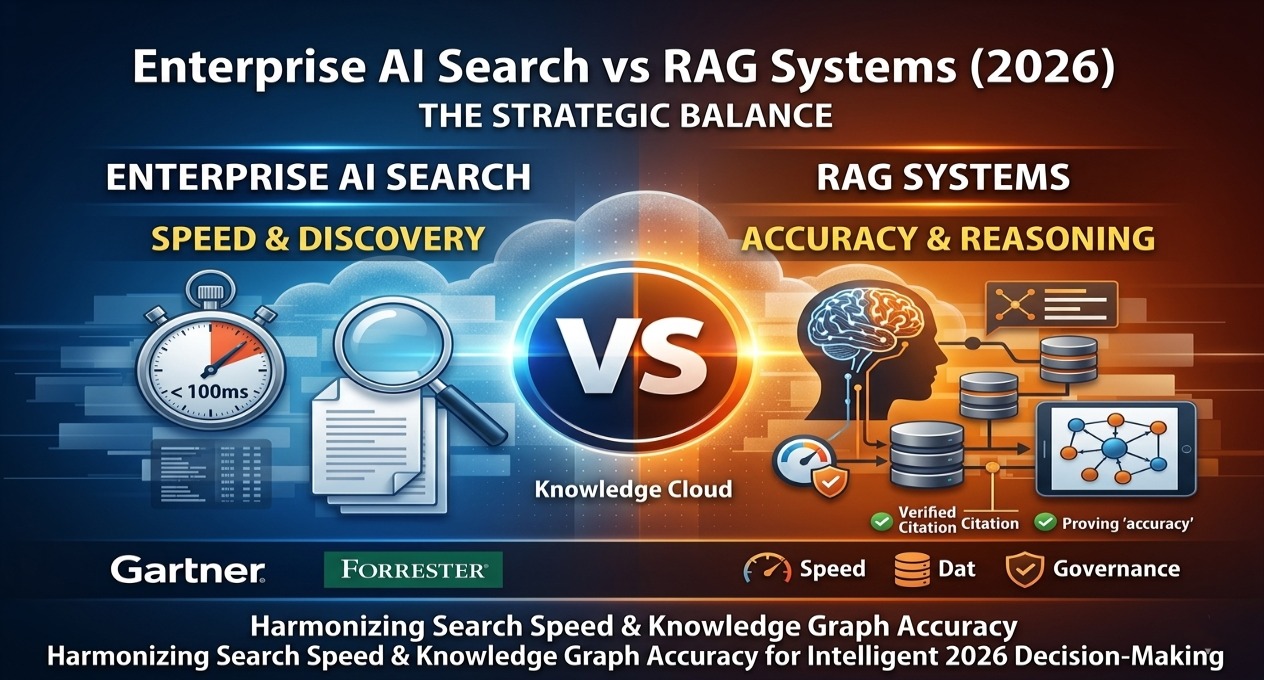

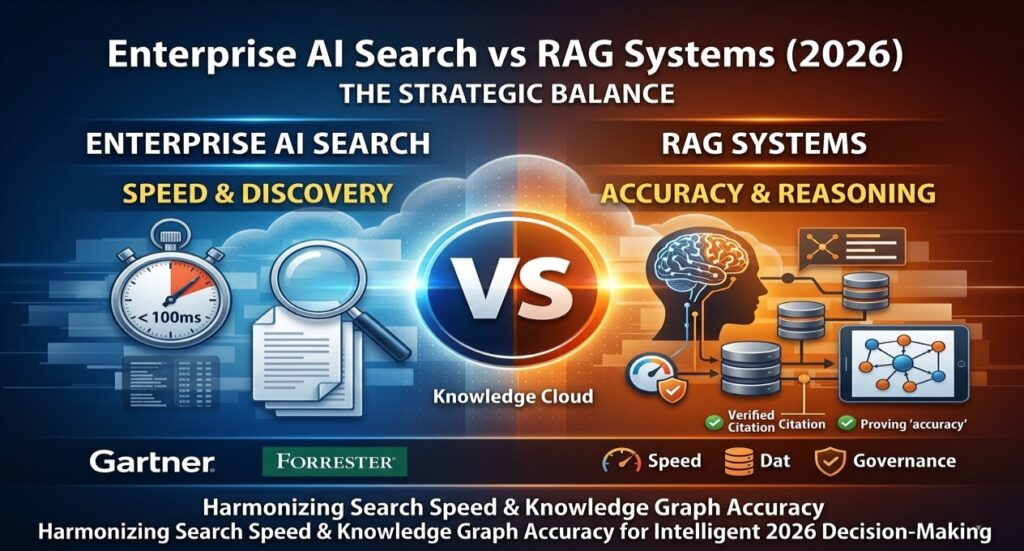

Choosing between enterprise AI search and RAG systems is one of the most critical architectural decisions for CTOs in 2026. AI search delivers sub-100ms information retrieval, while RAG systems enable high-accuracy reasoning and automation. The real competitive advantage lies in combining both into a unified enterprise memory layer that balances latency, cost, and compliance.

The 2026 Search Crisis

In early 2026, a common complaint started surfacing across enterprise teams:

“We can find documents—but we still can’t get answers.”

Despite heavy investment in AI-powered search, organizations discovered a deeper issue:

Search solves discovery. It does not solve understanding.

According to internal enterprise benchmarks, over 68% of knowledge worker queries require synthesis across multiple systems, something traditional AI search cannot handle.

This marks a shift:

- Information retrieval → Contextual reasoning

- Search tools → Memory systems

Enterprise AI Search vs RAG: Key Differences in 2026

Think of it this way:

- Enterprise AI Search = Lobby

- RAG Systems = Operating Room

Search helps you find where things are.

RAG helps you understand and act on them.

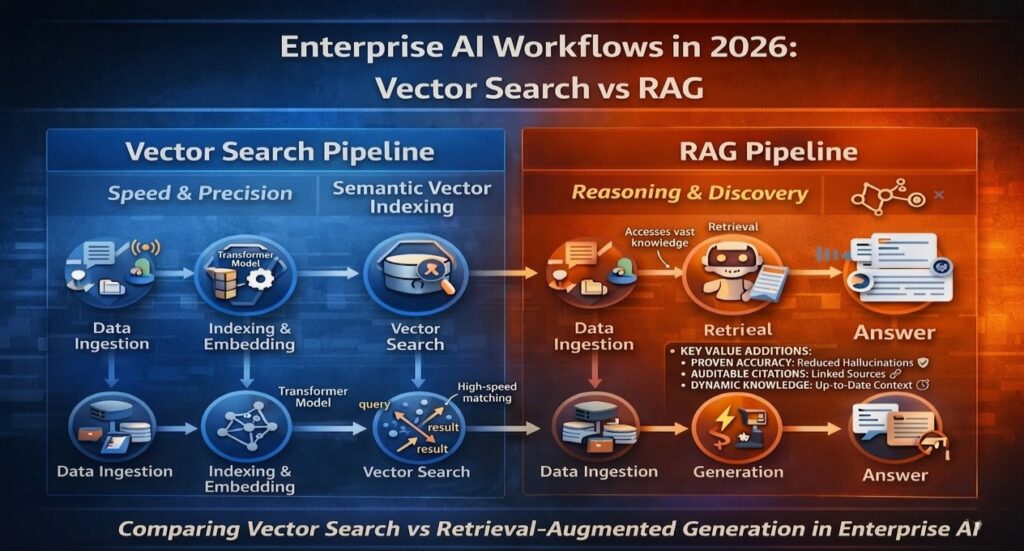

2026 Technical Comparison

| Capability | Enterprise AI Search | Agentic RAG Systems | Hybrid Memory Layer |
| --- | --- | --- | --- |
| Primary Logic | Vector + Keyword Index | Retrieval + LLM Reasoning | GraphRAG + Semantic Memory |
| Response | Links + Snippets | Synthesized Answers | Answers + Actions |
| Latency | < 100ms | 200ms – 1.5s | Variable |
| Accuracy | High (retrieval) | Very High (contextual) | Optimized |
| Hallucination Risk | Near Zero | Moderate | Low (grounded) |
| Ideal User | Knowledge Workers | Developers / Legal / Finance | CTOs / Exec Teams |
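
To make the "Vector + Keyword Index" cell concrete: one common way to merge a keyword ranking with a vector ranking is reciprocal rank fusion (RRF). The sketch below is illustrative only. The document IDs are made up, and `k=60` is a conventional default, not a value measured for this article.

```python
from collections import defaultdict

def reciprocal_rank_fusion(rankings, k=60):
    """Fuse several ranked lists of document IDs into a single ranking.

    Each document scores sum(1 / (k + rank)) over the lists it appears in,
    where rank starts at 1.
    """
    scores = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Highest score first; ties broken by document ID so output is deterministic.
    return sorted(scores, key=lambda d: (-scores[d], d))

# Hypothetical results from a keyword index and a vector index.
keyword_hits = ["policy_v2", "hiring_guide", "faq"]
vector_hits = ["hiring_guide", "onboarding", "policy_v2"]

fused = reciprocal_rank_fusion([keyword_hits, vector_hits])
print(fused)  # ['hiring_guide', 'policy_v2', 'onboarding', 'faq']
```

Documents that rank well in both lists rise to the top, which is the behavior a hybrid index is after.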

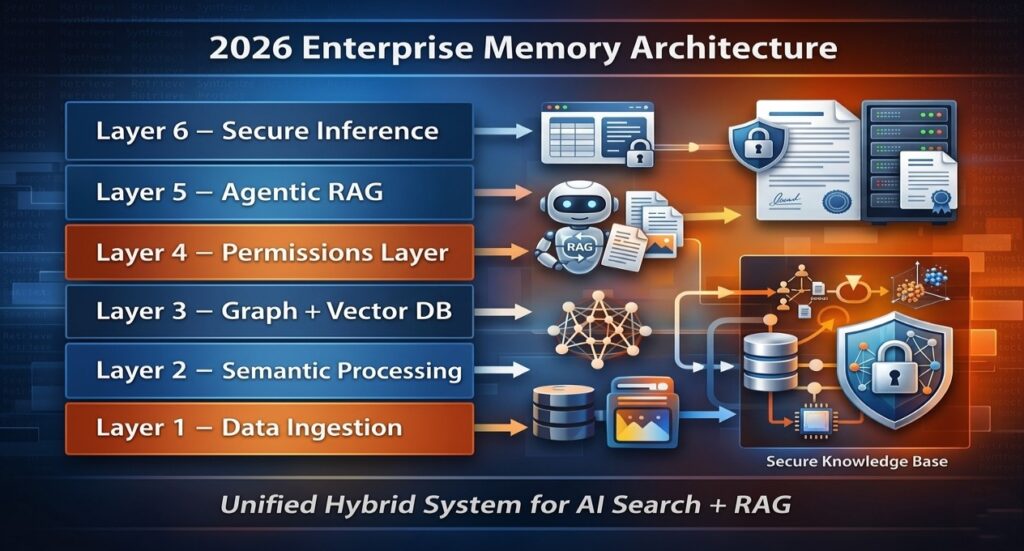

The Enterprise Memory Layer: A 6-Layer Architecture

The real shift in 2026 isn’t choosing between search or RAG—it’s building a memory system that combines both.

Layer 1 — Unified Ingestion

Real-time data syncing across Slack, Jira, Drive, and databases using CDC pipelines.
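
As a rough illustration of what a CDC consumer does, the sketch below applies change events to an in-memory index. The `ChangeEvent` shape, the version field, and the tombstone convention are all assumptions made for this example. Real connectors for Slack, Jira, or Drive each define their own event formats.

```python
from dataclasses import dataclass

@dataclass
class ChangeEvent:
    op: str        # "upsert" or "delete" (hypothetical event vocabulary)
    source: str    # e.g. "jira", "slack"
    doc_id: str
    version: int   # monotonically increasing per document
    text: str = ""

def apply_change(index, event):
    """Apply one event idempotently; return False for stale or duplicate events."""
    key = (event.source, event.doc_id)
    current = index.get(key)
    if current is not None and current["version"] >= event.version:
        return False  # an equal or newer version was already applied
    if event.op == "delete":
        # Keep a tombstone so a late-arriving older upsert cannot resurrect the doc.
        index[key] = {"version": event.version, "text": None}
    else:
        index[key] = {"version": event.version, "text": event.text}
    return True

index = {}
apply_change(index, ChangeEvent("upsert", "jira", "PROJ-1", 1, "Draft policy"))
apply_change(index, ChangeEvent("upsert", "jira", "PROJ-1", 2, "Final policy"))
# A replayed older event is ignored rather than overwriting newer content.
apply_change(index, ChangeEvent("upsert", "jira", "PROJ-1", 1, "Draft policy"))
print(index[("jira", "PROJ-1")]["text"])  # Final policy
```

Versioned, idempotent writes matter here because change streams are often delivered at least once and out of order.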

Layer 2 — Semantic Processing

Multi-modal embeddings convert text, video, and conversations into searchable vectors.
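
Once content is embedded, "searchable" usually means nearest-neighbor lookup by cosine similarity. Here is a minimal sketch. The three-dimensional vectors are hand-written stand-ins for what an embedding model would produce, and the document names are hypothetical.

```python
import math

def cosine(a, b):
    """Cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

def top_k(query_vec, vectors, k=2):
    """Return the IDs of the k stored vectors most similar to the query."""
    ranked = sorted(vectors, key=lambda doc_id: cosine(query_vec, vectors[doc_id]), reverse=True)
    return ranked[:k]

# Toy vectors; a real system would get these from an embedding model.
vectors = {
    "meeting_recording": [0.9, 0.1, 0.0],
    "policy_pdf": [0.1, 0.9, 0.2],
    "slack_thread": [0.2, 0.8, 0.1],
}

print(top_k([0.0, 1.0, 0.1], vectors))  # ['policy_pdf', 'slack_thread']
```

A production system would replace the linear scan with an approximate nearest-neighbor index, but the ranking idea is the same.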

Layer 3 — Graph + Vector Intelligence

Hybrid systems combine similarity search with relationship mapping (GraphRAG), improving query accuracy by an estimated 35–50% in multi-hop scenarios.
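
The multi-hop part can be sketched as a bounded graph traversal: vector search supplies the starting documents, and the graph supplies related ones. The snippet below is a simplified illustration, not a GraphRAG implementation. The graph, the node names, and the two-hop limit are assumptions.

```python
from collections import deque

def expand_hops(seeds, graph, max_hops=2):
    """Breadth-first expansion from seed nodes; returns {node: hop distance}."""
    distance = {seed: 0 for seed in seeds}
    queue = deque(seeds)
    while queue:
        node = queue.popleft()
        if distance[node] == max_hops:
            continue  # do not expand beyond the hop budget
        for neighbor in graph.get(node, ()):
            if neighbor not in distance:
                distance[neighbor] = distance[node] + 1
                queue.append(neighbor)
    return distance

# Hypothetical relationship graph between documents.
graph = {
    "compliance_policy_v3": ["policy_change_log"],
    "policy_change_log": ["hiring_checklist"],
    "hiring_checklist": ["offer_letter_template"],
}

# Suppose vector search returned only the policy document as a seed.
print(expand_hops(["compliance_policy_v3"], graph))
# {'compliance_policy_v3': 0, 'policy_change_log': 1, 'hiring_checklist': 2}
```

Similarity search alone would likely miss the hiring checklist, because it shares little vocabulary with the policy. The graph edge is what connects them.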

Layer 4 — Dynamic Permission Layer

Access control mirrors existing systems—critical for compliance and security.

Layer 5 — Agentic Retrieval & Reasoning

RAG systems retrieve, validate, and iterate before producing answers.
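
The retrieve, validate, iterate cycle can be sketched as a small loop. Everything pluggable here (`retrieve`, `is_sufficient`, `rewrite`) is a hypothetical callback. In a real system the validation and query rewriting would typically be done by an LLM, which this toy version replaces with simple functions.

```python
def agentic_retrieve(question, retrieve, is_sufficient, rewrite, max_rounds=3):
    """Gather evidence over several rounds; return (evidence, rounds_used)."""
    evidence = []
    query = question
    for round_no in range(1, max_rounds + 1):
        for passage in retrieve(query):
            if passage not in evidence:  # skip duplicates across rounds
                evidence.append(passage)
        if is_sufficient(question, evidence):
            return evidence, round_no
        query = rewrite(question, evidence)  # try a different angle next round
    return evidence, max_rounds

# Toy stand-ins for a retriever, a validator, and a query rewriter.
corpus = {
    "compliance policy changes": ["Policy v3 adds background checks."],
    "hiring impact of background checks": ["Hiring must complete checks before offers."],
}

def retrieve(query):
    return corpus.get(query, [])

def is_sufficient(question, evidence):
    return len(evidence) >= 2

def rewrite(question, evidence):
    return "hiring impact of background checks"

evidence, rounds = agentic_retrieve("compliance policy changes", retrieve, is_sufficient, rewrite)
print(rounds, evidence)
```

The `max_rounds` cap is the important design choice: it bounds both latency and inference cost when the validator is never satisfied.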

Layer 6 — Secure Inference Layer

Outputs are grounded, cited, and delivered via secure environments.
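
One simple, checkable reading of "grounded and cited" is that every sentence carries a citation marker and every marker points at a source that was actually retrieved. The sketch below assumes a `[n]` citation style placed before the closing punctuation. That format is an assumption for illustration, not a standard.

```python
import re

def check_citations(answer, sources):
    """Return (sentences with no citation, cited IDs missing from sources)."""
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", answer) if s.strip()]
    uncited = [s for s in sentences if not re.search(r"\[\d+\]", s)]
    cited_ids = {int(n) for n in re.findall(r"\[(\d+)\]", answer)}
    unknown = sorted(cited_ids - set(sources))
    return uncited, unknown

# Hypothetical retrieved sources, keyed by citation number.
sources = {1: "compliance_policy_v3.pdf", 2: "policy_change_log.md"}
answer = (
    "The policy changed in March [1]. "
    "Hiring now requires background checks [3]. "
    "This affects all offices."
)

uncited, unknown = check_citations(answer, sources)
print(uncited)  # ['This affects all offices.']
print(unknown)  # [3]
```

A check like this does not prove an answer is correct, only that its claims are traceable. It is a cheap gate to run before an answer leaves the inference layer.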

Why Traditional Enterprise AI Search Is Breaking Down

Enterprise search still works—for simple queries:

“Where is the compliance policy?”

But it fails for complex ones:

“What changed in our compliance policy, and how does it affect hiring?”

This is why organizations are shifting toward AI knowledge management systems that replace enterprise search, architectures in which context, not just content, drives results.

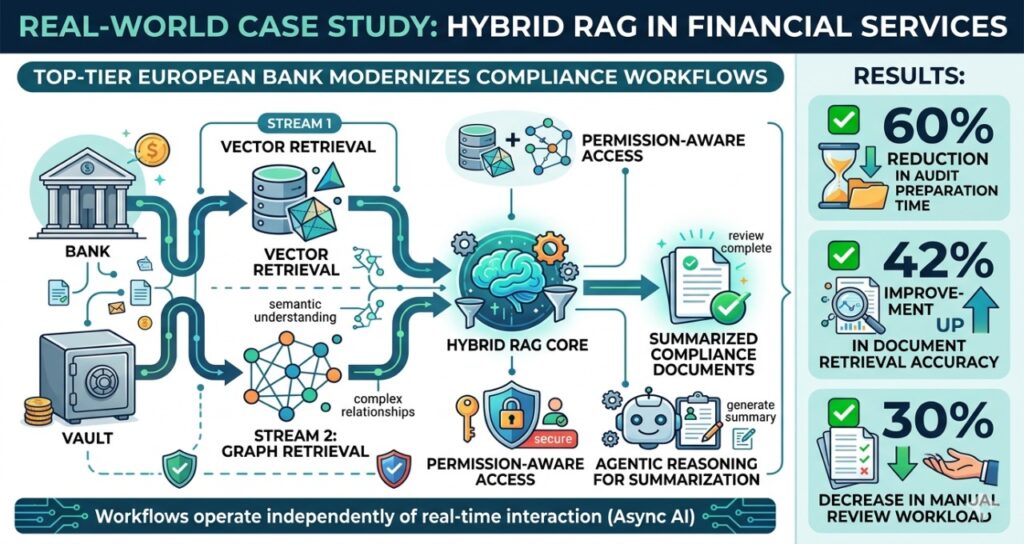

Real-World Case Study: Hybrid RAG in Financial Services

A top-tier European bank implemented a hybrid RAG architecture to modernize its compliance workflows.

Results:

- ✅ 60% reduction in audit preparation time

- ✅ 42% improvement in document retrieval accuracy

- ✅ 30% decrease in manual review workload

Instead of relying on static search, the system used:

- vector + graph retrieval

- permission-aware access

- agentic reasoning for summarization

This shift mirrors patterns seen in async AI tools for global teams, where workflows operate independently of real-time interaction.

Latency vs Accuracy: When to Use RAG vs AI Search

Here’s the real tradeoff:

- AI Search → Fast (<100ms)

- RAG → Slower (200ms–1.5s)

But the payoff is accuracy.

Decision Matrix:

| Use Case | Best System |
| --- | --- |
| File discovery | AI Search |
| Compliance / legal | RAG |
| Financial analysis | RAG |
| Workflow automation | Agentic RAG |
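
The matrix translates naturally into a routing function. The sketch below is one possible encoding. The use-case keys, the fallback to search for unknown use cases, and the rule that a sub-200ms budget forces search are assumptions layered on top of the table, using the 200ms lower bound quoted above for RAG.

```python
# Use case -> system, taken from the decision matrix.
ROUTES = {
    "file_discovery": "ai_search",
    "compliance_legal": "rag",
    "financial_analysis": "rag",
    "workflow_automation": "agentic_rag",
}

RAG_MIN_LATENCY_MS = 200  # lower bound of the 200ms to 1.5s range cited for RAG

def route(use_case, latency_budget_ms):
    """Pick a system for a query, falling back to search when the budget is too tight."""
    system = ROUTES.get(use_case, "ai_search")  # assumption: unknown use cases go to search
    if system != "ai_search" and latency_budget_ms < RAG_MIN_LATENCY_MS:
        return "ai_search"  # assumption: a fast partial result beats a timeout
    return system

print(route("compliance_legal", 1500))  # rag
print(route("compliance_legal", 100))   # ai_search
print(route("file_discovery", 100))     # ai_search
```

Whether a compliance query should ever degrade to plain search is a policy decision. Some teams would rather return an error than an unsynthesized result.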

In regulated environments that rely on SOC 2-compliant AI productivity tools, accuracy improvements of 20–40% often justify the higher latency.

The Hidden Risk: Permissions and Data Leakage

Most RAG discussions ignore a critical issue:

What happens if AI retrieves restricted data?

Without permissions mirroring, enterprise AI systems can expose sensitive information.

Modern systems now:

- integrate with identity providers (Okta, Microsoft Entra ID, formerly Azure AD)

- enforce real-time access validation

- restrict retrieval at query level
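
Restricting retrieval at query level means filtering by permission before anything is ranked or handed to a model, not after. Here is a minimal sketch. The `Doc` shape and group names are hypothetical, and a real deployment would resolve `user_groups` from the identity provider at request time.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Doc:
    doc_id: str
    text: str
    allowed_groups: frozenset  # groups mirrored from the source system's ACL

def permitted(docs, user_groups):
    """Keep only documents the user may see (any shared group grants access)."""
    groups = set(user_groups)
    return [d for d in docs if d.allowed_groups & groups]

def retrieve_for_user(query, docs, user_groups):
    """Filter by permission first, then match; restricted text never reaches ranking."""
    terms = query.lower().split()
    candidates = permitted(docs, user_groups)
    return [d.doc_id for d in candidates if any(t in d.text.lower() for t in terms)]

docs = [
    Doc("salary_bands", "Salary bands for 2026 hiring", frozenset({"hr"})),
    Doc("hiring_guide", "Hiring process overview", frozenset({"hr", "engineering"})),
]

print(retrieve_for_user("hiring", docs, ["engineering"]))  # ['hiring_guide']
print(retrieve_for_user("hiring", docs, ["hr"]))           # ['salary_bands', 'hiring_guide']
```

Filtering after generation is too late: by then restricted text may already have shaped the answer.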

This is no longer optional—it’s mandatory for enterprise AI deployment.

Build vs Buy vs Hybrid RAG: CTO Decision Matrix (2026)

| Metric | Build | Buy | Hybrid |
| --- | --- | --- | --- |
| Time to Value | 4–9 months | Days | 2–4 months |
| Control | Full | Limited | Balanced |
| Cost | High upfront | Subscription | Variable |
| Maintenance | High | Low | Moderate |
| Best For | High-security orgs | Fast deployment | Scalable teams |

Organizations already using AI productivity tools for remote teams are increasingly adopting hybrid approaches to balance speed and control.

Agentic RAG Systems: From Answers to Execution

The next evolution is Agentic RAG.

Instead of:

“Here’s the document”

You get:

“I found the contract, checked expiration, and drafted a response.”
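
The three steps in that response (find, check, draft) can be sketched as a plain function. This is a deterministic stand-in: in an agentic system a model would decide which tools to call and write the draft itself. The contract store, the 30-day notice window, and the draft wording are all assumptions.

```python
from datetime import date

def handle_contract(contracts, name, today, notice_days=30):
    """Find a contract, classify its expiration status, and draft a note if needed."""
    contract = contracts.get(name)
    if contract is None:
        return {"status": "not_found", "days_left": None, "draft": None}
    days_left = (contract["expires"] - today).days
    if days_left < 0:
        status = "expired"
    elif days_left <= notice_days:
        status = "expiring_soon"
    else:
        status = "active"
    draft = None
    if status != "active":
        label = status.replace("_", " ")
        expires = contract["expires"].isoformat()
        draft = f"Contract '{name}' is {label} (expiration date {expires}). Please advise on renewal."
    return {"status": status, "days_left": days_left, "draft": draft}

# Hypothetical contract store.
contracts = {"acme_msa": {"expires": date(2026, 3, 15)}}

result = handle_contract(contracts, "acme_msa", today=date(2026, 3, 1))
print(result["status"], result["days_left"])  # expiring_soon 14
print(result["draft"])
```

Keeping the date check in ordinary code, with the model only orchestrating, is one way to keep the "checked expiration" step auditable.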

This shift aligns with the rise of AI agents in project management, where systems actively manage workflows instead of assisting passively.

Scheduling, Coordination, and AI System Automation

Even coordination tasks are being automated.

Organizations now deploy AI scheduling agents for remote teams to reduce operational friction.

At scale, this extends into:

- compliance workflows

- financial approvals

- enterprise reporting

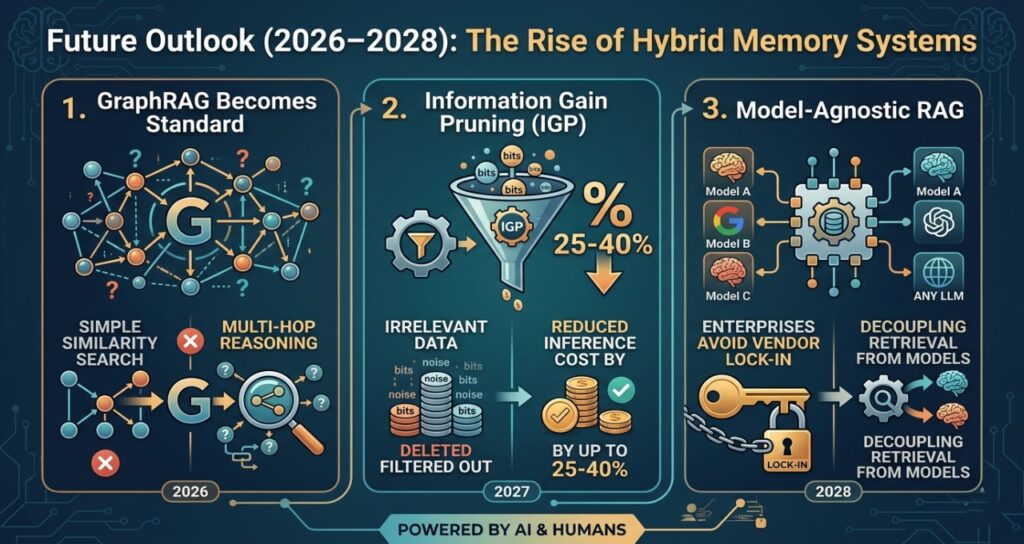

Future Outlook (2026–2028): The Rise of Hybrid Memory Systems

1. GraphRAG Becomes Standard

Multi-hop reasoning replaces simple similarity search.

2. Information Gain Pruning (IGP)

Filtering irrelevant data reduces inference cost by an estimated 25–40%.
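
The article does not define IGP precisely, so the sketch below is only one plausible reading: drop retrieved chunks that add little new information beyond what earlier chunks already cover. Measuring "new information" by the share of unseen words, and the 0.5 threshold, are assumptions chosen for simplicity.

```python
def prune_low_gain(chunks, min_new_ratio=0.5):
    """Keep a chunk only if enough of its words were not seen in earlier kept chunks."""
    seen = set()
    kept = []
    for chunk in chunks:
        tokens = set(chunk.lower().split())
        if not tokens:
            continue
        new_ratio = len(tokens - seen) / len(tokens)
        if new_ratio >= min_new_ratio:
            kept.append(chunk)
            seen |= tokens
    return kept

chunks = [
    "policy v3 adds background checks",
    "policy v3 adds background checks today",       # near-duplicate, little new content
    "hiring managers must update offer templates",
]

kept = prune_low_gain(chunks)
print(kept)
# ['policy v3 adds background checks', 'hiring managers must update offer templates']
```

Fewer chunks in the prompt means fewer input tokens per request, which is where any cost reduction would come from.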

3. Model-Agnostic RAG

Enterprises avoid vendor lock-in by decoupling retrieval from models.
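
In code, decoupling usually comes down to programming against small interfaces. The sketch below uses Python's `typing.Protocol` to show the shape. The class names and the toy generator are hypothetical, and a real generator would wrap a vendor SDK behind the same `generate` method.

```python
from typing import Protocol

class Retriever(Protocol):
    def retrieve(self, query: str) -> list[str]: ...

class Generator(Protocol):
    def generate(self, prompt: str) -> str: ...

class Pipeline:
    """Depends only on the two interfaces, never on a specific vendor."""
    def __init__(self, retriever: Retriever, generator: Generator):
        self.retriever = retriever
        self.generator = generator

    def answer(self, question: str) -> str:
        passages = self.retriever.retrieve(question)
        prompt = "Context:\n" + "\n".join(passages) + "\n\nQuestion: " + question
        return self.generator.generate(prompt)

class StaticRetriever:
    def __init__(self, passages):
        self.passages = passages

    def retrieve(self, query: str) -> list[str]:
        return list(self.passages)

class PrefixGenerator:
    """Toy model stand-in: tags the last prompt line with its own name."""
    def __init__(self, name):
        self.name = name

    def generate(self, prompt: str) -> str:
        return f"[{self.name}] {prompt.splitlines()[-1]}"

retriever = StaticRetriever(["Policy v3 adds background checks."])
print(Pipeline(retriever, PrefixGenerator("model-a")).answer("What changed?"))
print(Pipeline(retriever, PrefixGenerator("model-b")).answer("What changed?"))
```

Swapping `model-a` for `model-b` touches one constructor argument. The retrieval side, and the index behind it, stay as they are.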

FAQ (People Also Ask)

1. What is the difference between enterprise AI search and RAG?

Answer: AI search retrieves documents quickly, while RAG systems generate answers using retrieved data. RAG enables reasoning, synthesis, and automation.

2. When should enterprises use RAG vs AI search?

Answer: Use AI search for fast retrieval tasks and RAG for high-accuracy, context-heavy tasks like compliance, finance, and decision-making.

3. Why is traditional AI search failing in 2026?

Answer: Because enterprises face a context problem, not a discovery problem. Search finds documents but cannot connect or interpret them.

4. Is RAG expensive to run?

Answer: Yes, RAG incurs higher inference costs, but delivers significantly higher accuracy and automation value.

5. What is GraphRAG and why is it important?

Answer: GraphRAG combines vector search with knowledge graphs to enable relationship-based reasoning across data.

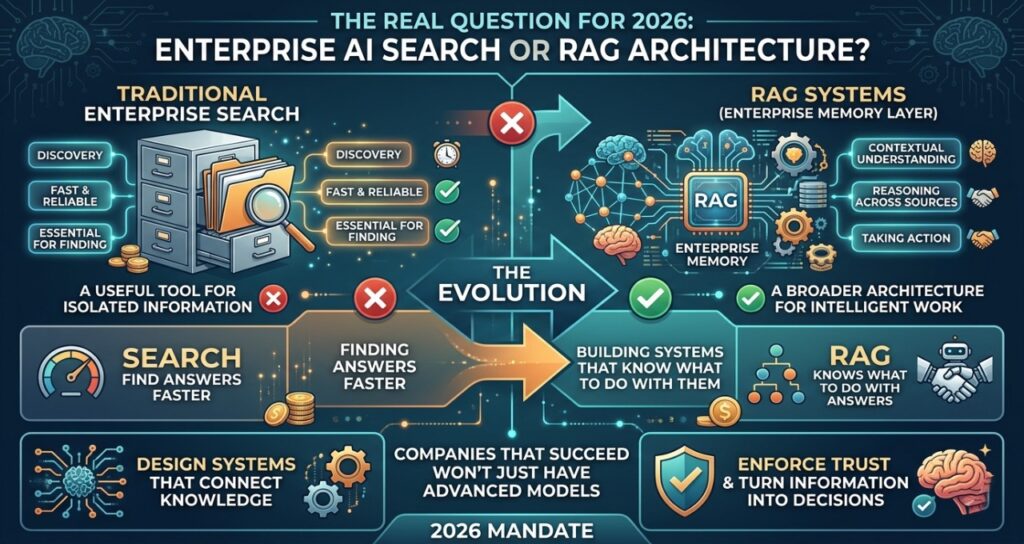

Final Thought

The real question for 2026 isn’t whether to choose enterprise AI search or RAG systems—it’s whether your organization is ready to move beyond tools and think in terms of architecture.

Search will always have a role. It’s fast, reliable, and essential for discovery. But as enterprise data grows more fragmented and interconnected, discovery alone is no longer enough. Systems must understand context, reason across sources, and increasingly, take action.

That’s where RAG—and more importantly, the broader enterprise memory layer—comes in.

The companies that succeed in this next phase of AI adoption won’t be the ones with the most advanced models. They’ll be the ones that design systems capable of connecting knowledge, enforcing trust, and turning information into decisions.

In other words, the future of enterprise AI isn’t about finding answers faster.

It’s about building systems that know what to do with them.

AI Transparency & Editorial Disclosure

Editorial Integrity & Methodology

This technical analysis on Enterprise AI Search vs. RAG Systems was authored by Saameer and the TechPlus Trends editorial team using a proprietary “Human-in-the-Loop” (HITL) workflow. While we leverage advanced agentic RAG systems to synthesize infrastructure benchmarks, architectural diagrams, and compliance frameworks, every strategic conclusion, including the “Enterprise Memory Layer” 6-layer model, is the result of human expertise and manual verification. We prioritize “Information Gain” and technical accuracy over automated content volume.

EU AI Act & Global Regulatory Compliance (Article 50)

In alignment with 2026 transparency standards, readers are advised that this content explores the functionality of generative AI and autonomous agents. The discussion regarding GraphRAG, Inference Layers, and Data Sovereignty is intended to provide an objective, expert-led evaluation of high-risk AI infrastructure as defined under the EU AI Act.

Data Privacy & Security Disclaimer

The architectural frameworks described (such as Permissions Mirroring and VPC-hosted RAG) are theoretical best practices for secure enterprise environments. TechPlus Trends strongly advocates for Privacy-by-Design. Organizations should consult with their internal DPO (Data Protection Officer) and security architects before deploying RAG systems to ensure alignment with local data residency laws and internal SOC 2® or ISO 27001 requirements.

Author Bio

Saameer is an AI infrastructure strategist and technology analyst focused on enterprise-scale systems, distributed architectures, and next-generation AI workflows. His work explores how organizations are transitioning from isolated AI tools to fully integrated “enterprise memory systems” that power decision-making, automation, and compliance.

With a strong focus on EU and US technology ecosystems, Saameer covers emerging trends in RAG architectures, AI regulation, and cloud-native compute infrastructure. His analysis bridges the gap between technical implementation and strategic business impact, helping CTOs and engineering leaders navigate the rapidly evolving AI landscape.