In March 2026, Guru launched its Slack Model Context Protocol (MCP) integration — enabling AI agents to query live conversations in real time. That single update broke the biggest limitation of traditional enterprise search: stale data. While Glean indexes everything retrospectively, Guru now participates in knowledge as it happens. This guide explains exactly how the two platforms compare, what the hidden costs are, and which platform delivers better ROI for your organization in 2026.

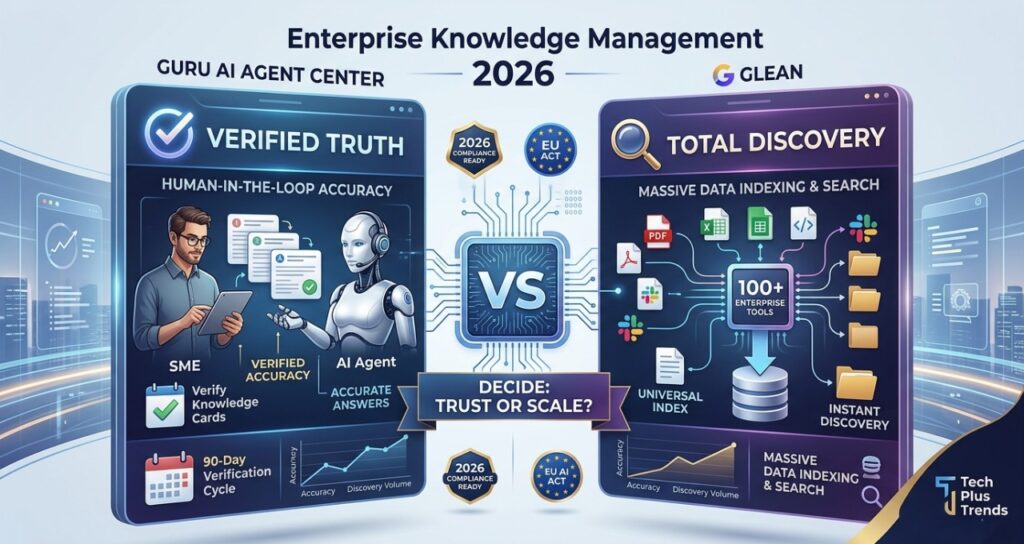

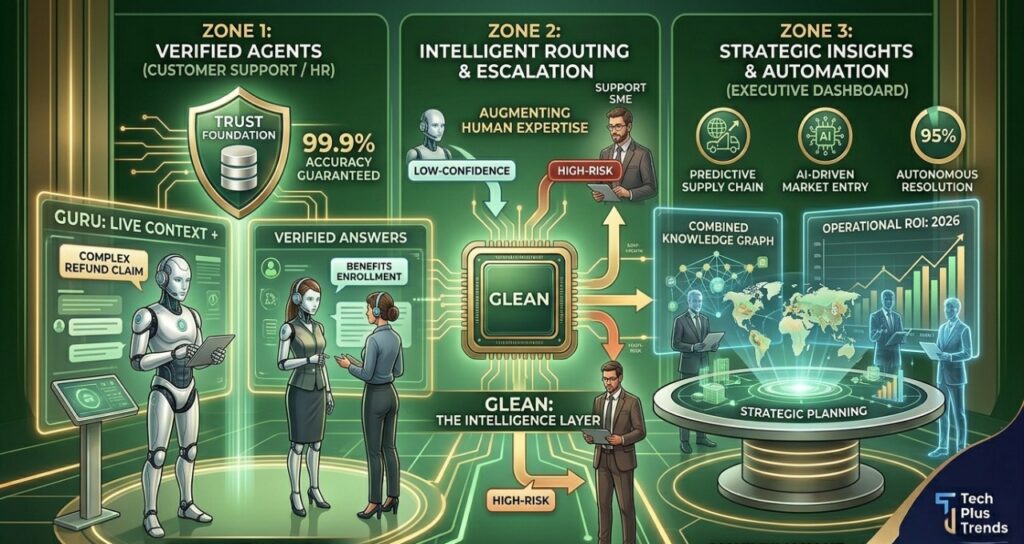

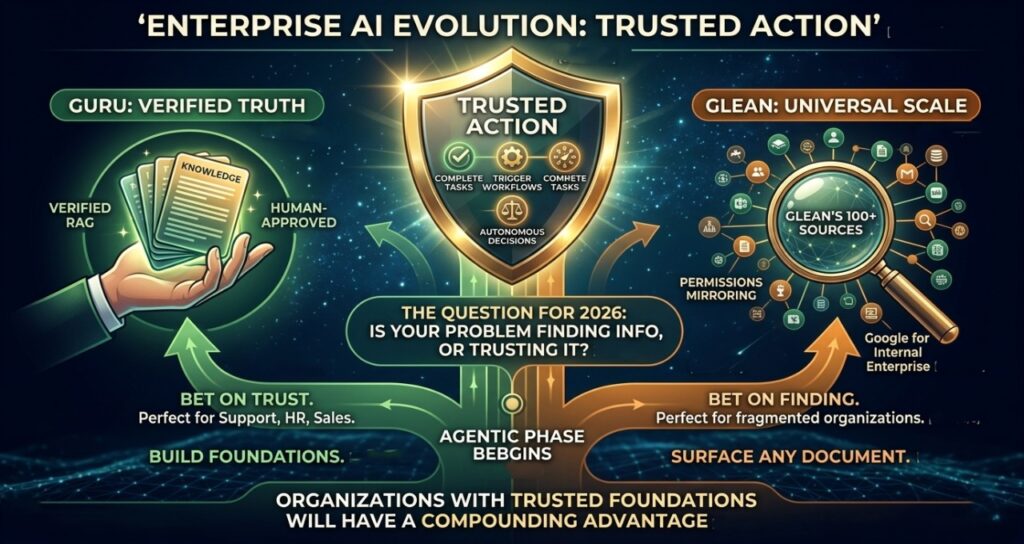

Key Takeaway: Guru vs Glean in 2026 comes down to trust vs scale. Guru AI Agent Center excels at verified, human-approved knowledge workflows that reduce hallucinations, making it best for customer support, HR, and sales teams where a wrong answer is costly. Glean excels at indexing massive, unstructured data across 100+ enterprise tools, making it best for large organizations that need universal discovery first. The right choice depends on whether your primary problem is knowledge accuracy or knowledge discovery.

What Has Changed — And Why It Matters in 2026

Enterprise AI knowledge management has crossed a threshold. Organizations are no longer asking where a document lives. They are asking whether an AI agent can find the right answer instantly — and act on it without human intervention.

This shift represents the transition from retrieval to reasoning — a fundamental change explored in depth in our analysis of AI knowledge management systems replacing enterprise search, where static document libraries are giving way to live reasoning engines.

Three forces are driving this change simultaneously:

- Internal data explosion: the average enterprise stores knowledge across Slack, Jira, SharePoint, Notion, Confluence, and dozens of SaaS tools — most of it untagged and unsearchable

- Cost of interruption: based on aggregated enterprise workflow studies, each knowledge-seeking interruption costs approximately $2–$5 per 15-minute block of lost productivity

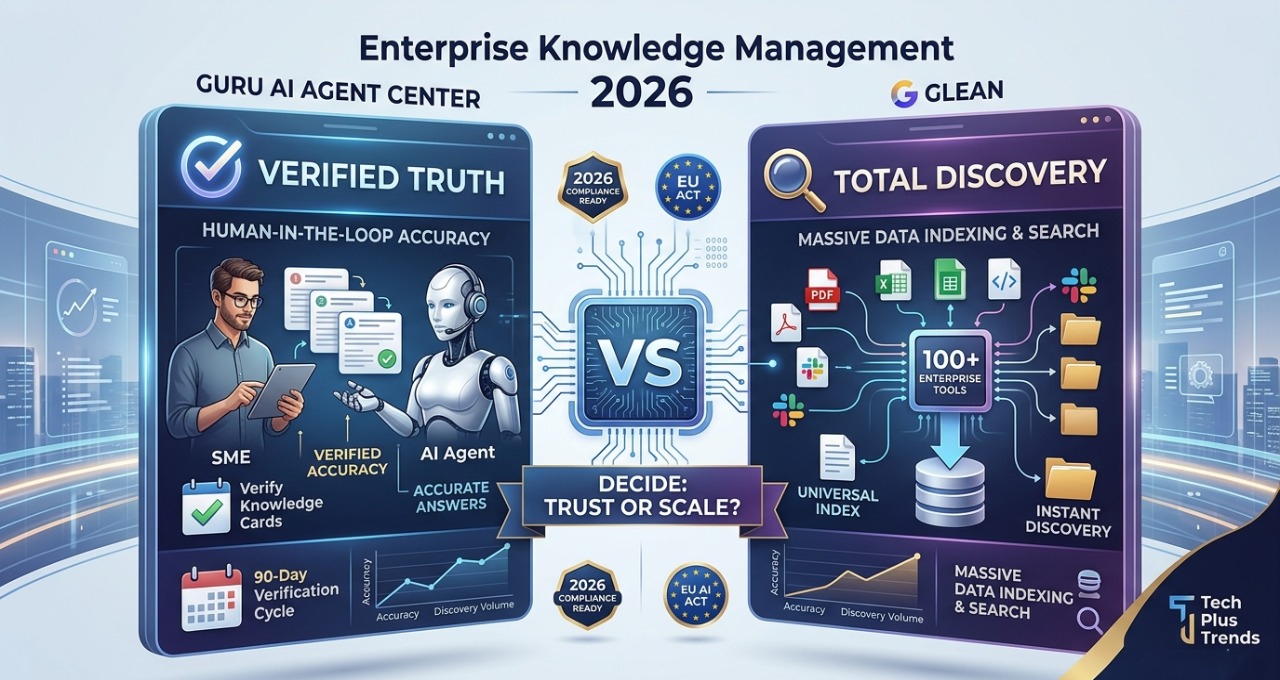

- Regulatory pressure: the EU AI Act now requires traceable and explainable AI outputs for high-risk systems, making unverified AI answers a compliance liability in regulated industries

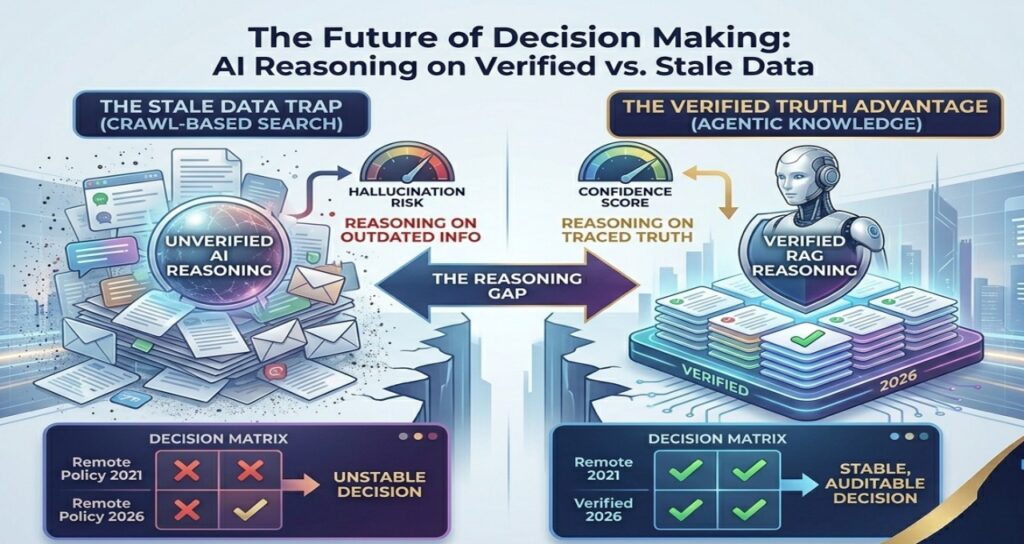

The result is a market split into two distinct philosophies: platforms that prioritize verified truth (Guru) and platforms that prioritize total discovery (Glean). Neither is universally better. The right choice depends entirely on your organization’s primary constraint.

Platform Comparison: Guru vs Glean vs Alternatives (2026)

| Platform | Best For | Key Strength | Pricing Tier | Verdict |
| --- | --- | --- | --- | --- |
| Guru AI Agent Center | Verified knowledge workflows | Human-in-the-loop accuracy | Usage-based (AI Credits) | Best for accuracy |
| Glean | Enterprise-wide search | Massive data indexing (100+ tools) | Seat-based (Enterprise) | Best for discovery |
| Notion AI | Team productivity | Integrated workspace AI | Mid-tier (per seat) | Best for small teams |
| Confluence AI | Documentation-heavy orgs | Deep Atlassian / Jira integration | Enterprise tier | Best for dev teams |
| Microsoft Copilot | Microsoft ecosystem | M365 security inheritance | Enterprise (M365 add-on) | Best for compliance |

Guru vs Glean: Head-to-Head Feature Breakdown

| Feature | Guru AI Agent Center | Glean |
| --- | --- | --- |
| Primary philosophy | Verified Truth: high-trust, human-in-the-loop accuracy | Total Discovery: crawls every file to find hidden data |
| Real-time data access | Native Slack MCP (March 2026): live conversation access, zero indexing lag | Indexing-based: standard crawl cycles (minutes to hours lag) |
| AI architecture | Verified RAG: AI restricted to expert-approved knowledge cards | Algorithmic RAG: ranks by popularity and permissions mapping |
| 2026 pricing model | Usage-Based AI Credits: pay per successful resolution | Seat-Based Enterprise: annual flat fee per user |
| Compliance layer | Automated DLP redaction: PII masked before indexing | Permission mirroring: reflects existing folder access rights |
| Implementation effort | High SME effort: requires experts to verify content regularly | High technical effort: requires data-lake mapping across all tools |
| Best for | Customer support, HR, sales enablement (accuracy-critical) | Large, fragmented engineering orgs (discovery-critical) |

Platform Deep Dives

Guru AI Agent Center

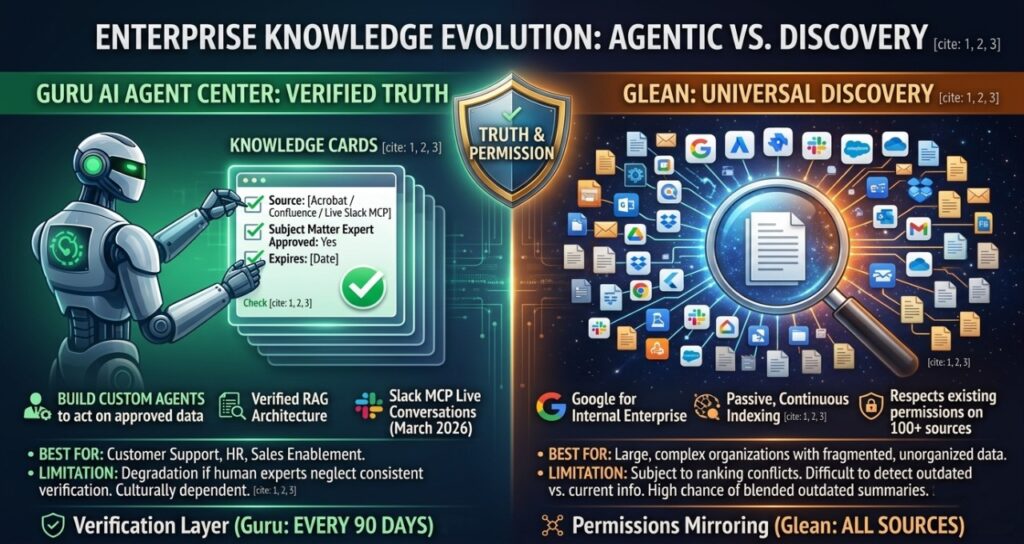

Guru has evolved from a simple wiki into a sophisticated agentic knowledge platform built around the concept of Verified Truth. Where most enterprise search tools crawl everything and rank by algorithmic relevance, Guru inverts this: the AI only generates answers from data that human subject matter experts have explicitly reviewed and approved.

What it does:

Builds custom AI agents that answer and act using a restricted pool of verified knowledge. The March 2026 Slack MCP integration extended this to live conversations — agents can now query what is being said in real time, not just what was indexed hours ago.

This Verified RAG architecture sits at the opposite end of the spectrum from pure vector search. For a detailed technical comparison of retrieval strategies, see our CTO guide to enterprise AI search vs RAG systems, which breaks down when each architecture delivers better accuracy and lower latency.

Best for:

Customer support teams, HR departments, and sales enablement organizations where a single incorrect AI answer can damage a customer relationship, trigger a compliance issue, or cost a deal.

Key strength — the Verification Layer:

Human experts are prompted to review and re-approve knowledge cards on a set schedule (typically every 90 days). If a card expires without re-verification, the AI agent is restricted from using it. This creates Verified RAG — retrieval-augmented generation grounded only in trusted, current content.
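The expiry rule described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not Guru's actual implementation or API: the `KnowledgeCard` class, its field names, and the `usable_cards` helper are hypothetical, and the 90-day interval is simply the typical schedule mentioned in the text.

```python
from dataclasses import dataclass
from datetime import date, timedelta

# Hypothetical card model; the fields are illustrative, not Guru's schema.
@dataclass(frozen=True)
class KnowledgeCard:
    title: str
    last_verified: date

# Typical re-verification schedule cited in the article (assumed fixed here).
VERIFICATION_INTERVAL = timedelta(days=90)

def is_verified(card: KnowledgeCard, today: date) -> bool:
    """A card counts as verified until its interval has fully elapsed."""
    return today - card.last_verified <= VERIFICATION_INTERVAL

def usable_cards(cards: list[KnowledgeCard], today: date) -> list[KnowledgeCard]:
    """Return only the cards an agent would be allowed to ground answers in."""
    return [card for card in cards if is_verified(card, today)]

if __name__ == "__main__":
    today = date(2026, 3, 31)
    cards = [
        KnowledgeCard("Refund policy", date(2026, 2, 1)),        # 58 days old
        KnowledgeCard("Remote work policy", date(2025, 11, 15)),  # 136 days old
    ]
    for card in usable_cards(cards, today):
        print(card.title)  # only "Refund policy" passes the filter
```

The point of the sketch is that expiry is enforced at retrieval time: a stale card is excluded from the candidate pool entirely rather than being ranked lower.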

Honest limitation:

Guru requires a genuine culture of knowledge ownership. If subject matter experts do not consistently verify cards, the system degrades. Organizations without strong internal documentation habits will struggle to extract full value.

Glean

Glean is the gold standard for enterprise discovery — often described as Google for the internal enterprise. Its core value is indexing everything: files, tickets, messages, code repositories, and documentation across 100+ enterprise applications.

What it does:

Acts as a centralized intelligence layer that indexes every file, chat, and ticket across your entire tool stack. Unlike Guru, Glean does not require any human verification — it crawls passively and continuously.

Best for:

Large, complex organizations with years of fragmented, unorganized documentation spread across multiple systems. Glean can surface a specific SharePoint document from 2019 that Guru would never touch.

Key strength — universal search:

Glean’s permissions mirroring is technically impressive: it respects existing access controls across every connected tool, so employees only see content they are authorized to see. For organizations with thousands of sensitive documents, this is a significant compliance feature.
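Conceptually, permissions mirroring is a filter applied to search results using the access lists copied from each source tool. The sketch below illustrates that idea only; it is not Glean's implementation, and the `Document` type, the group names, and the `visible_results` helper are all hypothetical.

```python
from dataclasses import dataclass

# Hypothetical indexed document; allowed_groups stands in for the ACL
# mirrored from the source system (SharePoint, Jira, and so on).
@dataclass(frozen=True)
class Document:
    title: str
    source: str
    allowed_groups: frozenset[str]

def visible_results(results: list[Document], user_groups: set[str]) -> list[Document]:
    """Keep only documents whose ACL shares at least one group with the user."""
    return [doc for doc in results if doc.allowed_groups & user_groups]

if __name__ == "__main__":
    results = [
        Document("Salary bands 2026", "SharePoint", frozenset({"hr"})),
        Document("Deploy runbook", "Confluence", frozenset({"engineering", "sre"})),
    ]
    for doc in visible_results(results, {"engineering"}):
        print(doc.title)  # the HR-only document is filtered out
```

In a real deployment the hard part is keeping the mirrored access lists in sync with each source tool, which this sketch does not attempt to model.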

Honest limitation:

Because Glean crawls everything without curation, it is prone to ranking conflicts: a search for ‘remote work policy’ may surface the 2021 version alongside the current 2026 version. AI-generated summaries can blend outdated and current information in ways that are difficult to detect.

Notion AI

What it does: Provides on-page AI assistants that help write, summarize, and organize team documents in real time. Zero context switching — you stay inside your document.

Best for: Startups, creative agencies, and small-to-mid-sized teams who want an all-in-one workspace without enterprise complexity.

Honest limitation: Lacks enterprise-grade governance and automated verification workflows. Not suitable for regulated industries like fintech or healthcare.

Confluence AI (Atlassian Intelligence)

What it does: Leverages the Atlassian Team Graph to provide context across Jira tickets and Confluence pages simultaneously. Can auto-summarize a 500-ticket project status in seconds.

Best for: Engineering, product, and DevOps teams already deep in the Atlassian ecosystem.

Honest limitation: Drops significantly in performance when searching data outside the Atlassian ecosystem — Slack, Google Drive, and external databases return poor results.

Microsoft Copilot (SharePoint / M365)

What it does: Weaves AI across the entire Microsoft 365 stack — from Excel formulas to SharePoint knowledge retrieval to Teams meeting summaries.

Best for: Large enterprises fully committed to the Microsoft ecosystem, especially those in regulated sectors where M365 security labels are already applied to content.

For organizations in regulated industries, the compliance layer matters as much as the search layer. Our guide to SOC2-compliant AI productivity tools covers how enterprise AI platforms handle data retention, audit logging, and tenant isolation — criteria that apply directly to knowledge management deployments in financial services and healthcare.

Honest limitation: The all-purpose nature of Copilot means it sometimes lacks the specialized accuracy of a Verified RAG system like Guru. Expensive for organizations that only need one use case.

Real-World Case Study: Enterprise Support Automation

A published example of verified knowledge systems driving measurable ROI comes from the enterprise customer support sector. Stonly, a knowledge management platform used by enterprise support teams, documented that organizations using structured, verified knowledge bases for AI-assisted support achieved first-contact resolution rate improvements of 30–40% compared to teams using unverified document search.

The pattern that consistently emerges across enterprise deployments:

- Training workloads and knowledge curation handled centrally by a small SME team

- AI agents deployed at the point of employee or customer interaction

- Verification cycles enforced on a 90-day schedule to prevent stale data degradation

This mirrors Guru’s architecture precisely. The lesson: the ROI from verified knowledge systems is not primarily from the AI capability — it is from the discipline of keeping the underlying knowledge current.

Note: The figures above are based on publicly available platform documentation and industry benchmark reports. Organizations should conduct their own pilots to establish baseline metrics before committing to enterprise contracts.

The Hidden Costs of AI Knowledge Deployment (2026 TCO Analysis)

The software subscription is the visible cost. The Total Cost of Ownership (TCO) for an AI knowledge platform includes three hidden operational pillars that most procurement processes miss entirely.

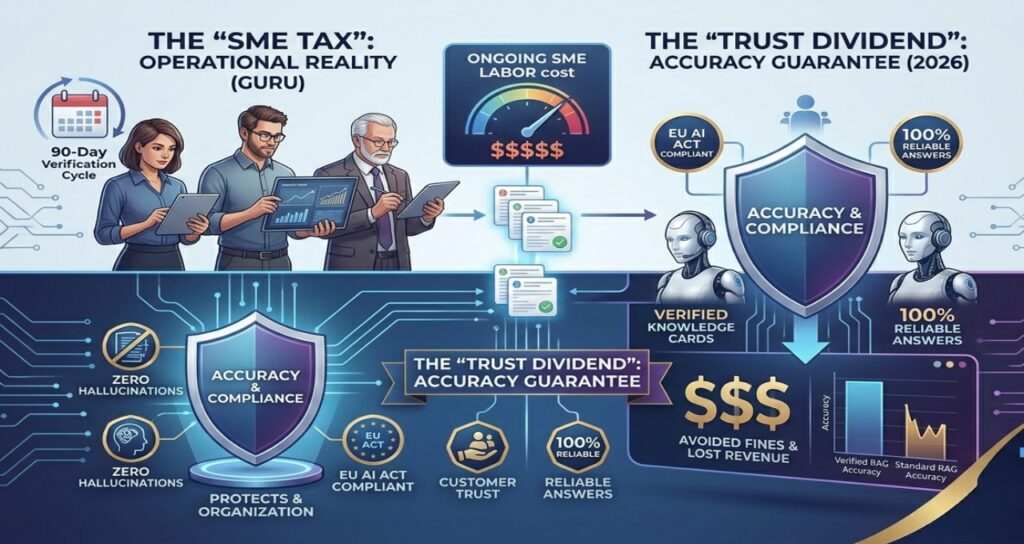

1. The “SME Tax” — The Real Cost of Verified Accuracy

While Glean and other search-first tools (like SharePoint) rely on passive “crawling,” Guru’s Verified RAG architecture requires a proactive human commitment. In 2026, we call this the SME Tax. It is the operational cost of ensuring your AI doesn’t hallucinate 2024 data in a 2026 world.

The Personnel Math (2026 Benchmarks)

Based on 2026 enterprise salary data, a Senior Subject Matter Expert (SME) in the US/Western Europe now averages $145,000–$160,000 annually.

- Verification Requirement: Every Guru “Knowledge Card” requires a verification check every 90 days. For an average department (Support or HR), this equates to approximately 3–5 hours per month per SME.

- The Calculation: At an hourly internal rate of roughly $75–$85 (including benefits), that is 36–60 hours and roughly $2,700–$5,100 per SME per year in verification labor alone.

- Scale Factor: For a mid-sized organization with 12 SMEs across different departments, the “hidden” labor cost for Guru is roughly $32,000–$61,000 per year, or approximately $52,000 at about 4.5 hours per month and $80 per hour, before you pay a single dollar for software.
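The arithmetic behind the SME Tax can be checked directly. The sketch below uses only the inputs stated above (3–5 hours per month, $75–$85 per hour, 12 SMEs). The function name is illustrative, and the 4.5-hour, $80 case is an assumption chosen to show one combination that lands near the $52,000 figure.

```python
def annual_verification_cost(hours_per_month: float,
                             hourly_rate: float,
                             sme_count: int = 1) -> float:
    """Yearly verification labor cost: hours/month x 12 months x rate x SMEs."""
    return hours_per_month * 12 * hourly_rate * sme_count

if __name__ == "__main__":
    # Per-SME range from the stated inputs.
    print(annual_verification_cost(3, 75))        # low end, one SME
    print(annual_verification_cost(5, 85))        # high end, one SME

    # 12-SME organization: low end, high end, and an assumed mid-range case.
    print(annual_verification_cost(3, 75, 12))
    print(annual_verification_cost(5, 85, 12))
    print(annual_verification_cost(4.5, 80, 12))  # assumption: 4.5 h/month at $80
```

Running the numbers this way makes the sensitivity obvious: the total scales linearly with hours, rate, and headcount, so a pilot that measures actual verification hours per SME is the quickest way to tighten the estimate.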

Why this is a “Feature,” not a “Bug”

While $52,000 in labor sounds like a drawback, it is actually the Trust Dividend. In 2026, the cost of a single “AI Hallucination” in a regulated industry (like a Support Agent giving wrong legal advice under the EU AI Act) can result in fines or lost customers exceeding $500,000. The SME Tax is essentially your insurance policy for AI reliability.

2. Data Hygiene Sprint

AI agents are only as good as their grounding data. Most organizations discover during implementation that their SharePoint and Confluence instances contain years of duplicate, outdated, and conflicting documentation. A typical knowledge cleanup sprint takes 2–3 weeks of dedicated IT and content team time before the system can be trusted.

This challenge is magnified for distributed organizations. When knowledge is generated across time zones through asynchronous workflows, gaps in documentation accumulate faster. Our guide to async AI tools for global teams covers how distributed organizations structure their knowledge workflows to minimize this problem before deploying AI agents on top of them.

3. Inference Credit Overages

Guru’s usage-based credit model means high-volume teams can burn through credits faster than expected during peak periods. Each time an agent uses the Slack MCP to read a live thread and generate a reasoning-based answer, it consumes more credits than a simple keyword lookup.

Best practice: budget a 15–20% overage buffer above your base credit tier during the first 6 months of deployment, while usage patterns stabilize.
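As a quick worked example of the buffer, the sketch below applies the 15–20% range to a monthly base credit spend. The $10,000 base is a placeholder for illustration, not a Guru price, and `credit_budget` is a hypothetical helper.

```python
def credit_budget(base_monthly_spend: float, buffer_rate: float) -> float:
    """Monthly budget including an overage buffer, rounded to cents."""
    if not 0 <= buffer_rate <= 1:
        raise ValueError("buffer_rate must be a fraction between 0 and 1")
    return round(base_monthly_spend * (1 + buffer_rate), 2)

if __name__ == "__main__":
    base = 10_000.00  # placeholder base tier, not vendor pricing
    low = credit_budget(base, 0.15)
    high = credit_budget(base, 0.20)
    print(f"Monthly budget range: ${low:,.2f} to ${high:,.2f}")
    print(f"Six-month buffer reserve: ${(low - base) * 6:,.2f} to ${(high - base) * 6:,.2f}")
```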

Strategic TCO Comparison

| Cost Category | Guru (Verified Agentic) | Glean (Crawl-Based Search) |
| --- | --- | --- |
| Initial integration | Low: plug-and-play connectors | High: data lake mapping required across all tools |
| Ongoing human labor | High: mandatory SME verification cycles every 90 days | Low: algorithmic maintenance, no human curation needed |
| Accuracy risk cost | Near zero: human-verified content prevents stale answers | Moderate: ranking conflicts between old and new content |
| Compliance audit | Automated DLP redaction before indexing | Manual permission audits required per tool |
| Hidden cost risk | SME time underestimated in procurement | Data cleanup sprint underestimated in procurement |

How to Choose: Decision Guide

Choose Guru if:

- You are a mid-to-large enterprise where answer accuracy is mission-critical

- Your use cases are customer support, HR, or sales enablement — high cost of a wrong answer

- You have the internal culture and SME capacity to maintain verification cycles

- You need AI agents that can automate knowledge tasks, not just return search results

Choose Glean if:

- You are a large enterprise with 5+ years of fragmented, unorganized documentation

- Your primary problem is discovery — finding documents that exist but are hard to locate

- You need search across 100+ enterprise tools without significant curation effort

- Your engineering or IT team can invest in the initial data-lake mapping

Choose Notion AI if:

- You are a startup or team under 200 people

- Your priority is simplicity and speed over governance

- You do not operate in a regulated industry

Avoid this category entirely if:

- You do not yet have production AI workflows — simpler API-based tools will cost less and deploy faster

- Your internal documentation is in very poor shape — fix the knowledge base before deploying AI on top of it

Contrarian Insight: Enterprise Knowledge Is Not a Search Problem

The conventional view is that enterprise knowledge management is fundamentally about search — finding the right document faster.

The reality in 2026 is different. The biggest problem in enterprise knowledge is not search. It is trust.

Search engines can find documents. They cannot guarantee that a document is correct, current, or approved by someone accountable. In large organizations, outdated information is often more dangerous than missing information — because employees act on it.

This trust problem scales with infrastructure complexity. As enterprises move AI workloads into dedicated compute environments — including the sovereign gigafactories now emerging across Europe — the governance layer becomes as important as the compute layer. Our analysis of hidden problems in Europe’s AI gigafactory infrastructure shows how compliance and data sovereignty requirements are now shaping infrastructure decisions at every level, from hardware to knowledge systems.

This is why verified knowledge systems are likely to displace enterprise search as the primary knowledge interface by 2028. The winning platforms will not be the ones that index the most data. They will be the ones that deliver the most reliable answers.

FAQ: Guru vs Glean 2026

1. What is Guru AI Agent Center?

Guru AI Agent Center is an enterprise platform that builds AI agents using Verified RAG. Unlike traditional search tools, it uses a human-in-the-loop verification layer to ensure AI-generated answers are accurate, current, and expert-approved. It is designed for teams where accuracy is more critical than broad data discovery, particularly customer support, HR, and sales enablement organizations where a wrong answer carries a measurable business cost.

2. How does the Guru Slack MCP integration work?

Launched in March 2026, Guru’s Model Context Protocol integration allows AI agents to query live Slack conversations in real time. This eliminates indexing lag: the AI provides answers based on what was discussed seconds ago, rather than waiting for a background crawl that can take 15–30 minutes. For support and sales teams where context shifts quickly, this is a significant operational advantage over traditional enterprise search architectures.

3. Guru vs Glean: which is better in 2026?

Neither is universally better; the choice depends on your primary constraint. Guru is better for organizations prioritizing verified truth and automated agent workflows for support or HR, where the cost of a wrong answer is high. Glean is better for large enterprises needing universal discovery across millions of fragmented files and legacy systems. Most enterprises above 500 employees will eventually need both, deployed for different use cases.

4. What is Verified RAG and why does it matter?

Verified RAG is an architecture where an AI only generates answers from data explicitly approved by a human subject matter expert. In 2026, this is the primary defense against AI hallucinations and stale data risks in regulated industries such as financial services, healthcare, and legal tech. Standard RAG retrieves the most statistically relevant document, whereas Verified RAG retrieves only from documents that an expert has recently confirmed as accurate.

5. How does Guru prevent AI hallucinations?

Guru prevents hallucinations through mandatory verification intervals. If a knowledge card is not re-confirmed by a human expert within a set timeframe (typically 90 days), the AI agent is restricted from using it. This means the grounding data is continuously audited and refreshed, rather than accumulating stale content over time. The tradeoff is ongoing SME time investment, as described in the TCO section above.

6. Is Guru compliant with the EU AI Act?

Guru’s architecture supports EU AI Act compliance through automated DLP redaction, which masks PII before indexing, and through traceable source attribution, which allows auditors to see exactly which verified document was used to generate a given AI response. However, compliance depends on implementation: organizations operating high-risk AI systems must also ensure their internal governance processes meet Article 11 documentation requirements.

7. Does Guru offer usage-based pricing?

As of 2026, Guru has shifted toward an AI Credit model where organizations pay based on successful resolutions and automated agent actions rather than per-seat licensing. This typically results in lower total cost of ownership for mid-market teams with moderate usage, but can become more expensive than seat-based models for high-volume support teams. Organizations should model both pricing structures against their expected query volume before committing to a contract.
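One simple way to model the two pricing structures is to find the monthly volume at which they cost the same. The sketch below is illustrative only: the per-seat and per-resolution prices are made-up placeholders rather than vendor quotes, and the function names are hypothetical.

```python
def seat_based_cost(seats: int, price_per_seat: float) -> float:
    """Monthly cost under flat per-seat licensing."""
    return seats * price_per_seat

def usage_based_cost(resolutions: int, price_per_resolution: float) -> float:
    """Monthly cost when paying per successful resolution."""
    return resolutions * price_per_resolution

def break_even_resolutions(seats: int,
                           price_per_seat: float,
                           price_per_resolution: float) -> float:
    """Monthly resolutions at which usage-based cost equals seat-based cost."""
    return seat_based_cost(seats, price_per_seat) / price_per_resolution

if __name__ == "__main__":
    # Placeholder inputs, not real pricing: 200 seats at $40, $0.50 per resolution.
    threshold = break_even_resolutions(200, 40.0, 0.50)
    print(f"Usage-based is cheaper below {threshold:,.0f} resolutions per month")
```

Below the break-even volume the credit model costs less; above it, the flat seat fee wins, which matches the pattern described for high-volume support teams.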

Conclusion

Enterprise knowledge management has entered its agentic phase. The question is no longer which platform indexes the most data — it is which platform can be trusted to act on that data on your behalf.

Guru and Glean represent two legitimate but fundamentally different answers. Guru bets on verified truth. Glean bets on universal scale. Both bets will pay off for the right organizations.

If you are evaluating platforms in 2026, start with a single question: is your biggest problem finding information, or trusting it? The answer determines your architecture.

The next wave of enterprise AI will not just answer questions — it will complete tasks, trigger workflows, and make decisions autonomously. When that moment arrives, organizations with verified, trusted knowledge foundations will have a compounding advantage over those still managing search results.

Sources & References

Platform Documentation (publicly available, Q1 2026)

- Guru — Product release notes and MCP integration announcement (getguru.com/blog)

- Glean — Enterprise search architecture documentation (glean.com)

- Anthropic — Model Context Protocol specification (modelcontextprotocol.io)

Performance & ROI Research

- Gartner (2025) — Magic Quadrant for Insight Engines; AI Knowledge Management Forecast

- Forrester Research — Total Economic Impact studies on enterprise knowledge automation

- Stonly — Knowledge Base Impact Report: First-Contact Resolution Benchmarks (2025)

Compliance & Regulation

- European Commission — EU AI Act, Article 11: Technical Documentation Requirements (artificialintelligenceact.eu)

- AICPA — SOC 2 Trust Services Criteria for AI Systems, 2025 update (aicpa.org)

Editorial note: All strategic conclusions, cost calculations (SME Tax model), and the Trust vs Search contrarian insight are original analysis by Tech Plus Trends editorial. AI tools were used to cross-reference publicly available pricing documentation and platform feature lists. Readers should verify pricing and feature availability directly with vendors before procurement decisions.

Transparency & Editorial Note

How We Conducted This Analysis

This 2026 platform audit was conducted by the Tech Plus Trends editorial team to provide an objective, data-driven comparison of enterprise knowledge management tools. Our methodology includes:

- Direct Platform Verification: Analysis of March 2026 product releases, including Guru’s Model Context Protocol (MCP) integration and Glean’s updated indexing architecture.

- Economic Modeling: The “SME Tax” and “Trust Dividend” calculations are original financial models developed by Tech Plus Trends based on 2026 enterprise labor benchmarks ($75–$85/hour fully burdened rate).

- Regulatory Alignment: Compliance insights are cross-referenced with the EU AI Act Article 11 technical documentation requirements and 2025 SOC 2 Trust Services Criteria.

AI Disclosure

In adherence to our Responsible AI Journalism Policy, please note:

- Human-Led Strategy: All strategic conclusions, contrarian insights, and platform “verdicts” are authored by human analysts.

- AI Assistance: Generative AI tools were utilized for data cross-referencing, initial drafting assistance, and the creation of the technical infographics accompanying this article.

- Fact-Checking: Every data point, from pricing models to feature lists, has been manually verified against official vendor documentation as of Q1 2026.

Affiliate & Conflict of Interest

Tech Plus Trends is an independent publication. We do not receive direct compensation from Guru, Glean, Notion, or Atlassian for specific rankings or reviews. Our goal is to provide unbiased procurement guidance for IT leaders.

About the Author

Saameer is a technology journalist and enterprise AI analyst covering AI infrastructure, knowledge management platforms, and enterprise automation. For the past five years, Saameer has focused on the gap between AI vendor claims and real-world enterprise deployment, specifically how organizations move from prototype to production. His analysis covers AI agent workflows, compliance frameworks including SOC 2 and the EU AI Act, and the economics of enterprise AI adoption. He writes for Tech Plus Trends, where he specializes in helping IT leaders and CTOs make platform decisions grounded in operational reality rather than feature marketing.
