Across Germany and France, B2B contractors working with banks and insurers are increasingly using AI tools to write, refactor, test, and document code. In most cases, this usage is informal: personal large language model (LLM) accounts, browser-based assistants, or tools that sit outside a client’s approved development environment.

What has changed is not the technology itself, but how risk is now traced and assigned.

Under the Digital Operational Resilience Act (DORA) and the EU Artificial Intelligence Act, regulated financial institutions must demonstrate control over all ICT-related risks, including those introduced by third parties. As a result, contractors are no longer evaluated only on deliverables, but also on how those deliverables were produced.

This creates the “Shadow AI” liability trap: unmanaged AI use that is not illegal, but contractually and operationally exposed.

Key Takeaways (TL;DR)

- “Shadow AI” means AI tools used outside a client’s approved and auditable stack

- Regulators rarely fine freelancers directly

- Indirect enforcement happens through contracts and audits

- DORA enables banks to assess risk down to individual contributors

- The biggest exposures are termination, indemnity gaps, and uninsured loss

My Information Gain

Most public analysis of DORA and the EU AI Act focuses on enterprise compliance programs. What is largely missing is a practical explanation of how these frameworks affect individual contractors, especially freelancers embedded in regulated delivery chains.

This article explains:

- How DORA’s third-party risk logic reaches individual freelancers

- Why personal AI tooling is treated as ICT risk

- What contractors are expected to prove during audits

- Where professional indemnity coverage quietly fails

My Deep Analysis

How DORA Reaches Individual Contractors

DORA does not instruct regulators to fine freelancers directly. Instead, it operates through indirect enforcement.

Under DORA's third-party risk provisions (Articles 28 and 30), financial institutions must be able to monitor, mitigate, and exit ICT third-party arrangements without destabilising operations, and their contracts must contain termination rights to match. In practice, banks respond by strengthening contracts, particularly with individual contractors, to include:

- Mandatory tooling declarations

- Audit rights extending to development practices

- Termination-for-convenience clauses where risk transparency cannot be demonstrated

This is how regulatory pressure reaches the individual level: not through fines, but through contractual exit mechanisms. Contractors who cannot provide a clear tooling declaration are increasingly classified as unmanaged ICT risk.
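
As a rough illustration of what a tooling declaration could look like in machine-readable form, the sketch below models one as a small Python record that serialises to JSON. The field names (`account_type`, `client_approved`, and so on) and the sample tool names are assumptions made for this example; neither DORA nor any bank template cited here prescribes them.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import List


@dataclass
class ToolEntry:
    name: str              # tool or service name (hypothetical examples below)
    purpose: str           # what it is used for on this engagement
    account_type: str      # "personal" or "client-provided"
    client_approved: bool  # whether the client has approved the tool


@dataclass
class ToolingDeclaration:
    contractor: str
    engagement: str
    tools: List[ToolEntry] = field(default_factory=list)

    def unapproved(self) -> List[str]:
        """Names of declared tools that the client has not approved."""
        return [t.name for t in self.tools if not t.client_approved]

    def to_json(self) -> str:
        """Serialise the declaration, e.g. for attachment as a contract annex."""
        return json.dumps(asdict(self), indent=2)


declaration = ToolingDeclaration(
    contractor="Example Contractor",
    engagement="payment-reconciliation",
    tools=[
        ToolEntry("client-ide-assistant", "code completion", "client-provided", True),
        ToolEntry("personal-llm-chat", "unit test drafts", "personal", False),
    ],
)

print(declaration.unapproved())
print(declaration.to_json())
```

Keeping the declaration as structured data rather than free text makes it straightforward to diff between audit cycles and to spot unapproved entries before the client does.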

This dynamic is already visible in audit preparation cycles supporting German-regulated banks, especially in near-shore hubs such as Warsaw, where developers are being asked to document environments and tooling choices as part of DORA readiness reviews (see: https://techplustrends.com/dora-2026-audit-warsaw-banking-java-25/).

Why “Shadow AI” Is Flagged

Unmanaged AI tools create three audit problems for regulated institutions:

- No governance — the tool has not been risk-assessed or approved

- No traceability — outputs cannot be reliably reproduced

- No accountability chain — responsibility for defects becomes unclear

In banking delivery work that involves AI-assisted development, personal LLM usage is increasingly treated as uncontrolled ICT input, even when the resulting code functions correctly (see: https://techplustrends.com/shadow-ai-dora-2026-warsaw-banking-guide/).
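
The three audit problems listed above can be expressed as a simple self-check. The following is a minimal sketch, assuming that "governance" maps to a completed risk assessment, "traceability" to logged prompts and outputs, and "accountability" to a named human reviewer. These mappings and all field names are illustrative assumptions, not an auditor's actual criteria.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class AiToolUsage:
    tool: str
    risk_assessed: bool      # governance: has the tool been assessed and approved?
    prompts_logged: bool     # traceability: can outputs be traced to logged inputs?
    reviewer: Optional[str]  # accountability: named human who reviewed the output


def audit_findings(usage: AiToolUsage) -> List[str]:
    """Return the audit problems (as named in the article) that apply to this usage."""
    findings = []
    if not usage.risk_assessed:
        findings.append("no governance")
    if not usage.prompts_logged:
        findings.append("no traceability")
    if not usage.reviewer:
        findings.append("no accountability chain")
    return findings


personal = AiToolUsage("personal-llm-chat", False, False, None)
managed = AiToolUsage("client-ide-assistant", True, True, "lead.developer")

print(audit_findings(personal))
print(audit_findings(managed))
```

A tool that returns an empty list here is not automatically audit-proof; the check only mirrors the three flags described in this section.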

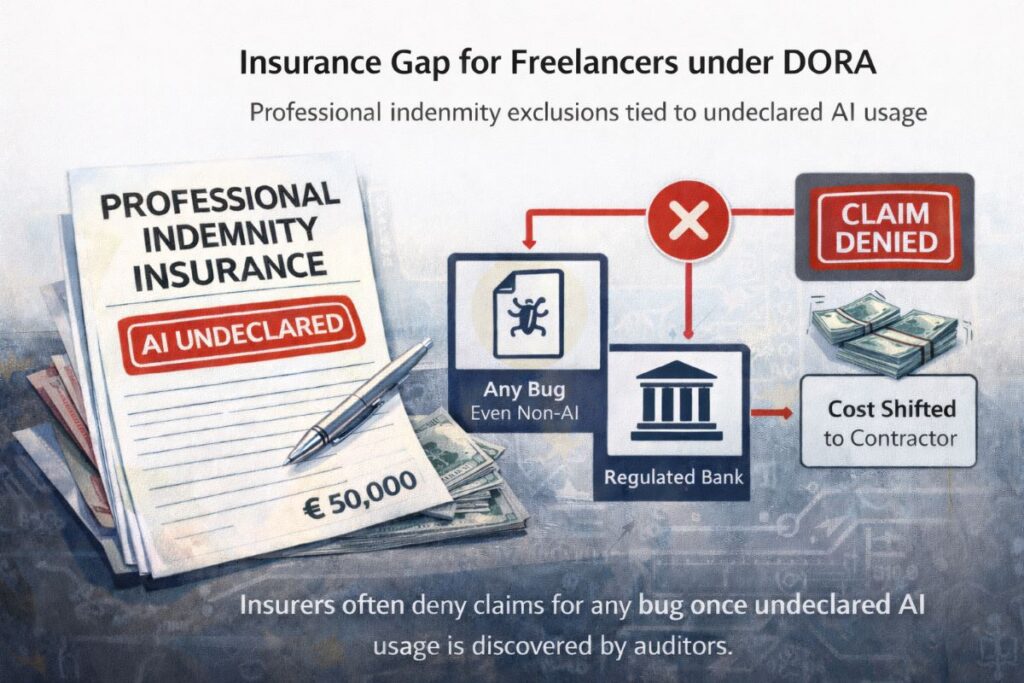

The Insurance Gap Contractors Miss

A second, less visible exposure sits in professional indemnity (PI) insurance.

Many EU PI policies issued or renewed in 2025–2026 now include:

- AI-use disclosure requirements, or

- Broad “technology usage declarations” covering generative tools

If a contractor uses a personal LLM account that has not been declared, insurers may treat this as a material non-disclosure. In practice, even a bug unrelated to AI can then become grounds to deny a claim once the undeclared AI usage is discovered.

This is where the liability trap tightens: contractual termination removes income, and insurance exclusion removes protection.

Technical Proof: What “Audit-Safe” Contractors Are Starting to Do

To survive audits, some contractors are adopting practices borrowed from software supply-chain security.

One emerging best practice is maintaining a Software Bill of Materials (SBOM) for delivered code, with an additional flag indicating:

- which components were AI-suggested, and

- which were human-authored or manually reviewed

This does not eliminate risk — but it provides traceability, which is the core requirement under DORA-driven audits. SBOM-style documentation turns AI use from an invisible liability into a governed input.
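
To make the idea concrete, here is a minimal sketch of an SBOM-style record with a provenance flag per component. It loosely follows the CycloneDX JSON layout, where components can carry free-form name/value `properties`, but it has not been validated against the CycloneDX schema. The property names (`example:provenance:origin`, `example:provenance:reviewed-by`) and the file paths are hypothetical and do not belong to any standard taxonomy.

```python
import json

ORIGIN_KEY = "example:provenance:origin"         # hypothetical property name
REVIEWER_KEY = "example:provenance:reviewed-by"  # hypothetical property name
ALLOWED_ORIGINS = {"ai-suggested", "human-authored"}


def component(path, origin, reviewed_by=None):
    """Describe one delivered file and how it was produced."""
    if origin not in ALLOWED_ORIGINS:
        raise ValueError(f"unknown origin: {origin!r}")
    props = [{"name": ORIGIN_KEY, "value": origin}]
    if reviewed_by:
        props.append({"name": REVIEWER_KEY, "value": reviewed_by})
    return {"type": "file", "name": path, "properties": props}


def build_sbom(components):
    """Wrap components in a CycloneDX-like envelope (illustrative only)."""
    return {
        "bomFormat": "CycloneDX",
        "specVersion": "1.5",
        "version": 1,
        "components": list(components),
    }


def ai_suggested(sbom):
    """List component names flagged as AI-suggested."""
    return [
        c["name"]
        for c in sbom["components"]
        if any(
            p["name"] == ORIGIN_KEY and p["value"] == "ai-suggested"
            for p in c["properties"]
        )
    ]


sbom = build_sbom([
    component("src/reconcile.py", "human-authored"),
    component("tests/test_reconcile.py", "ai-suggested", reviewed_by="contractor"),
])

print(json.dumps(sbom, indent=2))
print(ai_suggested(sbom))
```

Recording the reviewer alongside the origin keeps the two questions separate: where a component came from, and who took responsibility for it.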

Case Study / Real-World Scenario

Composite scenario based on multiple real contractor experiences

A freelance backend developer works on a payment reconciliation system for a German bank. To speed up delivery, the contractor uses a personal LLM account to generate unit tests and refactor legacy logic.

During a DORA readiness review:

- The bank’s internal audit team requests a tooling declaration

- The contractor discloses personal AI usage

- The AI tool is not on the bank’s approved list and has no audit trail

No regulator intervenes. No system outage occurs.

However:

- The contract allows termination for unmanaged ICT risk

- The engagement is ended early

- A later defect claim is denied by the contractor’s professional indemnity insurer due to an AI-related exclusion clause

The loss is contractual and uninsured — not regulatory — but fully borne by the contractor.

Who Benefits — and Who Gets Exposed — in 2026

| Actor | Benefit | Exposure |
| --- | --- | --- |
| Banks & insurers | Stronger audit defensibility | Reduced contractor flexibility |
| Large consultancies | Approved AI platforms | Higher compliance overhead |
| Independent contractors | Short-term productivity | Termination, insurance gaps |
| Insurers | Clearer exclusions | Client disputes |

Comparison Matrix

| Contractor Choice | Contract Risk | Audit Outcome | Insurance Coverage | Market Signal |
| --- | --- | --- | --- | --- |
| Undeclared personal AI | High | Audit flag | Excluded | Negative |
| Declared but unmanaged AI | Medium-High | Conditional | Uncertain | Neutral |
| Client-approved enterprise AI | Low | Acceptable | Covered | Positive |
| No AI usage | Low | Neutral | Covered | Baseline |
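
The matrix above can also be read as a lookup table. The sketch below encodes its four rows and adds a small `classify` helper that maps three yes/no answers to a row. The helper's decision order is an assumption made for illustration (for instance, it treats client approval as implying declaration); the matrix itself does not define one.

```python
from enum import Enum


class Choice(Enum):
    UNDECLARED_PERSONAL = "Undeclared personal AI"
    DECLARED_UNMANAGED = "Declared but unmanaged AI"
    CLIENT_APPROVED = "Client-approved enterprise AI"
    NO_AI = "No AI usage"


# Values copied from the comparison matrix in this article.
MATRIX = {
    Choice.UNDECLARED_PERSONAL: {
        "contract_risk": "High", "audit_outcome": "Audit flag",
        "insurance": "Excluded", "market_signal": "Negative",
    },
    Choice.DECLARED_UNMANAGED: {
        "contract_risk": "Medium-High", "audit_outcome": "Conditional",
        "insurance": "Uncertain", "market_signal": "Neutral",
    },
    Choice.CLIENT_APPROVED: {
        "contract_risk": "Low", "audit_outcome": "Acceptable",
        "insurance": "Covered", "market_signal": "Positive",
    },
    Choice.NO_AI: {
        "contract_risk": "Low", "audit_outcome": "Neutral",
        "insurance": "Covered", "market_signal": "Baseline",
    },
}


def classify(uses_ai: bool, declared: bool, client_approved: bool) -> Choice:
    """Map a contractor's situation to a matrix row (assumed decision order)."""
    if not uses_ai:
        return Choice.NO_AI
    if client_approved:
        return Choice.CLIENT_APPROVED  # assumption: approval implies declaration
    if declared:
        return Choice.DECLARED_UNMANAGED
    return Choice.UNDECLARED_PERSONAL


row = classify(uses_ai=True, declared=False, client_approved=False)
print(row.value, MATRIX[row])
```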

CoE Framing (Center of Excellence Perspective)

From a bank’s Center of Excellence perspective, the issue is not whether AI improves productivity. The issue is traceability.

If AI influences code, tests, architecture, or documentation, it must be governed like any other ICT component. This governance pressure is already shaping contractor selection and rate premiums in DORA-exposed markets (see: https://techplustrends.com/dora-2026-warsaw-banking-b2b-rates/).

Strategic Implications for 2026

- AI tooling declarations will become standard contract annexes

- Contractors without governance discipline will be filtered out

- Rate premiums will favor “audit-safe” profiles

- Migration toward compliant delivery hubs will accelerate (see: https://techplustrends.com/bucharest-exodus-warsaw-dora-premium-300-euro/)

Why This Matters

This is not about banning AI.

It is about where responsibility settles when systems fail. DORA and the EU AI Act push accountability downward, while insurance coverage quietly retracts. Contractors who do not adapt will not be fined — they will simply lose access to regulated work.

What To Do Now

- Inventory all AI tools you use

- Ask clients which tools are approved

- Document AI usage decisions

- Review professional indemnity exclusions

- Treat AI as governed infrastructure, not a shortcut

“People Also Ask”

1. Is using AI as a contractor illegal in Germany or France?

Answer: No. Risk arises from unmanaged and undocumented use in regulated contracts.

2. Can BaFin fine individual freelancers directly?

Answer: In practice, enforcement flows through institutions and contracts, not direct fines.

3. Does DORA explicitly mention AI tools?

Answer: DORA covers ICT risk broadly; AI tools fall under scope when they affect systems or code.

4. Does the EU AI Act require labeling AI-generated code?

Answer: The Act emphasizes transparency and documentation, which increasingly translates into contractual disclosure.

5. Will professional indemnity insurance cover AI-assisted work?

Answer: Often only if AI use is declared and compliant with policy terms.

Final Takeaway

The Shadow AI liability trap is not hypothetical. It is already reshaping how contractors are assessed in regulated environments. The contractors who remain in the German and French financial sectors in 2026 will not be those who avoid AI, but those who use it within governance, documentation, and approved systems.

Sources (Official Government & EU Only)

European Commission — Digital Operational Resilience Act (DORA)

https://finance.ec.europa.eu/regulation-and-supervision/financial-services-legislation/digital-operational-resilience-act-dora_en

EUR-Lex — Regulation (EU) 2022/2554 (DORA)

https://eur-lex.europa.eu

European Commission — EU Artificial Intelligence Act

https://artificial-intelligence-act.eu

BaFin — ICT risk and DORA implementation

https://www.bafin.de

Author Bio

Saameer Go is a senior technology journalist and analyst covering enterprise software, AI platforms, infrastructure, and EU technology regulation. With over 15 years of experience analyzing how policy, labor markets, and system architecture intersect, he focuses on long-term structural risk rather than short-term hype.

Legal Disclaimer & Transparency Note

Disclaimer: This article is for informational and journalistic purposes only. It does not constitute legal, financial, or professional advice. While every effort has been made to ensure accuracy based on 2026 regulatory frameworks (DORA and the EU AI Act), laws and enforcement practices in Germany and France are subject to change. Readers should consult with a qualified legal professional or compliance officer regarding their specific B2B contracts and professional indemnity insurance coverage.

Transparency Note: At Tech Plus Trends, we believe in the responsible and transparent use of technology.

- Source Integrity: This analysis is based on primary research from official European Union and BaFin documentation.

- Editorial Standards: This article was researched and written by a human analyst. Our editorial process emphasizes “Information Gain” to provide unique insights not found in standard enterprise compliance reports.

- AI Disclosure: While we cover the impact of Artificial Intelligence on the B2B sector, Tech Plus Trends does not use “Shadow AI.” Any technical tools used in the research or production of this content are managed under our internal governance framework to ensure data privacy and accuracy.