AI data centers draw 20 MW to 1 GW of electricity per facility, with rack power densities up to 10x those of traditional facilities. This guide covers power consumption, training vs. inference costs, grid constraints, and how to plan your power strategy in 2026.

In 2025 alone, more than 10 gigawatts of new AI data center capacity were announced globally, according to industry infrastructure reports. That is rapid growth, but it is also creating a power bottleneck that utilities and governments are struggling to solve.

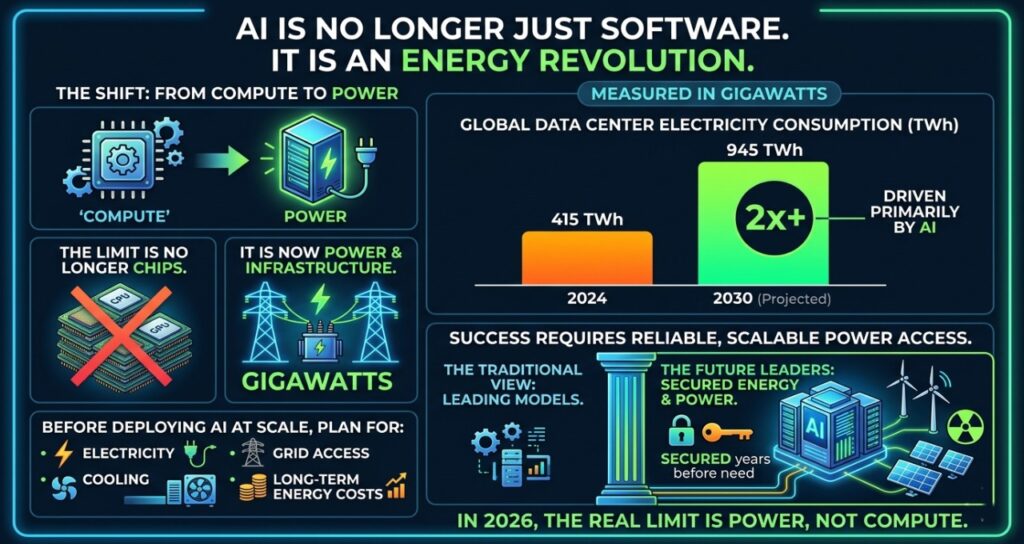

Global data center electricity consumption reached 415 TWh in 2024, representing 1.5% of total global electricity use, according to the International Energy Agency’s Energy and AI report (IEA, 2025). The IEA projects that figure will more than double to 945 TWh by 2030 — roughly equivalent to Japan’s entire annual electricity consumption today. AI is the primary driver.
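The two IEA figures above imply an annual growth rate, which is easy to check. The snippet below is a minimal sketch: the function name `implied_cagr` is illustrative, and it assumes smooth compounding between 2024 and 2030, which the IEA projection itself does not claim.

```python
def implied_cagr(start_twh: float, end_twh: float, years: int) -> float:
    """Constant annual growth rate that turns start_twh into end_twh."""
    return (end_twh / start_twh) ** (1 / years) - 1

# Figures quoted above: 415 TWh in 2024, 945 TWh projected for 2030.
growth = implied_cagr(415, 945, 2030 - 2024)
print(f"Implied growth: {growth:.1%} per year")
```

Under that smoothing assumption, the result is just under 15% per year.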

This guide explains how much electricity AI data centers really use, where that power goes, why grid access has become the defining constraint in AI infrastructure, and what decision-makers must plan for before deploying large-scale AI.

Core answer: Power requirements for AI data centers are significantly higher than traditional data centers because GPUs consume more energy, racks are denser, and AI workloads run continuously. A single large AI data center can use 100 MW to 1 GW of electricity, with most power going to compute and cooling systems, making power availability the biggest constraint in AI infrastructure expansion.

What are AI data centers — and why power is now the biggest problem

AI data centers are specialized facilities designed to run machine learning training and inference workloads using high-performance GPUs instead of traditional CPUs. The difference is physical: more heat, more electricity, and more cooling infrastructure.

Traditional enterprise data centers were designed for storage and web hosting: occasional, bursty workloads. AI data centers are designed for constant, intensive computation. A single NVIDIA H100 GPU draws 700 W of power. A server with 8 H100s draws 5.6 kW for the GPUs alone, before cooling, networking, or storage. Scale to a 50,000-GPU training cluster and the GPU power draw reaches about 35 MW, equivalent to a small city.
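The arithmetic behind those figures can be reproduced directly. This is a rough sketch using only the 700 W per-GPU figure quoted above; the helper name `gpu_power_w` is illustrative, and the result covers processors only, not cooling, networking, or storage.

```python
H100_TDP_W = 700  # per-GPU draw quoted above

def gpu_power_w(gpu_count: int, tdp_w: float = H100_TDP_W) -> float:
    """Processor-only power in watts; excludes cooling, networking, storage."""
    return gpu_count * tdp_w

node_kw = gpu_power_w(8) / 1_000             # 8 GPUs, in kW
cluster_mw = gpu_power_w(50_000) / 1_000_000  # 50,000 GPUs, in MW
print(f"8 GPUs: {node_kw:.1f} kW; 50,000 GPUs: {cluster_mw:.0f} MW")
```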

This is happening across every layer of the enterprise. Teams deploying AI agents for project management, internal assistants, and real-time decision tools are adding persistent compute workloads that run 24/7 — not occasional batch jobs. The cumulative power draw of enterprise AI adoption is as significant as the headline hyperscaler builds, just less visible.

This is why power availability, not chip availability, has become the primary constraint on AI infrastructure expansion.

AI infrastructure comparison: power perspective

| Infrastructure type | Typical rack density | Power draw | Best for | Key limitation |
| --- | --- | --- | --- | --- |
| Traditional data center | 5–10 kW per rack | Low | Web, storage | Not suitable for AI |
| Cloud AI cluster | 20–40 kW per rack | Medium | Model training | Expensive at scale |
| AI hyperscale facility | 40–120 kW per rack | Very high | Large AI models | Cooling complexity |
| AI gigafactory | 100 kW+ per rack | Extreme | National AI infrastructure | Requires grid-level power |

AI is increasing power density by 5–10x per rack compared to traditional data centers. This density increase is what drives the cooling complexity, grid demand, and infrastructure cost that define AI data center planning.

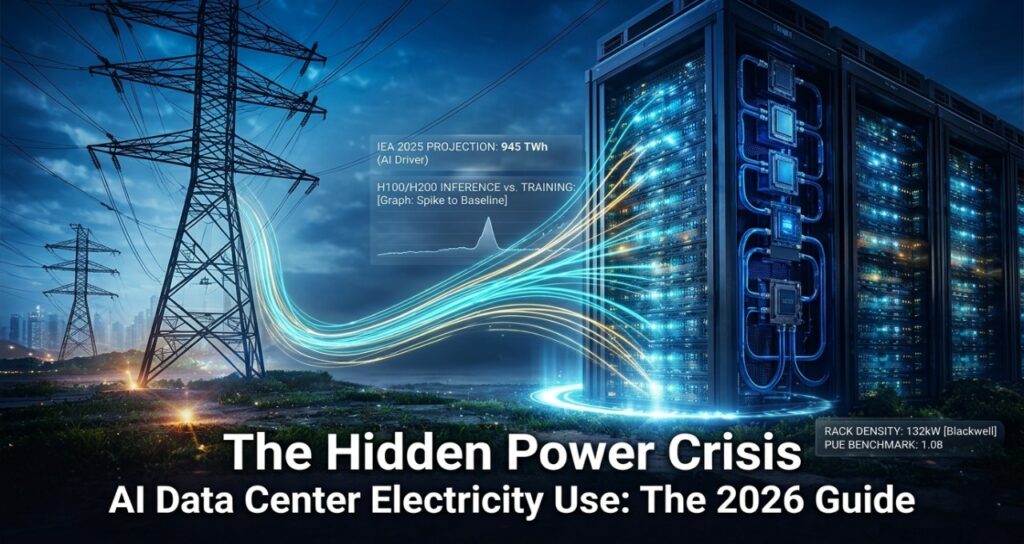

The AI power lifecycle: training vs. fine-tuning vs. inference

Most coverage treats AI power usage as a single number. That is incorrect. Electricity consumption in AI happens across a distinct lifecycle, and confusing training with inference leads to fundamentally wrong infrastructure decisions.

| AI stage | Power draw | Duration | Total energy impact |
| --- | --- | --- | --- |
| Data preparation | Low (10–50 MW) | Days to weeks | Low |
| Training (large model) | 25–50 MW peak | Weeks to months | Extremely high |
| Fine-tuning | 5–15 MW | Days | Medium |
| Inference (serving) | 0.3 Wh per query (energy per request) | Continuous, 24/7 | Very high over time |

Training a large AI model requires 25–50 MW of power during the training run itself, sustained over weeks or months. According to IEA analysis, AI-specific servers consumed an estimated 53–76 TWh globally in 2024 and are projected to grow to 165–326 TWh per year by 2028 as model training and inference both scale.

But inference is now the dominant driver. One of the fastest-growing inference loads in the enterprise is AI-powered enterprise search and RAG systems, which query live knowledge bases continuously rather than returning static results. Unlike traditional search, these systems generate a new LLM response for every query — meaning GPU time is consumed on every single search, at scale. Today, inference accounts for approximately 80–90% of total AI computing, and is expected to represent 75% of total AI energy demand by 2030 (IEA, 2025).

The key distinction for infrastructure planning:

- Training = power spike (high wattage, defined duration)

- Inference = power baseline (always on, scales with user demand)

Infrastructure is built for the baseline, not the spike. This means inference economics — not training economics — determine long-term power costs for most organizations.
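To see why the baseline can outweigh the spike, compare the energy of one training run with a year of serving. This is a hedged sketch: the 0.3 Wh per query figure comes from the lifecycle table, while the 35 MW average, the 90-day run, and the one billion queries per day are hypothetical inputs chosen only for illustration.

```python
WH_PER_QUERY = 0.3  # from the lifecycle table above

def training_energy_mwh(avg_power_mw: float, days: float) -> float:
    """Energy of a training run held at a constant average power."""
    return avg_power_mw * 24 * days

def inference_energy_mwh(queries_per_day: float, days: float) -> float:
    """Cumulative serving energy at a fixed per-query cost."""
    return queries_per_day * days * WH_PER_QUERY / 1_000_000  # Wh -> MWh

# Hypothetical inputs: 35 MW for 90 days, then 1 billion queries a day for a year.
train = training_energy_mwh(35, 90)
serve = inference_energy_mwh(1e9, 365)
print(f"training: {train:,.0f} MWh, one year of inference: {serve:,.0f} MWh")
```

With these assumed inputs, a single year of serving uses more energy than the training run, and the serving cost repeats every year the model stays in production.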

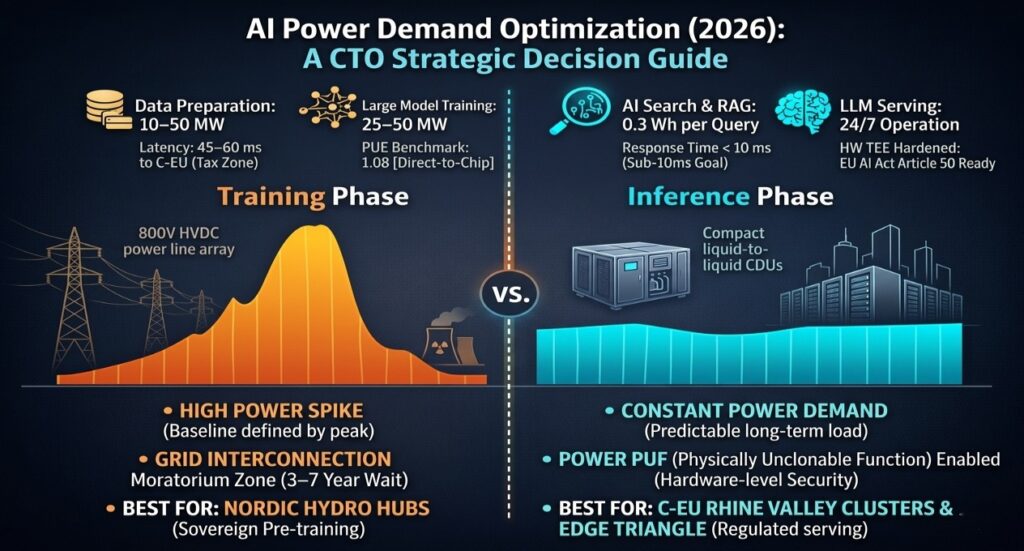

How much power does an AI data center use?

Here are typical power consumption figures for 2026-era AI facilities, with real-world equivalents for context:

| Data center type | Power consumption | Equivalent homes powered |
| --- | --- | --- |
| Small AI facility | 20 MW | ~16,000 homes |
| Medium AI facility | 100 MW | ~80,000 homes |
| Large hyperscale AI campus | 500 MW | ~400,000 homes |
| AI gigafactory (planned) | 1 GW | ~800,000 homes |

Note: In Virginia, data centers already consume nearly 25% of the state’s total electricity supply (Virginia Business, 2025). A single large AI campus can reach 1 GW — roughly equal to the power output of one nuclear reactor.
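The home equivalents in the table follow a single ratio of about 800 homes per MW, which works out to an average draw of 1.25 kW per home. The sketch below applies that ratio; `HOMES_PER_MW` is inferred from the table rather than taken from a cited source, so treat it as an assumption.

```python
HOMES_PER_MW = 800  # ratio implied by the table above (20 MW ~ 16,000 homes)

def equivalent_homes(facility_mw: float) -> int:
    """Approximate number of homes with the same average draw as the facility."""
    return round(facility_mw * HOMES_PER_MW)

for mw in (20, 100, 500, 1_000):
    print(f"{mw:>5} MW ~ {equivalent_homes(mw):,} homes")
```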

Where does the power go?

| System | Power share | Notes |
| --- | --- | --- |
| GPUs / compute | 55–65% | Dominant load; scales with GPU density |
| Cooling infrastructure | 30–40% | Higher for older or air-cooled facilities |
| Storage & networking | ~5–10% | Growing with NVMe and high-speed fabric |
| Lighting & misc | ~3–5% | Minimal in modern hyperscale builds |

In large AI facilities, cooling alone consumes as much electricity as a small city. A less visible but growing contributor to baseline power draw is the deployment of AI knowledge management systems across enterprise environments. These systems run continuous indexing, retrieval, and generation workloads rather than serving occasional queries, so they maintain a persistent GPU footprint that compounds across thousands of enterprise deployments.

Power Usage Effectiveness (PUE), the ratio of total facility power to IT equipment power, remains the critical efficiency metric: best-in-class hyperscale facilities achieve 1.1–1.2, while the global average sits around 1.5–1.6.
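Because PUE is total facility power divided by IT power, the PUE figures above translate directly into overhead. The following is a minimal sketch: the 100 MW IT load is a hypothetical input, and the two PUE values are midpoints of the ranges quoted above.

```python
def facility_power_mw(it_load_mw: float, pue: float) -> float:
    """Total facility draw given IT load and PUE (PUE = total / IT)."""
    if pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    return it_load_mw * pue

it_load = 100.0  # hypothetical IT load in MW
for label, pue in (("best-in-class", 1.15), ("global average", 1.55)):
    total = facility_power_mw(it_load, pue)
    print(f"{label}: {total:.1f} MW total, {total - it_load:.1f} MW overhead")
```

For the same IT load, the gap between the two cases is roughly 40 MW of extra grid capacity, which is why PUE matters so much when power is the constraint.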

Why AI uses so much electricity: GPU vs. CPU

| Processor type | Typical TDP | Context |
| --- | --- | --- |
| Standard server CPU | 150–250 W | Traditional data center workload |
| AI GPU (e.g. H100, H200) | 700–1,200 W | Training and inference |
| AI GPU rack (multiple 8-GPU servers) | 40–120 kW | Before cooling and networking |
| AI training cluster (50K GPUs) | 30–50 MW | Full model training run |

More GPU density means more heat per square metre. More heat requires more cooling. More cooling means more electricity. This is why the data center industry is shifting from air cooling — which supports up to 20 kW per rack — to advanced cooling methods:

- Liquid cooling: supports up to 100 kW per rack; pipes chilled water directly to server components

- Immersion cooling: submerges servers in dielectric fluid; supports 200+ kW per rack; reduces PUE to near 1.0

- Direct-to-chip cooling: targets heat removal at the GPU die itself; deployed by Google, Microsoft, and AWS in new builds
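The per-rack ceilings above can be read as a simple lookup. The sketch below is illustrative only: the function name is hypothetical, the thresholds are the ones quoted in this section, and direct-to-chip is left out because no ceiling is quoted for it. Real cooling selection also depends on climate, water availability, and facility design.

```python
def cooling_for_rack(rack_kw: float) -> str:
    """Pick the least intensive method whose quoted ceiling covers the rack."""
    if rack_kw <= 20:    # air cooling: up to 20 kW per rack
        return "air"
    if rack_kw <= 100:   # liquid cooling: up to 100 kW per rack
        return "liquid"
    return "immersion"   # immersion: 200+ kW per rack

for kw in (8, 60, 150):
    print(f"{kw} kW rack -> {cooling_for_rack(kw)}")
```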

The power demand curve is also being shaped by how enterprises schedule AI workloads. AI scheduling agents for remote teams and async AI tools for global operations distribute compute jobs across time zones — which sounds efficient, but in practice means AI data centers must maintain near-full GPU utilization across all 24 hours rather than peaking during business hours. This “always-on” demand profile is a key reason electricity demand is rising even as individual model efficiency improves.

The power supply crisis: grid constraints and interconnection queues

The biggest constraint on new AI data centers is not land, capital, or chips. It is electricity access. Specifically, it is the interconnection queue — the regulatory process for connecting a new facility to the power grid.

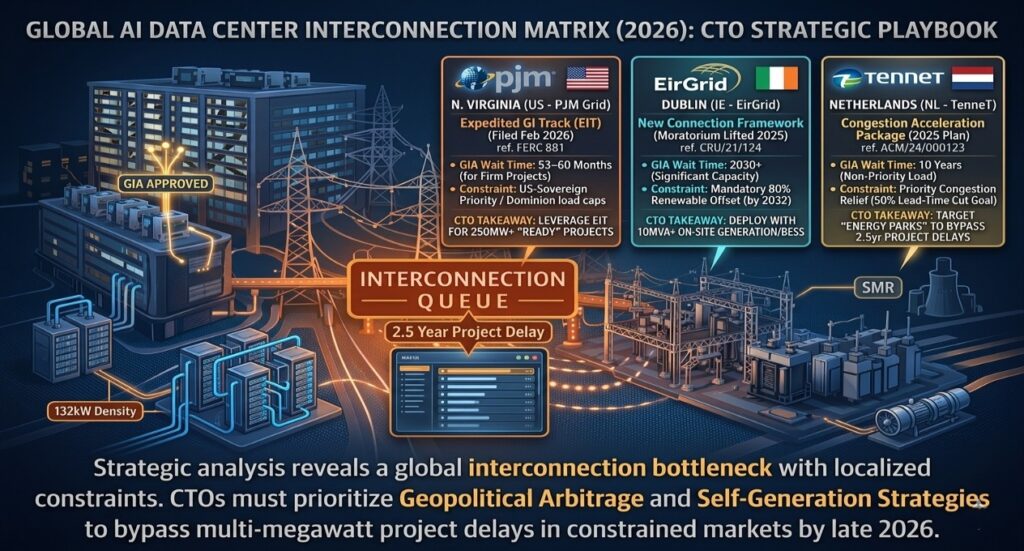

Globally, approximately 20% of planned data center projects face significant delays due to grid congestion and interconnection backlogs, according to the IEA’s Energy and AI report (2025). In advanced economies, this figure is higher.

| Region | Typical wait time | Key constraint |
| --- | --- | --- |
| Northern Virginia (US) | Up to 7 years | Dominion Energy capacity exhaustion; 25%+ of VA electricity consumed |
| PJM (US East/Midwest) | 5–8+ years avg (2025) | Queue stretched from <2 yrs (2008) to 8+ yrs; 170,000 MW in study |
| Dublin, Ireland | Moratorium until ~2028 | Data centers hit 25% of national electricity; EirGrid froze connections |
| Netherlands | Up to 10 years | Sixfold increase in congestion costs 2019–2022 |
| Texas (ERCOT) | 18–24 months | Fastest major US grid; new PUCT rules effective 2025 |
| Germany / Frankfurt | 5–7 years | Grid congestion costs tripled 2019–2022 |

The timeline from PJM interconnection application to commercial operation has risen from under two years in 2008 to over eight years in 2025, according to FERC analysis. For AI infrastructure operators racing to deploy capacity, waiting nearly a decade for grid connection creates an untenable business reality.

The PJM grid alone projects peak demand will grow by 32 GW between 2024 and 2030, with virtually all of that growth driven by data centers. PJM consumers absorbed an extra $9.4 billion in electricity bills during summer 2025 as new data center loads exceeded available supply (FERC/Introl, 2025).

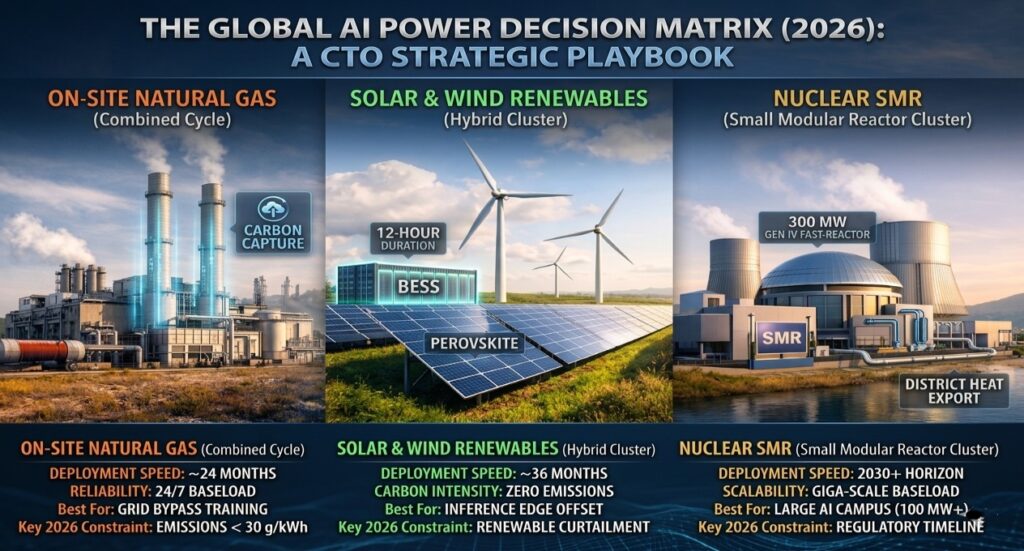

Alternative power sources for AI data centers

Unable to wait years for grid connections, the largest AI infrastructure operators are pursuing behind-the-meter and off-grid power strategies. Here is how the main options compare:

| Power source | Deployment time | Est. cost | Reliability | Key tradeoff |
| --- | --- | --- | --- | --- |
| Grid connection | 5–8+ years (major markets) | Lowest long-term OpEx | High | Queue delays make it unavailable for most new builds |
| Natural gas (on-site) | 18–24 months | $4–6/W installed | Very high | Carbon emissions; regulatory pressure growing |
| Solar + battery (BESS) | 24–36 months (100 MW+) | $50–80/MWh PPA | Moderate | Good for offsets, not baseload |
| Wind PPA | 36–48 months | $30–50/MWh | Moderate | Location-dependent; curtailment risk |
| Nuclear PPA (existing) | 1–3 yrs (contractual) | $80–120/MWh | Extremely high | Limited available capacity |
| Nuclear SMR (new build) | 2030–2035 earliest | ~$58/MWh with IRA credits ($89/MWh without) | Extremely high | First commercial units not yet online |
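The per-MWh prices in the table become easier to compare as an annual bill. This is a rough sketch, not a procurement model: it assumes a hypothetical constant 100 MW load, uses only the $/MWh ranges quoted above, and ignores intermittency, so the solar and wind rows overstate how much of a 24/7 load those sources could cover alone.

```python
HOURS_PER_YEAR = 8_760

# $/MWh ranges copied from the table above; gas is omitted because the table
# quotes it in $/W installed rather than $/MWh.
PPA_PRICE_USD_PER_MWH = {
    "solar + battery": (50, 80),
    "wind": (30, 50),
    "nuclear PPA (existing)": (80, 120),
}

def annual_cost_musd(load_mw: float, price_usd_per_mwh: float,
                     load_factor: float = 1.0) -> float:
    """Annual energy cost in millions of USD for a constant load."""
    energy_mwh = load_mw * HOURS_PER_YEAR * load_factor
    return energy_mwh * price_usd_per_mwh / 1e6

for source, (low, high) in PPA_PRICE_USD_PER_MWH.items():
    print(f"{source}: ${annual_cost_musd(100, low):.1f}M "
          f"to ${annual_cost_musd(100, high):.1f}M per year")
```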

The nuclear bet: Microsoft, Google, Amazon, and Meta

Big tech companies have committed over $10 billion to nuclear partnerships, with 22 GW of SMR projects in development globally as of late 2025 (Introl, 2025). The strategic logic is straightforward: nuclear provides 24/7 carbon-free power at scale — the only energy source that matches AI data center load profiles without grid dependence.

- Microsoft: 20-year, $16B power purchase agreement with Constellation Energy to restart Three Mile Island Unit 1 (837 MW) by 2028

- Google / Kairos Power: Agreement to purchase 500 MW from a fleet of SMRs by 2035; Hermes 2 plant targeting 50 MW from 2030

- Amazon: Investment in X-energy SMR startup; agreement with Energy Northwest for 320 MW (expandable to 960 MW)

- Meta: RFP for 1–4 GW of new nuclear capacity targeting early 2030s

- Oracle: Plans for a gigawatt-scale data center powered by three SMRs

The first commercial SMR-powered data centers are expected online around 2030. With IRA production tax credits reducing levelized SMR costs from $89/MWh to approximately $58/MWh, the economics are becoming viable — though timeline risk remains significant.

Policy and regulatory landscape (2025–2026)

The power crisis has triggered regulatory responses at every level. Decision-makers deploying AI infrastructure must understand the policy environment, not just the technical one.

United States

- FERC rulemaking docket RM26-4 (2025): Consistent interconnection standards for loads over 20 MW, directly targeting data centers

- FERC PJM colocation ruling (Dec 2025): Rules for data center colocation at power plants with faster connection paths

- Texas Senate Bill 6 (June 2025): Allows ERCOT to temporarily disconnect data centers over 75 MW during grid emergencies; operators share grid upgrade costs

- West Virginia microgrid districts: Legislation pairing data centers with dedicated generation, shielding other ratepayers

Europe and Asia

- Ireland: EirGrid began conditional grid-connection framework in 2025 — reopening access with grid-support requirements

- Netherlands: Average load queue wait reaches 10 years in key markets; on-site backup generation now required for large loads

- EU AI Act: Energy consumption disclosure requirements being phased in through 2026

- India: Rapid expansion in Mumbai, Chennai, and Hyderabad straining regional grids; national data center policy under development

For regulated industries, the policy picture is further complicated by compliance requirements. Enterprises deploying SOC 2-compliant AI productivity tools must account for the additional infrastructure overhead these systems require: encryption processing, audit logging, access control, and data residency enforcement all add compute load on top of the base AI workload. In regulated sectors such as finance, healthcare, and government, this compliance layer can add 15–25% to baseline GPU utilization, compounding the power demand that data center operators must plan for.

How to choose your AI data center power strategy

Choose grid-connected data centers if:

- You are a large enterprise with a 5+ year infrastructure timeline

- You need the highest reliability and regulatory compliance

- You operate in a region with available grid capacity (Texas, Midwest, Scandinavia)

Choose behind-the-meter power if:

- You are building large AI infrastructure (100 MW+) and cannot wait for grid queues

- You need predictable long-term power cost and are willing to accept capex for on-site generation

- You are in a region with a moratorium or multi-year queue (Northern Virginia, Dublin, Netherlands)

Plan for nuclear or long-term PPAs if:

- You are a hyperscaler with 10-year infrastructure roadmaps

- You need baseload, carbon-free power at gigawatt scale

- You are willing to accept 2030+ timelines for SMR deployment

Avoid building large AI infrastructure if:

- You cannot secure long-term power contracts before committing capital

- You operate in a region where grid upgrade costs will be passed through to your rate structure

- Your timeline does not accommodate 5–8 year interconnection waits in constrained markets
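The four checklists above can be condensed into a small decision function. This is a simplified sketch of the guide's criteria, not a planning tool: the `Project` fields, the function name, and the order of the checks are illustrative assumptions, and several criteria (reliability needs, regional rate structures) are left out.

```python
from dataclasses import dataclass

@dataclass
class Project:
    load_mw: float
    timeline_years: float            # how long the organization can wait for power
    grid_wait_years: float           # expected interconnection wait in the region
    can_secure_power_contract: bool  # long-term power secured before committing capital
    needs_carbon_free_baseload: bool = False

def recommend_strategy(p: Project) -> str:
    """Map a project onto one of the four options described above."""
    if not p.can_secure_power_contract:
        return "avoid"
    # Hyperscaler case: 10-year roadmap, gigawatt scale, carbon-free baseload.
    if p.timeline_years >= 10 and p.load_mw >= 1_000 and p.needs_carbon_free_baseload:
        return "nuclear or long-term PPA"
    # The grid works when the interconnection wait fits inside the timeline.
    if p.grid_wait_years <= p.timeline_years:
        return "grid-connected"
    # Large builds that cannot wait for the queue go behind the meter.
    if p.load_mw >= 100:
        return "behind-the-meter"
    return "avoid"

print(recommend_strategy(Project(200, 3, 7, True)))  # large build, long queue
```

The example call describes a 200 MW build with a three-year timeline in a market with a seven-year queue, which lands on behind-the-meter power.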

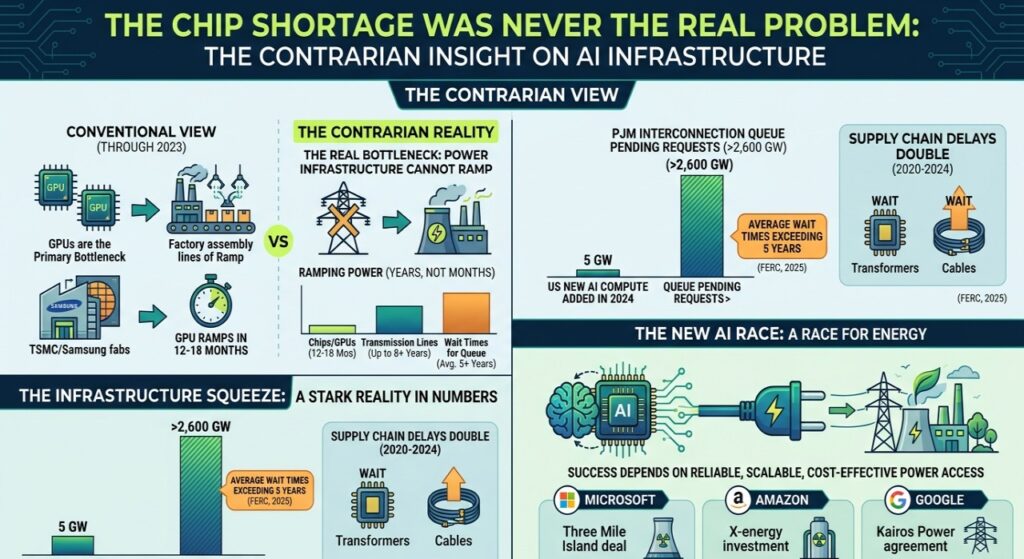

The contrarian insight: the chip shortage was never the real problem

The conventional view through 2023 was that GPUs were the primary bottleneck in AI infrastructure. But chips can be manufactured at scale: TSMC and Samsung can ramp GPU production within 12–18 months of demand signals.

Power infrastructure cannot. The US added roughly 5 GW of new AI compute capacity in 2024 while US interconnection queues held over 2,600 GW in pending requests, with average wait times exceeding five years (FERC, 2025). New transmission lines take up to eight years to build in advanced economies. Transformer and cable lead times doubled between 2020 and 2024.

The AI race is now an energy race. The companies that succeed in AI over the next decade will not just be those with the best models. They will be those with the most reliable, scalable, and cost-effective access to power. Microsoft’s Three Mile Island deal, Amazon’s X-energy investment, and Google’s Kairos Power agreement are not energy policy decisions. They are competitive infrastructure decisions.

FAQ — power requirements for AI data centers

1. How much power does an AI data center use?

Ans: AI data centers typically use between 20 MW and 1 GW of electricity depending on size. Small AI facilities may use around 20 MW (equivalent to 16,000 homes). Large hyperscale AI campuses exceed 500 MW. AI gigafactories under development are expected to reach 1 GW of power consumption, similar to the output of a nuclear reactor.

2. Why do AI data centers use more power than traditional data centers?

Ans: AI data centers use significantly more power because they rely on GPUs rather than CPUs. A single AI GPU draws 700–1,200 W (the NVIDIA H100 is rated at 700 W), compared to 150–250 W for a server CPU. AI workloads also run continuously, especially inference systems, driving 24/7 electricity demand. High-density GPU racks generate extreme heat, requiring advanced cooling that consumes 30–40% of total facility electricity.

3. What is the biggest power challenge for AI infrastructure?

Ans: Grid access and interconnection queues. In major markets like Northern Virginia, PJM, and Dublin, Ireland, wait times for new grid connections now reach 5–10 years. The IEA estimates that 20% of planned data center projects globally face significant delays due to grid congestion. Even companies with capital and hardware cannot deploy AI infrastructure without secured power commitments.

4. How much electricity does AI training use?

Ans: Training a large AI model requires 25–50 MW of sustained power over weeks or months. However, inference (running the model for users) now accounts for 80–90% of AI computing and will represent approximately 75% of total AI energy demand by 2030, making it the dominant long-term electricity cost (IEA, 2025).

5. What percentage of data center power is used for cooling?

Ans: Cooling typically consumes 30–40% of total data center electricity. High-density AI GPU racks generate extreme heat, requiring liquid, direct-to-chip, or immersion cooling at scale. Best-in-class hyperscale facilities achieve PUE of 1.1–1.2. The global average remains around 1.5–1.6.

6. Will AI cause electricity shortages?

Ans: AI is significantly increasing electricity demand in regions where data centers cluster. In the US, data centers are on course to account for nearly half of all electricity demand growth between now and 2030, according to IEA projections. In Virginia, data centers already consume approximately 25% of state electricity. Without major grid investment, AI infrastructure growth could strain reliability in concentrated data center markets by the late 2020s.

7. What is behind-the-meter power for data centers?

Ans: Behind-the-meter power refers to on-site electricity generation that bypasses the utility grid, such as natural gas turbines, on-site solar, or off-grid nuclear. It is growing rapidly because interconnection queue wait times in major markets exceed five years. On-site gas generation can be deployed in 18–24 months, making it the fastest path to power for new AI campuses in constrained markets.

Conclusion

AI is not just a software revolution. It is an energy infrastructure revolution measured in gigawatts.

Global data center electricity consumption reached 415 TWh in 2024 and is projected to reach 945 TWh by 2030 — more than doubling in six years, driven primarily by AI. The companies that lead AI in the next decade will not simply be those with the most capable models. They will be those with the most reliable, scalable access to power — secured years before the infrastructure is needed.

Before building or deploying AI at scale, organizations must plan for electricity, cooling, grid access, and long-term energy costs. In 2026, the real limit on AI is no longer compute. It is power.

About the author: Saameer is a technology journalist and infrastructure analyst covering artificial intelligence, cloud infrastructure, and digital policy. His work focuses on the gap between AI innovation and real-world infrastructure constraints, particularly in energy, compute, and regulation across US and European technology markets.

Key sources cited

- International Energy Agency (IEA): Energy and AI report, April 2025 — iea.org/reports/energy-and-ai

- FERC: Interconnection queue data and RM26-4 rulemaking, 2025 — ferc.gov

- Introl: Nuclear power for AI data centers report, December 2025 — introl.com/blog

- Yale Clean Energy Forum: Data Centers Want Power. Regulators Say Wait., November 2025

- Latitude Media: Global grid congestion risks 20% of data center projects, April 2025

- Virginia Business: Virginia eyes nuclear to power booming data centers, September 2025

- McKinsey: AI data center demand forecast, 2025

AI Transparency & Editorial Disclosure

Editorial Methodology & Data Synthesis

The analysis in “Power Requirements for AI Data Centers: The Complete 2026 Guide” was developed by Saameer and the TechPlus Trends research team. We employed a “Human-in-the-Loop” (HITL) framework, utilizing advanced AI systems to aggregate and cross-reference multi-source infrastructure data, including IEA 2025 projections, FERC RM26-4 regulatory filings, and PJM interconnection queue statistics. All strategic conclusions, such as the “Inference Baseline vs. Training Spike” model and the “Energy-over-Chips” thesis, are original human editorial insights.

Regulatory & Energy Risk Disclaimer

The data provided regarding Small Modular Reactors (SMRs), 800V HVDC power paths, and Behind-the-Meter (BTM) generation represents emerging 2026 industry standards and announced corporate roadmaps. This content is for strategic and informational purposes only. It does not constitute financial, legal, or electrical engineering counsel.

Infrastructure Planning Note

TechPlus Trends strongly advises CTOs and infrastructure leads to perform site-specific feasibility studies. Grid capacity, Power Purchase Agreement (PPA) pricing, and Interconnection Study timelines vary significantly by utility territory (e.g., Dominion vs. ERCOT). Always consult with local grid operators (ENTSO-E, PJM) and certified energy consultants before committing capital to multi-megawatt AI deployments.