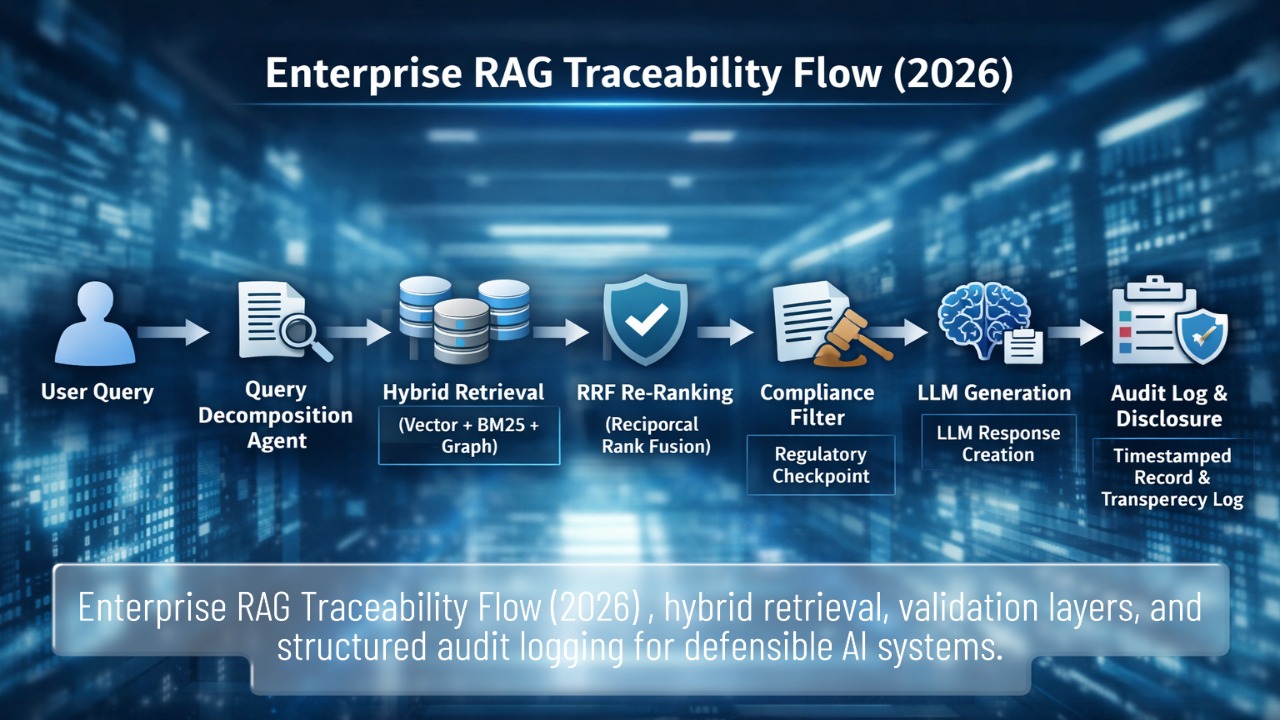

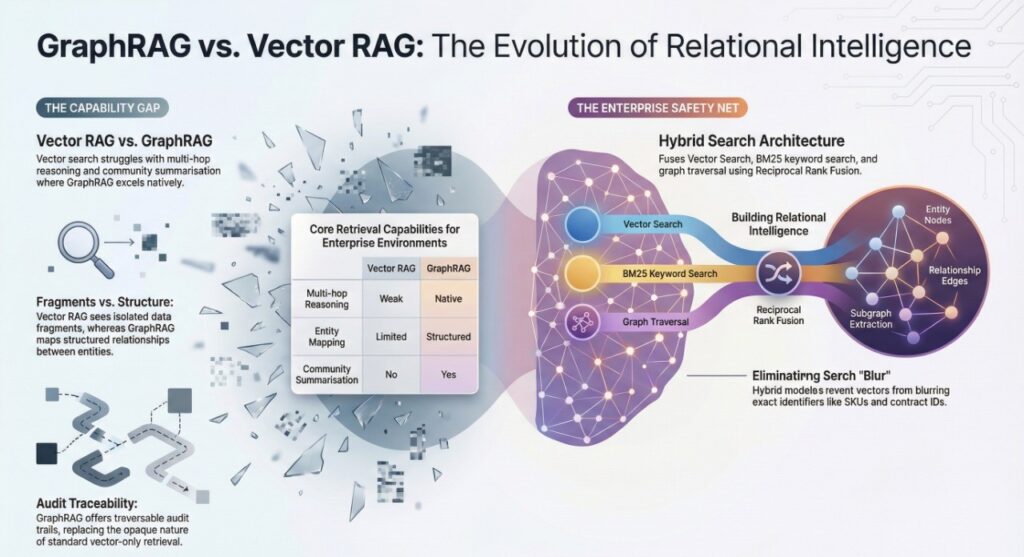

In 2026, Retrieval-Augmented Generation (RAG) is no longer a prototype architecture of “vector search + LLM.” In regulated enterprises, RAG systems now influence audit conclusions, vendor risk scoring, internal policy interpretation, and customer-facing advisory responses.

That shift turns RAG from an engineering pattern into governed decision infrastructure. Shadow vector silos, hallucination liability, EU AI Act transparency obligations, and uncontrolled token burn have left poorly implemented RAG systems financially and legally exposed. The 2026 enterprise mandate is clear: RAG must be defensible, traceable, centrally governed, and economically rational.

The 2026 Enterprise RAG Exposure Snapshot in 7 Structural Decisions

| Layer | 2024 Default | 2026 Enterprise Standard | Exposure if Ignored |
|---|---|---|---|
| Retrieval | Vector-only similarity | Hybrid + GraphRAG | Relationship blind spots |
| Architecture | Single pipeline | Multi-agent orchestration | Unverified outputs |
| Evaluation | Manual testing | Ragas + DeepEval thresholds | Hallucination risk |
| Governance | Departmental silos | Central vector registry | Shadow RAG sprawl |
| Logging | Minimal traces | Retrieval + reasoning logs | NIS2 liability exposure |
| Cost Model | Token-based API | Local inference ROI model | Budget volatility |
| Transparency | Optional | AI Act disclosure logic | Regulatory breach |

In 2026, RAG design choices are governance decisions.

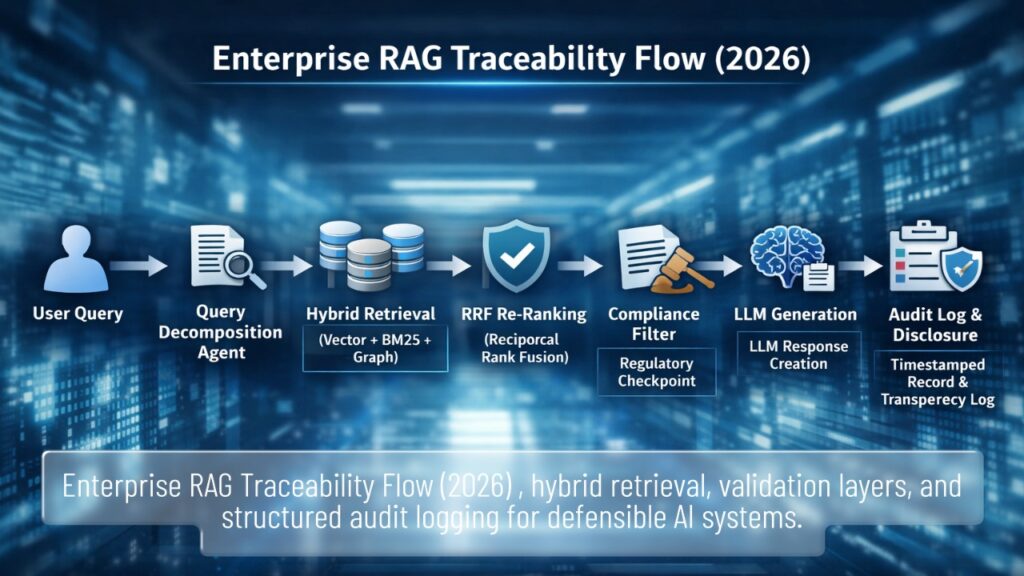

Why “Vector + LLM” Is No Longer Sufficient for Regulated Enterprises

Classic RAG architecture:

User Query → Vector Search → LLM → Answer

This fails when questions require:

- Cross-document aggregation

- Entity relationship reasoning

- Time-based risk comparison

- Compliance traceability

Example enterprise query:

“Which five vendors accumulated the highest compliance risk across the last three audit cycles?”

Vector search retrieves similar chunks.

It does not compute relationships across audits, timeframes, and vendor entities.

In regulated environments, the inability to reconstruct reasoning paths can increase executive exposure, particularly under the accountability structures discussed in NIS2 CISO personal liability in Germany (2026).

RAG in 2026 must support defensibility, not just relevance.

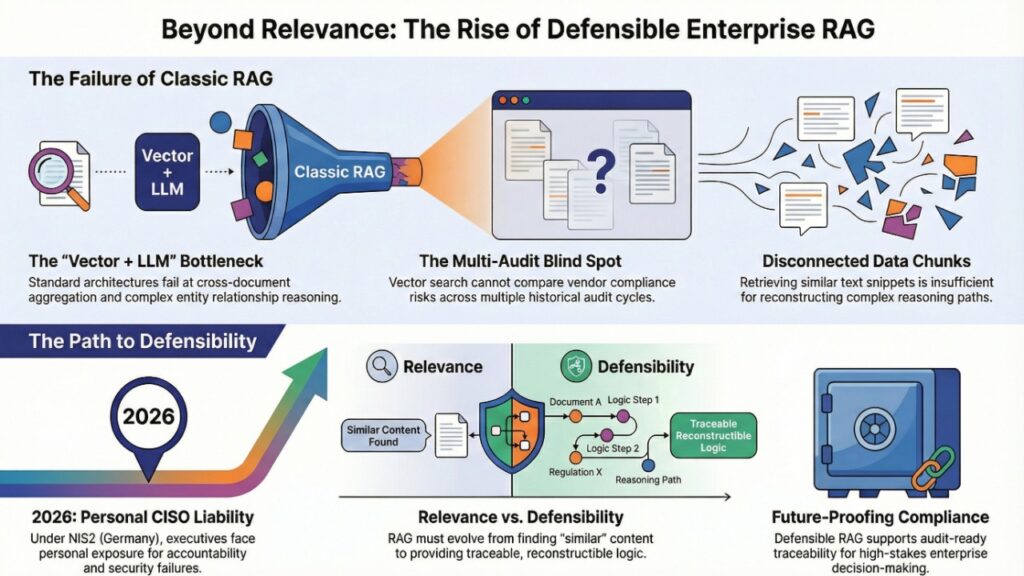

Architecture of Choice: GraphRAG & Hybrid Search

Why Vector RAG Breaks in Enterprise Context

Vector search excels at semantic similarity.

It struggles with relational intelligence.

| Capability | Vector RAG | GraphRAG |
|---|---|---|
| Similarity Matching | Strong | Moderate |
| Multi-hop Reasoning | Weak | Native |
| Entity Mapping | Limited | Structured |
| Community Summarization | No | Yes |
| Audit Traceability | Opaque | Traversable |

A GraphRAG pipeline builds and uses:

- Entity nodes (vendors, policies, controls)

- Relationship edges (risk score, timeframe, ownership)

- Subgraph extraction

- Global summarization

This enables queries such as:

“What recurring control weaknesses appear across all 2025 financial audits?”

Vector RAG sees fragments.

GraphRAG sees structure.
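To make the contrast concrete, here is a minimal, stdlib-only Python sketch of the structured side of GraphRAG, assuming entity and relationship extraction has already happened. The `FINDINGS` triples, vendor names, and helper functions are hypothetical; a real GraphRAG pipeline would typically use an LLM to extract entities, store them in a graph database, and add community summaries on top. The point is that once vendors, audit cycles, and controls are nodes and edges, the vendor-risk question posed earlier and a recurring-weakness question become aggregations over edges rather than similarity lookups.

```python
from collections import defaultdict

# Hypothetical (vendor, audit_cycle, control, risk_score) triples, standing in for
# entities and relationships an extraction step would pull from audit reports.
FINDINGS = [
    ("Acme", 2023, "access-control", 6),
    ("Acme", 2024, "access-control", 7),
    ("Acme", 2025, "logging", 5),
    ("Birch", 2024, "logging", 4),
    ("Birch", 2025, "logging", 6),
    ("Cobalt", 2022, "encryption", 9),
    ("Cobalt", 2025, "encryption", 3),
]


def build_graph(findings):
    """Adjacency list: vendor node -> outgoing 'finding' edges with attributes."""
    graph = defaultdict(list)
    for vendor, cycle, control, risk in findings:
        graph[vendor].append({"cycle": cycle, "control": control, "risk": risk})
    return graph


def top_vendors_by_risk(graph, cycles, n=5):
    """Sum risk over edges in the given audit cycles, then rank vendors."""
    totals = {}
    for vendor, edges in graph.items():
        in_scope = [e["risk"] for e in edges if e["cycle"] in cycles]
        if in_scope:
            totals[vendor] = sum(in_scope)
    return sorted(totals.items(), key=lambda kv: (-kv[1], kv[0]))[:n]


def recurring_weaknesses(graph, cycles, min_cycles=2):
    """Controls with findings in at least `min_cycles` distinct audit cycles."""
    cycles_per_control = defaultdict(set)
    for edges in graph.values():
        for e in edges:
            if e["cycle"] in cycles:
                cycles_per_control[e["control"]].add(e["cycle"])
    return sorted(c for c, seen in cycles_per_control.items() if len(seen) >= min_cycles)


graph = build_graph(FINDINGS)
last_three = {2023, 2024, 2025}
print(top_vendors_by_risk(graph, last_three))   # [('Acme', 18), ('Birch', 10), ('Cobalt', 3)]
print(recurring_weaknesses(graph, last_three))  # ['access-control', 'logging']
```

Neither answer can be assembled reliably from individually similar chunks, because each one requires filtering and counting across documents.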

Without structured retrieval, organizations risk the type of fragmented AI oversight costs modeled in the Shadow AI audit fees 2026 pricing matrix.

Relationship-blind RAG increases audit friction.

In 2026, hybrid search is the enterprise safety net. It combines vector search (semantic similarity), BM25 keyword search for exact identifiers (SKUs, policy numbers, contract IDs), and graph traversal, with the ranked results often fused using Reciprocal Rank Fusion (RRF). This prevents vector similarity from “blurring” exact-match compliance references that regulators expect to be precise.
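The fusion step can be sketched in a few lines. This is a minimal Python illustration of Reciprocal Rank Fusion, assuming each retriever returns an ordered list of document IDs; the IDs and the three result lists are hypothetical. Because RRF uses only rank positions, it avoids having to normalize cosine similarities, BM25 scores, and graph hop counts onto a common scale.

```python
from collections import defaultdict


def reciprocal_rank_fusion(ranked_lists, k=60):
    """Fuse ranked lists of document IDs with Reciprocal Rank Fusion.

    Each document scores sum(1 / (k + rank)) over every list it appears in
    (rank is 1-based). k = 60 is the commonly used default from the original
    RRF paper.
    """
    scores = defaultdict(float)
    for ranking in ranked_lists:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Highest fused score first; ties broken by document ID for determinism.
    return sorted(scores.items(), key=lambda item: (-item[1], item[0]))


# Hypothetical top results from three retrievers for the same query.
vector_hits = ["doc_a", "doc_b", "doc_c"]   # semantic similarity
bm25_hits = ["contract_4711", "doc_c"]      # exact identifier match
graph_hits = ["contract_4711", "doc_b"]     # graph traversal

fused = reciprocal_rank_fusion([vector_hits, bm25_hits, graph_hits])
print([doc_id for doc_id, _ in fused])  # ['contract_4711', 'doc_b', 'doc_c', 'doc_a']
```

Here `contract_4711` never appears in the vector results, yet it ranks first because the keyword and graph retrievers both place it at the top, which is the behavior the safety net is meant to provide for exact identifiers.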

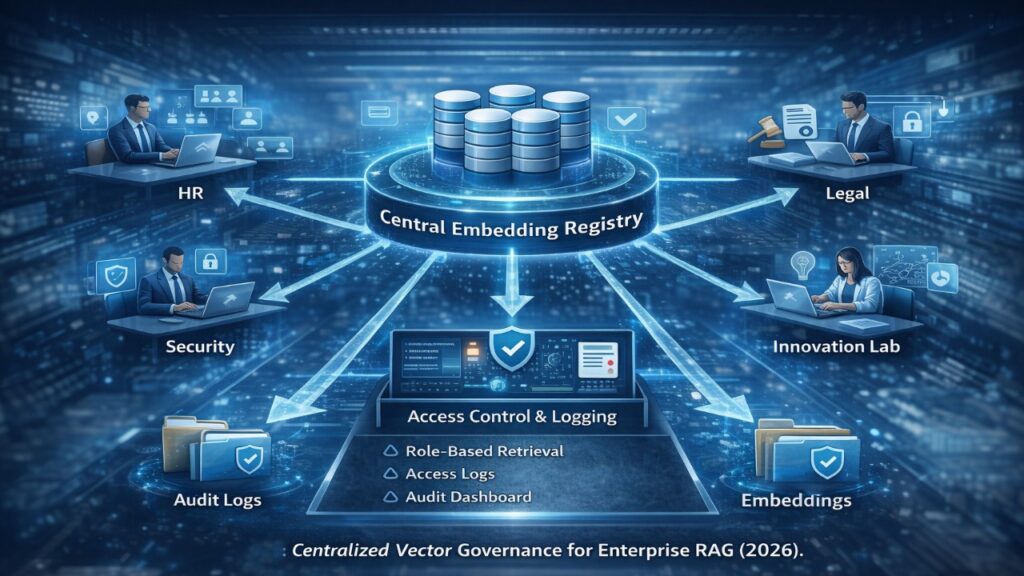

The Shadow RAG Crisis: Centralized Vector Governance in 2026

Many enterprises now operate:

- HR RAG

- Legal RAG

- Security RAG

- Innovation lab RAG

Each maintains its own embeddings, chunking logic, and metadata standards.

This mirrors the fragmentation patterns described in the Shadow AI audit fees 2026 pricing matrix, where uncoordinated AI deployments expand compliance exposure.

Enterprise best practice now requires:

- Central embedding registry

- Unified metadata taxonomy

- Role-based retrieval filtering

- Vector tenancy controls

- Retention policy for retrieval logs
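As a rough illustration of how a central embedding registry and role-based retrieval filtering fit together, the Python sketch below pre-filters candidate chunks before any similarity search runs. The registry schema, the `name@version` tag format, department names, and numeric clearance levels are all assumptions made for this example; production systems would usually push these filters down into the vector database's metadata filtering rather than filter in application code.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class EmbeddingModel:
    """One approved embedding configuration in the central registry."""
    name: str
    version: str
    dimensions: int

    @property
    def tag(self) -> str:
        return f"{self.name}@{self.version}"


@dataclass
class Chunk:
    doc_id: str
    department: str
    clearance: int       # minimum clearance level required to read this chunk
    embedding_tag: str   # "name@version" recorded at indexing time


@dataclass
class EmbeddingRegistry:
    approved: dict = field(default_factory=dict)

    def register(self, model: EmbeddingModel) -> None:
        self.approved[model.tag] = model

    def is_approved(self, tag: str) -> bool:
        return tag in self.approved


def allowed_chunks(chunks, registry, user_departments, user_clearance):
    """Pre-filter the candidate set before similarity search.

    A chunk survives only if it was embedded with a registered model and the
    user's department and clearance permit access.
    """
    return [
        c for c in chunks
        if registry.is_approved(c.embedding_tag)
        and c.department in user_departments
        and c.clearance <= user_clearance
    ]


registry = EmbeddingRegistry()
registry.register(EmbeddingModel("corp-embed", "2026.1", 1024))

chunks = [
    Chunk("hr-001", "hr", 2, "corp-embed@2026.1"),
    Chunk("legal-007", "legal", 1, "corp-embed@2026.1"),
    Chunk("sec-042", "security", 1, "lab-embed@0.3"),  # shadow index, unregistered model
]
visible = allowed_chunks(chunks, registry, {"hr", "legal", "security"}, user_clearance=1)
print([c.doc_id for c in visible])  # ['legal-007']
```

The shadow index is excluded by the registry check alone, regardless of the user's permissions, which is what makes a central registry an enforcement point rather than documentation.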

Without centralized governance, RAG becomes shadow infrastructure.

The Multi-Agent Orchestration Layer: Agentic RAG in 2026

Simple retrieve-then-generate pipelines are obsolete in regulated environments.

Modern enterprise RAG systems follow the agentic orchestration model, similar to the governance architecture explored in Conversational AI 2026: Agentic Systems & Governance.

Instead of one pipeline, enterprises deploy:

| Agent | Role |
|---|---|
| Retriever Agent | Decomposes query & performs iterative retrieval |
| Critic Agent | Validates faithfulness & checks hallucinations |
| Compliance Agent | Enforces regulatory boundaries |
| Formatting Agent | Aligns output with enterprise standards |

Iterative retrieval follows a loop:

Query → Retrieve → Assess sufficiency → Re-query → Validate → Generate

If evidence is incomplete, the system searches again.

Single-pass RAG is no longer enterprise-grade.
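The loop above, together with the Retriever and Critic roles from the agent table, can be sketched as follows. This is a deliberately simplified Python illustration: the corpus, the keyword retriever, and the term-coverage sufficiency check are hypothetical stand-ins for what would normally be hybrid search and LLM-based judgments. It shows only the control flow: retrieve, check for gaps, re-query on the gaps, and reject an answer that cites sources outside the retrieved evidence.

```python
from dataclasses import dataclass


@dataclass
class Evidence:
    doc_id: str
    text: str


# Hypothetical in-memory corpus standing in for the governed document store.
CORPUS = {
    "audit_2024": "Vendor Acme: access control weakness, risk 7.",
    "audit_2025": "Vendor Acme: logging gap, risk 5.",
    "contract_17": "Vendor Acme contract renewal terms and exit clause.",
}


def retrieve(terms):
    """Toy keyword retriever (stand-in for the Retriever Agent's hybrid search)."""
    return [Evidence(doc_id, text) for doc_id, text in CORPUS.items()
            if any(term.lower() in text.lower() for term in terms)]


def missing_terms(required, evidence):
    """Sufficiency check: required terms not covered by any retrieved evidence."""
    covered = " ".join(e.text.lower() for e in evidence)
    return [term for term in required if term.lower() not in covered]


def iterative_retrieve(query_terms, required, max_rounds=3):
    """Retrieve -> assess sufficiency -> re-query on gaps, up to max_rounds."""
    evidence, terms, gaps = {}, list(query_terms), list(required)
    for _ in range(max_rounds):
        for e in retrieve(terms):
            evidence[e.doc_id] = e
        gaps = missing_terms(required, evidence.values())
        if not gaps:
            break
        terms = gaps  # re-query using only what is still missing
    return list(evidence.values()), gaps


def critic_check(cited_doc_ids, evidence):
    """Critic Agent stand-in: every cited source must be in the retrieved evidence."""
    retrieved = {e.doc_id for e in evidence}
    return all(doc_id in retrieved for doc_id in cited_doc_ids)


evidence, gaps = iterative_retrieve(["risk"], required=["risk", "renewal"])
print(sorted(e.doc_id for e in evidence), gaps)
# ['audit_2024', 'audit_2025', 'contract_17'] []
print(critic_check(["audit_2025", "contract_17"], evidence))  # True
print(critic_check(["audit_2023"], evidence))                 # False
```

The first pass finds only the audit reports; the sufficiency check notices that nothing covers "renewal", so the second pass retrieves the contract. If gaps remain after `max_rounds`, they are returned so the orchestrator can escalate instead of generating from incomplete evidence.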

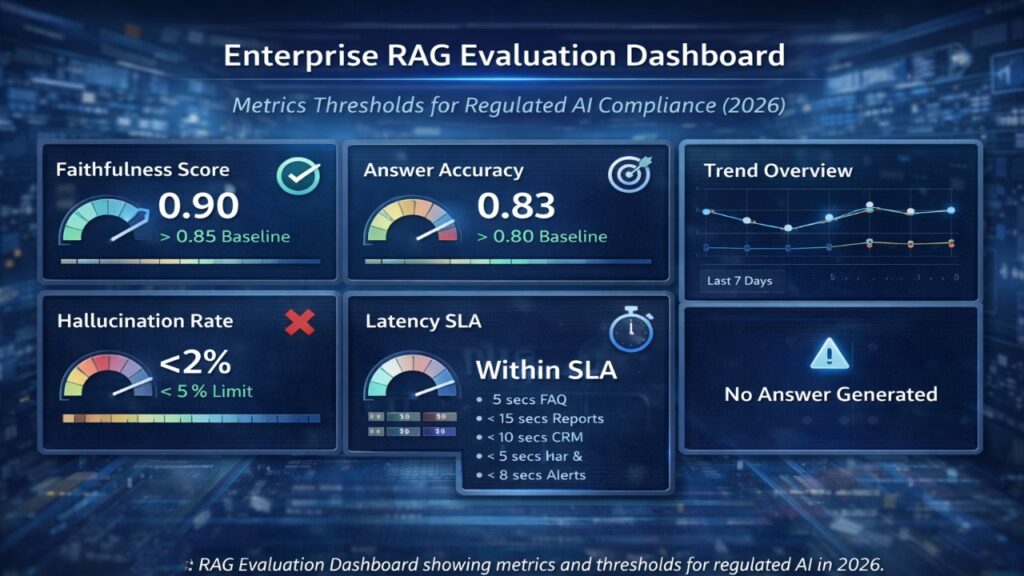

Enterprise RAG Evaluation Frameworks 2026: From Testing to Quantification

Production RAG now requires measurable thresholds:

| Metric | Enterprise Target |
|---|---|
| Faithfulness | > 0.85 |
| Answer Relevancy | > 0.80 |
| Context Precision | > 0.75 |
| Hallucination Rate | < 5% |
| Latency SLA | Defined per use case |
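One way to turn these targets into a deployment gate is sketched below in Python. It assumes you have already computed aggregate scores for your evaluation set with Ragas, DeepEval, or a similar harness; the metric keys are labels chosen for this example, not any library's output format, and the hallucination rate is expressed as a fraction (5% = 0.05).

```python
# Targets from the table above: metric -> (comparison, threshold).
THRESHOLDS = {
    "faithfulness": (">", 0.85),
    "answer_relevancy": (">", 0.80),
    "context_precision": (">", 0.75),
    "hallucination_rate": ("<", 0.05),
}


def release_gate(scores):
    """Return (passed, failures). A missing metric counts as a failure."""
    failures = []
    for metric, (op, limit) in THRESHOLDS.items():
        value = scores.get(metric)
        if value is None:
            failures.append(f"{metric}: missing")
        elif op == ">" and not value > limit:
            failures.append(f"{metric}: {value} <= {limit}")
        elif op == "<" and not value < limit:
            failures.append(f"{metric}: {value} >= {limit}")
    return (not failures, failures)


passed, failures = release_gate({
    "faithfulness": 0.88,
    "answer_relevancy": 0.82,
    "context_precision": 0.71,
    "hallucination_rate": 0.03,
})
print(passed, failures)  # False ['context_precision: 0.71 <= 0.75']
```

Treating a missing metric as a failure is a deliberate choice: a pipeline that silently skips an evaluator should not pass the gate.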

Evaluation-first deployment reduces governance exposure, which increasingly influences executive compensation negotiations, as outlined in CISO negotiate NIS2 liability stipend Germany.

AI accountability now has compensation consequences.

Reducing RAG Latency and Cost: Cloud API Burn vs Local Inference ROI

Cloud-based RAG introduces:

- Variable token pricing

- Concurrency bottlenecks

- Context window cost amplification

Enterprise teams increasingly evaluate:

- On-prem or hybrid deployment

- vLLM inference servers

- PagedAttention memory optimization

- GPU amortization models

Stable inference cost becomes strategic.
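A simple break-even model makes the trade-off explicit. The Python sketch below compares per-token API spend with straight-line amortization of local GPU hardware; every number in the example (tokens per query, price per million tokens, hardware cost, amortization period, operating cost) is hypothetical and should be replaced with your own figures.

```python
def cloud_cost_per_month(queries, tokens_per_query, usd_per_million_tokens):
    """API spend grows linearly with query volume and context size."""
    return queries * tokens_per_query * usd_per_million_tokens / 1_000_000


def local_cost_per_month(gpu_capex_usd, amortization_months, opex_usd_per_month):
    """Straight-line hardware amortization plus fixed power, hosting and ops cost."""
    return gpu_capex_usd / amortization_months + opex_usd_per_month


def break_even_queries(tokens_per_query, usd_per_million_tokens,
                       gpu_capex_usd, amortization_months, opex_usd_per_month):
    """Monthly query volume above which local inference costs less than the API.

    Assumes the local cluster has enough throughput for that volume; capacity
    limits and latency SLAs are not modeled here.
    """
    fixed = local_cost_per_month(gpu_capex_usd, amortization_months, opex_usd_per_month)
    per_query = tokens_per_query * usd_per_million_tokens / 1_000_000
    return fixed / per_query


# Hypothetical inputs: 6,000 tokens per RAG call at $5 per million tokens,
# $180,000 of GPU hardware over 36 months, $4,000/month operations.
volume = break_even_queries(6_000, 5.0, 180_000, 36, 4_000)
print(round(volume))  # 300000
```

Under these assumptions, local inference costs less above roughly 300,000 queries per month and the API is cheaper below that. The model ignores throughput limits, which is where inference-server optimizations such as vLLM's PagedAttention matter: they determine how much volume one GPU can actually serve.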

In contractor-heavy models, infrastructure decisions intersect with tax optimization frameworks such as those compared in Cybersecurity B2B Poland 2026: Ryczałt vs IP Box calculator.

RAG economics are no longer purely technical.

They are structural financial decisions.

EU AI Act Article 50: Transparency & Disclosure Logic

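Under Article 50 of the EU AI Act, AI systems that interact with people must be designed and operated to:

- Clearly disclose AI involvement

- Avoid deceptive impersonation

- Enable traceability of outputs (for generated content, this includes machine-readable marking as AI-generated)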

For RAG systems, this implies:

- Retrieval logging

- Timestamp retention

- Disclosure logic in interaction layer

- Output attribution mechanisms
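A minimal Python sketch of how these four requirements might map onto code: a disclosure string and source list rendered in the interaction layer, plus a timestamped, hash-chained retrieval log entry for each answer. The record schema, the disclosure wording, the model label, and the `GENESIS` chain seed are assumptions for illustration; a log like this supports traceability but does not by itself establish compliance.

```python
import hashlib
import json
from datetime import datetime, timezone

# Hypothetical disclosure wording shown in the interaction layer.
AI_DISCLOSURE = "This answer was generated by an AI system from retrieved internal documents."


def render_response(answer, source_ids):
    """Disclosure logic plus output attribution for the user-facing reply."""
    return f"{AI_DISCLOSURE}\n\n{answer}\n\nSources: {', '.join(source_ids)}"


def _digest(record):
    """SHA-256 over a canonical JSON encoding of the record, minus its own hash."""
    body = {k: v for k, v in record.items() if k != "record_hash"}
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def make_log_record(query, source_ids, answer, model_version, prev_hash):
    """One timestamped, hash-chained retrieval log entry (hypothetical schema)."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "query": query,
        "source_ids": list(source_ids),
        "model_version": model_version,
        "answer_sha256": hashlib.sha256(answer.encode("utf-8")).hexdigest(),
        "disclosure_shown": True,  # set by the interaction layer; hardcoded in this sketch
        "prev_hash": prev_hash,    # links entries so edits or deletions are detectable
    }
    record["record_hash"] = _digest(record)
    return record


def verify_chain(records, seed="GENESIS"):
    """Recompute every hash and check each entry points at its predecessor."""
    prev = seed
    for record in records:
        if record["prev_hash"] != prev or _digest(record) != record["record_hash"]:
            return False
        prev = record["record_hash"]
    return True


answer = "Acme ranks first on accumulated audit risk."
print(render_response(answer, ["audit_2024", "audit_2025"]))
r1 = make_log_record("Top vendor risks?", ["audit_2024", "audit_2025"], answer, "llm-v3", "GENESIS")
r2 = make_log_record("Why Acme?", ["audit_2024"], "Repeated findings.", "llm-v3", r1["record_hash"])
print(verify_chain([r1, r2]))  # True
```

Chaining each record to its predecessor means that editing or deleting an earlier entry breaks verification for everything after it, which is what makes the log useful as audit evidence.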

Technical approaches such as those evaluated in AI watermarking tools for EU compliance 2026 are becoming complementary safeguards.

Transparency is not optional.

Executive Risk & Talent Economics in AI Governance

Where RAG influences operational decisions, governance failures may escalate into executive accountability exposure.

This reality is already reshaping compensation benchmarks, as seen in CISO salary Berlin vs Amsterdam 2026 net wealth comparison.

AI governance maturity is now a board-level hiring criterion.

RAG architecture choices influence executive negotiation leverage.

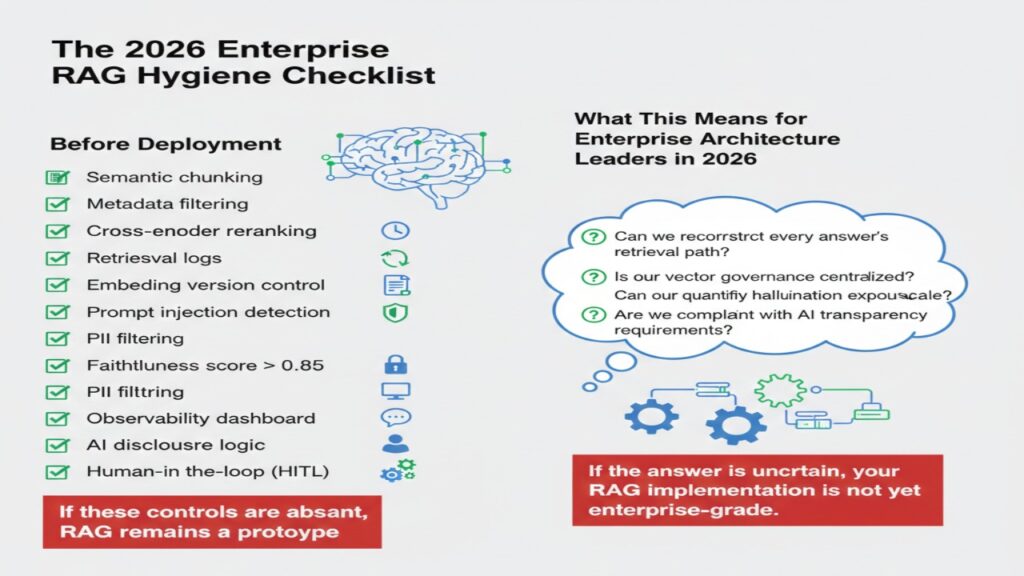

The 2026 Enterprise RAG Hygiene Checklist

Before deployment:

- Semantic, layout-aware chunking aligned with document structure, so context stays intact (never break a legal clause in half)

- Metadata filtering by department or clearance

- Cross-encoder reranking enabled

- Embedding version control maintained

- Retrieval logs stored with timestamps

- Prompt injection detection active

- PII filtering before retrieval

- Faithfulness score > 0.85

- Observability dashboard enabled

- AI disclosure logic implemented

- Human-in-the-loop (HITL) workflow for high-risk responses (e.g., policy changes or vendor termination advice)
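Clause-aware chunking is one of the easier items on this checklist to sketch. The Python example below splits text at clause markers and packs whole clauses into chunks up to a size limit, so a clause is never cut in half. The marker regex (numbered clauses, `§`, `Article N`) and the sample contract are assumptions about how your documents are formatted; the pattern also matches any line that happens to start with a number, so real corpora usually need layout information from the document parser.

```python
import re

# Assumed clause markers: "1.", "2.1", "§ 4", "Article 7" at the start of a line.
# Caveat: any line that begins with a number followed by whitespace also matches.
CLAUSE_START = re.compile(r"^(?:§\s*\d+|\d+(?:\.\d+)*\.?\s|Article\s+\d+)", re.MULTILINE)


def split_clauses(text):
    """Split text at clause markers; any preamble before the first marker is kept."""
    starts = [m.start() for m in CLAUSE_START.finditer(text)]
    if not starts or starts[0] != 0:
        starts = [0] + starts
    bounds = starts + [len(text)]
    pieces = (text[a:b].strip() for a, b in zip(bounds, bounds[1:]))
    return [p for p in pieces if p]


def chunk_by_clause(text, max_chars=800):
    """Pack whole clauses into chunks of at most max_chars where possible.

    A clause is never split; a single clause longer than max_chars becomes
    its own oversized chunk instead of being cut in half.
    """
    chunks, current = [], ""
    for clause in split_clauses(text):
        candidate = f"{current}\n{clause}" if current else clause
        if len(candidate) <= max_chars:
            current = candidate
        else:
            if current:
                chunks.append(current)
            current = clause
    if current:
        chunks.append(current)
    return chunks


contract = (
    "1. Scope\nThis agreement covers services.\n"
    "2. Liability\nLiability is capped at fees paid.\n"
    "3. Termination\nEither party may terminate with notice."
)
result_chunks = chunk_by_clause(contract, max_chars=100)
print(len(result_chunks))  # 2: clauses 1 and 2 together, clause 3 on its own
```

Keeping an oversized clause whole rather than truncating it trades a slightly larger chunk for context integrity, which matches the checklist's priority.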

If these controls are absent, RAG remains a prototype.

What This Means for Enterprise Architecture Leaders in 2026

Ask:

- Can we reconstruct every answer’s retrieval path?

- Is our vector governance centralized?

- Can we quantify hallucination exposure?

- Is our cost predictable under scale?

- Are we compliant with AI transparency requirements?

If the answer to any of these is uncertain, your RAG implementation is not yet enterprise-grade.

2026 Executive FAQ on Enterprise RAG Implementation

Q: Is Vector RAG obsolete in 2026?

Ans: Not obsolete, but insufficient for relationship-heavy compliance queries. Graph or hybrid architectures become necessary when reasoning spans entities or timeframes.

Q: What evaluation threshold is acceptable before production?

Ans: Faithfulness above 0.85 is increasingly treated as a baseline for regulated environments. Deployment below that threshold increases hallucination exposure.

Q: Does RAG increase executive liability under NIS2?

Ans: If operational decisions rely on unlogged AI outputs, documentation gaps may increase scrutiny. Governance controls reduce that exposure.

Q: When does local inference outperform cloud APIs?

Ans: When sustained query volume justifies GPU amortization and throughput optimization. Predictable workloads favor local deployment.

Q: How do we prevent RAG sprawl?

Ans: Centralize embeddings, standardize metadata, and enforce retrieval logging across departments.

Q: Do transparency rules apply to RAG outputs?

Ans: Yes, if the system interacts with users or influences decisions. Disclosure and traceability are mandatory in regulated contexts.

Regulatory and Institutional Sources on Enterprise RAG Governance

European Parliament & Council — EU Artificial Intelligence Act (Regulation (EU) 2024/1689)

https://eur-lex.europa.eu/eli/reg/2024/1689/oj

European Union Agency for Cybersecurity — NIS2 Directive Resources

https://www.enisa.europa.eu/topics/nis-directive/nis2

European Commission — AI Regulatory Framework Portal

https://digital-strategy.ec.europa.eu/en/policies/regulatory-framework-ai

European Data Protection Board — AI & Data Protection Guidance

https://edpb.europa.eu

OECD AI Policy Observatory

https://oecd.ai

The 2026 Enterprise Reality of RAG

In 2026, RAG is no longer a technical enhancement layer — it is governance-sensitive decision infrastructure.

Enterprises that still treat RAG as “vector + LLM” are operating with:

- Blind similarity assumptions

- Unmeasured hallucination exposure

- Fragmented vector silos

- Volatile token-based cost models

- Incomplete regulatory logging

The defensible enterprise standard now requires:

- Graph-aware retrieval for relationship-based reasoning

- Multi-agent orchestration with validation layers

- Evaluation-first deployment with measurable thresholds

- Centralized vector governance

- Local inference cost modeling

- EU AI Act transparency controls

- Retrieval traceability aligned with NIS2 accountability

The organizations that win in 2026 are not those running the largest models.

They are the ones that can demonstrate — under audit, board scrutiny, or regulatory review:

“Here is exactly how this answer was generated, what documents it relied on, how it was validated, who approved it, and what it cost.”

If your RAG system cannot produce that evidence, it is not enterprise-grade — it is experimental infrastructure operating in a regulated environment.

In 2026, RAG maturity is defined by defensibility.

Author Bio

Saameer is an enterprise AI governance researcher and technology strategist focused on the intersection of RAG architectures, regulatory accountability, and executive risk in the EU technology landscape. His work analyzes how emerging systems — from Agentic AI and GraphRAG to NIS2 governance and AI transparency mandates — reshape enterprise liability, cost structures, and strategic decision-making.

Through Tech Plus Trends, Saameer delivers executive-level insights that bridge technical architecture with real-world compliance exposure, compensation dynamics, and operational defensibility.

His guiding principle: “Innovation is valuable. Defensible innovation is sustainable.”

Disclaimer

Professional Notice: The information provided in this article is for educational and strategic informational purposes only and does not constitute legal, financial, or technical advice. While the architectural patterns (GraphRAG, Multi-Agent systems) and regulatory references (EU AI Act, NIS2) reflect the 2026 enterprise landscape, compliance requirements vary significantly by jurisdiction and industry. Implementation of these systems may carry inherent risks related to data privacy and algorithmic reliability. Readers are advised to consult with qualified legal counsel and certified AI security professionals before deploying high-stakes RAG infrastructure. The author and Tech Plus Trends disclaim any liability for decisions made based on this content.

Transparency Note (EU AI Act Art. 50 Compliance)

AI Disclosure: In accordance with the transparency obligations for “public interest text” and digital publications in 2026, please be advised that this article was developed through a collaborative “Human-in-the-Loop” (HITL) process.

- Generation: Preliminary research, structural mapping, and data synthesis were assisted by advanced Large Language Models (LLMs).

- Editorial Control: A human author (Saameer) exercised final editorial responsibility, performing fact-verification, strategic synthesis, and original analysis of the 2026 regulatory environment.

- Intent: This disclosure is provided to ensure readers can distinguish between purely human-generated content and AI-augmented professional analysis.