In 2026, AI-powered NPCs are no longer defined by smarter dialogue trees. The real transformation is architectural. Game studios are shifting from scripted behavior and reactive state machines to persistent, agentic systems capable of reasoning, remembering, and influencing each other across sessions.

The shift is not cosmetic. It affects memory storage, hardware requirements, monetization models, and even regulatory exposure in European markets. Studios that still rely on hybrid behavior trees layered with LLM dialogue are entering a transitional phase.

Those adopting multi-agent architectures, vectorized memory systems, and on-device inference are building persistent game worlds where NPCs evolve socially and economically — with or without the player present.

This is the structural pivot of 2026: NPCs are becoming autonomous actors, not scripted responders.

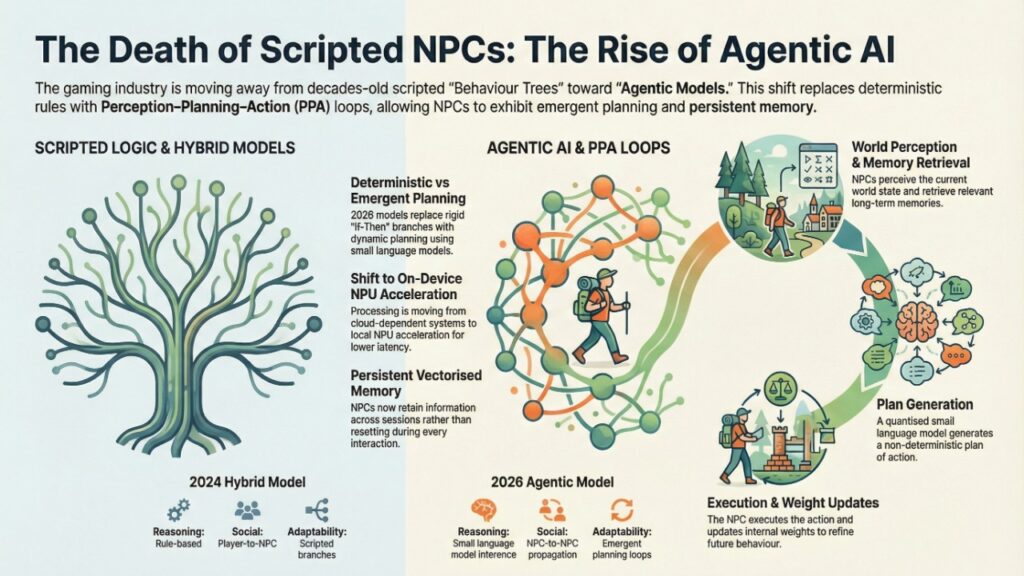

The Death of Scripted Behavior Trees in 2026

For nearly two decades, NPC behavior was governed by:

- Finite State Machines (FSM)

- Behavior Trees (BT)

- Pre-authored branching dialogue

The logic was deterministic:

If player steals → Attack

If player reputation > 50 → Offer quest
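The two rules above can be written as a plain deterministic function. This is a minimal sketch: the `legacy_npc_reaction` name, the `"idle"` fallback, and the action strings are illustrative assumptions, not taken from any engine.

```python
def legacy_npc_reaction(player_stole: bool, reputation: int) -> str:
    """Deterministic rule lookup: the same inputs always yield the same action."""
    if player_stole:
        return "attack"
    if reputation > 50:
        return "offer_quest"
    return "idle"  # assumed default when no rule fires
```

Every possible outcome has to be authored in advance, which is the limitation the rest of this section describes.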

In 2026, this model is collapsing under player expectations for dynamic worlds.

Why Behavior Trees Fail in 2026

| Limitation | 2024 Hybrid Model | 2026 Agentic Model |
| --- | --- | --- |
| Memory | Session-based | Persistent vectorized memory |
| Reasoning | Rule-based | Small language model inference |
| Social Interaction | Player-to-NPC | NPC-to-NPC propagation |
| Adaptability | Scripted branches | Emergent planning loops |
| Latency | Cloud-dependent | On-device NPU acceleration |

Studios are abandoning rigid trees for Perception–Planning–Action (PPA) loops.

Instead of executing predefined rules, NPCs now:

- Perceive world state

- Retrieve relevant long-term memory

- Generate a plan using a quantized small language model

- Execute the plan and update internal state (memory and behavioral weights)

This makes outcomes non-deterministic — yet still controllable through weighted parameters.
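The four steps can be sketched as a single tick function. This is a toy under stated assumptions: the `Npc` class, the `aggression` weight, and the word-overlap recall are all hypothetical, and `plan` is a weighted random choice standing in for small-language-model inference.

```python
import random
from dataclasses import dataclass, field


@dataclass
class Npc:
    name: str
    aggression: float  # designer-set weight in [0, 1]
    memory: list[str] = field(default_factory=list)


def perceive(world: dict) -> str:
    """Step 1: reduce the world state to a short observation."""
    return f"player {world['player_action']} near {world['location']}"


def retrieve(npc: Npc, observation: str, k: int = 2) -> list[str]:
    """Step 2: naive recall by shared words (a real system would use embeddings)."""
    words = set(observation.split())
    ranked = sorted(npc.memory, key=lambda m: len(words & set(m.split())), reverse=True)
    return ranked[:k]


def plan(npc: Npc, observation: str, recalled: list[str], rng: random.Random) -> str:
    """Step 3: stand-in for SLM inference, sampling an action from a weighted choice."""
    hostile = "stole" in observation or any("stole" in m for m in recalled)
    p_confront = npc.aggression if hostile else 0.0
    return "confront" if rng.random() < p_confront else "greet"


def act(npc: Npc, observation: str, action: str) -> str:
    """Step 4: execute the action and write the outcome back into memory."""
    npc.memory.append(f"{observation} -> {action}")
    return action


def tick(npc: Npc, world: dict, rng: random.Random) -> str:
    observation = perceive(world)
    recalled = retrieve(npc, observation)
    action = plan(npc, observation, recalled, rng)
    return act(npc, observation, action)
```

The `aggression` weight is the designer's control knob: at 0.0 the NPC never confronts, at 1.0 it always does once a theft is observed or remembered, and values in between give varied but bounded behavior.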

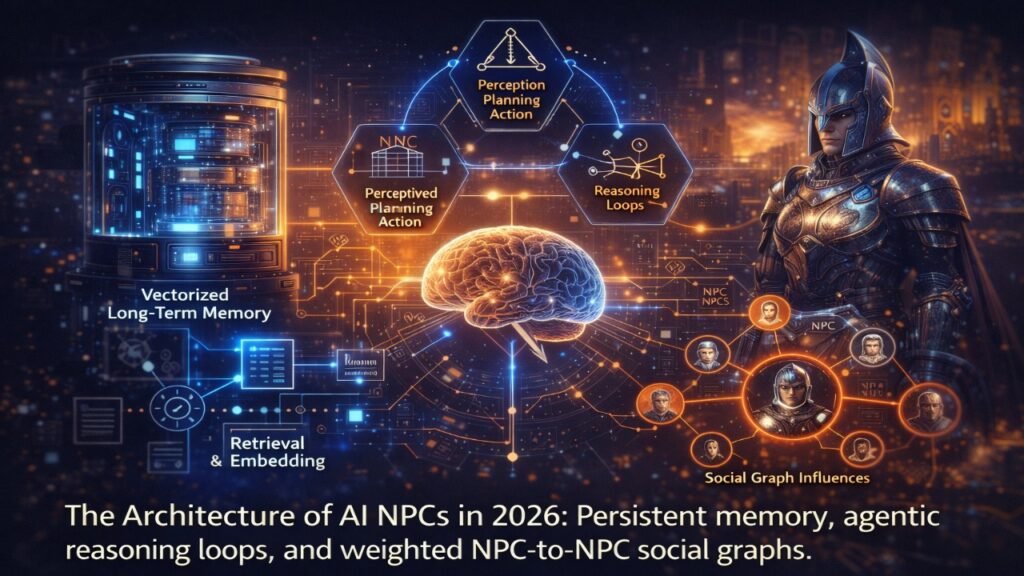

RAG-Based Memory: The Vector Database Shift in 2026

The most critical “invisible” upgrade in 2026 AI NPC systems is the move from simple dialogue logs to Persistent Vector Memory. In previous generations, NPCs “forgot” the player after a few hours because feeding raw JSON dialogue logs back into the model quickly exhausted its context window and memory budget, so older history had to be truncated. Today, studios use Retrieval-Augmented Generation (RAG) to treat a game’s entire history as a searchable database.

The 2026 Memory Stack: How it Works

Instead of reading a text file, the NPC’s “brain” performs a three-stage retrieval loop, with a typical target of under 10 ms:

- Vector Embedding: Every player interaction is converted into a mathematical coordinate (a vector) representing emotional and factual context.

- Semantic Retrieval: When you speak to an NPC, the system scans a Local Vector Database (like Qdrant or Pinecone Edge) to find the “nearest neighbors” to your current query.

- Context Augmentation: The NPC “remembers” not just what you said, but the feeling of past sessions, and injects that specific data into its reasoning loop.
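The three stages can be sketched in plain Python. This is a toy, not a production pipeline: a sparse bag-of-words vector stands in for a learned embedding model (so it matches shared words, not meaning), a brute-force scan stands in for a vector database, and the `MemoryStore` and `build_prompt` names are hypothetical.

```python
import math
from collections import Counter


def embed(text: str) -> dict[str, float]:
    """Stage 1: toy embedding, an L2-normalised bag-of-words vector."""
    counts = Counter(text.lower().split())
    norm = math.sqrt(sum(c * c for c in counts.values()))
    return {word: c / norm for word, c in counts.items()}


def cosine(a: dict[str, float], b: dict[str, float]) -> float:
    """Dot product of two unit vectors, which equals their cosine similarity."""
    return sum(value * b.get(word, 0.0) for word, value in a.items())


class MemoryStore:
    def __init__(self) -> None:
        self.items: list[tuple[dict[str, float], str]] = []

    def add(self, text: str) -> None:
        self.items.append((embed(text), text))

    def nearest(self, query: str, k: int = 2) -> list[str]:
        """Stage 2: brute-force nearest-neighbour search over all memories."""
        q = embed(query)
        ranked = sorted(self.items, key=lambda item: cosine(q, item[0]), reverse=True)
        return [text for _, text in ranked[:k]]


def build_prompt(store: MemoryStore, player_line: str, k: int = 2) -> str:
    """Stage 3: inject the retrieved memories into the reasoning prompt."""
    context = "\n".join(f"- {m}" for m in store.nearest(player_line, k))
    return f"Relevant memories:\n{context}\nPlayer says: {player_line}"
```

Swapping `embed` for a real embedding model and `nearest` for an indexed vector store changes the quality and speed of recall, but the shape of the loop stays the same.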

Technical Advantages: Vector DB vs. Legacy JSON

| Feature | Legacy JSON Logs (2024) | Vector Memory (RAG 2026) |
| --- | --- | --- |
| Search Logic | Keyword Matching (Exact) | Semantic Similarity (Meaning) |
| Memory Limit | Small (Truncates quickly) | Large (bounded by storage, not by the context window) |
| Performance | Slows down as logs grow | Sublinear retrieval with an approximate nearest-neighbor index |
| VRAM Usage | High (Text bloat) | Low (Compressed embeddings) |

The “Persistence” Breakthrough

In 2026, memory is no longer tied to a single save file. Through Cloud-Synced Behavioral Profiles, an NPC in a sequel can remember your actions from the first game. This architecture mirrors the data-layering strategies used in Automating EU AI Act Compliance, where “logging” is a dynamic, retrievable asset rather than a static record.

Pro Tip for Devs: To optimize for 2026 hardware, consider INT8 quantization for your embedding models. This can make a 1,536-dimension vector search small enough to run on the player’s NPU, leaving the main GPU cores free for rendering.
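To make the INT8 idea concrete, here is a minimal sketch of symmetric scalar quantization. Note the substitution: it quantizes stored embedding vectors rather than the embedding model's weights, although the arithmetic is the same. The function names are hypothetical. A 1,536-dimension float32 vector takes 6,144 bytes, while the INT8 version takes 1,536 bytes plus one scale factor.

```python
from array import array


def quantize_int8(vec: list[float]) -> tuple[array, float]:
    """Map floats to signed bytes in [-127, 127] with one scale per vector."""
    max_abs = max((abs(x) for x in vec), default=0.0)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    return array("b", [round(x / scale) for x in vec]), scale


def dequantize(q: array, scale: float) -> list[float]:
    """Recover approximate floats; error per element is at most scale / 2."""
    return [v * scale for v in q]


def int8_dot(qa: array, sa: float, qb: array, sb: float) -> float:
    """Integer dot product, rescaled to approximate the float dot product."""
    return sum(x * y for x, y in zip(qa, qb)) * sa * sb
```

The trade-off is a small rounding error per element in exchange for a 4x reduction in storage and integer arithmetic, which suits NPU-style hardware.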

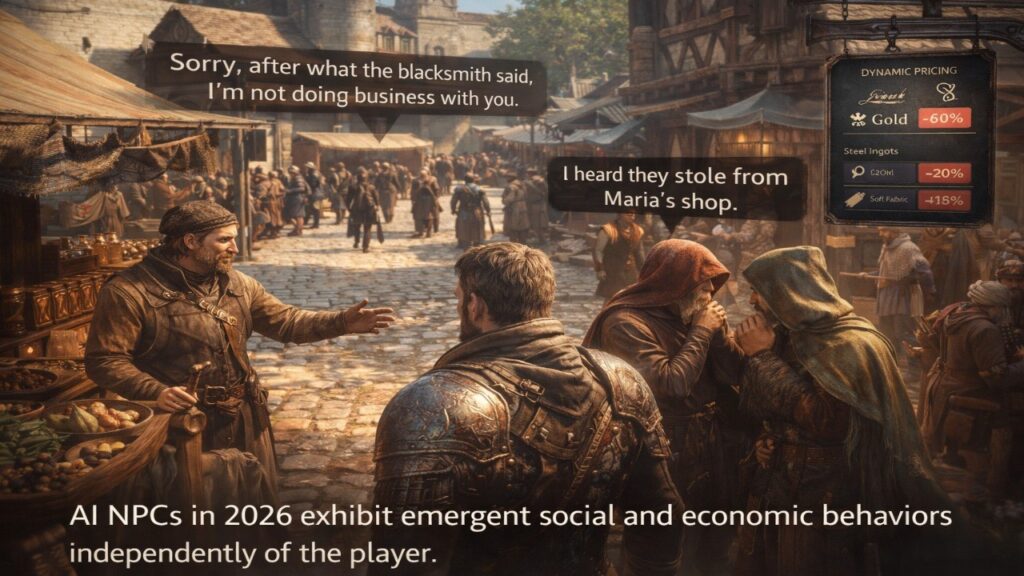

The “Gossip Protocol”: Multi-Agent Social Graphs

One of the most underreported shifts in 2026 is NPC-to-NPC reasoning.

In legacy systems, NPCs reacted only to player actions.

Now they react to what other NPCs say about the player.

This is implemented through Social Graph Weighting.

Instead of:

Reputation score = 65

You get:

Score = Direct Affinity + ∑(Trust × Opinion)

This creates transitive influence.

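Below is a simplified sandbox model in which the gossip term is damped by a factor of 0.5, so second-hand opinion counts for half as much as direct experience: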

Score = W_direct + sum(W_trust * W_opinion) * 0.5

If a blacksmith hates the player and the merchant trusts the blacksmith, the merchant may preemptively distrust the player — even without direct interaction.
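The blacksmith and merchant case can be worked through in a few lines. This is a sketch of the sandbox formula only: the dictionary layout, the value ranges, and the sample numbers are assumptions chosen for illustration.

```python
def social_score(
    npc: str,
    direct: dict[str, float],
    trust: dict[str, dict[str, float]],
    opinion: dict[str, float],
    damping: float = 0.5,
) -> float:
    """Score = W_direct + damping * sum(W_trust * W_opinion).

    direct[npc]       : npc's own affinity toward the player (assumed -1..1)
    trust[npc][other] : how much npc trusts other (assumed 0..1)
    opinion[other]    : other's opinion of the player (assumed -1..1)
    """
    gossip = sum(
        weight * opinion.get(other, 0.0)
        for other, weight in trust.get(npc, {}).items()
    )
    return direct.get(npc, 0.0) + damping * gossip


# The merchant has never met the player but trusts the blacksmith.
direct = {"merchant": 0.0}
trust = {"merchant": {"blacksmith": 0.8}}
opinion = {"blacksmith": -0.9}
merchant_view = social_score("merchant", direct, trust, opinion)
```

With these numbers the merchant's score is 0 + 0.5 × (0.8 × -0.9), roughly -0.36: mild distrust acquired purely through gossip.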

Why This Matters

This enables:

- Faction clustering

- Emergent alliances

- Living rumor systems

- Dynamic political ecosystems

The result is a world that evolves socially without scripted events.

Economic Agents: NPCs as Resource Optimizers

In 2026, merchants no longer sell goods at static prices.

Studios are experimenting with:

- Reinforcement learning–driven pricing models

- Dynamic inflation adjustment

- Supply-demand simulation loops

- Utility-maximizing agents

Instead of fixed pricing tables, NPCs optimize:

Reward = Profit – Risk – Reputation Damage

This resembles risk-weighted optimization logic used in financial AI systems. Architecturally, it parallels reinforcement systems deployed in AI fraud detection compliance frameworks in European banking, where models continuously adapt risk scoring instead of relying on static thresholds.

The economic agent model transforms NPCs into rational actors within persistent markets.
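The reward expression above can be sketched with a merchant choosing a price. To be clear about the substitution: this uses an exhaustive search over candidate prices rather than reinforcement learning, and the linear demand curve, cost, fair price, and weights are all invented for illustration.

```python
from typing import Iterable


def expected_reward(
    price: float,
    cost: float = 10.0,        # assumed unit cost
    fair_price: float = 30.0,  # assumed price players consider fair
    risk_rate: float = 0.1,    # assumed expected loss as a share of revenue
    rep_weight: float = 2.0,   # assumed penalty per coin of overpricing per unit
) -> float:
    """Reward = Profit - Risk - Reputation Damage for one pricing decision."""
    units = max(0.0, 100.0 - 2.0 * price)  # hypothetical linear demand
    profit = (price - cost) * units
    risk = risk_rate * price * units
    reputation_damage = rep_weight * max(0.0, price - fair_price) * units
    return profit - risk - reputation_damage


def best_price(candidates: Iterable[float]) -> float:
    """Pick the candidate price with the highest expected reward."""
    return max(candidates, key=expected_reward)


chosen = best_price(range(10, 51))
```

Under these assumptions the merchant settles on the fair price of 30: going higher would raise the margin per unit, but the reputation penalty outweighs it. A learned policy would replace the fixed demand curve with observed player behavior.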

On-Device Inference: The Hardware Inflection Point

The 2024 generation of AI NPC demos relied heavily on cloud APIs. This created:

- 500–2000ms latency

- Ongoing token costs

- Scalability bottlenecks

In 2026, the shift is toward:

- Quantized 3B–8B parameter models

- INT4 / INT8 inference

- GPU tensor cores

- Dedicated NPUs in next-gen dev kits

Latency targets are now sub-100ms for decision loops.
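A quick calculation shows why the 3B–8B range and INT4/INT8 precision go together. This sketch counts model weights only; it ignores the KV cache, activations, and quantization metadata, so real footprints are somewhat higher. The function name is hypothetical.

```python
def weight_footprint_gib(params_billion: float, bits_per_weight: int) -> float:
    """Approximate memory for model weights alone, in GiB."""
    total_bytes = params_billion * 1e9 * bits_per_weight / 8
    return total_bytes / 2**30


for params in (3, 8):
    for bits in (16, 8, 4):
        size = weight_footprint_gib(params, bits)
        print(f"{params}B params at {bits}-bit: {size:.2f} GiB")
```

A 3B model at INT4 needs about 1.4 GiB for weights, while an 8B model at 16-bit needs about 14.9 GiB, which is the difference between sharing memory comfortably with a game and not fitting on most consumer devices.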

Deployment Comparison

| Deployment Model | Latency | Cost Model | Scalability |
| --- | --- | --- | --- |
| Cloud API | 500–2000ms | Per-token | Expensive |
| Hybrid Edge | 100–300ms | Mixed | Moderate |
| Full Local SLM | <100ms | Hardware-based | Scalable |

Studios are reducing dependency on recurring token-based billing and moving toward hardware-optimized agent stacks.

Regulatory Exposure: The EU AI Act and AI NPC Disclosure

As NPCs become indistinguishable from human-authored characters, regulatory obligations emerge — especially in Europe.

Under EU transparency rules, generative systems interacting with users must disclose synthetic origins in certain contexts.

Studios deploying persistent generative NPCs in the EU market may need to:

- Clearly inform players when interacting with AI-generated personas

- Implement provenance tagging

- Log behavioral output

This intersects with broader transparency tooling discussed in AI watermarking tools for EU compliance in 2026, where synthetic content identification becomes part of infrastructure design.

AI NPCs are not just gameplay features. They are software systems operating under evolving governance frameworks.
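Provenance tagging and output logging can be sketched as a small record attached to every generated line. The schema here is hypothetical: the field names, the `tag_npc_output` function, and the JSON Lines format are assumptions for illustration, not a format prescribed by the EU AI Act or any standard.

```python
import hashlib
import json
from datetime import datetime, timezone


def tag_npc_output(npc_id: str, text: str, model_id: str) -> dict:
    """Wrap one generated line with machine-readable provenance metadata."""
    return {
        "npc_id": npc_id,
        "text": text,
        "ai_generated": True,  # explicit synthetic-origin flag
        "model_id": model_id,
        "created_at": datetime.now(timezone.utc).isoformat(),
        # The content hash lets an auditor verify the logged text was not altered.
        "sha256": hashlib.sha256(text.encode("utf-8")).hexdigest(),
    }


def to_log_line(record: dict) -> str:
    """Serialise a record as one JSON Lines entry for an append-only log."""
    return json.dumps(record, sort_keys=True)


record = tag_npc_output("merchant_01", "Prices went up.", "local-slm-int4")
line = to_log_line(record)
```

Keeping the flag and hash next to the text means the same record can drive both the in-game disclosure UI and a later audit trail.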

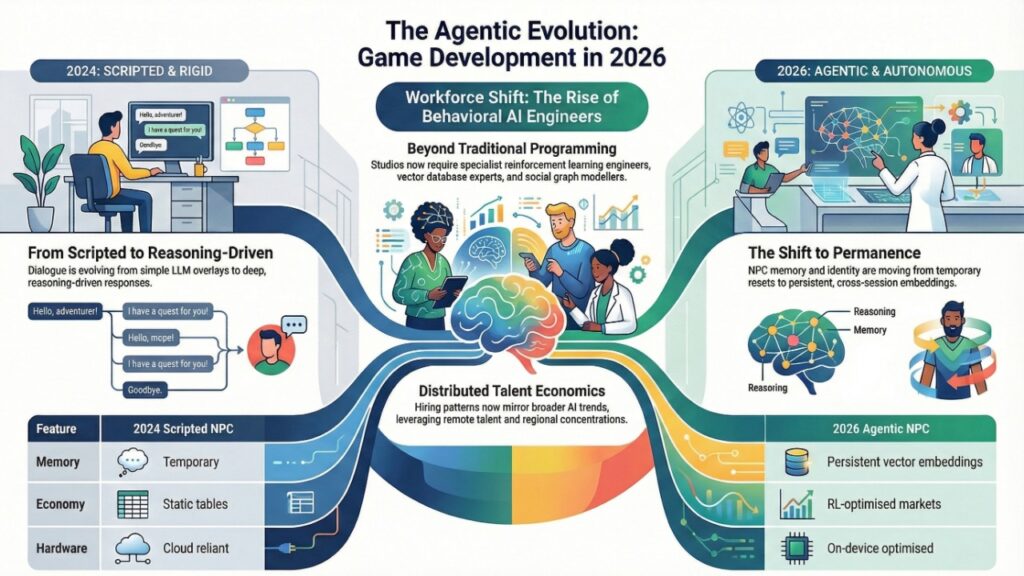

Workforce Shift: The Rise of Behavioral AI Engineers

Traditional gameplay programmers are not enough for agentic NPC systems.

Studios now require:

- Reinforcement learning engineers

- Vector database specialists

- Quantization experts

- Social graph modelers

Hiring patterns increasingly mirror broader AI workforce trends across Europe. Mid-sized studios are leveraging distributed hiring models similar to those seen in remote AI engineer jobs in CEE and tax liability in 2026, where cost efficiency and regional talent concentration reshape development economics.

AI NPC systems are becoming an infrastructure discipline, not a scripting task.

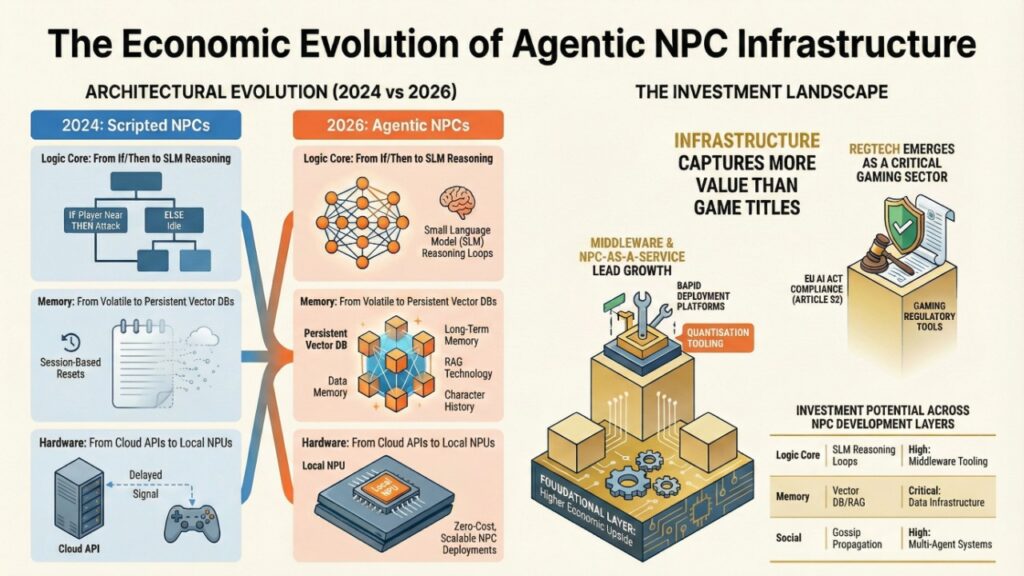

2024 vs 2026: The Behavioral Divide

| Feature | 2024 Scripted NPC | 2026 Agentic NPC |
| --- | --- | --- |
| Dialogue | LLM overlay | Reasoning-driven response |
| Memory | Temporary | Persistent vector embeddings |
| Social Logic | Isolated | Graph-propagated |
| Economy | Static tables | RL-optimized markets |
| Hardware | Cloud reliant | On-device optimized |
| Identity | Resettable | Cross-session persistent |

The structural difference is permanence.

NPCs now evolve.

Investment Angle: Where the Real Value Lies

The economic upside is not limited to studios.

Growth areas include:

- Middleware platforms enabling agentic NPC deployment

- Quantization tooling for edge inference

- Social graph simulation engines

- Behavioral analytics dashboards

- NPC-as-a-Service frameworks

The infrastructure layer is likely to capture more value than individual titles.

Comparison Matrix (Investment & Architecture)

| Feature | 2024: Scripted NPC | 2026: Agentic NPC | Investment Potential |
| --- | --- | --- | --- |
| Logic Core | Behavior Trees (If/Then) | PPA Loops (SLM Reasoning) | High: Middleware Tooling |
| Memory | Volatile (Session-based) | Persistent (Vector DB/RAG) | Critical: Data Infrastructure |
| Hardware | Cloud-Reliant (API Costs) | Local NPU (No Per-Token Cost) | Moderate: Semiconductor/HW |
| Social | Isolated Player Interaction | Social Graph Gossip Propagation | High: Multi-Agent Systems |
| Compliance | Minimal | High (EU AI Act, Article 50) | Emergent: RegTech for Gaming |

2026 Executive FAQ on AI NPC Systems and Agentic Behavior Models

1. What is the primary architectural shift in AI NPC systems in 2026?

Ans – The primary shift is from deterministic behavior trees to agentic Perception–Planning–Action loops supported by persistent vectorized memory. Instead of reacting to predefined triggers, NPCs retrieve contextual memory embeddings, evaluate social graph signals, and generate situational decisions using quantized small language models running locally on optimized hardware.

2. Are 2026 AI NPCs fully autonomous or still developer-controlled?

Ans – They remain parameter-controlled. While decision generation is dynamic, studios define behavioral weights, utility thresholds, safety constraints, and social graph influence limits. Autonomy operates within bounded systems to prevent destabilizing world states or narrative collapse.

3. How does the EU AI Act affect AI NPC development in 2026?

Ans – Under Article 50, studios must disclose when a player is interacting with a synthetic agent, unless this is obvious from the context. Additionally, persistent agents that “learn” from player behavior may face stricter data-logging requirements to prevent psychological manipulation, necessitating a “Compliance-by-Design” architecture.

4. How is long-term memory stored without overwhelming system resources?

Ans – Studios convert player interactions into compressed vector embeddings rather than storing raw dialogue logs. These embeddings are indexed in lightweight vector databases, enabling efficient nearest-neighbor retrieval while preserving VRAM and system stability. Hybrid models may sync compressed behavioral profiles to cloud accounts for cross-session persistence.

5. Can AI NPCs influence each other without player interaction?

Ans – Yes. Through weighted social graphs, NPCs propagate trust, hostility, and faction alignment using transitive reputation models. An NPC’s decision can be influenced by what another trusted NPC communicated about the player, enabling emergent alliances and rumor-based behavior shifts.

6. Do AI NPC systems create regulatory exposure in Europe?

Ans – Potentially. Under EU transparency requirements, studios deploying generative AI characters in the European market may need to disclose AI-generated interactions. Logging, provenance tracking, and synthetic content signaling may become necessary depending on deployment scale and interaction design.

7. Are generative NPC systems financially sustainable for studios?

Ans – Increasingly yes. The shift from cloud-based token billing to local inference significantly reduces operational costs. By running optimized models on consumer hardware, studios eliminate recurring API expenses while maintaining scalable dialogue generation and adaptive reasoning.

8. What is the main difference between a 2024 AI NPC and a 2026 Agentic NPC?

Ans – In 2024, AI NPCs were largely “chatbots” with a skin; they used LLMs for dialogue but still followed scripted paths. In 2026, Agentic NPCs use Perception–Planning–Action (PPA) loops and persistent vector memory to make autonomous decisions that evolve across game sessions, even when the player is offline.

9. Why are game studios moving from Cloud AI to On-Device Inference?

Ans – The primary drivers are latency and cost. Cloud APIs can introduce 0.5–2 seconds of lag, breaking immersion. By using quantized Small Language Models (SLMs) running on local NPUs (Neural Processing Units), studios target sub-100ms response times with no recurring per-token costs.

Regulatory and Market Sources Shaping 2026 Automated EU AI Act Compliance

European Commission – EU Artificial Intelligence Act (Regulation (EU) 2024/1689)

Primary legal framework governing AI system risk classification (Article 6 & Annex III), technical documentation (Article 11), logging (Article 12), transparency (Article 13), and provider conformity assessment obligations.

https://eur-lex.europa.eu/

European AI Office – Implementation & Enforcement Guidance (2026 Updates)

Operational guidance on post-market monitoring, serious incident reporting procedures, and supervisory coordination mechanisms during the enforcement phase.

https://digital-strategy.ec.europa.eu/

European Data Protection Board (EDPB) – AI & GDPR Interplay Guidance

Clarifies how Article 10 data governance obligations under the AI Act intersect with GDPR principles such as data minimization, fairness, and automated decision-making safeguards.

https://www.edpb.europa.eu/

European Union – NIS2 Directive (Directive (EU) 2022/2555)

Cybersecurity risk management and incident reporting requirements impacting AI infrastructure providers and high-risk system operators.

https://eur-lex.europa.eu/

ISO/IEC 42001 – Artificial Intelligence Management System Standard

International AI governance standard increasingly used as an operational alignment framework for AI Act compliance automation workflows.

https://www.iso.org/

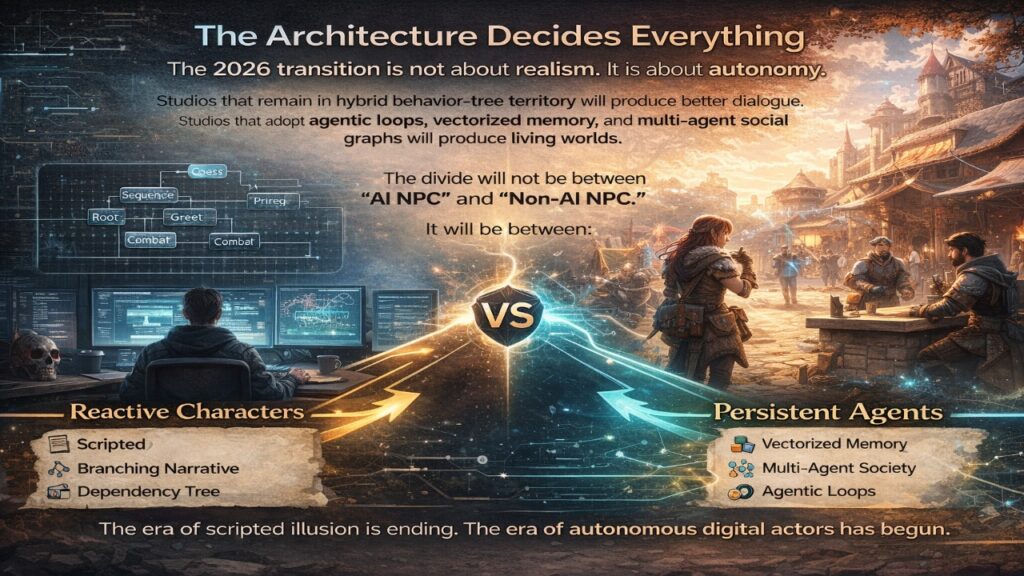

The Architecture Decides Everything

The 2026 transition is not about realism. It is about autonomy.

Studios that remain in hybrid behavior-tree territory will produce better dialogue.

Studios that adopt agentic loops, vectorized memory, and multi-agent social graphs will produce living worlds.

The divide will not be between “AI NPC” and “non-AI NPC.”

It will be between:

Reactive characters

and

Persistent agents.

The era of scripted illusion is ending.

The era of autonomous digital actors has begun.

Author Bio

Saameer is a Europe-focused technology analyst covering advanced AI systems, gaming infrastructure, and regulatory architecture. His work explores the intersection of agentic AI, vector-based memory systems, and next-generation hardware acceleration in interactive environments.

With a focus on how architectural shifts reshape industries — from AI compliance automation to persistent NPC behavior models — Saameer analyzes the structural transformation behind emerging technologies rather than surface-level trends. His research bridges game engine engineering, multi-agent systems, and the evolving governance landscape influencing AI deployment across European markets.

Transparency Disclosure: This analysis explores the technical architecture of 2026 AI systems. Portions of the data structures and code examples provided were developed with the assistance of generative AI to ensure technical accuracy against current 2026 industry standards. This content has been reviewed, edited, and verified by the author for technical and regulatory validity.

Regulatory Compliance Note: EU AI Act (Art. 50)

Under the 2026 enforcement of the EU AI Act, systems featuring the agentic behavior models described in this article may fall under the following transparency obligations:

- Disclosure: Players must be informed, at the latest at the time of first interaction, that they are engaging with an AI system, unless this is obvious from the context (Art. 50(1) and 50(5)).

- Deepfake Avoidance: If an NPC uses a “Digital Replica” of a real person, the deployer must disclose that the content has been artificially generated or manipulated (Art. 50(4)).

- Provenance: Generative outputs should be marked in a machine-readable format and be detectable as AI-generated (Art. 50(2)), which also helps trace “emergent” NPC behaviors during safety audits.