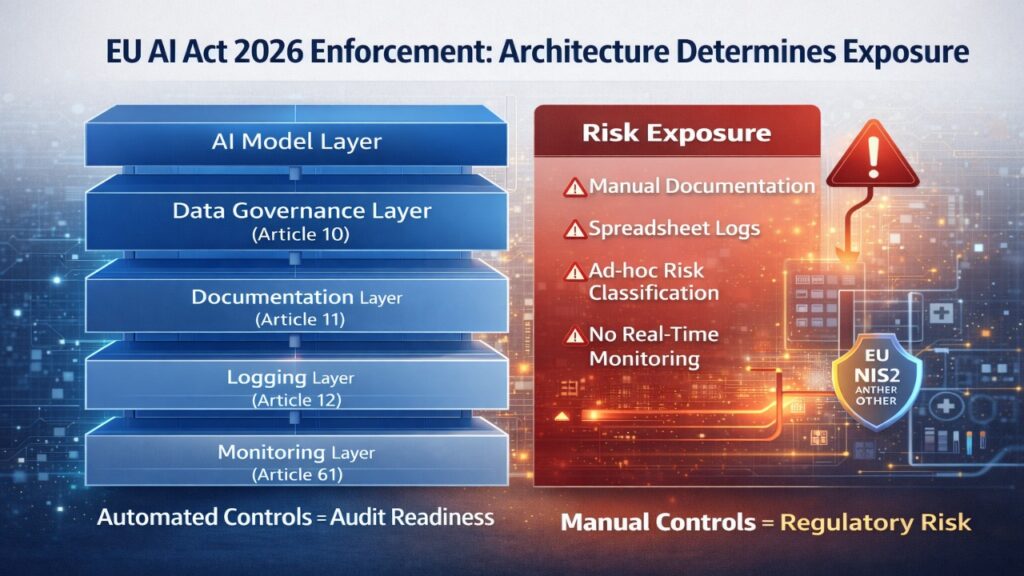

If your organization operates a high-risk AI system in the EU, manual compliance is no longer viable. By August 2, 2026, automated controls for documentation, logging, and post-market monitoring will be essential to avoid enforcement exposure.

Automating EU AI Act compliance requires embedding regulatory checks directly into your AI development lifecycle — from risk classification and technical documentation to real-time monitoring and incident reporting. The companies that operationalize compliance as code in 2026 will reduce conformity assessment friction, audit delays, and liability risk.

Automated EU AI Act Compliance in 2026: What Changes for High-Risk Systems

To automate EU AI Act compliance in 2026, organizations must integrate risk classification, Article 11 technical documentation, bias testing, and logging directly into their CI/CD pipelines. High-risk systems require continuous monitoring and automated evidence generation to support conformity assessments. Manual documentation alone is insufficient under enforcement-phase scrutiny.

Enforcement Snapshot (2026)

| Requirement | Manual Approach | Automated Approach | Exposure Delta |
| --- | --- | --- | --- |
| Risk Classification (Art. 6) | Spreadsheet inventory | Dynamic discovery engine | Reduced misclassification risk |
| Technical Documentation (Art. 11) | Static PDF files | Documentation-as-Code in Git | Continuous audit readiness |
| Logging (Art. 12) | Partial event logs | Structured telemetry hooks | Strong traceability |
| Post-Market Monitoring (Art. 72) | Periodic review | Real-time dashboards | Faster incident response |

By 2026, enforcement authorities will expect demonstrable system-level controls — not retrospective compliance statements.
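As a rough illustration of the "dynamic discovery" row in the table above, the following sketch triages a system inventory against the Annex III areas. The area labels are shorthand paraphrases of the Annex III headings, and the inventory fields (`system_id`, `use_area`) and status strings are hypothetical. The output is a list of candidates for legal review, not a classification under Article 6.

```python
# Shorthand labels for the Annex III areas (paraphrased, not official wording).
ANNEX_III_AREAS = {
    "biometrics",
    "critical_infrastructure",
    "education",
    "employment",
    "essential_services",  # includes creditworthiness evaluation
    "law_enforcement",
    "migration_border_control",
    "justice_democratic_processes",
}


def triage(inventory: list[dict]) -> dict[str, str]:
    """Map each system_id to a triage status for follow-up by legal review."""
    result: dict[str, str] = {}
    for system in inventory:
        area = system.get("use_area")
        if area is None:
            status = "UNCLASSIFIED_NEEDS_REVIEW"
        elif area in ANNEX_III_AREAS:
            status = "CANDIDATE_HIGH_RISK"
        else:
            status = "OUTSIDE_ANNEX_III_AREAS"
        result[system["system_id"]] = status
    return result
```

Systems with no declared use area are flagged rather than silently skipped, since an incomplete inventory is itself a misclassification risk.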

Why Most AI Teams Misjudge Automation Risk Under the EU AI Act

Many teams assume compliance is a legal reporting exercise. It is not. Under the Act, particularly for Annex III high-risk systems, compliance obligations are architectural.

The blind spot lies in three areas:

- Technical Documentation (Article 11) is often treated as a narrative file, rather than a continuously updated artifact.

- Bias Governance (Article 10) is interpreted as dataset documentation, rather than automated bias drift detection.

- Logging (Article 12) is reduced to infrastructure logs, ignoring decision traceability.

For financial use cases such as transaction monitoring or fraud analytics, automation complexity increases further. A detailed sector-specific breakdown is available in our analysis of AI fraud detection compliance for European banks in 2026, which maps conformity assessment automation to PSD3 and DORA exposure.

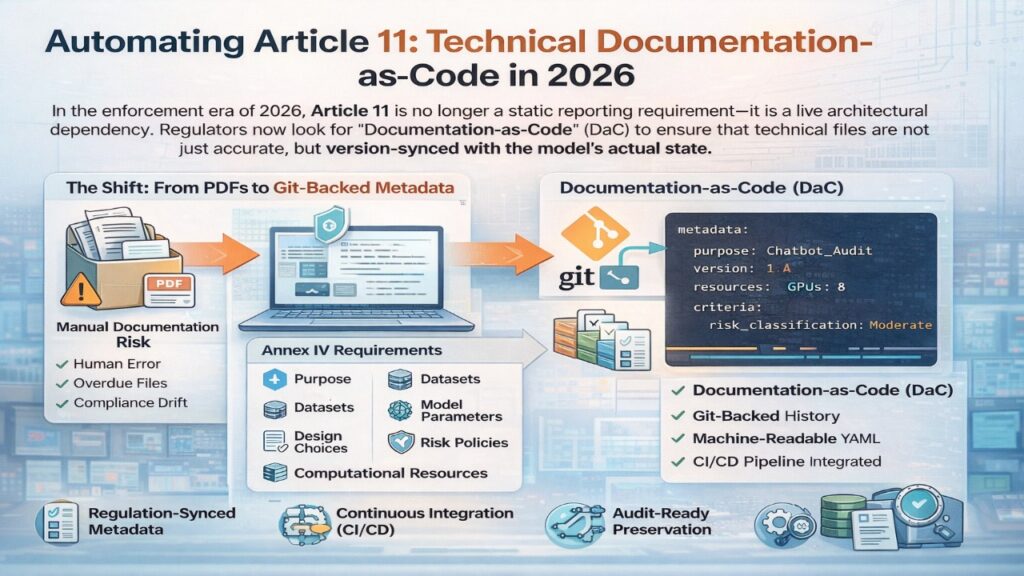

Automating Article 11: Technical Documentation as Code in 2026

In 2026, Article 11 is best treated as a live architectural dependency rather than a static reporting requirement. It requires the technical documentation to be drawn up before the system is placed on the market and to be kept up to date. “Documentation-as-Code” (DaC) is one way to meet that obligation: the technical file is versioned alongside the model, so it stays in sync with the model’s actual state.

The Shift: From PDFs to Git-Backed Metadata

Annex IV requires a granular breakdown of your AI system, from its “intended purpose” to its “computational resources.” Manual documentation for a fast-moving CI/CD pipeline is a recipe for compliance drift. In 2026, leading teams use machine-readable YAML files to auto-generate the Annex IV technical file.

Machine-Readable Metadata Template

By storing this compliance.yaml in your model’s root directory, you create a “single source of truth” for both developers and auditors:

```yaml
system_id: "eu-credit-risk-v4"
article_11_compliance:
  intended_purpose: "Automated retail credit scoring"
  interaction_hardware: ["Standard Cloud VM", "NVIDIA H100"]
  versioning:
    software_version: "2.4.1"
    firmware_requirements: "N/A"
  architecture:
    logic: "Gradient Boosted Decision Trees"
  data_lineage: "s3://compliance-bucket/v4/lineage.json"
  human_oversight:
    protocol_id: "HOSP-09"
    override_mechanism: "Manual review trigger on 0.8+ score"
```
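To show how the technical file could be generated from this metadata, here is a minimal sketch that renders a nested metadata dictionary into a Markdown draft. The `METADATA` dictionary mirrors part of the compliance.yaml template and would normally come from loading that file; the function names and the output layout are assumptions for illustration, not a format required by Annex IV.

```python
from typing import Any

# Hypothetical in-memory copy of part of compliance.yaml.
METADATA: dict[str, Any] = {
    "system_id": "eu-credit-risk-v4",
    "article_11_compliance": {
        "intended_purpose": "Automated retail credit scoring",
        "versioning": {"software_version": "2.4.1", "firmware_requirements": "N/A"},
        "architecture": {"logic": "Gradient Boosted Decision Trees"},
        "data_lineage": "s3://compliance-bucket/v4/lineage.json",
    },
}


def render_section(data: dict[str, Any], depth: int = 2) -> list[str]:
    """Turn nested metadata into Markdown headings and bullet lines."""
    lines: list[str] = []
    for key, value in data.items():
        title = key.replace("_", " ").capitalize()
        if isinstance(value, dict):
            lines.append(f"{'#' * depth} {title}")
            lines.extend(render_section(value, depth + 1))
        else:
            lines.append(f"- {title}: {value}")
    return lines


def render_technical_file(metadata: dict[str, Any]) -> str:
    """Build a Markdown draft of the technical file from the metadata."""
    header = f"# Technical file draft: {metadata['system_id']}"
    body = render_section(metadata["article_11_compliance"])
    return "\n".join([header, *body])


if __name__ == "__main__":
    print(render_technical_file(METADATA))
```

Because the draft is regenerated from the same file the linter checks, the rendered document and the repository metadata cannot drift apart silently.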

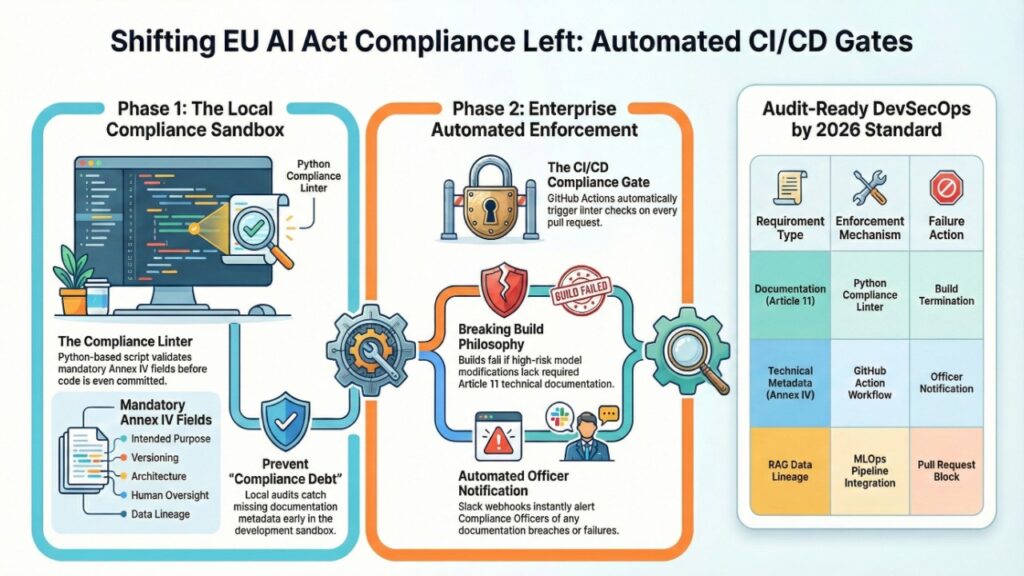

Developer’s Sandbox: Automating the Compliance Gate

To prevent “Compliance Debt,” your CI/CD pipeline must treat Article 11 and 12 requirements as breaking tests. If a pull request modifies a high-risk model without updating its technical documentation, the build should fail.
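The "fail the build when the model changes but the documentation does not" rule can be sketched as a check over the list of files changed in a pull request. The path patterns below are hypothetical and would need to match your repository layout; only the compliance.yaml file name comes from this guide.

```python
from fnmatch import fnmatchcase

# Hypothetical patterns for files that define the model's behavior.
MODEL_PATTERNS = ["models/*", "training/*", "*.pkl", "*.onnx"]
DOC_FILE = "compliance.yaml"


def touches_model(path: str) -> bool:
    """True if the path matches any model-related pattern."""
    return any(fnmatchcase(path, pattern) for pattern in MODEL_PATTERNS)


def docs_in_sync(changed_files: list[str]) -> bool:
    """Return False when model files changed but compliance.yaml did not."""
    model_changed = any(touches_model(path) for path in changed_files)
    docs_changed = DOC_FILE in changed_files
    return docs_changed or not model_changed
```

In CI, the list of changed files could come from `git diff --name-only` against the target branch, with the job exiting non-zero whenever `docs_in_sync` returns `False`.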

1. The Compliance Linter (ai_act_check.py)

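This script acts as a localized “Compliance Sandbox” for your developers. It validates that a required set of metadata fields, mapped to Annex IV, is present before code is even committed. The field list shown is an illustrative subset, so extend it to match your own technical file.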

```python
import sys

import yaml  # PyYAML

# Illustrative subset of metadata fields mapped to Annex IV for high-risk systems
MANDATORY_ANNEX_IV = [
    "intended_purpose", "versioning", "architecture",
    "human_oversight", "data_lineage",
]


def run_sandbox_audit(file_path):
    """Exit non-zero if any mandatory field is missing from the metadata file."""
    with open(file_path, "r", encoding="utf-8") as f:
        # safe_load returns None for an empty file, so fall back to {}
        data = (yaml.safe_load(f) or {}).get("article_11_compliance") or {}

    missing = [key for key in MANDATORY_ANNEX_IV if key not in data]

    if missing:
        print(f"❌ SANDBOX FAILURE: Missing mandatory Article 11 fields: {missing}")
        sys.exit(1)

    print("✅ SANDBOX PASS: Documentation metadata is audit-ready.")


if __name__ == "__main__":
    run_sandbox_audit("compliance.yaml")
```

2. The GitHub Action: Automated Enforcement

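Place this YAML in .github/workflows/compliance-gate.yml to enforce the check on every pull request across the organization. The workflow assumes the linter is stored at scripts/ai_act_check.py and that a Slack webhook URL is saved as the repository secret SLACK_COMPLIANCE_WEBHOOK.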

```yaml
name: EU AI Act Compliance Gate

on: [pull_request]

jobs:
  validate-article-11:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
      - name: Set up Python
        uses: actions/setup-python@v4
        with:
          python-version: "3.x"
      # The linter imports PyYAML, so install it before running the check
      - name: Install linter dependency
        run: pip install pyyaml
      - name: Run Compliance Linter
        run: python scripts/ai_act_check.py
      - name: Notify Compliance Officer
        if: failure()
        run: |
          curl -X POST -H 'Content-type: application/json' \
            --data '{"text":"🚨 Compliance Breach: PR #${{ github.event.number }} lacks required AI Act metadata."}' \
            "${{ secrets.SLACK_COMPLIANCE_WEBHOOK }}"
```

For enterprises deploying Retrieval-Augmented Generation (RAG) architectures, documentation must also capture retrieval data lineage. Our implementation guide on enterprise RAG implementation best practices in 2026 explains how to align documentation-as-code with MLOps pipelines.

Bias Drift Automation Under Article 10: Continuous Gate-keeping

Manual fairness reports fail under production conditions.

Instead, integrate automated validation tools (e.g., Great Expectations for data checks, Deepchecks for model checks) together with fairness metrics into CI/CD to detect bias drift:

CI Gate Example Logic

- If disparate impact < 0.8 → Block deployment

- If error rate exceeds validated baseline → Require compliance review

- If training data source changes → Trigger documentation refresh

This ensures that compliance is enforced before model deployment — not during audit panic.
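The three gate rules above can be sketched as a single function. This is a minimal illustration: the `GateInput` fields, the action names, and the use of a data-source hash to detect source changes are assumptions, and the 0.8 threshold is simply the value used in the list above.

```python
from dataclasses import dataclass


@dataclass
class GateInput:
    selection_rate_protected: float  # share of favourable outcomes, protected group
    selection_rate_reference: float  # share of favourable outcomes, reference group
    error_rate: float
    baseline_error_rate: float
    data_source_hash: str
    approved_data_source_hash: str


def disparate_impact(protected: float, reference: float) -> float:
    """Ratio of favourable-outcome rates between the two groups."""
    if reference <= 0:
        raise ValueError("reference selection rate must be positive")
    return protected / reference


def evaluate_gate(g: GateInput, di_threshold: float = 0.8) -> list[str]:
    """Return the actions the CI gate should take; an empty list means pass."""
    actions: list[str] = []
    ratio = disparate_impact(g.selection_rate_protected, g.selection_rate_reference)
    if ratio < di_threshold:
        actions.append("BLOCK_DEPLOYMENT")
    if g.error_rate > g.baseline_error_rate:
        actions.append("REQUIRE_COMPLIANCE_REVIEW")
    if g.data_source_hash != g.approved_data_source_hash:
        actions.append("REFRESH_DOCUMENTATION")
    return actions
```

Returning a list of actions rather than a single pass/fail flag lets the pipeline block deployment and open a documentation task in the same run.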

Automated Logging & Incident Reporting (Articles 12, 72 & 73)

Article 12 requires high-risk systems to record events automatically over their lifetime. A practical log schema typically captures:

- Period of use

- Input data references

- Decision outputs

- Human oversight interventions

Implement structured telemetry (e.g., OpenTelemetry) within inference APIs.

Example pseudo-workflow:

- User query processed

- Model inference generated

- Event log structured in immutable ledger

- Threshold breach → Trigger compliance alert
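The workflow above can be approximated with a hash-chained log, where each record stores the hash of the previous one so that later edits become detectable. This is a sketch under stated assumptions: the record fields, the 0.8 alert threshold, and the in-memory list are illustrative, and a hash chain is tamper-evident rather than truly immutable.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS_HASH = "0" * 64


def _digest(record: dict) -> str:
    """SHA-256 over a canonical JSON encoding of the record."""
    payload = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_event(log: list[dict], input_ref: str, output: float,
                 human_override: bool = False,
                 alert_threshold: float = 0.8) -> dict:
    """Append one inference event, linked to the previous record's hash."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "input_ref": input_ref,
        "output": output,
        "human_override": human_override,
        "alert": output >= alert_threshold,  # threshold breach flag
        "prev_hash": log[-1]["hash"] if log else GENESIS_HASH,
    }
    record["hash"] = _digest(record)  # computed before the hash key exists
    log.append(record)
    return record


def verify_chain(log: list[dict]) -> bool:
    """Recompute every hash and link; False means the log was altered."""
    prev = GENESIS_HASH
    for record in log:
        body = {k: v for k, v in record.items() if k != "hash"}
        if record["prev_hash"] != prev or _digest(body) != record["hash"]:
            return False
        prev = record["hash"]
    return True
```

Records with `alert` set to `True` are the ones a compliance alert would be triggered on, and `verify_chain` can run as a scheduled job to confirm that stored logs have not been modified.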

For systems deploying autonomous multi-step workflows, logging becomes exponentially more complex. As explored in conversational AI and agentic systems governance in 2026, distributed agents create traceability challenges that require centralized compliance orchestration.

Transparency & Watermarking Controls for Generative AI

Where high-risk or limited-risk AI generates content, transparency mechanisms become relevant.

Automated provenance marking and watermarking reduce disclosure exposure under Article 50, which covers the marking of AI-generated content. A practical implementation breakdown is provided in our review of AI watermarking tools for EU compliance in 2026, detailing how embedded signals can support traceability audits.
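As a simple illustration of provenance marking, the sketch below builds a machine-readable manifest that travels alongside generated content. It is not an embedded watermark and does not follow any particular provenance standard; the manifest fields and function names are assumptions for illustration.

```python
import hashlib


def _sha256(content: str) -> str:
    return hashlib.sha256(content.encode("utf-8")).hexdigest()


def build_provenance(content: str, system_id: str, model_version: str) -> dict:
    """Describe a piece of generated content in a sidecar manifest."""
    return {
        "ai_generated": True,
        "system_id": system_id,
        "model_version": model_version,
        "content_sha256": _sha256(content),
    }


def matches(content: str, manifest: dict) -> bool:
    """Check that the content is the one the manifest was issued for."""
    return _sha256(content) == manifest["content_sha256"]
```

A manifest like this supports traceability audits only while it stays attached to the content, which is why embedded signals are usually combined with it.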

Talent & Cross-Border Compliance Exposure in Automated Systems

Automation frameworks also affect workforce structuring.

Organizations hiring distributed AI engineers across Central and Eastern Europe must align tax structuring, liability distribution, and documentation workflows. A detailed operational breakdown is available in remote AI engineer jobs in CEE and 2026 tax liability risks, particularly where engineering decisions affect high-risk AI classification.

Compliance automation is not only technical — it is contractual and operational.

Winners vs. Exposure Divide: Automated vs. Manual Compliance (2026)

| Dimension | Manual Model | Automated Compliance Architecture |
| --- | --- | --- |
| Conformity Assessment Time | 3–6 months prep | 2–4 weeks |
| Audit Evidence Collection | Manual retrieval | Real-time artifact generation |
| Risk of Substantial Modification | High | Automatically flagged |
| Incident Reporting | Reactive | Trigger-based |
| Audit Stress | Elevated | Continuous readiness |
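One way to approach the "automatically flagged" cell for substantial modification is to fingerprint a set of watched metadata fields at approval time and compare them on every release. The watched field list here is hypothetical, and whether a flagged change actually amounts to a substantial modification remains a legal judgment.

```python
import hashlib
import json

# Hypothetical fields whose change should prompt a review.
WATCHED_FIELDS = ["intended_purpose", "architecture", "training_data_ref"]


def fingerprint(metadata: dict) -> dict[str, str]:
    """Hash each watched field so a baseline can be stored compactly."""
    return {
        field: hashlib.sha256(
            json.dumps(metadata.get(field), sort_keys=True).encode("utf-8")
        ).hexdigest()
        for field in WATCHED_FIELDS
    }


def changed_fields(approved: dict, candidate: dict) -> list[str]:
    """List the watched fields that differ from the approved baseline."""
    old, new = fingerprint(approved), fingerprint(candidate)
    return [field for field in WATCHED_FIELDS if old[field] != new[field]]
```

A non-empty result would route the release to compliance review instead of blocking it outright, since many changes will turn out not to be substantial.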

In 2026, regulators will increasingly differentiate between:

- Organizations with structured automation frameworks

- Organizations producing retrospective documentation

That divide determines enforcement intensity.

Strategic Reassessment: Should You Automate Now or Wait?

If your AI system falls under Annex III high-risk classification, waiting is economically irrational.

Automation requires:

- Refactoring documentation workflows

- Integrating compliance checks into DevSecOps

- Training cross-functional teams

- Updating governance policies

Delaying until enforcement pressure increases compresses implementation timelines and increases operational disruption.

Automating compliance is no longer optional infrastructure — it is competitive risk mitigation.

2026 Executive FAQ on Automated EU AI Act Compliance

1. Can the entire conformity assessment be automated?

Answer: Technical evidence gathering, bias validation, and documentation generation can be automated. However, certain Annex III systems still require human oversight or notified body involvement before market placement.

2. Does automation eliminate legal review?

Answer: No. Automation reduces operational burden but does not replace legal interpretation of risk classification and system boundaries.

3. What is the first system to automate?

Answer: Start with Article 11 documentation and logging. These generate the core audit artifacts regulators examine first.

4. How does automation reduce liability exposure?

Answer: Continuous monitoring reduces the risk of unreported incidents and undocumented substantial modifications.

5. What if the Digital Omnibus delays some obligations?

Answer: Even if specific obligations shift, structural documentation and logging controls remain foundational compliance expectations.

6. Is compliance automation mandatory for limited-risk systems?

Answer: Not strictly mandatory, but automation significantly reduces scaling risk as systems evolve toward higher-risk classifications.

Regulatory and Market Sources Shaping 2026 Automated EU AI Act Compliance

European Commission – EU Artificial Intelligence Act (Regulation (EU) 2024/1689)

Primary legal framework governing AI system risk classification (Article 6 & Annex III), technical documentation (Article 11), logging (Article 12), transparency (Article 13), and provider conformity assessment obligations.

https://eur-lex.europa.eu/

European AI Office – Implementation & Enforcement Guidance (2026 Updates)

Operational guidance on post-market monitoring, serious incident reporting procedures, and supervisory coordination mechanisms during the enforcement phase.

https://digital-strategy.ec.europa.eu/

European Data Protection Board (EDPB) – AI & GDPR Interplay Guidance

Clarifies how Article 10 data governance obligations under the AI Act intersect with GDPR principles such as data minimization, fairness, and automated decision-making safeguards.

https://www.edpb.europa.eu/

European Union – NIS2 Directive (Directive (EU) 2022/2555)

Cybersecurity risk management and incident reporting requirements impacting AI infrastructure providers and high-risk system operators.

https://eur-lex.europa.eu/

ISO/IEC 42001 – Artificial Intelligence Management System Standard

International AI governance standard increasingly used as an operational alignment framework for AI Act compliance automation workflows.

https://www.iso.org/

Automated EU AI Act Compliance in 2026 Is an Architectural Decision — Not a Legal Checkbox

By 2026, automated compliance under the EU AI Act is no longer a documentation exercise. It is an infrastructure mandate.

Organizations treating conformity assessment as a static legal deliverable will face escalating exposure — from delayed market access and notified body bottlenecks to post-market incident scrutiny and cross-regulatory escalation under NIS2 and sectoral regimes.

The competitive divide is now clear:

- Lagging organizations automate reports.

- Resilient organizations automate controls.

Automated risk classification, documentation-as-code, bias gatekeeping, logging pipelines, and real-time incident triggers must be embedded directly into CI/CD and MLOps architectures. Anything less creates structural fragility.

The August 2, 2026 enforcement threshold is not simply a compliance deadline. It is a systems test:

Can your AI infrastructure produce auditable evidence on demand?

If the answer requires manual compilation, legal interpretation, or spreadsheet reconciliation, your architecture is misaligned with the enforcement environment.

The organizations that will lead in 2026 are not those that interpret the AI Act most accurately.

They are those that encode it directly into production systems.

Compliance automation is no longer optional optimization.

It is the operating system for high-risk AI in Europe.

Author Bio

Saameer is a European tech analyst specializing in AI governance, regulatory automation, and enterprise AI infrastructure. His work focuses on translating the EU AI Act, DORA, and cross-border compliance frameworks into executable engineering workflows for high-risk AI systems. Saameer writes at the intersection of regulatory strategy and production AI architecture, helping technical and compliance teams operationalize audit readiness before enforcement deadlines.

Legal Disclaimer: The information provided in this article is for educational and informational purposes only and does not constitute legal advice. While this guide outlines technical strategies for automating compliance with the EU AI Act (Regulation (EU) 2024/1689), the regulatory landscape in 2026 remains subject to updates from the European AI Office and the potential “Digital Omnibus” package. Implementing these technical controls does not guarantee a successful conformity assessment. Organizations should consult with qualified legal counsel and regulatory experts to ensure their specific AI systems meet all current and sector-specific obligations (e.g., DORA, NIS2, and GDPR).

Transparency Note: Human-AI Collaboration

In alignment with the transparency principles of the EU AI Act, we disclose the following regarding the creation of this resource:

- Authorship: This article was co-authored by Saameer (Technical Analyst) in collaboration with Gemini, an AI system.

- Human Oversight: All legal citations, technical code snippets (Python/YAML), and strategic recommendations have been reviewed and verified by human experts for accuracy and relevance to the 2026 regulatory environment.

- AI Assistance: Generative AI was utilized to assist in structuring data tables, generating code templates for the “Developer’s Sandbox,” and optimizing the text for search engine visibility.

- Data Source: This content is based on the official text of the EU AI Act and supplementary guidance published by the European Commission as of February 2026.