The 2026 Infrastructure Pivot: From Chips to Power and Sovereignty

In 2025, the AI race was about getting GPUs. In 2026, the AI race in Europe is about where those GPUs are allowed to run — and whether the power grid can support them.

Nvidia’s Blackwell platform (B200 and B300) is now widely available across hyperscalers and sovereign data centers, but a new set of challenges has emerged: power, compliance, and infrastructure readiness. At the same time, Nvidia’s next-generation Vera Rubin platform is scheduled for late 2026, which forces companies planning AI infrastructure today to decide whether to build now or wait.

This guide explains what is really happening in Europe’s AI infrastructure market, and whether organizations should deploy Blackwell now or wait for Rubin.

The Rise of the European AI Factory

Europe is rapidly building what industry leaders call “Sovereign AI Factories” — large-scale AI infrastructure designed to keep data, compute, and models inside regional borders for compliance and security reasons.

These facilities are not traditional data centers. They are AI-first infrastructure hubs designed for:

- Large language models

- Industrial digital twins

- Autonomous AI agents

- Enterprise search and RAG systems

- Simulation and robotics

One of the biggest drivers of this growth is the rise of persistent enterprise AI workloads, such as autonomous planning systems and AI agents that operate continuously in the background. These systems create constant inference demand rather than occasional compute spikes; AI agents used for project management, for example, run around the clock and generate a steady infrastructure load.

This shift from batch AI → always-on AI is one of the main reasons Europe is investing heavily in local AI infrastructure.

The Munich €1B Industrial AI Cloud Case Study

One of the most important European AI infrastructure projects is the Munich Industrial AI Cloud, a partnership between telecom providers, enterprise software companies, and Nvidia.

Key Infrastructure Specs:

| Component | Specification |
|---|---|
| GPUs | 10,000+ Blackwell GPUs |
| Compute | ~0.5 ExaFLOPS |
| Use Case | Industrial AI + Digital Twins |
| Location | Munich, Germany |
| Purpose | Sovereign EU AI infrastructure |

This facility allows European companies to run:

- Factory digital twins

- Supply chain simulations

- Industrial AI copilots

- Autonomous planning systems

The biggest advantage is data sovereignty — companies like manufacturers and logistics providers can run AI workloads without data leaving the EU.

Blackwell Ultra vs Vera Rubin: The 2026 Crossover Year

2026 is a transition year where Blackwell Ultra (B300) and Vera Rubin (R100) will overlap.

Nvidia GPU Comparison (2026)

| Feature | Blackwell B200 | Blackwell B300 | Rubin R100 |
|---|---|---|---|
| Memory | 192 GB HBM3e | 288 GB HBM3e | 288 GB HBM4 |
| Memory Bandwidth | 8.0 TB/s | 8.0 TB/s | 22.2 TB/s |
| FP4 Inference | 9 PFLOPS | 15 PFLOPS | 50 PFLOPS |
| Power (per GPU) | ~1.2 kW | 1.4 kW | 2.3 kW |
| Availability | Available Now | 2026 | Late 2026 |

Technical Note: The Vera Advantage. While the R100 GPU gets most of the attention, the Vera CPU (featuring 88 custom Olympus cores) is a key part of the platform’s 2026 performance story. For agentic workloads, Vera provides 1.2 TB/s of memory bandwidth, nearly 3x the per-core bandwidth of traditional CPUs. That bandwidth targets the “CPU bottleneck” that often stalls complex reasoning chains in Blackwell-based systems, where CPU-side steps such as orchestration and tool calls can leave the GPUs waiting.

The Big Shift: The Memory Wall

The biggest improvement in Rubin is HBM4 memory, which increases bandwidth from 8 TB/s → 22.2 TB/s.

This matters because modern AI workloads are moving toward reasoning and agentic AI, which require massive memory bandwidth rather than just raw compute.

Specifics on Inference Scaling

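Why 22 TB/s Matters. At 22.2 TB/s, the Rubin platform is aimed at the Trillion-Parameter Era. A 400B-parameter model at FP4 precision (4 bits, or half a byte, per parameter) needs ~200GB of memory just for its weights. That exceeds B200’s 192GB but fits within the 288GB of a single B300 or Rubin GPU, with headroom left for the KV cache, so neither has to shard the model across GPUs and pay the communication latency that slows autonomous agents on smaller-memory clusters. Because B300 and Rubin have the same capacity, Rubin’s real advantage for such a model is bandwidth: each decoding step reads the full set of weights from memory, so roughly 2.8x the bandwidth allows up to roughly 2.8x faster memory-bound token generation. A full trillion-parameter model (~500GB at FP4) still has to be split across multiple GPUs.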
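The arithmetic behind this paragraph can be checked with a short Python sketch. It computes the weight footprint of a model at a given precision and the memory-bound floor on decode latency, using the capacity and bandwidth figures from the comparison table. The helpers `weight_gb` and `min_ms_per_token` are hypothetical, and the model is deliberately simplified: batch size 1, every weight read once per generated token, and no allowance for KV-cache traffic, activations, or compute time, so real-world speeds will be lower.

```python
# Back-of-envelope check on the 400B-parameter example.
# Simplifying assumptions: batch size 1, all weights streamed once per token,
# KV-cache reads, activations, and compute time ignored.

def weight_gb(params_billion: float, bits_per_param: float) -> float:
    """Memory needed for the weights alone, in GB (1 GB = 1e9 bytes)."""
    return params_billion * bits_per_param / 8  # billions of bytes == GB

def min_ms_per_token(weights_gb: float, bandwidth_tb_s: float) -> float:
    """Memory-bound lower limit on decode latency: time to stream all weights once."""
    seconds = weights_gb / (bandwidth_tb_s * 1000)  # GB / (GB/s)
    return seconds * 1000

# HBM capacity (GB) and bandwidth (TB/s) per GPU, from the comparison table
GPUS = {"B200": (192, 8.0), "B300": (288, 8.0), "R100": (288, 22.2)}

weights = weight_gb(400, 4)  # 400B parameters at FP4
for name, (hbm_gb, bw_tb_s) in GPUS.items():
    ms = min_ms_per_token(weights, bw_tb_s)
    print(f"{name}: fits on one GPU={weights <= hbm_gb}, "
          f"headroom={hbm_gb - weights:+.0f} GB, "
          f">= {ms:.1f} ms/token (<= {1000 / ms:.0f} tokens/s)")
```

With the table’s figures, the 200GB of weights overshoot B200 by about 8GB but fit on B300 or R100 with 88GB to spare; the bandwidth floor falls from 25 ms per token (at most 40 tokens/s) on B300 to about 9 ms per token (at most about 111 tokens/s) on R100. Batching many requests amortizes each weight read across those requests, so aggregate throughput can be much higher than this single-stream bound.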

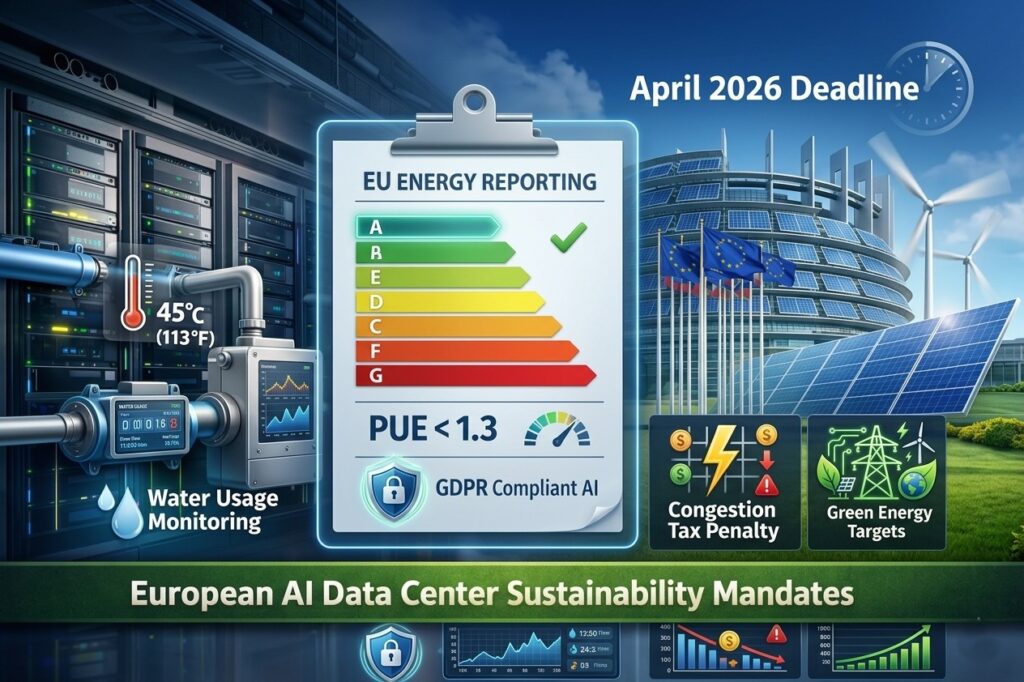

EU AI Act, Energy Ratings, and Compliance

Starting in April 2026, the EU is implementing new data center energy reporting rules. These rules require AI data centers to report:

- Power Usage Effectiveness (PUE)

- Water usage

- Carbon intensity

- Energy efficiency metrics

This means companies cannot deploy AI infrastructure without considering energy availability and sustainability.
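As a rough illustration of what the reported figures measure, the sketch below computes three common facility KPIs from annual meter readings: PUE (facility energy over IT energy), WUE (litres of water per IT kWh), and CUE (kg CO2e per IT kWh). These follow the widely used definitions popularized by The Green Grid; the exact formulas and thresholds in the EU reporting rules are not given in this guide, so treat the mapping as an assumption. The `AnnualReadings` class and all input values are hypothetical.

```python
from dataclasses import dataclass

@dataclass
class AnnualReadings:
    """One year of (hypothetical) meter readings for a single data center."""
    facility_kwh: float          # all energy entering the site
    it_kwh: float                # energy delivered to servers, storage, and network gear
    water_litres: float          # site water consumption, mostly for cooling
    grid_kg_co2e_per_kwh: float  # average carbon intensity of the electricity supplied

def pue(r: AnnualReadings) -> float:
    """Power Usage Effectiveness: 1.0 would mean zero cooling/power-conversion overhead."""
    return r.facility_kwh / r.it_kwh

def wue(r: AnnualReadings) -> float:
    """Water Usage Effectiveness, in litres per kWh of IT energy."""
    return r.water_litres / r.it_kwh

def cue(r: AnnualReadings) -> float:
    """Carbon Usage Effectiveness, in kg CO2e per kWh of IT energy."""
    return r.facility_kwh * r.grid_kg_co2e_per_kwh / r.it_kwh

# Illustrative numbers only
site = AnnualReadings(facility_kwh=120e6, it_kwh=100e6,
                      water_litres=180e6, grid_kg_co2e_per_kwh=0.35)
print(f"PUE={pue(site):.2f}  WUE={wue(site):.2f} L/kWh  CUE={cue(site):.2f} kgCO2e/kWh")
```

Note that CUE folds in PUE: every kWh of cooling or conversion overhead is emitted at the grid’s carbon intensity without doing any IT work, which is one reason efficiency and carbon reporting tend to move together.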

Compliance requirements also affect enterprise AI software. For example, organizations deploying AI productivity tools that must comply with frameworks such as SOC 2 need encryption, audit logging, and data residency controls, all of which add compute and infrastructure overhead.

BlueField-4 DPUs

Infrastructure-Level Security. In 2026, compliance extends to the network fabric. BlueField-4 DPUs in these new “AI Factories” provide hardware-accelerated encryption of data in transit (IPsec/PSP). In multi-tenant environments, this keeps each tenant’s sovereign data encrypted and logically isolated on the wire, and because the DPU handles the cryptography itself, the work does not consume host CPU cycles or take resources away from the Rubin GPUs.

Power, Cooling, and the NVL72 Problem

The biggest infrastructure challenge in 2026 is not chips — it is power density.

The Financial ROI of Liquid Cooling

Moving to liquid cooling isn’t just about heat; it’s about the bottom line. In Europe’s energy-strained markets, Direct-to-Chip (D2C) cooling can reduce facility energy consumption by 18–27%. By removing most high-velocity fans (which can consume up to 30% of a 40kW air-cooled rack’s power), operators can redirect that energy to actual compute, lowering the Cost-per-Token by a further 10–15%.
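A minimal sketch of how a lower PUE flows into cost per token, counting electricity only. The function name, the 130 kW rack, the EUR 0.20/kWh tariff, the 50,000 tokens/s throughput, and the PUE values of 1.5 (air) and 1.2 (liquid) are all hypothetical. IT load and throughput are held fixed, so the sketch captures the facility-overhead saving but not the fan-power reclamation described above.

```python
def energy_cost_per_million_tokens(it_kw: float, pue: float,
                                   eur_per_kwh: float, tokens_per_s: float) -> float:
    """Electricity cost (EUR) to generate one million tokens at a steady IT load."""
    facility_kw = it_kw * pue                 # IT load plus cooling/conversion overhead
    eur_per_hour = facility_kw * eur_per_kwh  # kW sustained for one hour = kWh
    tokens_per_hour = tokens_per_s * 3600
    return eur_per_hour / tokens_per_hour * 1e6

# Hypothetical inputs: one 130 kW rack, EUR 0.20/kWh, 50,000 tokens/s of throughput
air = energy_cost_per_million_tokens(130, pue=1.5, eur_per_kwh=0.20, tokens_per_s=50_000)
liquid = energy_cost_per_million_tokens(130, pue=1.2, eur_per_kwh=0.20, tokens_per_s=50_000)
print(f"air: EUR {air:.4f}/M tokens, liquid: EUR {liquid:.4f}/M tokens, "
      f"saving {1 - liquid / air:.0%}")
```

Because IT load and throughput are fixed here, the saving is simply 1 - 1.2/1.5 = 20%, which falls inside the 18–27% range quoted above; redirecting freed fan power to compute would raise throughput and lower the cost per token further.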

Rack Power Comparison

| System | GPUs | Rack Power |
|---|---|---|
| Traditional Rack | N/A | 5–10 kW |
| HGX Rack | 8 GPUs | 10–15 kW |
| DGX Rack | 8 GPUs | 15–20 kW |
| GB200 NVL72 | 72 GPUs | 120–150 kW |
| Rubin NVL72 | 72 GPUs | 180–220 kW |

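Most European data centers were designed for racks in the 5–10 kW range, not 150 kW, so new AI factories need liquid cooling at the rack level and high-capacity grid connections at the site level, which pushes them toward locations close to power infrastructure.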
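To put the rack figures in site-level terms, the sketch below estimates grid demand for a facility the size of the Munich Industrial AI Cloud (10,000+ GPUs). It assumes, hypothetically, that every GPU sits in an NVL72 rack and that the site runs at a PUE of 1.2; the actual rack configuration and PUE of that facility are not stated in this guide, and the helper names are illustrative.

```python
import math

def nvl72_racks(gpus: int, gpus_per_rack: int = 72) -> int:
    """Racks needed if every GPU sits in a 72-GPU rack-scale system."""
    return math.ceil(gpus / gpus_per_rack)

def site_power_mw(gpus: int, rack_kw: float, pue: float) -> float:
    """Grid power the site must draw: total rack IT load times PUE, in MW."""
    return nvl72_racks(gpus) * rack_kw * pue / 1000

# Hypothetical: a 10,000-GPU site built entirely from NVL72 racks, PUE of 1.2
for label, low_kw, high_kw in [("GB200 NVL72", 120, 150), ("Rubin NVL72", 180, 220)]:
    low = site_power_mw(10_000, low_kw, pue=1.2)
    high = site_power_mw(10_000, high_kw, pue=1.2)
    print(f"{label}: {nvl72_racks(10_000)} racks, ~{low:.0f}-{high:.0f} MW from the grid")
```

Under those assumptions the 10,000 GPUs need 139 racks, drawing roughly 20–25 MW with GB200 NVL72 and roughly 30–37 MW with Rubin NVL72, more than ten times what the same number of 10 kW traditional racks would draw.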

Electricity is now the primary limiting factor for AI expansion, which is why understanding power requirements for AI data centers is becoming a critical part of infrastructure planning.

Lease Blackwell or Wait for Rubin? (2026 Decision Guide)

Decision Matrix

| Use Case | Recommended Hardware |
|---|---|
| Enterprise RAG | Blackwell B200 |
| Sovereign AI Factory | Blackwell B300 |
| Agentic AI | Rubin R100 |
| Batch Training | H100/H200 |
| Digital Twins | Blackwell B300 |

Strategic Advice

- If you need infrastructure now → Deploy Blackwell

- If your workloads are agentic / reasoning heavy → Wait for Rubin

- If power is limited → Blackwell is easier to deploy

- If building long-term AI factory → Plan for Rubin

Strategic Comparison Matrix: 2026 GPU Landscape

| Feature | H200 | Blackwell B200 | Rubin R100 |
|---|---|---|---|
| Best For | Budget Training | Enterprise AI | Agentic AI |
| Memory Bandwidth | 4.8 TB/s | 8.0 TB/s | 22.2 TB/s |
| Power | 700W | 1,200W | 2,300W |
| Cooling | Air/Liquid | Liquid | Warm Water Liquid |
| Availability | High | High | Late 2026 |

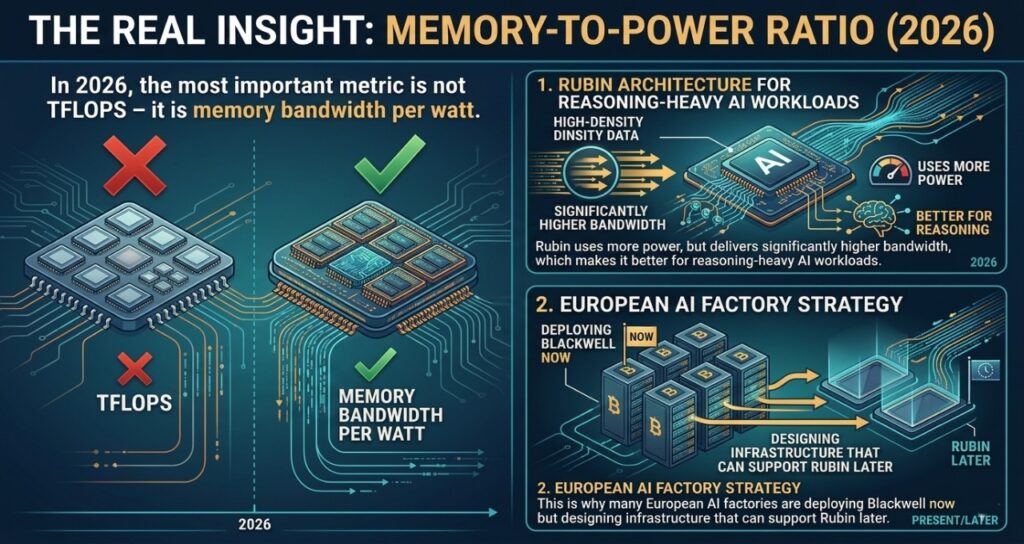

The Real Insight: Memory-to-Power Ratio

In 2026, the most important metric is not TFLOPS — it is memory bandwidth per watt.

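Rubin draws about 1.9x the power of B200 (2.3 kW vs 1.2 kW) but delivers about 2.8x the memory bandwidth (22.2 TB/s vs 8.0 TB/s), roughly 45% more bandwidth per watt, which makes it better suited to reasoning-heavy AI workloads.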

This is why many European AI factories are deploying Blackwell now but designing infrastructure that can support Rubin later.
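The memory-to-power ratio can be computed directly from the figures in this guide’s comparison tables. This short sketch divides each GPU’s memory bandwidth by its listed power and ranks the results; `SPECS` and `gb_s_per_watt` are hypothetical names, and listed per-GPU power is used as a stand-in for the power actually drawn under load.

```python
# Memory bandwidth (TB/s) and per-GPU power (kW), as listed in this guide's tables
SPECS = {
    "H200": (4.8, 0.7),
    "B200": (8.0, 1.2),
    "B300": (8.0, 1.4),
    "R100": (22.2, 2.3),
}

def gb_s_per_watt(bandwidth_tb_s: float, power_kw: float) -> float:
    """Memory bandwidth per watt. TB/s per kW equals GB/s per W (both scale by 1000)."""
    return bandwidth_tb_s / power_kw

ranked = sorted(SPECS, key=lambda gpu: gb_s_per_watt(*SPECS[gpu]), reverse=True)
for gpu in ranked:
    print(f"{gpu}: {gb_s_per_watt(*SPECS[gpu]):.2f} GB/s per W")

r100_vs_b200 = gb_s_per_watt(*SPECS["R100"]) / gb_s_per_watt(*SPECS["B200"])
print(f"R100 vs B200: {r100_vs_b200:.2f}x bandwidth per watt")
```

With these numbers R100 delivers about 9.7 GB/s per watt, roughly 45% more than B200 at about 6.7, while H200 (about 6.9) slightly edges out both Blackwell parts.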

Frequently Asked Questions (FAQ)

1. Is Nvidia Blackwell already obsolete?

No. Blackwell is the primary production GPU for 2026. Rubin is expected to dominate agentic AI workloads starting in 2027.

2. Can Blackwell run in air-cooled data centers?

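Only in limited configurations. Air-cooled 8-GPU B200 servers exist, but rack-scale systems such as GB200 NVL72 are liquid-cooled, and most dense Blackwell deployments need liquid cooling to run efficiently and meet EU energy standards.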

3. What is a Sovereign AI Factory?

A sovereign AI factory is a regional AI data center designed to keep data, compute, and AI models within a specific country or region for compliance and security.

4. What is the biggest limitation for AI infrastructure in Europe?

Power availability and grid connection delays — not GPU supply.

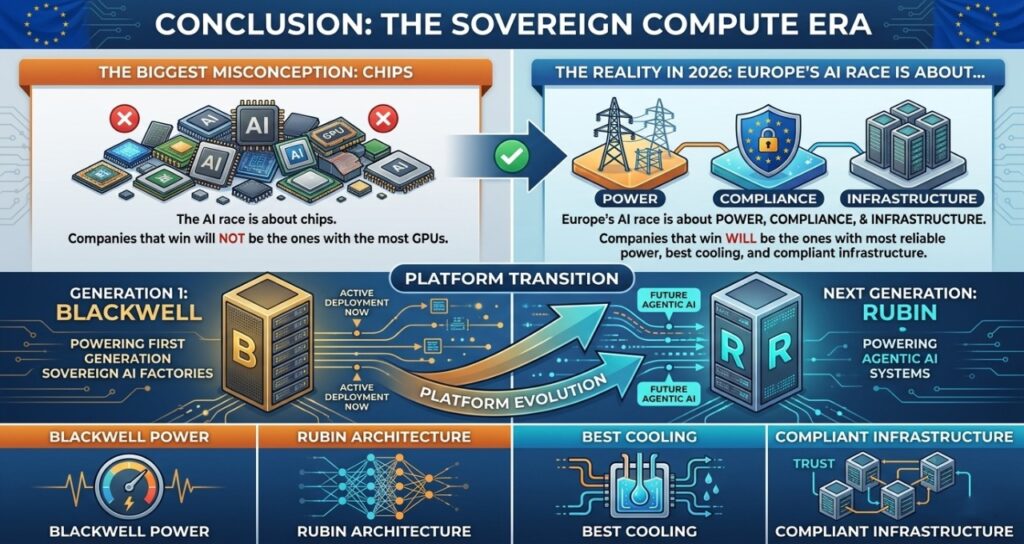

Conclusion: The Sovereign Compute Era

The biggest misconception about the AI race is that it is about chips. In reality, the AI race in 2026 — especially in Europe — is about power, compliance, and infrastructure.

Blackwell is the platform that will power Europe’s first generation of sovereign AI factories, but Rubin is the architecture that will power the next generation of agentic AI systems.

The companies that win in AI will not be the ones with the most GPUs — they will be the ones with the most reliable power, the best cooling, and compliant infrastructure.

Editorial Methodology & AI Disclosure

Tech Plus Trends utilizes a “Human-in-the-Loop” research methodology. While advanced AI systems were used to aggregate real-time regulatory filings and coordinate multi-language infrastructure reports, all strategic conclusions—specifically the “Memory-to-Power Ratio” and the “Rubin Transition Strategy”—are original editorial insights from our senior infrastructure analysts.

Disclaimer: This guide is for strategic and informational purposes only. Local grid constraints, PPA (Power Purchase Agreement) pricing, and hardware availability vary significantly by region. Consult with a certified electrical engineer before committing capital to multi-megawatt AI deployments.

Authority Bio

Saameer is the Founder and Editor-in-Chief of Tech Plus Trends, a premier platform dedicated to the intersection of AI infrastructure, agentic commerce, and global digital policy. With a specialized focus on the “Silicon-based Workforce,” Saameer analyzes the physical constraints of AI—from 132kW rack densities and GB200 deployments to the geopolitical shifts in European and US energy grids. His work bridges the gap between high-level AI innovation and the real-world infrastructure required to power it.