Enterprises deploying agentic AI systems in 2026 face a new challenge: controlling token economics and inference costs across autonomous multi-agent workflows.

Artificial intelligence is evolving rapidly. What started as simple prompt-response systems has transformed into agentic AI architectures capable of autonomous planning, reasoning, and execution. These systems can automate complex business workflows that previously required human specialists.

However, while agentic AI unlocks enormous productivity gains, it also introduces a new economic challenge: token consumption and inference cost management.

Every reasoning step, API call, and contextual memory expansion consumes tokens. When organizations deploy large-scale autonomous agents, these token costs multiply quickly. Without proper cost engineering strategies, even highly effective AI systems can become financially unsustainable.

In 2026, enterprises are no longer asking “How powerful is this AI model?” Instead, they are asking “How efficiently can we run AI at scale?”

This shift has given rise to a new discipline: AI cost engineering and token economics.

What is Agentic AI Cost Engineering?

Agentic AI cost engineering is the discipline of optimizing token usage, inference cycles, and model routing to minimize the operational cost of autonomous AI systems.

It combines AI architecture design, token budgeting strategies, and infrastructure optimization to ensure that large-scale agentic systems remain economically sustainable.

The Rise of Agentic AI Systems

Agentic AI systems differ fundamentally from traditional AI applications.

Instead of executing a single prompt, an agentic system can:

- break tasks into subtasks

- reason about solutions

- interact with tools and databases

- verify outputs and correct errors

This workflow resembles how human professionals approach complex problems.

As explored in agentic AI workflow automation for enterprises, modern enterprise platforms are increasingly deploying autonomous agents to automate operations such as financial analysis, software debugging, research synthesis, and customer service management.

These systems dramatically increase productivity. However, they also introduce multiple layers of token consumption, which significantly increases operational costs if not optimized.

Understanding the True Cost Stack of Agentic AI

Many organizations initially assume that AI costs are determined primarily by token pricing per API call.

In reality, the economics of agentic AI involve a far more complex cost structure.

The full cost stack typically includes:

- Prompt generation tokens

- Context memory tokens

- Agent reasoning loops

- Tool interaction calls

- Output verification cycles

Each layer increases token consumption.

For example, a simple AI prompt might cost about a cent. But an autonomous agent performing multiple reasoning cycles can generate tens of thousands of tokens before completing a task.

This creates a hidden phenomenon known as the Agentic Inference Tax.

Example cost expansion:

| Task Stage | Tokens Used | Approximate Cost |
|---|---|---|
| Initial prompt | 1,000 | $0.01 |
| Reasoning loops | 12,000 | $0.12 |
| Verification | 18,000 | $0.18 |
| Total | 31,000 | $0.31 |

What begins as a small request can easily evolve into a significant compute cost.
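The arithmetic behind this kind of table is tokens multiplied by a per-token rate. The sketch below assumes a flat blended rate of $10 per million tokens, which is the rate the first two rows imply. Real providers price input and output tokens separately, and the rate differs by model.

```python
# Hypothetical flat rate; real providers price input and output tokens separately.
RATE_PER_MILLION_USD = 10.0

stages = {
    "Initial prompt": 1_000,
    "Reasoning loops": 12_000,
    "Verification": 18_000,
}

def stage_cost(tokens: int, rate_per_million: float = RATE_PER_MILLION_USD) -> float:
    """Cost in USD for a number of tokens at a flat per-million-token rate."""
    return tokens * rate_per_million / 1_000_000

costs = {name: stage_cost(tokens) for name, tokens in stages.items()}
total_tokens = sum(stages.values())
total_cost = sum(costs.values())

for name, cost in costs.items():
    print(f"{name:<16} ${cost:.2f}")
print(f"{'Total':<16} ${total_cost:.2f} ({total_tokens:,} tokens)")
```

At this rate the three stages come to $0.31 for 31,000 tokens. The verification stage alone costs more than the other two combined.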

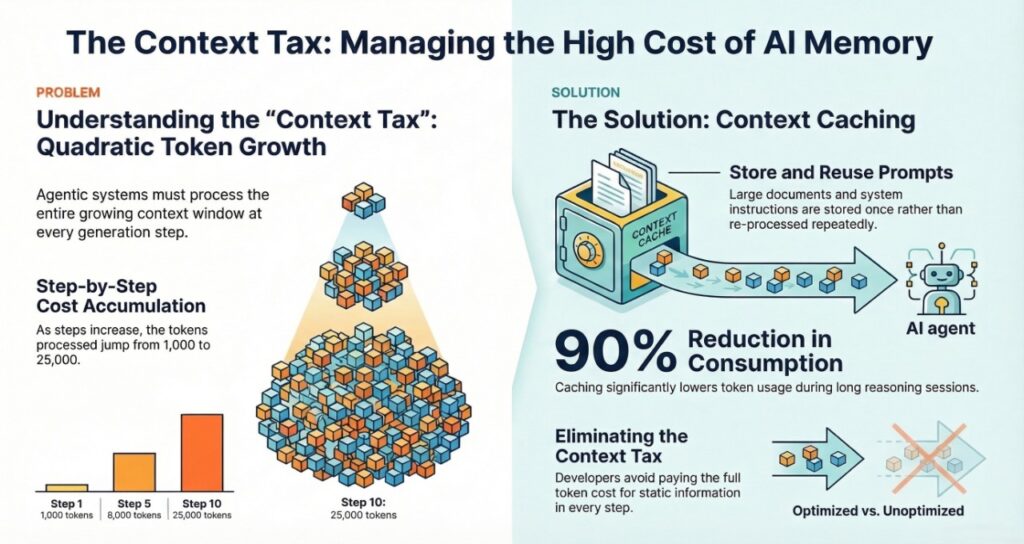

The Context Tax: Why Memory Is Expensive

One of the biggest cost drivers in agentic systems is context expansion.

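Large language models process the entire context window each time they generate output. When agents accumulate conversation history or memory across multiple steps, this context grows continuously. Every new step pays again for everything that came before it.

Because each step reprocesses the full accumulated context, the total number of tokens processed over a session grows roughly quadratically with the number of steps. This holds even if each step adds only a fixed amount of new content.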

Example:

| Step | Context Size | Tokens Processed at This Step |
|---|---|---|
| Step 1 | 1,000 tokens | 1,000 |
| Step 5 | 8,000 tokens | 8,000 |
| Step 10 | 25,000 tokens | 25,000 |

This effect is known as the context tax.
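The following example shows how reprocessing the whole context compounds. It models a hypothetical agent whose context grows by a fixed amount at each step. The sketch assumes that every step appends the same number of tokens and that the model rereads the full context at every step, with no caching.

```python
def cumulative_tokens(steps: int, tokens_per_step: int) -> int:
    """Total tokens processed over a session when the context grows linearly.

    At step k the context holds k * tokens_per_step tokens and the model
    reprocesses all of it, so the total is tokens_per_step * (1 + 2 + ... + steps).
    """
    total = 0
    context = 0
    for _ in range(steps):
        context += tokens_per_step  # new history appended this step
        total += context            # the whole context is reprocessed
    return total

for n in (5, 10, 20):
    print(n, cumulative_tokens(n, 1_000))
```

Doubling the session from 10 to 20 steps raises the total from 55,000 to 210,000 tokens, close to four times as many. That is the quadratic behavior described above.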

To control this cost, developers increasingly rely on context caching.

Caching stores a stable prompt prefix, such as system instructions or large reference documents, with the provider and reuses it across calls. Those tokens are billed at a discounted rate instead of the full input price on every call.

Some providers discount cached input tokens by up to 90 percent, which can cut input costs sharply in long reasoning sessions.
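You can roughly estimate the savings by pricing the cached prefix separately from the fresh tokens in each call. The sketch below rests on four hypothetical assumptions:

- a 50,000-token static prefix
- 2,000 new tokens per call
- a base input price of $1.50 per million tokens
- cached reads billed at 10 percent of the base price

It ignores output tokens and any cache-write premium. Actual discounts vary by provider.

```python
BASE_INPUT_PER_M = 1.50   # hypothetical uncached input price, USD per 1M tokens
CACHED_FRACTION = 0.10    # assumed: cached reads cost 10% of the base price

def session_input_cost(calls: int, prefix_tokens: int, fresh_tokens: int,
                       use_cache: bool) -> float:
    """Input-token cost of a session in which every call resends the same prefix."""
    per_token = BASE_INPUT_PER_M / 1_000_000
    total = 0.0
    for call in range(calls):
        # The first call pays full price for the prefix, which populates the cache.
        cached = use_cache and call > 0
        prefix_rate = per_token * CACHED_FRACTION if cached else per_token
        total += prefix_tokens * prefix_rate + fresh_tokens * per_token
    return total

uncached = session_input_cost(20, 50_000, 2_000, use_cache=False)
cached = session_input_cost(20, 50_000, 2_000, use_cache=True)
print(f"uncached ${uncached:.4f}  cached ${cached:.4f}  saving {1 - cached / uncached:.0%}")
```

In this made-up 20-call session the saving is about 82 percent. It approaches the provider's cache discount as the static prefix dominates each call and the session grows longer.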

Inference Budgeting: The Core of AI Cost Engineering

The most effective strategy for controlling AI costs is inference budgeting.

Instead of allowing agents unlimited reasoning cycles, enterprises allocate token budgets to specific stages of an AI workflow.

Example architecture:

| Stage | Model Type | Token Budget |
|---|---|---|
| Planning | Large reasoning model | 10,000 tokens |
| Execution | Lightweight model | 1,000 tokens |
| Verification | Mini model | 500 tokens |

This design pattern is known as Plan-and-Execute architecture.

The expensive model handles complex planning tasks, while cheaper models perform routine execution steps.

Depending on the workload, this approach can reduce operational costs by 70–90 percent.
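You can enforce a per-stage budget with a small ledger that each stage charges before it calls a model. This minimal sketch uses the budgets from the Plan-and-Execute table. `StageBudget` and `BudgetExceeded` are hypothetical names, and in a real system the token counts would come from the provider's usage metadata.

```python
from dataclasses import dataclass, field

class BudgetExceeded(RuntimeError):
    """Raised when a stage tries to spend more tokens than it was allocated."""

@dataclass
class StageBudget:
    limits: dict[str, int]                                # stage name -> token budget
    spent: dict[str, int] = field(default_factory=dict)   # tokens used so far

    def charge(self, stage: str, tokens: int) -> int:
        """Record token usage for a stage and return the remaining budget."""
        used = self.spent.get(stage, 0) + tokens
        if used > self.limits[stage]:
            raise BudgetExceeded(f"{stage}: {used} > {self.limits[stage]} tokens")
        self.spent[stage] = used
        return self.limits[stage] - used

# Budgets taken from the Plan-and-Execute table.
budget = StageBudget({"planning": 10_000, "execution": 1_000, "verification": 500})

print(budget.charge("planning", 7_500))    # 2500 tokens of planning budget left
print(budget.charge("execution", 800))     # 200 left
try:
    budget.charge("verification", 900)     # over the 500-token cap
except BudgetExceeded as err:
    print("stopped:", err)
```

A rejected charge is not recorded, so the ledger reflects only work that was allowed to run.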

Preventing Infinite Loop Token Burn

Autonomous agents occasionally encounter failures that trigger repeated reasoning cycles.

These loops may occur when:

- tools fail to execute correctly

- instructions are ambiguous

- agents attempt endless self-correction

When this happens, an agent can burn thousands of tokens within minutes.

To prevent this problem, modern AI systems implement defensive cost guardrails.

Common strategies include:

- token bucket limits

- iteration caps on reasoning cycles

- human-in-the-loop escalation triggers

For instance, an agent might be configured to stop execution if it exceeds 15 reasoning attempts.
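Here is a short sketch of the token bucket limit mentioned above. Each agent gets a bucket that refills at a fixed rate, and a call runs only if enough tokens remain. The capacity and refill rate are illustrative assumptions, and the caller passes the clock explicitly so the behavior is deterministic. A production limiter would also need persistence and concurrency control.

```python
from dataclasses import dataclass

@dataclass
class TokenBucket:
    capacity: int            # maximum tokens the agent can hold in reserve
    refill_per_sec: float    # tokens restored per second
    level: float = 0.0       # current tokens available
    last: float = 0.0        # timestamp of the last update, in seconds

    def __post_init__(self) -> None:
        self.level = float(self.capacity)  # start with a full bucket

    def try_consume(self, tokens: int, now: float) -> bool:
        """Refill based on elapsed time, then spend `tokens` if available."""
        elapsed = max(0.0, now - self.last)
        self.level = min(self.capacity, self.level + elapsed * self.refill_per_sec)
        self.last = now
        if tokens <= self.level:
            self.level -= tokens
            return True
        return False

# Hypothetical limit: 50,000 tokens per hour for one agent ID.
bucket = TokenBucket(capacity=50_000, refill_per_sec=50_000 / 3600)
print(bucket.try_consume(40_000, now=0))    # True: within capacity
print(bucket.try_consume(20_000, now=60))   # False: only ~10,833 tokens available
print(bucket.try_consume(20_000, now=900))  # True: the bucket has refilled
```

A runaway loop drains the bucket quickly and then gets refused. The agent can resume only at the refill rate, which caps worst-case spend per hour.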

Large enterprises deploying distributed AI systems increasingly rely on governance frameworks such as enterprise multi-agent security governance to ensure these agents remain both secure and cost-efficient.

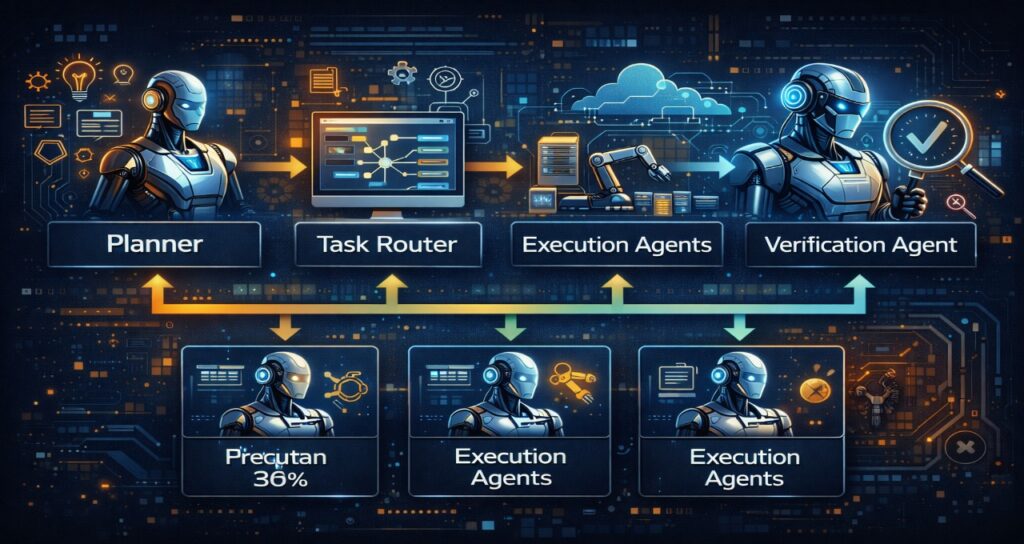

Multi-Agent Architectures and Cost Explosion

The most advanced AI platforms now deploy multi-agent architectures.

Instead of a single agent solving a problem, multiple specialized agents collaborate:

- planner agents

- research agents

- execution agents

- verification agents

This architecture significantly improves accuracy and scalability.

However, it also multiplies token consumption because each agent performs its own reasoning cycles.

Research into AI NPC gossip protocols and social graph architectures shows how communication networks let agents share information dynamically. This improves coordination, but every relayed message also adds token overhead.

Efficient communication protocols are therefore essential for controlling costs in multi-agent systems.
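Counting message tokens shows why the communication protocol matters. The sketch compares two hypothetical patterns:

- **All-to-all broadcast:** every agent sends each update to every other agent.
- **Coordinator:** each agent reports to a single hub, which returns one digest.

The 500-token message size and the assumption that a digest is the same size as an update are illustrative, not measurements.

```python
def broadcast_tokens(agents: int, rounds: int, msg_tokens: int) -> int:
    """Every agent sends its update to every other agent each round."""
    return rounds * agents * (agents - 1) * msg_tokens

def coordinator_tokens(agents: int, rounds: int, msg_tokens: int) -> int:
    """Each agent sends one update to a hub and receives one digest back."""
    return rounds * agents * 2 * msg_tokens

for n in (4, 8, 16):
    print(n, broadcast_tokens(n, 10, 500), coordinator_tokens(n, 10, 500))
```

Broadcast traffic grows with the square of the agent count, while the coordinator pattern grows linearly. The trade-off is that the hub becomes a potential bottleneck.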

Reactive Agents vs Autonomous Agents vs Multi-Agent Swarms

To understand the economics of AI systems, it helps to compare three common architectures.

| Feature | Reactive Agents | Autonomous Agents | Multi-Agent Swarms |
|---|---|---|---|
| Logic Pattern | Single prompt | Self-correcting loops | Distributed reasoning |
| Development Cost | $20k – $35k | $50k – $120k | $150k+ |
| Monthly Cost | $500 – $2,000 | $2,000 – $6,000 | $8,000+ |
| Token Usage | Linear | Iterative | Multiplicative (agents × cycles) |

Reactive systems are inexpensive but limited.

Autonomous agents offer more flexibility but consume more tokens.

Multi-agent swarms provide maximum capability but require sophisticated cost management.

Outcome-Based Token Economics

Many organizations are shifting away from simple token-based pricing models.

Instead, they measure cost per business outcome.

Examples include:

- cost per resolved customer support ticket

- cost per processed insurance claim

- cost per generated financial report

Example comparison:

| Process | Human Cost | Agentic AI Cost |
|---|---|---|
| Customer support ticket | $12 | $0.30 |
| Financial reconciliation | $25 | $0.80 |
| Technical troubleshooting | $45 | $1.50 |

Even after token costs, the per-outcome cost in these examples is roughly 30 to 40 times lower than the human baseline.
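Cost per outcome divides all spend by the number of successful outcomes. That spend includes runs that failed or were escalated. This minimal sketch uses made-up run records, and the `Run` fields are hypothetical.

```python
from dataclasses import dataclass

@dataclass
class Run:
    cost_usd: float   # token spend for one attempt at the task
    resolved: bool    # did the attempt produce the business outcome?

def cost_per_outcome(runs: list[Run]) -> float:
    """Total spend divided by successful outcomes; failed runs still count as cost."""
    successes = sum(r.resolved for r in runs)
    if successes == 0:
        raise ValueError("no resolved outcomes to divide by")
    return sum(r.cost_usd for r in runs) / successes

runs = [Run(0.25, True), Run(0.40, False), Run(0.30, True), Run(0.25, True)]
print(f"${cost_per_outcome(runs):.2f} per resolved ticket")
```

Counting only the successful runs would report about $0.27 per ticket here. Including the failed attempt raises the figure to $0.40, which is the number that matters for ROI.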

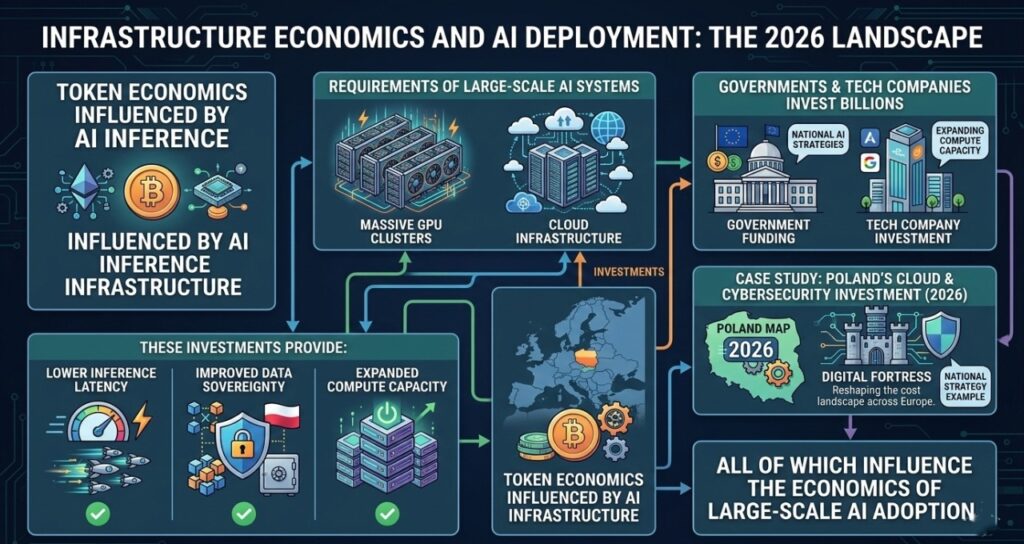

Infrastructure Economics and AI Deployment

Token economics are also influenced by the underlying infrastructure that powers AI inference.

Large-scale AI systems require massive GPU clusters and cloud infrastructure.

Governments and technology companies are investing billions to support this demand.

For example, initiatives like Poland’s cloud and cybersecurity investment in 2026 demonstrate how national infrastructure strategies are reshaping the cost landscape for AI deployment across Europe.

These investments provide:

- lower inference latency

- improved data sovereignty

- expanded compute capacity

All of which influence the economics of large-scale AI adoption.

Compliance and Governance Costs

AI systems operating in regulated industries must comply with strict legal and ethical requirements.

This introduces additional operational costs such as:

- transparency frameworks

- safety monitoring systems

- regulatory audits

For instance, platforms that analyze or moderate online content must follow compliance frameworks similar to those described in streaming AI moderation compliance architecture.

Similarly, creative AI systems generating scripts or media content must operate within regulations explored in AI-generated films and European legal compliance.

These regulatory requirements add another layer to AI cost engineering.

The Small Model Revolution

One of the most important trends shaping AI economics in 2026 is the rise of distilled models.

Instead of using large frontier models for every task, organizations distill smaller, specialized models from larger ones for specific workloads.

Example:

| Model Type | Cost per Task |
|---|---|
| Frontier model | $5 |
| Distilled model | $0.05 |

In practice, a growing number of enterprises run most AI workloads on smaller models and reserve expensive models for complex reasoning tasks.

This dramatically reduces operational costs.
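The saving depends on what fraction of tasks can be routed to the smaller model. This short calculation uses the per-task costs from the table and treats the routing shares as assumptions.

```python
FRONTIER_COST = 5.00    # USD per task, from the table above
DISTILLED_COST = 0.05   # USD per task, from the table above

def blended_cost(share_on_distilled: float) -> float:
    """Average cost per task when a share of tasks runs on the distilled model."""
    return (share_on_distilled * DISTILLED_COST
            + (1 - share_on_distilled) * FRONTIER_COST)

for share in (0.0, 0.5, 0.9, 0.99):
    print(f"{share:.0%} on distilled -> ${blended_cost(share):.3f} per task")
```

Even with 90 percent of tasks on the distilled model, the average is about $0.55 per task. Most of that comes from the 10 percent of tasks that still need the frontier model, so routing accuracy matters as much as the price gap.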

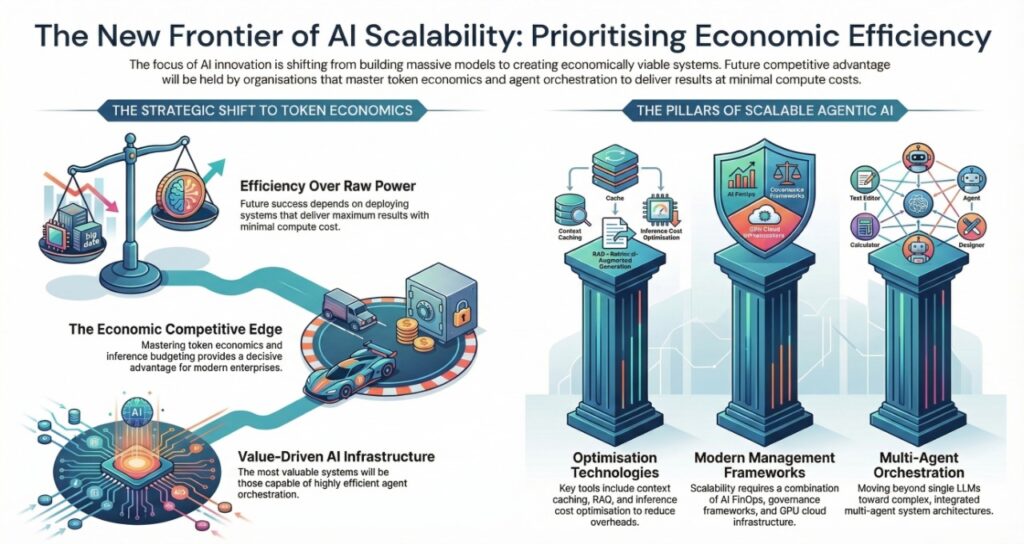

The Future of AI FinOps

As AI deployments scale, organizations are adopting a new discipline called AI FinOps.

This field focuses on managing and optimizing AI infrastructure costs.

Responsibilities include:

- monitoring token usage

- controlling inference budgets

- optimizing model routing

- preventing runaway agent costs

New roles are emerging within organizations, including:

- AI Cost Architect

- Token Economy Analyst

- Inference Budget Manager

These professionals ensure that AI systems remain economically sustainable.

Token Cost Optimization Playbook for Multi-Agent AI Systems (2026)

As enterprises deploy increasingly complex agentic architectures, controlling token consumption becomes critical for maintaining sustainable AI operations. Without proper optimization, multi-agent systems can generate massive compute costs through redundant reasoning loops, repeated context expansion, and unnecessary model usage.

A structured token optimization playbook allows organizations to scale AI systems while maintaining predictable operational expenses.

Below are the most effective strategies used by advanced AI engineering teams in 2026.

1. Context Compression

One of the biggest sources of token waste in agentic systems is growing context windows. When agents continuously append conversation history, the model must process an increasingly large prompt for every new response.

To address this issue, engineers implement context compression pipelines.

Instead of storing raw conversation history, the system periodically summarizes previous steps into smaller memory blocks.

Example workflow:

Original memory:

Step 1: User request

Step 2: Agent reasoning

Step 3: Tool output

Step 4: Verification

Step 5: Revised reasoning

Compressed memory:

Summary: Agent attempted tool execution and verified output successfully.

This technique can reduce context size by 70–85 percent.
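A compression step works like this: once the history exceeds a token threshold, everything except the most recent turns is replaced with a single summary entry. This is a minimal sketch with two assumptions:

- `summarize` is a hypothetical placeholder for a call to a cheap model.
- Token counts are approximated by whitespace-separated words rather than a real tokenizer.

```python
def approx_tokens(text: str) -> int:
    """Crude estimate: one token per whitespace-separated word (an assumption)."""
    return len(text.split())

def summarize(entries: list[str]) -> str:
    """Hypothetical placeholder; a real system would call a small, cheap model."""
    return f"Summary of {len(entries)} earlier steps."

def compress(history: list[str], max_tokens: int, keep_recent: int = 2) -> list[str]:
    """Fold older entries into one summary when the history exceeds max_tokens."""
    if sum(approx_tokens(e) for e in history) <= max_tokens:
        return history
    split = max(len(history) - keep_recent, 0)
    older, recent = history[:split], history[split:]
    if not older:
        return history  # nothing old enough to summarize
    return [summarize(older)] + recent

history = [
    "Step 1: User request " + "detail " * 40,
    "Step 2: Agent reasoning " + "detail " * 40,
    "Step 3: Tool output " + "detail " * 40,
    "Step 4: Verification passed",
    "Step 5: Revised reasoning",
]
compressed = compress(history, max_tokens=100)
print(compressed)
```

The most recent turns stay verbatim because the next reasoning step usually depends on them. Older steps survive only as a summary, which is where the size reduction comes from.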

2. Dynamic Model Routing

Not every task requires a large reasoning model. Many AI systems waste compute resources by using expensive models for simple operations.

Modern AI platforms therefore implement dynamic model routing.

Example architecture:

| Task Type | Model Used | Reason |
|---|---|---|
| Strategic planning | Large reasoning model | Complex reasoning required |
| Data formatting | Lightweight model | Simple transformation |
| Verification | Small validation model | Low compute cost |

This approach dramatically lowers token costs while preserving output quality.
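A routing layer can be as simple as a lookup from task type to model tier, with a conservative default. The sketch mirrors the table above, but the model identifiers and per-million-token prices are hypothetical placeholders. A real router might classify tasks with a small model instead of trusting a label.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class ModelTier:
    name: str                 # hypothetical model identifier
    usd_per_million: float    # hypothetical blended price

LARGE = ModelTier("large-reasoning-model", 10.00)
LIGHT = ModelTier("lightweight-model", 0.60)
SMALL = ModelTier("small-validation-model", 0.15)

ROUTES = {
    "strategic_planning": LARGE,
    "data_formatting": LIGHT,
    "verification": SMALL,
}

def route(task_type: str) -> ModelTier:
    """Pick a model tier for a task; unknown task types go to the large model."""
    return ROUTES.get(task_type, LARGE)

def estimate_cost(task_type: str, tokens: int) -> float:
    """Estimated USD cost of running `tokens` through the routed model."""
    return tokens * route(task_type).usd_per_million / 1_000_000

print(route("data_formatting").name)   # lightweight-model
print(f"${estimate_cost('verification', 2_000):.4f}")
```

Sending unknown task types to the large model trades cost for safety. Defaulting to the cheapest model would do the opposite and risk quality on tasks the router has not seen.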

3. Tool-First Reasoning

Another major source of token waste occurs when AI models attempt to reason about information that already exists in external tools or databases.

Instead of forcing the language model to analyze raw data, modern agent systems prioritize tool-first execution.

Example:

Instead of asking the model:

“Analyze this dataset and calculate the revenue growth rate.”

The agent calls a financial analysis API and simply interprets the result.

Benefits include:

- lower token consumption

- faster responses

- improved reliability
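The revenue example works as follows: compute the figure deterministically in code, then hand the model only the small result to interpret. `revenue_growth_rate` stands in for the financial analysis API mentioned above and is hypothetical, as is the prompt wording. The point is that the raw dataset never enters the prompt.

```python
def revenue_growth_rate(revenue_by_period: list[float]) -> float:
    """Growth from the first to the last period, as a fraction."""
    first, last = revenue_by_period[0], revenue_by_period[-1]
    if first == 0:
        raise ValueError("growth rate undefined for zero starting revenue")
    return (last - first) / first

def build_prompt(growth: float) -> str:
    """Short prompt containing only the computed result, not the raw data."""
    return (f"Revenue grew {growth:.1%} over the period. "
            "Summarize what this means for the quarterly report in two sentences.")

revenue = [120_000.0, 126_500.0, 131_000.0, 138_000.0]  # made-up sample data
print(build_prompt(revenue_growth_rate(revenue)))
```

The prompt stays the same size whether the dataset has four rows or four million. The calculation itself is exact rather than approximated by the model.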

4. Memory Sharding for Multi-Agent Systems

In large AI ecosystems, multiple agents often operate simultaneously.

Without careful design, these agents repeatedly load the same context, which multiplies token consumption.

To solve this issue, advanced platforms implement memory sharding.

Instead of storing all knowledge in one context window, information is distributed across specialized memory nodes.

Example:

| Memory Node | Stored Data |
|---|---|
| Research memory | external knowledge |
| Task memory | workflow progress |
| Verification memory | error logs |

Agents only load the specific memory shard they require, reducing token usage significantly.
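Memory sharding amounts to a store keyed by shard name, with each agent role declaring which shards it needs. The shard names follow the table above. The role-to-shard mapping and the word-count token estimate are assumptions for illustration.

```python
SHARDS: dict[str, list[str]] = {
    "research": ["Source A: market size estimate", "Source B: competitor pricing"],
    "task": ["Step 3 of 7 complete", "Next: draft summary"],
    "verification": ["Error: schema mismatch in row 42"],
}

# Hypothetical mapping from agent role to the shards it is allowed to load.
ROLE_SHARDS = {
    "research_agent": ["research"],
    "execution_agent": ["task"],
    "verification_agent": ["task", "verification"],
}

def load_context(role: str) -> list[str]:
    """Concatenate only the shards this role needs."""
    return [entry for shard in ROLE_SHARDS[role] for entry in SHARDS[shard]]

def approx_tokens(entries: list[str]) -> int:
    """Crude estimate: one token per whitespace-separated word (an assumption)."""
    return sum(len(e.split()) for e in entries)

full = approx_tokens([e for entries in SHARDS.values() for e in entries])
print(full, approx_tokens(load_context("execution_agent")))
```

In this toy store the execution agent loads about a third of the full memory. The gap widens as research notes and error logs grow.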

5. Reasoning Loop Limits

Autonomous agents often retry tasks multiple times when errors occur.

While self-correction improves accuracy, excessive loops can dramatically increase token consumption.

To prevent runaway costs, organizations enforce strict reasoning loop limits.

Example policy:

| Agent Type | Maximum Reasoning Cycles |
|---|---|
| Research agent | 12 cycles |
| Execution agent | 6 cycles |
| Verification agent | 4 cycles |

Once the limit is reached, the system escalates the task to a human supervisor.

This prevents uncontrolled token burn while maintaining operational stability.
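The policy table translates directly into a retry loop that hands off to a person once the cap is hit. In this minimal sketch, `attempt` is any callable that returns `True` on success. `escalate_to_human` is a hypothetical hook that here only records the task; it is not part of any particular framework.

```python
from typing import Callable

MAX_CYCLES = {"research": 12, "execution": 6, "verification": 4}  # from the policy table

escalated: list[str] = []

def escalate_to_human(task_id: str) -> None:
    """Hypothetical hook; a real system might open a ticket or page an operator."""
    escalated.append(task_id)

def run_with_cap(task_id: str, agent_type: str, attempt: Callable[[int], bool]) -> bool:
    """Retry `attempt` up to the agent type's cap, then escalate instead of looping."""
    for cycle in range(1, MAX_CYCLES[agent_type] + 1):
        if attempt(cycle):
            return True
    escalate_to_human(task_id)
    return False

# An attempt that never succeeds, e.g. a tool that keeps failing.
print(run_with_cap("ticket-17", "verification", lambda cycle: False))  # False
print(escalated)                                                        # ['ticket-17']
```

The cap bounds the worst case at a known number of cycles per task. The escalation hook means a stuck task still reaches someone instead of failing silently.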

6. Token Budget Monitoring

Finally, organizations are beginning to treat tokens as a managed resource, similar to cloud infrastructure spending.

AI platforms now include real-time token monitoring dashboards that track:

- tokens per workflow

- tokens per agent

- cost per task

- cost per business outcome

These metrics allow engineering teams to continuously optimize their AI systems.

In large enterprises deploying autonomous workflows—such as those discussed in agentic AI workflow automation for enterprises (2026)—token analytics are becoming as important as traditional infrastructure monitoring.
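A monitoring pipeline starts by aggregating usage records along the dimensions listed above. This sketch assumes that each model call emits a record with a workflow ID, an agent ID, and a token count, and it uses a single hypothetical blended price. Real dashboards would separate input, output, and cached tokens.

```python
from collections import defaultdict
from typing import NamedTuple

USD_PER_MILLION = 5.0  # hypothetical blended price

class UsageRecord(NamedTuple):
    workflow: str
    agent: str
    tokens: int

def aggregate(records: list[UsageRecord]) -> tuple[dict[str, int], dict[str, int]]:
    """Sum tokens per workflow and per agent."""
    by_workflow: dict[str, int] = defaultdict(int)
    by_agent: dict[str, int] = defaultdict(int)
    for r in records:
        by_workflow[r.workflow] += r.tokens
        by_agent[r.agent] += r.tokens
    return dict(by_workflow), dict(by_agent)

def over_budget(totals: dict[str, int], limit: int) -> list[str]:
    """Keys whose token total exceeds the alert threshold."""
    return sorted(k for k, v in totals.items() if v > limit)

records = [
    UsageRecord("claims-42", "planner", 9_000),
    UsageRecord("claims-42", "executor", 3_000),
    UsageRecord("claims-43", "planner", 20_000),
]
wf, ag = aggregate(records)
print(wf)                        # {'claims-42': 12000, 'claims-43': 20000}
print(over_budget(ag, 25_000))   # ['planner']
print(f"${wf['claims-43'] * USD_PER_MILLION / 1e6:.2f}")  # cost of one workflow
```

Grouping by agent surfaces the planner as the main consumer even though no single workflow crosses the threshold. This is the kind of signal per-workflow totals alone would miss.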

Why Token Optimization Will Define AI Scalability

The next wave of artificial intelligence innovation will not simply be about building more powerful models.

Instead, success will depend on how efficiently organizations deploy and manage AI systems at scale.

Enterprises that master token economics, inference budgeting, and agent orchestration will gain a decisive competitive advantage.

In the coming years, the most valuable AI infrastructure will not necessarily be the largest models.

It will be the most economically efficient AI systems capable of delivering maximum results with minimal compute cost.

Key Entities in Agentic AI Cost Engineering

This article discusses several important technologies and concepts shaping the economics of agentic AI systems in 2026:

- Agentic AI architectures

- Multi-agent systems

- Large language models (LLMs)

- Token economics

- Inference cost optimization

- Context caching

- Retrieval-augmented generation (RAG)

- AI FinOps

- GPU cloud infrastructure

- AI governance frameworks

These technologies form the foundation of modern enterprise AI deployments.

FAQ: Cost Engineering & Token Economics for Agentic AI

1: What is the “Unreliability Tax” in 2026 agentic systems?

Ans: It is the “hidden” cost in compute, engineering time, and latency required to recover from agent failures. A single-shot prompt may cost $0.01. An autonomous agent that loops 10 times to correct a tool-use error can cost $1.00 or more per task, because each retry resends a growing context. Effective cost engineering sets “Inference Budgets” that act as kill-switches, stopping agents before they burn through the ROI.

2: How does “Context Caching” impact my 2026 AI budget?

Ans: Caching is the #1 tool for margin recovery. By 2026, providers such as Anthropic and OpenAI offer discounts of up to 90% on cached input tokens. Suppose your agent uses a massive 100-page system prompt or “World State” memory. You pay roughly the full price once (some providers add a small premium for the initial cache write). Every subsequent “thought” in that session is billed at the cached rate, for example about $0.15 per 1M input tokens instead of $1.50 per 1M.

3: Why are enterprises moving toward “Outcome-Based” pricing?

Ans: CFOs are moving away from paying “per token” and toward Cost Per Resolved Task (CPRT). Suppose an agent swarm resolves a customer support ticket for $2.50 in tokens while replacing a $25 manual process. The task then costs 10x less. Cost engineering in 2026 focuses on optimizing the Success-to-Token Ratio.

4: What are “Token Buckets” and “Kill-Switches”?

Ans: These are defensive guardrails. A Token Bucket limits how many tokens a specific agent ID can consume per hour. A Kill-Switch is an automated trigger that pauses an agent if it exceeds a set number of “Reflexion” cycles (e.g., 5 retries on the same API call), preventing “Infinite Loop” bankruptcy.

5: Which is more cost-effective: RAG or Long-Context windows?

Ans: In 2026, the answer is usually hybrid. With models like Gemini 3 Pro supporting 2M+ context, it is often cheaper and more accurate to load an entire project’s context once and reuse it through context caching. The alternative, maintaining complex, high-latency RAG vector database infrastructure for every turn, often costs more. RAG still makes sense when the corpus is too large to fit in a context window or changes frequently.

Final Thoughts

Agentic AI systems represent the next evolution of artificial intelligence. They enable autonomous reasoning, large-scale automation, and dramatically increased productivity.

However, these benefits come with a new economic reality: tokens, inference cycles, and reasoning loops all carry financial costs.

Organizations that treat AI purely as a technical tool risk facing unpredictable expenses.

The companies that succeed in the AI era will be those that master cost engineering, inference budgeting, and token optimization.

In the world of agentic systems, the ultimate competitive advantage is not simply building smarter AI.

It is building efficient AI that delivers maximum business outcomes with minimal compute cost.

About the Author

Saameer is a technology researcher and digital publisher focused on artificial intelligence, enterprise automation, cloud computing, and cybersecurity innovation. Through Tech Plus Trends, he analyzes emerging technologies such as agentic AI systems, multi-agent architectures, AI governance frameworks, and enterprise AI cost engineering.

Saameer publishes in-depth research articles that break down complex topics like token economics, AI workflow automation, and next-generation cloud infrastructure. His work helps businesses and technology professionals understand how to deploy scalable AI systems while maintaining security, compliance, and operational efficiency.

At Tech Plus Trends, Saameer covers global developments in AI regulation, cloud cybersecurity investments, and enterprise automation strategies shaping the digital economy in 2026 and beyond.

Transparency Note: 2026 Content & Data Integrity

Transparency Disclosure: This technical briefing on AI economics was developed through a Hybrid Intelligence Workflow. The core economic frameworks—specifically the Agentic Efficiency Ratio (AER) and Plan-and-Execute cost modeling—were conceptualized and audited by Saameer, an AI Infrastructure Strategist. Generative AI was utilized to synthesize real-time March 2026 token pricing data from major model providers and to optimize the article’s structure for AEO (Answer Engine Optimization). All mathematical formulas and ROI calculations have been manually verified for logic and accuracy. This content reflects independent research and is not sponsored by any LLM provider.

Regulatory & Technical Disclaimer

Disclaimer: The information provided in this article is for educational and strategic planning purposes only and does not constitute financial, investment, or legal advice.

- Pricing Volatility: AI token pricing, context caching discounts, and inference rates are subject to change by providers (OpenAI, Anthropic, Google, etc.) without notice. Calculations are based on March 2026 market averages.

- Operational Risk: Implementing “Kill-Switches” and “Token Buckets” involves technical risk; improper configuration may lead to service outages. Readers should test governance frameworks in a sandbox environment before production deployment.

- Performance Variation: AI ROI is highly dependent on task complexity and model selection. The 90% cost reduction cited is a benchmark based on optimized “Plan-and-Execute” architectures and may vary by use case.

- Compliance: Readers are responsible for ensuring that their AI cost-management strategies comply with the EU AI Act and local data residency regulations.