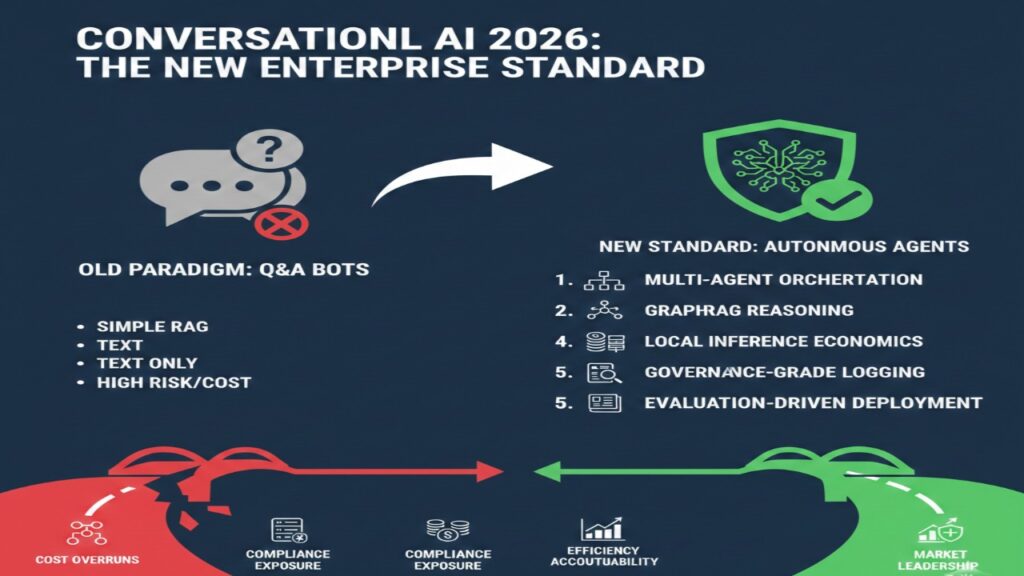

In 2026, conversational AI chatbot development has shifted from simple intent-matching systems to autonomous, auditable agentic workflows. Enterprises are no longer deploying bots that merely answer questions; they are deploying agents that execute refunds, query internal databases, trigger workflows, and operate under regulatory scrutiny. The shift has architectural consequences: cost volatility, hallucination liability, logging obligations, and governance exposure. Building a chatbot today requires decisions that affect not only UX but also compliance, taxation, compensation strategy, and audit risk.

The 2026 Conversational AI Architecture Exposure Snapshot in 8 Decisions

Before writing prompts, leadership must choose the system model.

| Layer | 2024 Default | 2026 Standard | Exposure if ignored |
| --- | --- | --- | --- |
| Logic | Linear chains | LangGraph multi-agent state machines | Task failure & loops |
| Retrieval | Vector-only RAG | GraphRAG (KG + vectors + hybrid search) | Multi-hop reasoning gaps |
| Deployment | Cloud API only | Hybrid / Local (vLLM, Ollama) | Escalating API burn |
| Memory | Chat history JSON | Semantic + episodic memory | Context collapse |
| Tool Use | Simple function call | Scoped & audited execution | Prompt injection risk |
| Logging | Minimal | Thread persistence + traceability | Compliance exposure |
| Evaluation | Manual testing | Ragas + DeepEval pipelines | Undetected hallucinations |
| Transparency | Optional | AI Act Article 50 disclosure logic | Regulatory breach |

In 2026, architectural shortcuts become audit liabilities.

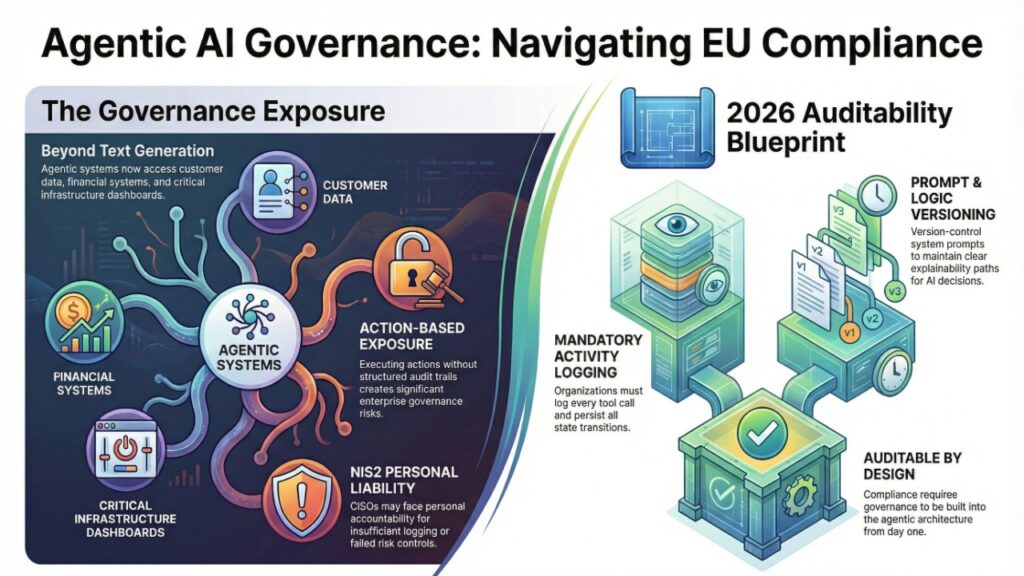

Why 2026 Enterprise Chatbot Architecture Now Intersects with EU Cyber Liability Frameworks

Conversational agents increasingly access:

- Customer data

- Internal policies

- Financial systems

- Infrastructure dashboards

When an AI system performs actions — not just generates text — governance becomes critical.

Under NIS2, management bodies can be held liable for cybersecurity risk-management failures, a topic examined in NIS2 CISO personal liability in Germany. Accountability for insufficient logging or risk controls may therefore escalate beyond technical teams. If your chatbot executes actions without structured audit trails, you are not deploying a feature; you are introducing governance exposure.

Key requirements in 2026:

- Log all tool calls

- Persist state transitions

- Version-control system prompts

- Maintain explainability paths

Agentic systems must be auditable by design.
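The requirements above can be sketched with a small append-only log. This is a minimal illustration using only the Python standard library, not a reference to any specific framework's persistence layer. The `AuditLog` class, the field names, and the example prompt are all assumptions made for this sketch. Each entry records the hash of the system prompt in force and is chained to the previous entry, so later tampering is detectable.

```python
import hashlib
import json
import time
from dataclasses import dataclass, field


def sha256_hex(text: str) -> str:
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


@dataclass
class AuditLog:
    """Append-only, hash-chained log of tool calls and state transitions."""
    entries: list = field(default_factory=list)

    def append(self, kind: str, payload: dict, prompt_version: str) -> dict:
        prev_hash = self.entries[-1]["hash"] if self.entries else "0" * 64
        body = {
            "seq": len(self.entries),
            "ts": time.time(),
            "kind": kind,  # e.g. "tool_call" or "state_transition"
            "payload": payload,
            "prompt_version": prompt_version,  # ties the action to a prompt revision
            "prev_hash": prev_hash,
        }
        # The hash covers every field above, including the previous entry's hash.
        body["hash"] = sha256_hex(json.dumps(body, sort_keys=True))
        self.entries.append(body)
        return body

    def verify(self) -> bool:
        """Recompute the chain; False means an entry was altered or reordered."""
        prev_hash = "0" * 64
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if entry["prev_hash"] != prev_hash:
                return False
            if sha256_hex(json.dumps(body, sort_keys=True)) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True


# Hypothetical system prompt; in practice this would live in version control.
SYSTEM_PROMPT = "You are a billing assistant. Never issue refunds above 100 EUR."
prompt_version = sha256_hex(SYSTEM_PROMPT)[:12]

log = AuditLog()
log.append("state_transition", {"from": "supervisor", "to": "billing"}, prompt_version)
log.append(
    "tool_call",
    {"tool": "issue_refund", "args": {"order_id": "A-1", "amount": 20}},
    prompt_version,
)
print(log.verify())
```

A production system would write these entries to durable, access-controlled storage; the in-memory list is only there to keep the sketch self-contained.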

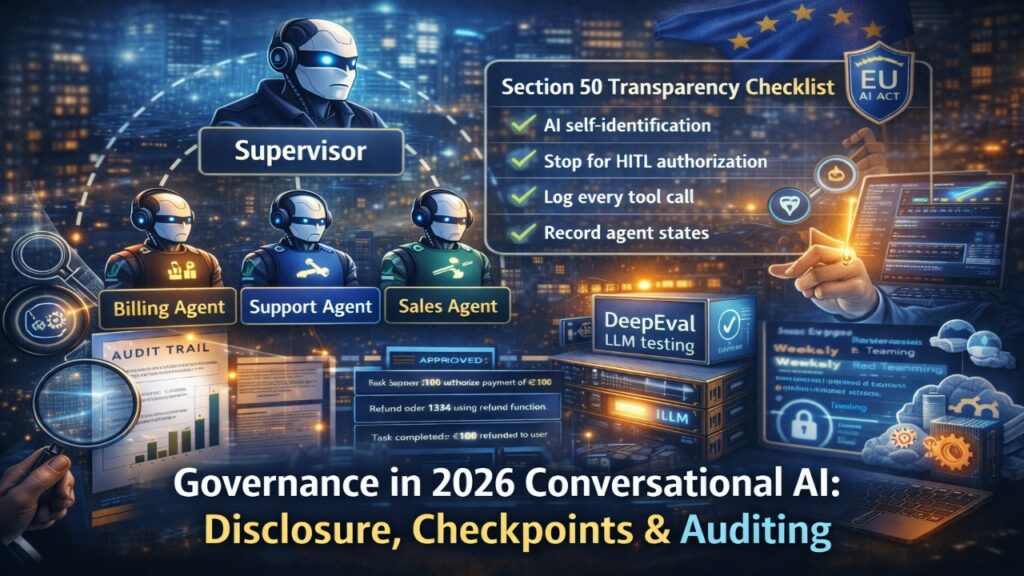

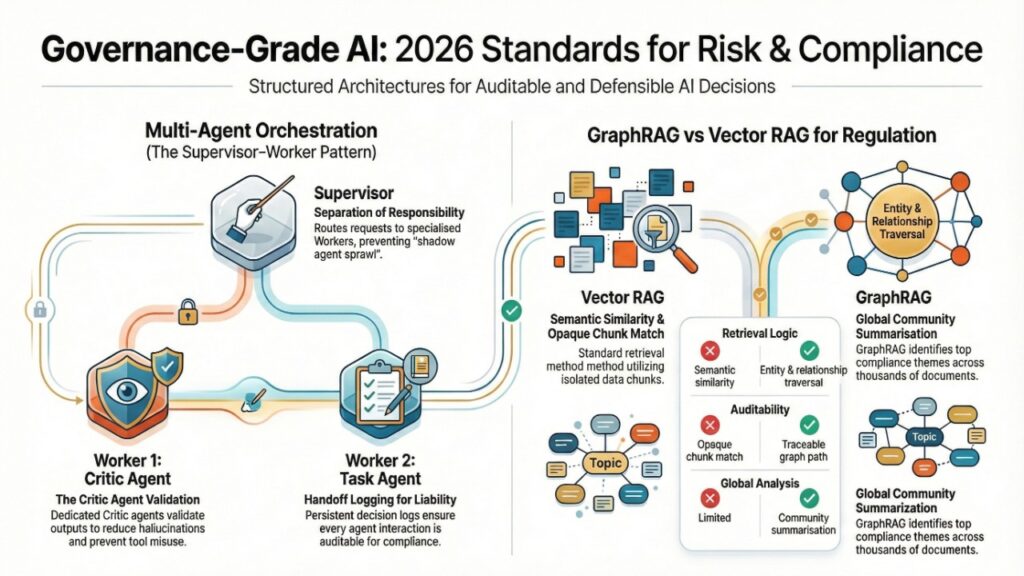

How Multi-Agent Orchestration with LangGraph Reduces Operational and Liability Risk in 2026

Single-agent systems lack separation of responsibility.

The 2026 standard is the Supervisor–Worker pattern.

Core structure:

- Supervisor agent routes requests

- Worker agents specialize (Billing, Support, Sales)

- Critic agent validates output

- Handoff logging persists decisions

This architecture:

- Reduces hallucinations

- Prevents tool misuse

- Enables compliance logging

It also mitigates “shadow agent sprawl” — an increasingly costly phenomenon examined in the 2026 shadow AI audit pricing matrix, where audit fees scale with undocumented AI deployments.

If you cannot enumerate your agents and their privileges, your governance posture is weak.
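A rough sketch of the Supervisor–Worker structure follows, written in plain Python rather than LangGraph so that it runs without dependencies. The worker names, tool names, keyword routing, and the trivial critic are placeholders chosen for illustration; a real deployment would replace them with model calls and policy checks. The point is the shape: an enumerable registry of agents and privileges, a router, a validation step, and a persisted handoff record.

```python
from dataclasses import dataclass, field
from typing import Callable, FrozenSet


@dataclass(frozen=True)
class Worker:
    name: str
    allowed_tools: FrozenSet[str]   # explicit privilege list per agent
    handle: Callable[[str], str]


def billing(msg: str) -> str:
    return "Refund request recorded for review."


def support(msg: str) -> str:
    return "Try restarting the device."


# Every agent and its privileges are enumerable from one place.
REGISTRY = {
    "billing": Worker("billing", frozenset({"lookup_invoice", "issue_refund"}), billing),
    "support": Worker("support", frozenset({"search_kb"}), support),
}


def supervisor_route(msg: str) -> str:
    """Keyword routing stands in for an LLM-based router (assumption)."""
    text = msg.lower()
    if "refund" in text or "invoice" in text:
        return "billing"
    return "support"


def critic(answer: str) -> bool:
    """Placeholder check; a real critic would validate against policy."""
    return bool(answer.strip()) and len(answer) < 500


def can_use_tool(worker_name: str, tool: str) -> bool:
    worker = REGISTRY.get(worker_name)
    return worker is not None and tool in worker.allowed_tools


@dataclass
class Orchestrator:
    handoffs: list = field(default_factory=list)

    def run(self, msg: str) -> str:
        worker = REGISTRY[supervisor_route(msg)]
        answer = worker.handle(msg)
        approved = critic(answer)
        # Handoff logging: which worker handled the request and the critic verdict.
        self.handoffs.append({"to": worker.name, "approved": approved})
        return answer if approved else "Escalated to a human reviewer."


orch = Orchestrator()
print(orch.run("I want a refund for order A-1"))
print(orch.handoffs)
```

Keeping privileges in the registry rather than inside each worker makes the "enumerate your agents" question answerable with a single lookup.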

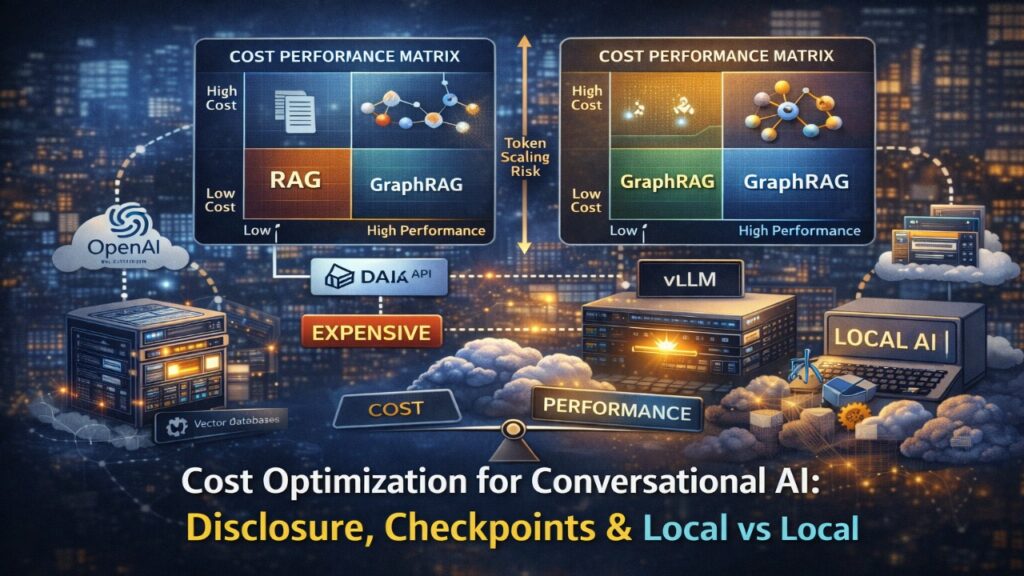

Where GraphRAG Outperforms Vector RAG for Regulated Conversational Systems in 2026

Vector RAG retrieves similar text.

GraphRAG retrieves relationships and community patterns.

GraphRAG vs Vector RAG

| Capability | Vector RAG | GraphRAG |
| --- | --- | --- |
| Retrieval Logic | Semantic similarity | Entity & relationship traversal |
| Multi-hop Reasoning | Weak | Strong |
| Global Thematic Analysis | Limited | Community summarization |
| Auditability | Opaque chunk match | Traceable graph path |
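To make the "traceable graph path" row concrete, here is a minimal sketch of multi-hop retrieval over a toy knowledge graph. The triples and entity names are invented for illustration, and breadth-first search is just one simple way to traverse relationships; it is not a description of how any particular GraphRAG implementation works internally. The function returns the hops it followed, which is what makes the answer explainable.

```python
from collections import deque

# Hypothetical knowledge graph as (subject, relation, object) triples.
TRIPLES = [
    ("Policy-7", "governs", "Refunds"),
    ("Refunds", "processed_by", "BillingService"),
    ("BillingService", "owned_by", "FinanceTeam"),
    ("Policy-9", "governs", "DataRetention"),
]


def build_adjacency(triples):
    adj = {}
    for subj, rel, obj in triples:
        adj.setdefault(subj, []).append((rel, obj))
    return adj


def find_path(triples, start, goal):
    """Breadth-first search; returns the list of hops taken, or None."""
    adj = build_adjacency(triples)
    queue = deque([(start, [])])
    seen = {start}
    while queue:
        node, path = queue.popleft()
        if node == goal:
            return path
        for rel, nxt in adj.get(node, []):
            if nxt not in seen:
                seen.add(nxt)
                queue.append((nxt, path + [(node, rel, nxt)]))
    return None


# "Which team is ultimately responsible for what Policy-7 governs?"
for hop in find_path(TRIPLES, "Policy-7", "FinanceTeam"):
    print(hop)
```

The returned hop list can be stored alongside the generated answer, giving an auditor the exact relationships the system relied on.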

2026 Breakthrough: Global Community Summarization

GraphRAG enables queries such as:

“What are the top 3 compliance themes across 5,000 legal documents?”

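Vector RAG struggles with this kind of query because each chunk is retrieved in isolation, and no single chunk contains a corpus-wide answer.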

GraphRAG performs community detection and subgraph summarization.
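The idea can be sketched on a toy document-entity graph. In this illustration, connected components stand in for community detection (production systems typically use a modularity-based algorithm such as Leiden), and a frequency count stands in for the LLM-written community summary. The edge data and function names are assumptions made for the example.

```python
from collections import Counter, defaultdict

# Hypothetical edges linking each document to an entity it mentions.
EDGES = [
    ("doc1", "GDPR"), ("doc2", "GDPR"), ("doc3", "GDPR"),
    ("doc2", "data retention"),
    ("doc4", "NIS2"), ("doc5", "NIS2"),
]


def communities(edges):
    """Connected components via depth-first search (simple stand-in for Leiden)."""
    adj = defaultdict(set)
    for a, b in edges:
        adj[a].add(b)
        adj[b].add(a)
    seen, groups = set(), []
    for start in sorted(adj):
        if start in seen:
            continue
        stack, group = [start], set()
        while stack:
            node = stack.pop()
            if node in seen:
                continue
            seen.add(node)
            group.add(node)
            stack.extend(adj[node] - seen)
        groups.append(group)
    return groups


def summarize(group, edges):
    """Summarize one community: its documents and its most-mentioned entity."""
    docs = sorted({doc for doc, _ in edges if doc in group})
    mentions = Counter(entity for doc, entity in edges if doc in group)
    return {"documents": docs, "top_entity": mentions.most_common(1)[0][0]}


def global_themes(edges, k):
    """Rank communities by document count and return the top-k themes."""
    summaries = [summarize(group, edges) for group in communities(edges)]
    summaries.sort(key=lambda s: len(s["documents"]), reverse=True)
    return [s["top_entity"] for s in summaries[:k]]


print(global_themes(EDGES, 2))
```

Because the answer is assembled from per-community summaries rather than from individual chunks, it can cover the whole corpus while still pointing back to the documents in each community.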

This matters in regulated industries, where reasoning paths must be defensible and transparent — particularly in alignment with AI output attribution and watermarking expectations under EU compliance frameworks such as those discussed in AI watermarking tools for EU compliance in 2026.

GraphRAG is no longer just better retrieval — it is governance-grade reasoning.

How vLLM, PagedAttention, and Local Inference Redefine Conversational AI Cost Structure in 2026

Multi-agent systems amplify token usage.

Per-token cloud API pricing becomes hard to forecast at scale, because each user request fans out into several model calls.

Why vLLM Leads in 2026

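- PagedAttention: virtual-memory-style paging of the KV cache on the GPU

- Higher throughput for concurrent agent calls (the size of the gain depends on the baseline and workload)

- Efficient batching for supervisor-worker architectures

- 4-bit quantization support (for example AWQ and GPTQ; GGUF support is experimental)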
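The virtual-memory analogy in the first bullet can be illustrated with a toy block allocator. This is a conceptual sketch only and does not reflect vLLM's actual code or API: the class name, block size, and sequence IDs are invented. Each sequence gets a block table mapping its logical token positions to fixed-size physical blocks, so memory is claimed one block at a time instead of being reserved up front for the maximum length.

```python
class PagedKVCache:
    """Toy allocator: maps each sequence's logical blocks to physical blocks."""

    def __init__(self, num_blocks: int, block_size: int):
        self.block_size = block_size
        self.free_blocks = list(range(num_blocks))
        self.block_tables = {}  # seq_id -> list of physical block ids
        self.lengths = {}       # seq_id -> number of tokens stored

    def append_token(self, seq_id: str):
        """Reserve a slot for one token; returns (physical_block, offset)."""
        length = self.lengths.get(seq_id, 0)
        table = self.block_tables.setdefault(seq_id, [])
        if length % self.block_size == 0:  # no block yet, or the last one is full
            if not self.free_blocks:
                raise MemoryError("no free KV blocks; a scheduler would preempt here")
            table.append(self.free_blocks.pop(0))
        self.lengths[seq_id] = length + 1
        return table[length // self.block_size], length % self.block_size

    def free(self, seq_id: str) -> None:
        """Return a finished sequence's blocks to the pool for reuse."""
        self.free_blocks.extend(self.block_tables.pop(seq_id, []))
        self.lengths.pop(seq_id, None)


cache = PagedKVCache(num_blocks=4, block_size=2)
for _ in range(3):
    cache.append_token("agent-a")   # three tokens need two blocks
cache.append_token("agent-b")       # a second agent takes the next free block
print(cache.block_tables)
cache.free("agent-a")               # blocks become available to other agents
print(cache.free_blocks)
```

With many short-lived agent calls running concurrently, this kind of on-demand allocation is what lets more sequences share the same GPU memory.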

Cost Comparison: Cloud vs Local

| Deployment | Cost Stability | Privacy | Latency |
| --- | --- | --- | --- |
| Cloud API | Variable | External | Low |
| Hybrid | Moderate | Controlled | Moderate |
| Local vLLM | Predictable | Internal | Hardware-dependent |

However, local deployment shifts cost to talent and infrastructure.

Enterprises must evaluate internal build economics against European compensation realities, including benchmarks from cybersecurity net pay across Europe in 2026.

Freelance or B2B AI specialists operating under structured tax models — such as those outlined in Poland’s 2026 B2B tax comparison (Ryczałt vs IP Box) — can materially change total cost of ownership.

Architecture and hiring are now financially intertwined.
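A back-of-the-envelope break-even calculation shows how the two cost structures interact. Every number below is a placeholder chosen to make the arithmetic easy to follow, not market data, and the function names are assumptions for this sketch. The local side deliberately includes a staffing line, since that is where the cost shifts.

```python
def monthly_cloud_cost(tokens_per_month: float, price_per_million: float) -> float:
    """Pay-per-token cost: scales linearly with usage."""
    return tokens_per_month / 1_000_000 * price_per_million


def monthly_local_cost(
    hardware_cost: float,
    amortization_months: int,
    ops_cost_per_month: float,
    staff_cost_per_month: float,
) -> float:
    """Mostly fixed cost: amortized hardware plus operations and talent."""
    return hardware_cost / amortization_months + ops_cost_per_month + staff_cost_per_month


def break_even_tokens(price_per_million: float, local_monthly: float) -> float:
    """Monthly token volume at which cloud spend equals local spend."""
    return local_monthly / price_per_million * 1_000_000


# Placeholder inputs (assumptions, not benchmarks).
local = monthly_local_cost(
    hardware_cost=72_000,
    amortization_months=36,
    ops_cost_per_month=1_000,
    staff_cost_per_month=7_000,
)
threshold = break_even_tokens(price_per_million=4.0, local_monthly=local)

print(f"local monthly cost: {local:,.0f}")
print(f"break-even volume:  {threshold:,.0f} tokens/month")
```

Below the break-even volume the cloud API is cheaper; above it, local inference wins, provided the staffing assumption holds. Changing the staff line is usually what moves the threshold most.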

EU AI Act Article 50 Transparency: The 2026 Disclosure Requirement Many Chatbot Architectures Miss

Under the EU AI Act's Article 50 transparency obligations (applicable from 2 August 2026), conversational AI systems interacting with humans must:

- Self-identify as AI at interaction start

- Avoid deceptive impersonation

- Disclose synthetic content when relevant

Your architecture must include:

- Disclosure logic in system prompts

- Automated identification on session start

- Logging of transparency statements

Failure to implement disclosure is a compliance design flaw — not a UX oversight.
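One way to enforce this at the architecture level is to make disclosure a precondition of the session object rather than a line in a prompt. The sketch below is a minimal illustration under that assumption; the `Session` class, the disclosure wording, and the log fields are hypothetical, and the wording in particular would need legal review.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Callable

DISCLOSURE = "You are chatting with an AI assistant."  # hypothetical wording


@dataclass
class Session:
    session_id: str
    transcript: list = field(default_factory=list)
    disclosure_log: list = field(default_factory=list)

    def start(self) -> str:
        """Send the AI disclosure as the first message and record that it was sent."""
        self.transcript.append(("assistant", DISCLOSURE))
        self.disclosure_log.append({
            "session_id": self.session_id,
            "statement": DISCLOSURE,
            "ts": datetime.now(timezone.utc).isoformat(),
        })
        return DISCLOSURE

    def reply(self, user_msg: str, generate: Callable[[str], str]) -> str:
        """Refuse to answer unless the disclosure has already been logged."""
        if not self.disclosure_log:
            raise RuntimeError("disclosure must be sent before any reply")
        answer = generate(user_msg)
        self.transcript.append(("user", user_msg))
        self.transcript.append(("assistant", answer))
        return answer


session = Session("s-001")
print(session.start())
# The lambda stands in for a model call.
print(session.reply("Where is my order?", lambda msg: "Let me check that for you."))
```

Because `reply` fails without a logged disclosure, a missing disclosure shows up as an error in testing instead of as a silent compliance gap in production.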

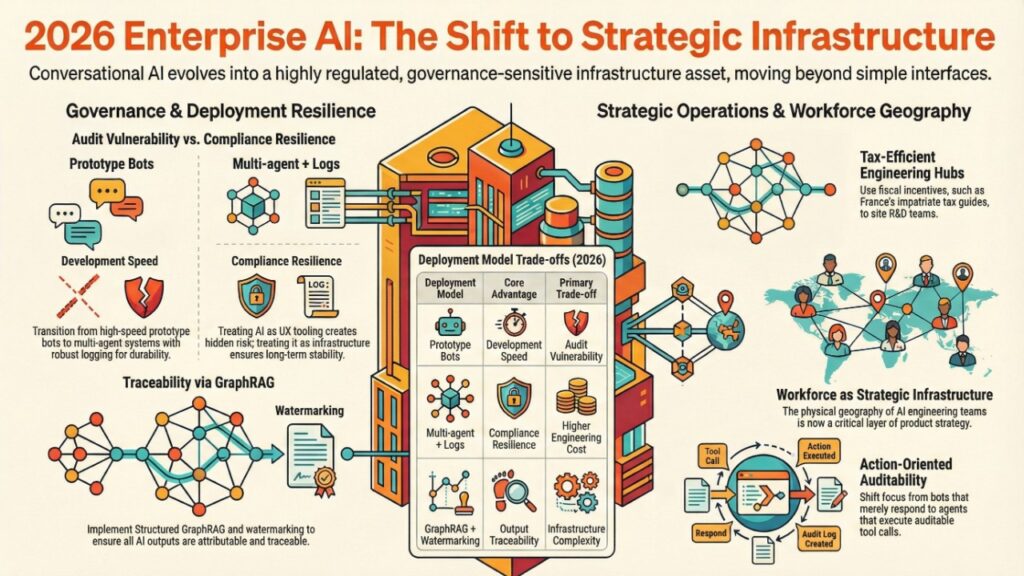

Enterprise AI Team Structuring: Relocation Incentives and AI Engineering Strategy in 2026

Large enterprises expanding AI agent development hubs increasingly consider fiscal incentives when relocating senior engineers.

For example, France’s structured relocation benefits for cybersecurity professionals — detailed in the France 2026 cybersecurity engineer impatriate tax guide — influence where conversational AI R&D teams are established.

When agentic AI becomes a core product layer, workforce geography becomes strategic infrastructure.

Production-Grade Conversational AI Governance: Who Gains Advantage in 2026?

| Deployment Model | Advantage | Exposure |
| --- | --- | --- |
| Prototype bot | Speed | Audit vulnerability |
| Multi-agent + logs | Compliance resilience | Higher engineering cost |
| Shadow internal agents | Low visibility | High audit pricing |
| Structured GraphRAG + watermarking | Traceability | Infrastructure complexity |

| Structured GraphRAG + watermarking | Traceability | Infrastructure complexity |

Enterprises that treat chatbot architecture as regulated infrastructure gain durability. Those treating it as UX tooling accumulate hidden risk.

What This Means for Your 2026 Conversational AI Deployment Strategy

You must now reassess:

- Is your chatbot executing actions or merely responding?

- Can every tool call be audited?

- Is your cost model sustainable at scale?

- Is your engineering team structured tax-efficiently?

- Are outputs attributable and traceable?

In 2026, conversational AI is governance-sensitive infrastructure.

2026 Executive FAQ on Conversational AI Chatbot Development

1: Does NIS2 materially affect conversational AI systems?

Ans: If the system interacts with regulated infrastructure or processes sensitive operational data, logging and governance controls become executive-level concerns. The decision trigger is whether your chatbot executes operational actions.

2: When should we adopt GraphRAG over vector RAG?

Ans: When multi-hop reasoning or cross-document summarization is required. The trigger is the need for explainable reasoning paths.

3: Is local deployment financially justified?

Ans: For sustained high-volume usage, local inference stabilizes cost. The trigger is predictable monthly API spend exceeding the amortized cost of running local infrastructure.

4: How do we manage Human-in-the-Loop safely?

Ans: Use interrupt checkpoints before high-risk actions (refunds, account changes). The trigger is operational impact.
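A minimal sketch of such a checkpoint follows. It uses a plain exception to pause execution and does not rely on any orchestration framework's own interrupt mechanism. The tool names, the `ApprovalRequired` exception, and the `approved_by` parameter are assumptions for illustration.

```python
HIGH_RISK_TOOLS = {"issue_refund", "change_account_email"}  # hypothetical tool names


class ApprovalRequired(Exception):
    """Raised to pause execution until a human approves the pending action."""

    def __init__(self, tool: str, tool_args: dict):
        super().__init__(f"approval required for {tool}")
        self.tool = tool
        self.tool_args = tool_args


def execute_tool(tool: str, tool_args: dict, tools: dict, approved_by: str = None):
    """Run a tool; high-risk tools only run with a named human approver."""
    if tool in HIGH_RISK_TOOLS and approved_by is None:
        raise ApprovalRequired(tool, tool_args)
    return tools[tool](**tool_args)


# Stub tools standing in for real integrations.
TOOLS = {
    "issue_refund": lambda order_id, amount: f"refunded {amount} for {order_id}",
    "search_kb": lambda query: f"results for {query}",
}

request = {"order_id": "A-1", "amount": 20}
try:
    execute_tool("issue_refund", request, TOOLS)
except ApprovalRequired as pending:
    # In production the pending action would be queued for a reviewer.
    print(f"paused: {pending}")
    print(execute_tool(pending.tool, pending.tool_args, TOOLS, approved_by="reviewer-7"))
```

Low-risk tools such as the knowledge-base search pass straight through, so the checkpoint adds friction only where the operational impact justifies it.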

5: What is “Agent Drift” and how is it controlled?

Ans: Drift occurs when agent behavior deviates due to prompt, model, or schema changes. In 2026, teams use DeepEval synthetic adversarial testing to red-team agents weekly. The trigger is deployment at scale.
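A lightweight way to catch drift, independent of any evaluation library, is to fingerprint the three things that can change (prompt, model, tool schema) and rerun a small set of golden cases whenever the fingerprint moves. The sketch below does not use DeepEval; the stub agents, the golden case, and the function names are invented for illustration.

```python
import hashlib
import json


def fingerprint(system_prompt: str, model_id: str, tool_schemas: dict) -> str:
    """Stable hash of everything whose change can alter agent behavior."""
    blob = json.dumps(
        {"prompt": system_prompt, "model": model_id, "tools": tool_schemas},
        sort_keys=True,
    )
    return hashlib.sha256(blob.encode("utf-8")).hexdigest()[:16]


def drift_report(agent, golden_cases):
    """Return the inputs whose output no longer contains the expected marker."""
    failures = []
    for case in golden_cases:
        output = agent(case["input"])
        if case["must_contain"].lower() not in output.lower():
            failures.append(case["input"])
    return failures


# Hypothetical golden case: refunds must always be routed to a human.
GOLDEN = [{"input": "Can I get a refund?", "must_contain": "human review"}]


def agent_v1(msg: str) -> str:   # stub for the agent before a prompt change
    return "Refunds need human review."


def agent_v2(msg: str) -> str:   # stub for the agent after a prompt change
    return "Refund issued."


before = fingerprint("Route refunds to a human.", "model-a", {"issue_refund": ["order_id"]})
after = fingerprint("Be helpful.", "model-a", {"issue_refund": ["order_id"]})

if before != after:
    print("config changed; failing cases:", drift_report(agent_v2, GOLDEN))
```

The fingerprint tells you that something changed; the golden cases tell you whether the change altered behavior that matters.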

6: Do transparency requirements affect architecture?

Ans: Yes. AI Act Article 50 requires that users be informed they are interacting with an AI system, which in practice means automated disclosure logic. The trigger is any system directly interacting with end users.

Regulatory and Institutional Sources Shaping 2026 Conversational AI Governance

European Parliament & Council — EU Artificial Intelligence Act (Regulation (EU) 2024/…)

https://eur-lex.europa.eu/eli/reg/2024/1689/oj

European Commission — AI Act Implementation & Guidance Portal

https://digital-strategy.ec.europa.eu/en/policies/regulatory-framework-ai

European Union Agency for Cybersecurity (ENISA) — NIS2 Directive Resources

https://www.enisa.europa.eu/topics/nis-directive/nis2

EUR-Lex — Directive (EU) 2022/2555 (NIS2 Directive Full Legal Text)

https://eur-lex.europa.eu/eli/dir/2022/2555/oj

European Data Protection Board (EDPB) — AI & Data Protection Guidance

https://edpb.europa.eu

Bundesamt für Sicherheit in der Informationstechnik (BSI – Germany)

https://www.bsi.bund.de

Commission Nationale de l’Informatique et des Libertés (CNIL – France)

https://www.cnil.fr

OECD AI Policy Observatory

https://oecd.ai

Final Standard

In 2026, conversational AI chatbot development is no longer about generating answers.

It is about orchestrating accountable, efficient, and economically defensible agentic systems.

Enterprises that master:

- Multi-agent orchestration

- GraphRAG reasoning

- Local inference economics

- Governance-grade logging

- Evaluation-driven deployment

will lead the next phase of conversational AI maturity.

Those that remain in the “simple RAG tutorial” mindset will increasingly face cost overruns, compliance exposure, and operational fragility.

AUTHOR BIO

Saameer is a European cybersecurity market analyst and B2B strategy writer focused on regulatory risk, AI compliance, and cross-border tax optimization for security professionals. His 2026 research covers EU AI Act enforcement, NIS2 liability exposure, Shadow AI governance, and the evolving economics of boutique security consulting across Germany, France, and Central Europe.

Saameer writes for senior CISOs, independent consultants, and digital creators navigating the intersection of technology, compliance, and financial strategy in the European market.

Disclaimer: This content is for informational and educational purposes only. It does not constitute legal, financial, or regulatory advice. Implementing autonomous agentic systems involves significant operational risk and compliance obligations under frameworks like the EU AI Act and NIS2. Readers should consult with legal counsel and cybersecurity auditors before deploying agents that perform financial or data-sensitive actions.

Transparency Note: How this Research was Conducted

- Human-Centric Insight: This article was conceptualized and architected by [Your Name/Company], focusing on the intersection of AI development and European regulatory strategy.

- AI-Assisted Synthesis: We utilized advanced LLMs to synthesize 2026 market data, cross-reference technical documentation for vLLM and LangGraph, and verify emerging tax codes.

- Verification: All technical code and regulatory citations have been human-verified for accuracy against February 2026 standards.

- Provenance: This content is “AI-Assisted” but carries full human editorial responsibility.