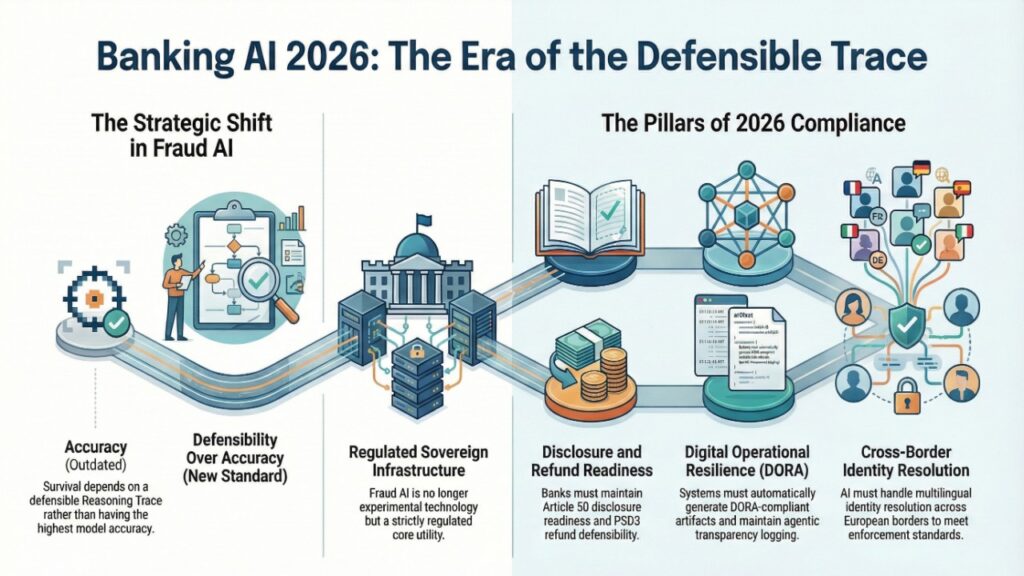

In 2026, AI fraud detection in European banks is no longer a performance arms race. It is a regulatory survival discipline shaped by the EU AI Act, PSD3 liability reform, and DORA operational resilience mandates.

Fraud systems are now classified as high-risk AI systems, triggering mandatory logging, transparency, and human oversight obligations. At the same time, PSD3 shifts refund liability toward banks in impersonation scams, while DORA requires machine-generated incident artifacts for supervisory reporting.

The result: fraud AI must operate at <35ms for instant payments — and simultaneously produce a legally defensible Reasoning Trace at the moment of decision.

2026 is the year fraud detection became regulated infrastructure.

AI Fraud Detection in European Banks 2026: Structural Exposure Map

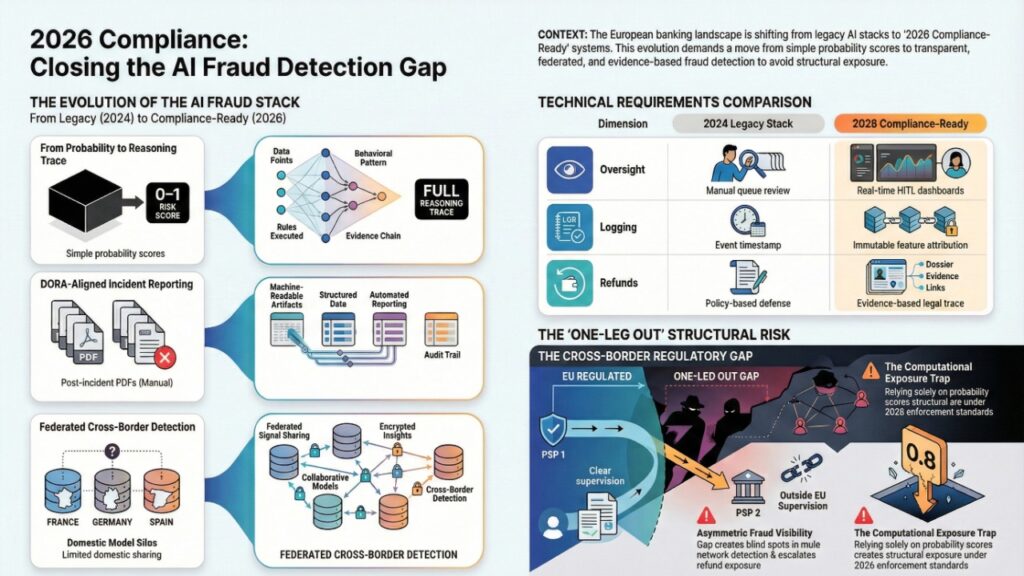

| Dimension | 2024 Legacy Stack | 2026 Compliance-Ready Stack |
| --- | --- | --- |
| Model Output | Risk score (0–1) | Full Reasoning Trace (why/how) |
| Logging | Event timestamp | Immutable feature attribution + model hash |
| Oversight | Manual queue review | Real-time HITL dashboards |
| Incident Reporting | Post-incident PDF | DORA-aligned machine artifacts |
| Cross-Border Detection | Domestic model silo | Federated signal sharing |
| Refund Defense | Policy-based | Evidence-based legal trace |

If your system produces only a probability score, you are structurally exposed under 2026 enforcement standards.

The “One-Leg Out” Gap: Cross-Border Blind Spots in AI Fraud Detection

The “One-Leg Out” scenario refers to transactions where only one Payment Service Provider (PSP) is under EU regulatory supervision.

This creates:

- Asymmetric fraud signal visibility

- Liability ambiguity

- Weak mule network detection

- Refund exposure escalation

However, in 2026, the gap is not merely jurisdictional — it is computational.

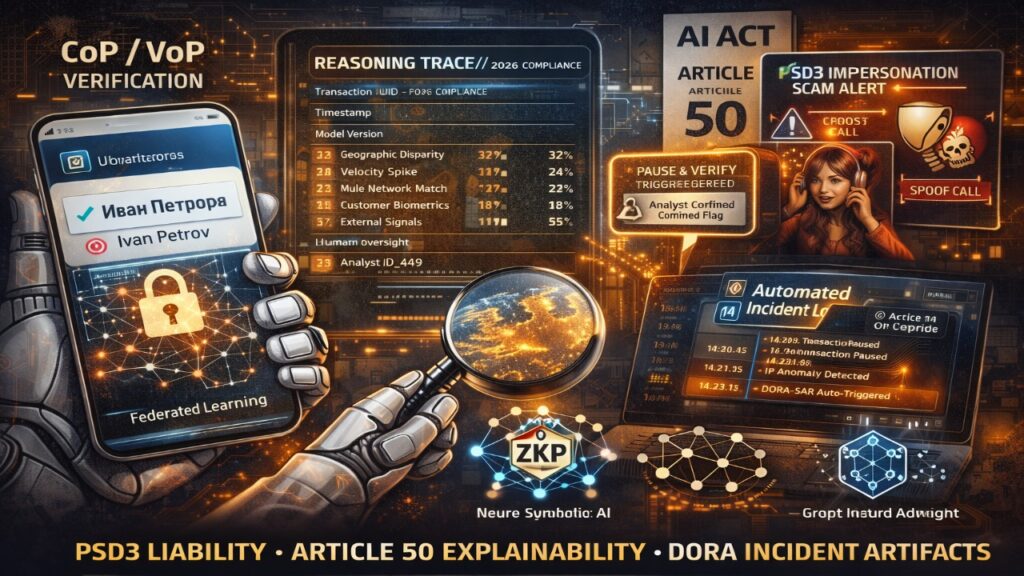

Multilingual Identity Resolution & Homoglyph Risk Under Mandatory VoP

Verification of Payee (VoP) is already mandatory for euro-area credit transfers under the Instant Payments Regulation, and the PSD3 package builds on that obligation. But real-time VoP introduces an underreported challenge:

How does AI resolve multilingual name mismatches in under 35ms without inflating false positives?

Fraud actors exploit:

- Cyrillic-to-Latin substitution

- Unicode homoglyph spoofing

- Diacritic stripping

- Phonetic transliteration ambiguity

Example:

“Petrov” vs “Petrоv” (Cyrillic ‘o’)

“García” vs “Garcia”

2026 Technical Countermeasures

| Risk Vector | AI Countermeasure | Governance Control |
| --- | --- | --- |
| Homoglyph spoofing | Unicode normalization + embedding similarity | Logged normalization layer |
| Cyrillic/Latin shifts | Multilingual transformer embeddings | Attribution trace |
| Phonetic mismatch | Phoneme-aware fuzzy graph models | Confidence threshold log |
| Cross-border pattern lag | Federated learning | Zero-Knowledge Proof signal exchange |

This reframes the One-Leg Out issue as a real-time identity resolution problem.
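As a rough illustration of the normalization layer, the sketch below handles the “Petrov” and “García” cases using only Python's standard `unicodedata` module. The `CONFUSABLES` map is a tiny hypothetical subset chosen for this example, not the full Unicode confusables data, and the function names are assumptions rather than part of any VoP specification. Embedding similarity and phonetic matching are out of scope here.

```python
import unicodedata

# Hypothetical, deliberately tiny confusables map (Cyrillic -> Latin look-alikes).
# A production system would rely on a maintained confusables data set instead.
CONFUSABLES = {
    "\u043e": "o",  # CYRILLIC SMALL LETTER O
    "\u0430": "a",  # CYRILLIC SMALL LETTER A
    "\u0435": "e",  # CYRILLIC SMALL LETTER IE
    "\u0440": "p",  # CYRILLIC SMALL LETTER ER
    "\u0441": "c",  # CYRILLIC SMALL LETTER ES
}


def scripts_used(name: str) -> set:
    """Return coarse script labels (first word of each letter's Unicode name)."""
    return {
        unicodedata.name(ch, "UNKNOWN").split(" ")[0]
        for ch in name
        if ch.isalpha()
    }


def skeleton(name: str) -> str:
    """Fold case, strip combining marks, and map known confusables to Latin."""
    decomposed = unicodedata.normalize("NFKD", name.casefold())
    no_marks = "".join(ch for ch in decomposed if not unicodedata.combining(ch))
    return "".join(CONFUSABLES.get(ch, ch) for ch in no_marks)


def compare_payee(entered: str, registered: str) -> dict:
    """Compare two payee names and return loggable match signals."""
    return {
        "exact_match": entered == registered,
        "skeleton_match": skeleton(entered) == skeleton(registered),
        "mixed_script": len(scripts_used(entered)) > 1,
    }


spoofed = compare_payee("Petr\u043ev", "Petrov")   # Cyrillic 'o' inside a Latin name
benign = compare_payee("García", "Garcia")         # diacritic difference only
```

Here the spoofed name matches on its skeleton but is flagged as mixed-script, while the diacritic variant matches without a flag. Keeping the two signals separate is one way to avoid inflating false positives for legitimate accented names.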

Institutions that fail to integrate federated fraud analytics face elevated remediation costs similar to those seen in broader shadow system audits, as analyzed in “Shadow AI audit fees 2026 pricing matrix.”

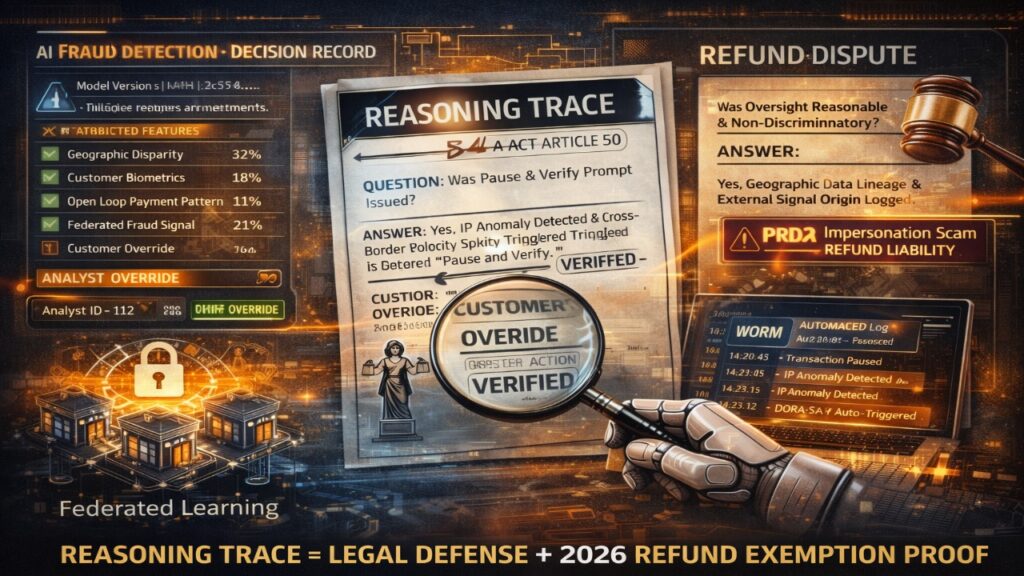

PSD3 Liability Shift for Impersonation Scams: Fraud AI as Legal Defence Infrastructure

PSD3 introduces a critical shift:

Banks must refund victims of impersonation scams (vishing, spoofing, APP fraud) unless they prove:

- The customer acted with gross negligence

- The bank fulfilled its monitoring obligations

This transforms the Reasoning Trace from a regulatory artifact into a legal defense instrument.

A compliant fraud system must prove:

- Anomaly detection occurred

- “Pause & Verify” prompts were issued

- Threshold logic was reasonable

- Human oversight was available

- Customer override was recorded

Without feature-level logging, refund disputes default against the institution.
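The five proof points above can be checked mechanically before a dispute file is assembled. The following is a minimal sketch; the field names in `REQUIRED_EVIDENCE` are hypothetical labels invented for this example and do not come from PSD3 or any supervisory template.

```python
# Hypothetical field names, one per proof point listed above.
REQUIRED_EVIDENCE = (
    "anomaly_detected",
    "pause_and_verify_issued",
    "threshold_rationale",
    "human_oversight_available",
    "customer_override_recorded",
)


def missing_evidence(trace: dict) -> list:
    """Return the proof points that a stored decision trace does not contain."""
    return [key for key in REQUIRED_EVIDENCE if trace.get(key) is None]


# Placeholder trace that lacks the threshold rationale.
example_trace = {
    "anomaly_detected": True,
    "pause_and_verify_issued": True,
    "human_oversight_available": True,
    "customer_override_recorded": True,
}
gaps = missing_evidence(example_trace)
```

A non-empty result signals that the trace would be incomplete as evidence, so the gap can be surfaced when the decision is made rather than discovered during a refund dispute.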

Fraud AI in 2026 is not just detection.

It is refund defense architecture.

Neuro-Symbolic AI for AML Explainability

Pure deep learning models struggle to meet the EU AI Act's Article 13 transparency requirements on their own.

Tier-1 banks now deploy neuro-symbolic architectures:

- Deep neural networks for pattern recognition

- Rule engines for compliance boundaries

- Explainability wrappers generating feature attribution
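A toy sketch of how the three layers might fit together is shown below. The neural model is replaced by a plain `model_score` argument, the rules and the country code `"XX"` are hypothetical placeholders, and the 0.87 threshold is borrowed from the example log sequence later in this article. It is an illustration of the pattern, not a description of any bank's engine.

```python
from dataclasses import dataclass, field


@dataclass
class Decision:
    action: str
    score: float
    reasons: list = field(default_factory=list)


# Hypothetical symbolic rules: (name, predicate over a transaction dict).
RULES = [
    ("new_payee_high_amount", lambda tx: tx["new_payee"] and tx["amount_eur"] > 5000),
    ("restricted_country", lambda tx: tx["country"] in {"XX"}),
]


def decide(tx: dict, model_score: float, threshold: float = 0.87) -> Decision:
    """Combine a learned score with explicit rules and keep every reason."""
    reasons = [f"rule:{name}" for name, rule in RULES if rule(tx)]
    if model_score > threshold:
        reasons.append(f"model_score>{threshold}")
    action = "pause_and_verify" if reasons else "allow"
    return Decision(action=action, score=model_score, reasons=reasons)


flagged = decide({"new_payee": True, "amount_eur": 9000, "country": "DE"}, 0.20)
cleared = decide({"new_payee": False, "amount_eur": 40, "country": "DE"}, 0.10)
```

The point of the design is that a rule can trigger an intervention even when the model score is low, and the `reasons` list records which layer was responsible.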

2026 Technical Blueprint: Sovereign Infrastructure Model

| Fraud Typology | Model Architecture | Governance Lever | 2026 Compliance Factor |
| --- | --- | --- | --- |
| Instant APP Scams | BiLSTM + XGBoost | SHAP / LIME | 10ms stop-payment defensibility |
| Mule Networks | Dynamic GNNs | GraphSaliency | Article 12 fund-flow traceability |
| Social Engineering | Multimodal Transformers | Attention maps | Deepfake detection evidence |
| Cross-Border (One-Leg Out) | Federated Learning | Zero-Knowledge Proofs | GDPR-compliant signal sharing |
| SAR Automation | Fine-tuned LLM | Source attribution | Article 13 documentation |

Fraud AI is now treated as sovereign-grade digital infrastructure, not experimentation.

Explainability frameworks increasingly align with structured retrieval pipelines similar to those outlined in “Enterprise RAG implementation best practices 2026.”

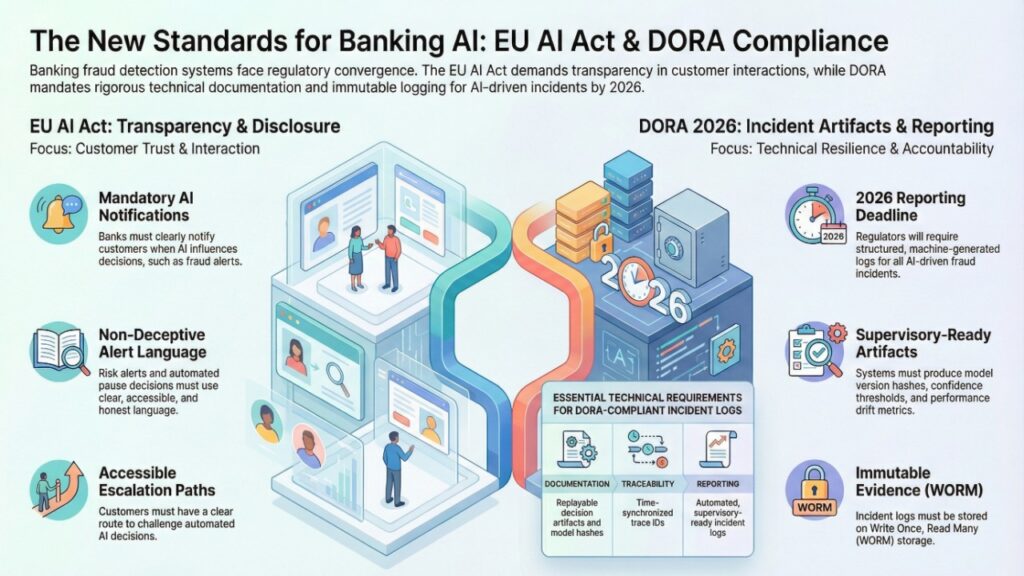

EU AI Act Article 50 Disclosure Requirements for Banking Fraud Alerts

Article 50 introduces disclosure obligations when interacting with AI systems. In fraud detection, this intersects with:

- Customer-facing risk alerts

- Automated pause decisions

- Biometric verification prompts

Banks must ensure:

- Clear notification when AI influences decision-making

- Non-deceptive alert language

- Accessible escalation paths

Failure to align fraud alerts with disclosure standards risks enforcement escalation.

DORA Compliance for AI-Driven Incident Artifacts 2026

Under DORA, fraud incidents that disrupt payment continuity require structured reporting.

In 2026, regulators increasingly request:

- Machine-generated incident logs

- Model version hashes

- Confidence thresholds

- Performance drift metrics

- Replayable decision artifacts

Fraud systems must produce:

- Automated supervisory-ready reports

- Immutable logging (WORM storage)

- Time-synchronized trace IDs

Without integrated artifact generation, DORA compliance becomes retroactive and costly.
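WORM storage is an infrastructure property, but tamper evidence can also be illustrated in software. The sketch below chains each log record to the previous one with SHA-256 from Python's standard `hashlib`, so any later edit breaks verification. The record fields are hypothetical, and this is an assumption-laden illustration rather than a DORA-prescribed format.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash that anchors the first record


def _digest(prev_hash: str, payload: dict) -> str:
    """Hash the previous record hash together with a canonical payload dump."""
    body = json.dumps(payload, sort_keys=True)
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()


def append_record(chain: list, payload: dict) -> dict:
    """Append a payload to the chain, linking it to the previous record."""
    prev_hash = chain[-1]["record_hash"] if chain else GENESIS
    record = {
        "prev_hash": prev_hash,
        "payload": payload,
        "record_hash": _digest(prev_hash, payload),
    }
    chain.append(record)
    return record


def verify_chain(chain: list) -> bool:
    """Recompute every hash; return False if any record was altered."""
    prev_hash = GENESIS
    for record in chain:
        if record["prev_hash"] != prev_hash:
            return False
        if record["record_hash"] != _digest(prev_hash, record["payload"]):
            return False
        prev_hash = record["record_hash"]
    return True


chain = []
append_record(chain, {"trace_id": "demo-1", "model_hash": "abc123", "risk_score": 0.91})
append_record(chain, {"trace_id": "demo-2", "model_hash": "abc123", "risk_score": 0.12})
intact = verify_chain(chain)
```

Replaying a decision then amounts to loading the payload, checking the chain, and re-running the model version identified by the stored hash.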

Agentic Orchestration & Internal Monologue Logging

Flat fraud engines are being replaced by agentic orchestration layers.

Auditors now request the Agentic Internal Monologue: the step-by-step record of how the orchestration layer reached its decision.

Example log sequence:

- Step 1: Cross-border trigger detected

- Step 2: Queried graph database

- Step 3: Velocity anomaly exceeded threshold

- Step 4: Risk score > 0.87

- Step 5: Initiated Pause & Verify

If your system logs only the final risk score — but not the decision pathway — governance is incomplete.
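One way to capture the decision pathway is to record each orchestration step under a shared trace ID. The class below is a hypothetical sketch that mirrors the five-step sequence above; the class name, field names, and the 0.91 score are invented for illustration.

```python
import json
import time
import uuid


class DecisionPathLog:
    """Collects ordered steps for one transaction under a single trace ID."""

    def __init__(self, transaction_id: str):
        self.trace_id = str(uuid.uuid4())
        self.transaction_id = transaction_id
        self.steps = []

    def step(self, action: str, **details) -> None:
        """Record one step with its sequence number and a nanosecond timestamp."""
        self.steps.append({
            "seq": len(self.steps) + 1,
            "ts_ns": time.time_ns(),
            "action": action,
            "details": details,
        })

    def to_json(self) -> str:
        """Serialize the full pathway for storage alongside the final decision."""
        return json.dumps({
            "trace_id": self.trace_id,
            "transaction_id": self.transaction_id,
            "steps": self.steps,
        }, sort_keys=True)


log = DecisionPathLog("tx-demo-001")  # placeholder transaction ID
log.step("cross_border_trigger_detected")
log.step("queried_graph_database")
log.step("velocity_anomaly_exceeded_threshold")
log.step("risk_score_computed", risk_score=0.91, threshold=0.87)
log.step("initiated_pause_and_verify")
print(log.to_json())
```

Because every step carries the same `trace_id`, the pathway can later be joined to the final risk score and to any human review that followed.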

Agentic transparency challenges mirror broader governance concerns explored in “Conversational AI 2026: agentic systems governance.”

AI Reasoning Trace Template (Audit + Legal Artifact)

Section 1: Meta-Context

- Transaction UUID

- Timestamp

- Model Version + Hash

- Risk Classification

Section 2: Feature Attribution

- Geographic Disparity: 32%

- Velocity Spike: 24%

- GNN Sub-Graph Match: 21%

- Behavioral Biometrics: 18%

- External Signals: 5%

Section 3: Human Oversight

- Analyst ID

- Decision Confirmation

- Override status

Section 4: Bias & Robustness

- Disparate impact test

- Drift score

- Data lineage reference

This artifact satisfies:

- AI Act Article 12 logging

- Article 13 transparency

- Article 14 human oversight

- PSD3 liability defense

- DORA incident reporting
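The four template sections could be carried as one typed record. The sketch below uses a Python dataclass with the attribution percentages from Section 2; every identifier, timestamp, and score in the example instance is a placeholder, and the flat field layout is an assumption rather than a regulatory schema.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass
class ReasoningTrace:
    # Section 1: meta-context
    transaction_uuid: str
    timestamp: str
    model_version: str
    model_hash: str
    risk_classification: str
    # Section 2: feature attribution, in percent
    feature_attribution: dict
    # Section 3: human oversight
    analyst_id: str
    decision_confirmed: bool
    override: bool
    # Section 4: bias and robustness
    disparate_impact_ratio: float
    drift_score: float
    data_lineage_ref: str

    def validate(self) -> None:
        """Reject traces whose attribution shares do not add up to 100%."""
        total = sum(self.feature_attribution.values())
        if abs(total - 100.0) > 1e-6:
            raise ValueError(f"attribution sums to {total}, expected 100")

    def to_json(self) -> str:
        self.validate()
        return json.dumps(asdict(self), sort_keys=True)


trace = ReasoningTrace(
    transaction_uuid="00000000-0000-0000-0000-000000000000",  # placeholder
    timestamp="2026-02-01T12:00:00Z",                         # placeholder
    model_version="fraud-model-demo",                         # placeholder
    model_hash=hashlib.sha256(b"placeholder model bytes").hexdigest(),
    risk_classification="high",
    feature_attribution={
        "geographic_disparity": 32.0,
        "velocity_spike": 24.0,
        "gnn_subgraph_match": 21.0,
        "behavioral_biometrics": 18.0,
        "external_signals": 5.0,
    },
    analyst_id="analyst-demo",
    decision_confirmed=True,
    override=False,
    disparate_impact_ratio=0.95,  # placeholder value
    drift_score=0.03,             # placeholder value
    data_lineage_ref="lineage-demo-ref",
)
serialized = trace.to_json()
```

Validating at serialization time means an incomplete or inconsistent attribution never reaches the audit store.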

2026 AI Audit Checklist for Fraud Systems

Data Provenance

- Lineage trace for every feature

- Representativeness documentation

- Bias parity testing every 24h

Automated Record-Keeping

- Model hash logging

- Immutable WORM storage

- Historical replay capability

Explainability

- SHAP / GNNExplainer integration

- Natural-language reasoning narrative

- Agentic routing logs

Oversight & Robustness

- HITL escalation triggers

- Adversarial attack testing

- Drift monitoring dashboard

If your architecture treats AI as a single output variable, it is non-compliant in 2026.
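Two of the checklist items, bias parity testing and drift monitoring, reduce to simple arithmetic. The sketch below computes a disparate impact ratio between two flag rates and a population stability index (PSI) over binned score distributions. The choice of these two metrics and the input values are assumptions made for illustration; the checklist itself does not prescribe them.

```python
import math


def disparate_impact_ratio(flag_rate_group: float, flag_rate_reference: float) -> float:
    """Ratio of fraud-flag rates between a monitored group and a reference group."""
    if flag_rate_reference <= 0:
        raise ValueError("reference flag rate must be positive")
    return flag_rate_group / flag_rate_reference


def population_stability_index(expected: list, actual: list, eps: float = 1e-6) -> float:
    """PSI = sum over bins of (actual - expected) * ln(actual / expected).

    Both inputs are bin proportions that each sum to 1; eps guards empty bins.
    """
    if len(expected) != len(actual):
        raise ValueError("both distributions need the same number of bins")
    psi = 0.0
    for e, a in zip(expected, actual):
        e, a = max(e, eps), max(a, eps)
        psi += (a - e) * math.log(a / e)
    return psi


ratio = disparate_impact_ratio(0.04, 0.05)                          # illustrative rates
stable = population_stability_index([0.5, 0.5], [0.5, 0.5])         # no drift
shifted = population_stability_index([0.5, 0.5], [0.7, 0.3])        # scores moved
```

Both values are cheap enough to compute on a schedule and to store next to the model hash, which is what makes a 24-hour parity cadence and a drift dashboard practical.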

Talent, Cost & Infrastructure Readiness

Fraud AI transformation requires:

- Graph ML engineers

- Federated learning architects

- Compliance-integrated MLOps

- XAI specialists

Recruitment and liability structuring increasingly reflect broader EU regulatory hiring shifts, similar to trends outlined in “Remote AI engineer jobs CEE 2026: tax & liability.”

Cost exposure in 2026 is dominated by:

- Refund reimbursements

- Audit remediation

- False positive churn

- Infrastructure retrofitting

Building explainability upfront is cheaper than defending non-compliance retroactively.

Winners vs Exposed Divide in 2026

| Institution Type | 2026 Position | Exposure Level |
| --- | --- | --- |
| Neuro-symbolic + trace logging | Enforcement resilient | Low |
| Pure black-box DL | Audit vulnerable | High |
| Federated consortium participant | Cross-border protected | Low |
| Siloed PSP | One-Leg Out exposed | High |
| Agentic without monologue logs | Governance breach risk | Severe |

Accuracy no longer differentiates leaders.

Auditability does.

2026 Executive FAQ on AI Fraud Detection in European Banks

1. What are the EU AI Act Article 50 disclosure requirements for banking fraud alerts?

Answer: Banks must disclose AI involvement in customer-facing fraud alerts and ensure transparent escalation pathways.

2. How does PSD3 change impersonation scam refund liability?

Answer: Banks must refund victims unless they prove gross negligence and demonstrate adequate monitoring through documented Reasoning Traces.

3. What is DORA compliance for AI-driven incident artifacts in 2026?

Answer: Fraud systems must automatically generate structured, replayable incident documentation aligned with operational resilience reporting.

4. Why is neuro-symbolic AI critical for AML explainability?

Answer: It combines deep learning performance with rule-based interpretability required under Article 13 transparency mandates.

5. Can a bank use “Black Box” models for fraud detection in 2026?

Answer: Legally, yes, but practically, no. While the EU AI Act doesn’t ban specific architectures, Article 13 mandates “transparency and interpretability.” A bank using an uninterpretable black box faces severe “Refund Liability” under PSD3 because it cannot provide the evidence required to prove it fulfilled its monitoring duties or to contest “Gross Negligence” claims.

6. What is the biggest compliance red flag in 2026?

Answer: Lack of immutable logging and the absence of a replayable model version trace.

Regulatory and Market Sources Shaping 2026 AI Fraud Detection in European Banks

European Commission – EU AI Act Final Text

High-risk AI system requirements, logging, and transparency mandates.

https://eur-lex.europa.eu/

European Union – Digital Operational Resilience Act (DORA)

Incident reporting and operational resilience standards for financial institutions.

https://eur-lex.europa.eu/

European Banking Authority – PSD3 & AML Guidelines

Payment liability, VoP implementation, and AML supervisory expectations.

https://www.eba.europa.eu/

European Central Bank – Instant Payment Framework

SEPA Instant settlement timing and fraud risk management.

https://www.ecb.europa.eu/

Conclusion: Accountable Intelligence Defines 2026

AI fraud detection in European banks is no longer defined by detection rates alone.

It is defined by:

- Article 50 disclosure readiness

- PSD3 refund defensibility

- DORA artifact generation

- Cross-border multilingual identity resolution

- Agentic transparency logging

The institutions that survive 2026 enforcement are not those with the highest model accuracy.

They are those with the most defensible Reasoning Trace.

Fraud AI is no longer experimental technology.

It is regulated sovereign infrastructure.

Author Bio

Fintech & AI Governance Analyst | Strategic Advisor on EU AI Act Compliance

Saameer is a recognized authority on the structural evolution of European banking infrastructure under the EU AI Act, PSD3, and DORA. With over a decade of experience in data-driven decision-making, he specializes in the transition from “Black Box” models to Accountable Intelligence.

Throughout 2025, Saameer played a pivotal role in advising Tier-1 European financial institutions on the deployment of Neuro-Symbolic Fraud Engines, ensuring millisecond-level detection without sacrificing the Article 13 Reasoning Traces now required by regulators. He is a leading voice in the “Sovereign Infrastructure” movement, helping CISOs and CROs bridge the gap between AI performance and regulatory survivability.

Through Tech Plus Trends, he delivers proprietary research on the economics of AI governance, federated learning for fraud consortiums, and the technical audit requirements for agentic orchestration. When he isn’t auditing AI reasoning logs, Saameer is a vocal advocate for “Transparency by Design,” helping the fintech ecosystem build resilient systems that treat explainability as a competitive asset, not a compliance burden.

Transparency Note (EU AI Act Article 50 Compliant)

Transparency Notice: This article was authored through a collaborative process between a human subject matter expert and generative artificial intelligence. While AI was utilized for data synthesis, structural optimization, and regulatory cross-referencing, the final manuscript has undergone rigorous human editorial review and factual verification. In accordance with Article 50(4) of the EU AI Act, the human author retains full editorial responsibility for the perspectives, conclusions, and accuracy of the content presented herein.

Legal Disclaimer

Disclaimer: The information provided in this article is for informational and educational purposes only and does not constitute legal, financial, or investment advice. The “2026” scenarios described are based on current regulatory trajectories (EU AI Act, PSD3, DORA) and market trends as of February 2026. Financial institutions should consult with qualified legal counsel and compliance specialists to address specific jurisdictional requirements. The author and publisher disclaim all liability for any direct or indirect loss or damage resulting from the use of or reliance on the information contained in this professional briefing.