Power, latency, and infrastructure constraints are reshaping sovereign AI in Europe

Europe is investing €20 billion into AI gigafactories, but a critical bottleneck is already emerging: power and grid capacity. While headlines focus on GPUs and sovereignty, the real constraint is electricity access and latency. This guide breaks down the hidden problems shaping Europe’s AI infrastructure in 2026 and what enterprises must do about them.

Key Takeaway

AI gigafactories in Europe are not just larger data centers—they are sovereign, regulation-aligned compute systems combining GPU clusters, energy infrastructure, and compliance layers. In 2026, enterprises must balance latency, power access, and EU AI Act requirements when choosing between Nordic training hubs, central inference clusters, or hybrid “split-stack” deployments.

What AI Gigafactories Are — And Why They Matter Now

The term sounds like hype. It isn’t.

AI gigafactories are ultra-scale compute facilities designed specifically for training and running large AI systems. They go far beyond traditional data centers in size, power density, and regulatory integration.

Why now?

Three forces collided in 2026:

- The EU’s InvestAI initiative, with its €20B fund for AI gigafactories, accelerated physical infrastructure buildouts

- GPU clusters crossed the 100,000-unit threshold, demanding new architectures

- The EU AI Act shifted compute from commodity to regulated infrastructure

Who does this impact?

- Tier-1 banks needing compliant inference

- Governments building sovereign AI systems

- Enterprises scaling RAG and agent-based architectures

- Cloud providers racing to localize compute

This isn’t about “where your app runs.” It’s about who controls the intelligence layer of Europe’s economy.

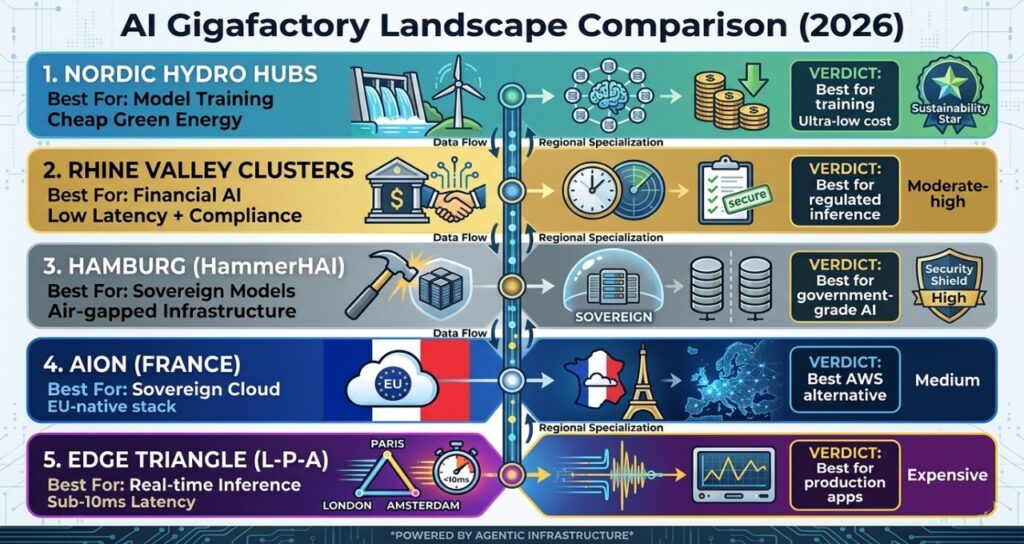

AI Gigafactory Landscape Comparison (2026)

| Location / Platform | Best For | Key Strength | Power Profile | Verdict |
| --- | --- | --- | --- | --- |
| Nordic Hydro Hubs | Model training | Cheap green energy | Ultra-low cost | Best for training |
| Rhine Valley Clusters | Financial AI | Low latency + compliance | Moderate-high | Best for regulated inference |
| Hamburg (HammerHAI) | Sovereign models | Air-gapped infrastructure | High | Best for government-grade AI |
| AION (France) | Sovereign cloud | EU-native stack | Medium | Best AWS alternative |
| Edge Triangle (London-Paris-Amsterdam) | Real-time inference | Sub-10ms latency | Expensive | Best for production apps |

Europe’s Gigafactory Nodes

Nordic HydroCore Training Hubs

What it does:

Massively parallel training environments powered by hydroelectric energy.

Best for:

Foundation model training and large-scale experimentation.

Strength:

Lowest energy cost in Europe. Natural cooling reduces operational overhead.

Limitation:

Latency. You’re looking at 45–60ms to Central Europe—too slow for real-time applications.
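As a rough sanity check on latency figures like the one above, the propagation-only floor for a fiber route can be estimated from distance alone. The sketch below assumes signals travel through optical fiber at about 200,000 km/s (roughly two-thirds of the speed of light in vacuum). The 1,500 km route length and the 1.3 path-stretch factor are hypothetical inputs, not measurements of any real Nordic-to-Central-Europe link.

```python
# Rough lower bound on round-trip time over optical fiber.
# Assumption: signal speed in fiber is about 200,000 km/s (~2/3 of c).
FIBER_KM_PER_MS = 200.0  # 200,000 km/s is 200 km per millisecond


def fiber_rtt_ms(route_km: float, path_stretch: float = 1.0) -> float:
    """Return the propagation-only round-trip time in milliseconds.

    route_km: nominal one-way route length (hypothetical input).
    path_stretch: multiplier for fiber paths longer than the nominal route.
    """
    if route_km < 0 or path_stretch < 1.0:
        raise ValueError("route_km must be >= 0 and path_stretch >= 1.0")
    one_way_ms = (route_km * path_stretch) / FIBER_KM_PER_MS
    return 2 * one_way_ms


# Hypothetical 1,500 km route whose fiber path is 30% longer than nominal.
print(f"{fiber_rtt_ms(1500, path_stretch=1.3):.1f} ms")
```

Measured round-trip times sit above this floor because routing, queuing, and processing at each end add delay on top of propagation.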

Rhine Valley Sovereign Clusters

What it does:

Hosts compliance-heavy AI workloads near financial and regulatory centers.

Best for:

Banks, fintech, and EU governance systems.

Strength:

Proximity to Frankfurt and Strasbourg enables low-latency, regulation-aligned inference.

Limitation:

Energy constraints. Grid capacity is already under pressure in this region.

Hamburg — HammerHAI Gigafactory

What it does:

A 1.2 GW sovereign AI facility designed for sensitive workloads.

Best for:

Government, healthcare, and defense-grade AI.

Strength:

Air-gapped infrastructure ensures maximum data sovereignty.

Limitation:

Limited accessibility for commercial workloads.

AION (France Sovereign Cloud)

What it does:

Provides a European alternative to US hyperscalers.

Best for:

Companies avoiding US cloud dependency.

Strength:

Full alignment with EU data and AI regulations.

Limitation:

Smaller ecosystem compared to AWS or Azure.

Latency Triangle (London–Paris–Amsterdam)

What it does:

Edge inference clusters delivering real-time AI responses.

Best for:

Trading systems, personalization engines, and media AI.

Strength:

Sub-10ms latency—critical for user-facing AI.

Limitation:

High operational cost due to energy and real estate.

Real-World Case Study

Morgan Stanley AI Assistant Deployment

Morgan Stanley implemented an AI-powered knowledge assistant to support financial advisors. The system reduced information retrieval time by over 30%, improving client response times and operational efficiency.

What made it work?

- Training workloads handled centrally

- Inference deployed close to financial hubs

- Strong compliance alignment

This mirrors Europe’s emerging pattern: distributed compute, centralized intelligence.

The Hidden Friction: Where Most Articles Stop

The Latency Tax

Training in Sweden. Serving users in Milan.

That gap creates a silent cost: latency.

Inference systems, especially those built on RAG architectures like those explored in enterprise AI search vs retrieval-based systems, depend on fast retrieval. Add 50 ms of network delay to every retrieval round trip, and response times degrade quickly.

Solution? A split-stack architecture:

- Train in the Nordics

- Deploy inference at the edge
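To see why the split matters, it helps to add up where a request spends its time. The following is a minimal sketch, assuming a RAG request makes three sequential calls that each cross the same network link. The hop names, processing times, and the 8 ms and 50 ms round-trip values are all illustrative assumptions rather than benchmarks.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Hop:
    """One sequential network call in a request path (values are hypothetical)."""
    name: str
    processing_ms: float  # time spent doing work at the remote end
    round_trips: int = 1  # network round trips needed for this hop


def request_latency_ms(hops: list[Hop], rtt_ms: float) -> float:
    """Sum processing time plus network time across sequential hops."""
    return sum(h.processing_ms + h.round_trips * rtt_ms for h in hops)


# Illustrative RAG path: embed the query, search the index, get the first token.
rag_path = [
    Hop("embed_query", processing_ms=5),
    Hop("vector_search", processing_ms=10),
    Hop("first_token", processing_ms=40),
]

edge = request_latency_ms(rag_path, rtt_ms=8)     # inference near the user
remote = request_latency_ms(rag_path, rtt_ms=50)  # inference in a distant region
print(f"edge: {edge:.0f} ms, remote: {remote:.0f} ms, penalty: {remote - edge:.0f} ms")
```

Because the hops run one after another, the network penalty scales with the number of round trips, which is why chatty retrieval pipelines are hit harder than single-call inference.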

The Power Bottleneck

Everyone talks about GPUs.

Almost no one talks about transformers, the electrical kind.

Europe’s grid infrastructure is already strained. In some regions, new connections are delayed until 2028–2030 (based on EU grid operator disclosures).

A single gigafactory consumes:

- 1.4 TWh/year

- Equivalent to 317,000 households

The real constraint isn’t compute.

It’s electricity.
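The two figures above can be put on a common footing with some unit conversion. This sketch takes the article's 1.4 TWh/year and 317,000 households as given and derives the average continuous power draw and the implied consumption per household; no other data is assumed.

```python
HOURS_PER_YEAR = 8760  # 365 days * 24 hours

annual_twh = 1.4      # annual consumption quoted in the article
households = 317_000  # household equivalence quoted in the article


def average_power_mw(twh_per_year: float) -> float:
    """Convert annual energy (TWh) into average continuous power (MW)."""
    mwh_per_year = twh_per_year * 1_000_000  # 1 TWh = 1,000,000 MWh
    return mwh_per_year / HOURS_PER_YEAR


def kwh_per_household(twh_per_year: float, n_households: int) -> float:
    """Return the implied annual consumption per household in kWh."""
    kwh_per_year = twh_per_year * 1_000_000_000  # 1 TWh = 1,000,000,000 kWh
    return kwh_per_year / n_households


print(f"{average_power_mw(annual_twh):.0f} MW average draw")
print(f"{kwh_per_household(annual_twh, households):.0f} kWh per household per year")
```

Under these figures the facility averages roughly 160 MW of continuous load, and the household comparison implies about 4,400 kWh per household per year.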

The “Trust Stack” Problem

Security isn’t just software anymore.

Blackwell-era systems are embedding confidential computing directly into hardware. That means:

- Data stays encrypted during processing

- Access controls are enforced at the silicon level

- Compliance becomes part of infrastructure

This aligns with enterprise trends seen in SOC2-aligned AI systems, but at a much deeper level.

How to Choose the Right Deployment Strategy

Choose Nordic Gigafactories if:

- You are a large enterprise or AI lab

- Your priority is training large models

- You need low-cost, high-scale compute

Choose Rhine Valley or Central EU clusters if:

- You are a regulated organization

- Your priority is compliance + latency

- You serve European customers directly

Choose Edge inference clusters if:

- You are building real-time AI applications

- Your priority is sub-10ms performance

- You rely on user-facing systems

Avoid this category entirely if:

- You don’t yet have a production AI workload
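The selection rules above can be expressed as a small decision function. This is one possible encoding, not a definitive policy: the `Workload` fields, the goal labels, and the order in which the rules are checked (real-time needs first, then regulation, then training) are assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Workload:
    """Hypothetical description of a workload; field names are illustrative."""
    in_production: bool
    primary_goal: str  # "training", "compliance", or "realtime"
    regulated: bool = False
    latency_budget_ms: Optional[float] = None


def recommend_deployment(w: Workload) -> str:
    """Map the selection rules onto a single recommendation."""
    if not w.in_production:
        return "none: no production AI workload yet"
    needs_sub_10ms = w.latency_budget_ms is not None and w.latency_budget_ms < 10
    if w.primary_goal == "realtime" or needs_sub_10ms:
        return "edge inference cluster"
    if w.regulated or w.primary_goal == "compliance":
        return "Rhine Valley / Central EU cluster"
    if w.primary_goal == "training":
        return "Nordic gigafactory"
    return "undetermined: review requirements manually"


print(recommend_deployment(Workload(in_production=True, primary_goal="training")))
print(recommend_deployment(Workload(True, "compliance", regulated=True)))
print(recommend_deployment(Workload(True, "realtime", latency_budget_ms=8)))
```

In practice a single organization may match more than one rule, which is where the hybrid split-stack approach comes in: run the function per workload rather than per company.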

The 6-Layer Sovereign AI Infrastructure Stack

- Energy Layer: Direct integration with renewable energy sources to ensure sustainability and supply stability

- Compute Layer: GPU clusters (Blackwell-class) handling training and inference workloads

- Interconnect Layer: High-speed links enabling clusters to function as a unified system

- Security Layer: Hardware-level encryption and confidential computing

- Orchestration Layer: Sovereign cloud and Kubernetes-based workload management

- Regulation Layer: Real-time monitoring for EU AI Act compliance

This stack is the physical counterpart to software systems like those discussed in AI knowledge infrastructure replacing enterprise search.
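One way to put the six layers to work is as a checklist for a deployment plan. The sketch below is a hypothetical helper, not part of any vendor tooling: it lists which layers a plan has not yet addressed. The plan entries are placeholders chosen for illustration.

```python
from enum import Enum


class Layer(Enum):
    """The six layers of the stack, in the order listed above."""
    ENERGY = "energy"
    COMPUTE = "compute"
    INTERCONNECT = "interconnect"
    SECURITY = "security"
    ORCHESTRATION = "orchestration"
    REGULATION = "regulation"


def missing_layers(plan: dict[Layer, str]) -> list[Layer]:
    """Return layers with no entry, or only a blank entry, in the plan."""
    return [layer for layer in Layer if not plan.get(layer, "").strip()]


# Hypothetical plan; descriptions are placeholders, not recommendations.
plan = {
    Layer.ENERGY: "renewable power purchase agreement",
    Layer.COMPUTE: "Blackwell-class GPU cluster",
    Layer.SECURITY: "confidential computing enabled",
    Layer.ORCHESTRATION: "Kubernetes",
}

print([layer.value for layer in missing_layers(plan)])
# → ['interconnect', 'regulation']
```

Treating the layers as an explicit enumeration makes gaps visible early, before a missing interconnect or compliance decision turns into a late-stage blocker.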

Contrarian Insight

The conventional view is that Europe’s AI strategy is limited by its dependence on non-European chips. The reality is harsher: Europe doesn’t have a chip problem—it has a power distribution problem. The bottleneck isn’t Nvidia. It’s the grid.

FAQ: AI Gigafactories in Europe

1. What is an AI gigafactory?

An AI gigafactory is a large-scale compute facility designed specifically for AI workloads, typically operating at 100 MW to 1 GW of capacity. It includes GPU clusters, energy systems, and compliance layers. These facilities enable training and deployment of large AI models at scale. Organizations should view gigafactories as strategic infrastructure rather than traditional IT assets.

2. Why is Europe investing in AI gigafactories?

Europe is investing to achieve digital sovereignty and reduce reliance on foreign cloud providers. These facilities ensure compliance with EU regulations and provide localized compute resources. The goal is to control critical AI infrastructure. Companies operating in Europe should align with these initiatives to reduce regulatory and operational risks.

3. What is the latency tax in AI infrastructure?

The latency tax is the performance degradation caused by physical distance between compute and users. It affects real-time AI systems, especially those relying on fast data retrieval. High latency can degrade user experience and system efficiency. Organizations should deploy inference systems closer to users to minimize this impact.

4. How much energy does an AI gigafactory consume?

AI gigafactories consume massive amounts of energy, often around 1.4 TWh annually for large-scale facilities. This is comparable to the energy usage of hundreds of thousands of households. Energy availability is becoming a key constraint. Companies must consider power access when planning AI deployments.

5. Are AI gigafactories compliant with the EU AI Act?

AI gigafactories are designed to support compliance but do not guarantee it automatically. They provide infrastructure for transparency, monitoring, and data governance. Compliance depends on how systems are implemented. Organizations must integrate compliance into both infrastructure and application layers.

6. What is the difference between training and inference locations?

Training involves building AI models using large datasets and requires massive compute resources, often located in low-cost energy regions. Inference is the real-time use of those models and requires low latency. The two are increasingly separated geographically. Companies should adopt hybrid architectures to optimize both.

7. Should companies build or lease AI infrastructure?

Building infrastructure offers control and compliance benefits but requires significant investment. Leasing provides flexibility and faster deployment but may introduce dependency risks. The decision depends on scale, regulatory needs, and budget. Most enterprises will adopt a hybrid approach combining both strategies.

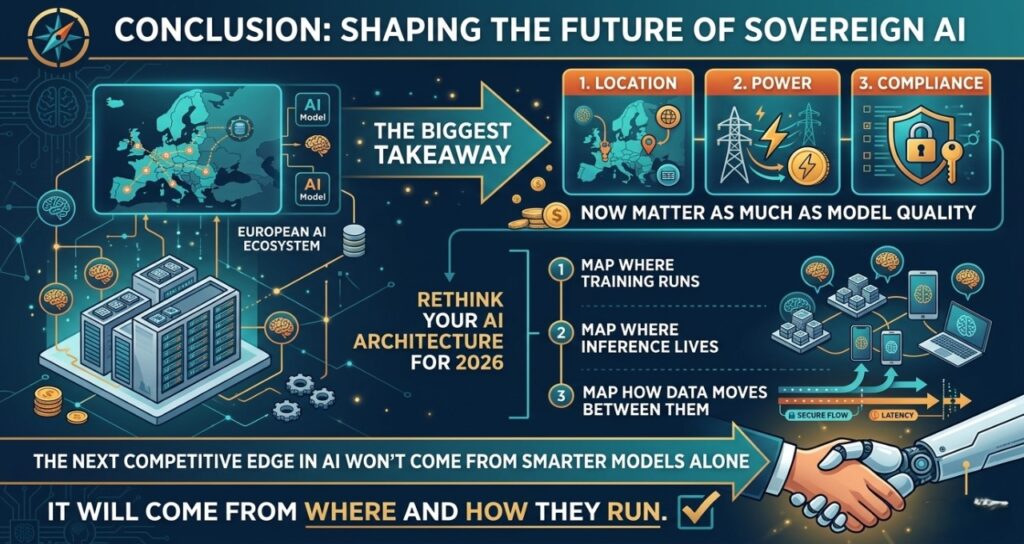

Conclusion

Europe’s AI gigafactories are not just infrastructure projects. They are strategic assets shaping the future of sovereign AI.

The biggest takeaway? Location, power, and compliance now matter as much as model quality.

If you’re building AI systems in 2026, rethink your architecture. Start mapping where your training runs, where your inference lives, and how your data moves between them.

Because the next competitive edge in AI won’t come from smarter models alone—it will come from where and how they run.

Sources

European Commission — InvestAI Initiative and EU AI Infrastructure Funding (2025–2026 policy releases)

Gartner — AI Infrastructure Forecasts and Enterprise Adoption Trends (2024–2026)

McKinsey & Company — Generative AI Cost and Infrastructure Impact Reports

IDC — Worldwide AI Infrastructure Spending Guide

European Commission — EU AI Act (Article 50: Transparency and Explainability Requirements)

NVIDIA — Blackwell (GB200) Architecture Briefings and Confidential Computing Documentation

EuroHPC Joint Undertaking — Sovereign Compute and HPC Deployment Plans

Scaleway — AION Sovereign Cloud Announcements

European Network of Transmission System Operators (ENTSO-E) — Grid Capacity and Energy Distribution Outlook (2025–2030)

Public disclosures and engineering estimates based on aggregated vendor benchmarks for AI gigafactory energy consumption and infrastructure design

AI Transparency & Editorial Disclosure

Editorial Integrity & Methodology

This deep-dive into Europe’s AI Gigafactories (2026) was developed by Saameer and the TechPlus Trends editorial team using a “Human-in-the-Loop” (HITL) analytical framework. While advanced AI systems were utilized to synthesize infrastructure benchmarks, energy consumption data (1.4 TWh/year), and grid operator disclosures, all strategic insights, specifically the “Latency Tax” and “Split-Stack Architecture” models, are the result of human editorial expertise. We prioritize original technical synthesis over automated content generation.

EU AI Act & Regulatory Compliance

In accordance with 2026 transparency standards (specifically Article 50 of the EU AI Act), readers are notified that this content explores the deployment of high-risk AI infrastructure. The discussion regarding Confidential Computing, Sovereign Interconnects, and Data Residency is provided for informational and strategic purposes and does not constitute legal or regulatory counsel.

Infrastructure Risk Disclaimer

The technical models described (including 800V HVDC power paths and District Heat Integration) represent emerging industry standards for 2026. TechPlus Trends advises engineering leaders and CTOs to conduct independent site-specific audits and consult with local grid authorities (ENTSO-E) and Data Protection Officers (DPO) before finalizing any sovereign compute deployments or long-term Power Purchase Agreements (PPAs).

Author Bio

Saameer is a technology journalist and infrastructure analyst with a focus on AI systems, sovereign compute, and digital regulation across Europe. With over five years covering enterprise AI adoption and EU policy shifts, he specializes in breaking down how infrastructure decisions shape real-world AI performance. His work centers on the intersection of GPU ecosystems, cloud platforms, and regulatory frameworks, and has been featured in industry briefings and technology strategy discussions across US and EU markets.