Update (2026): This analysis reflects the latest DORA enforcement guidance, NIS2 implementation realities, and JVM platform changes now in force across EU Tier-1 banks.

Warsaw’s Tier-1 banks are entering 2026 with one clear advantage: they operate inside one of Europe’s most tightly controlled, sovereign AI infrastructure environments. As detailed in Warsaw’s AI infrastructure versus Bucharest, these banks are running high-density, low-latency compute stacks designed for agentic systems, not experimentation.

https://techplustrends.com/warsaw-ai-infrastructure-vs-bucharest-2026/

Yet a new internal threat has emerged that most compliance programs are not built to detect: Shadow AI.

Not external attackers. Not sanctioned vendors.

But unauthorized agents, scripts, and LLM wrappers quietly consuming GPU cycles, moving data out of region, and breaking regulatory guarantees from inside the JVM itself.

Key Takeaways

The “Resilient Agentic Governance” Fortress: Managing AI exposure is no longer optional under the DORA 2026 Audit Framework. Failure to govern agentic workflows directly devalues the €300/Hour Resilience Premium paid to senior architects.

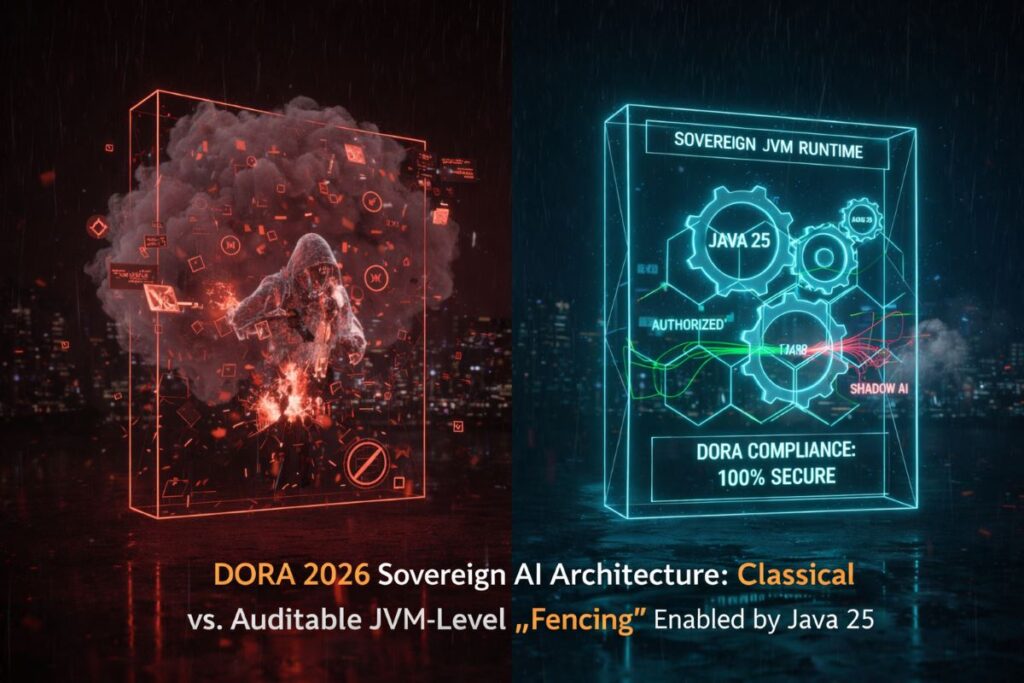

- Shadow AI is now one of the highest-risk DORA failure modes for banks running agentic systems

- Java 25’s virtual threads and scoped values give teams the building blocks for JVM-level resource fencing that can hard-block unauthorized AI execution

- DORA treats unmanaged AI assets as operational resilience violations, not IT hygiene issues

- Warsaw banks are responding with centralized sovereign enforcement, not cloud-only controls

- “DORA-ready” Java experts who can secure the runtime—not just write agents—are seeing rate premiums emerge

My Information Gain

Most Shadow AI discussions stop at policy: “don’t use ChatGPT,” “train employees,” “block browser extensions.”

What they miss is the runtime reality.

As Warsaw banks migrated to Java 25 to unlock agentic throughput (described in detail in Java 25 migration as a B2B gold mine), they dramatically increased concurrency, parallelism, and agent autonomy.

https://techplustrends.com/java-25-migration-warsaw-banking-b2b-gold-mine/

That same concurrency expansion is what makes Shadow AI possible at scale.

The real control plane is not HR policy or firewalls.

It is the JVM runtime itself.

Why Shadow AI Breaks Compliance Even When “Nothing Is Hacked”

Traditional security assumes a perimeter breach.

Shadow AI breaks systems without breaching anything.

An engineer pastes a personal LLM API key into a helper script.

A team installs an “AI copilot” extension on a locked-down workstation.

A background agent starts summarizing logs using an external endpoint.

Nothing explodes.

No alarms fire.

But the system has now violated the same In-Region Mandate that governs where banking workloads are allowed to execute.

https://techplustrends.com/dora-2026-warsaw-banking-b2b-rates/

https://techplustrends.com/warsaw-banking-in-region-mandate-java-25/

From a regulator’s perspective, this is not misuse.

It is loss of control over execution locality.
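One way to make execution locality checkable in code is a deny-by-default allowlist in front of every outbound call. The sketch below is a minimal illustration, not a description of any bank's actual control: the class name `EgressPolicy` and the host names are hypothetical, and it only covers code that routes requests through it, so network-level egress rules would still be needed alongside it.

```java
import java.net.URI;
import java.util.Locale;
import java.util.Set;
import java.util.stream.Collectors;

/** Hypothetical deny-by-default allowlist for outbound AI endpoints. */
final class EgressPolicy {
    private final Set<String> allowedHosts;

    EgressPolicy(Set<String> allowedHosts) {
        // Normalize to lower case so host comparison is case-insensitive.
        this.allowedHosts = allowedHosts.stream()
                .map(h -> h.toLowerCase(Locale.ROOT))
                .collect(Collectors.toUnmodifiableSet());
    }

    /** Returns true only for hosts that were explicitly approved. */
    boolean isAllowed(URI target) {
        String host = target.getHost(); // null for opaque or malformed URIs
        return host != null && allowedHosts.contains(host.toLowerCase(Locale.ROOT));
    }

    /** Throws before any request is sent if the target is not approved. */
    void require(URI target) {
        if (!isAllowed(target)) {
            throw new SecurityException(
                    "Outbound call blocked by deny-by-default policy: " + target);
        }
    }
}
```

A caller would invoke `policy.require(uri)` immediately before building the HTTP request, so a personal API key pasted into a helper script fails fast instead of silently leaving the region.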

Case Study / Real-World Scenario

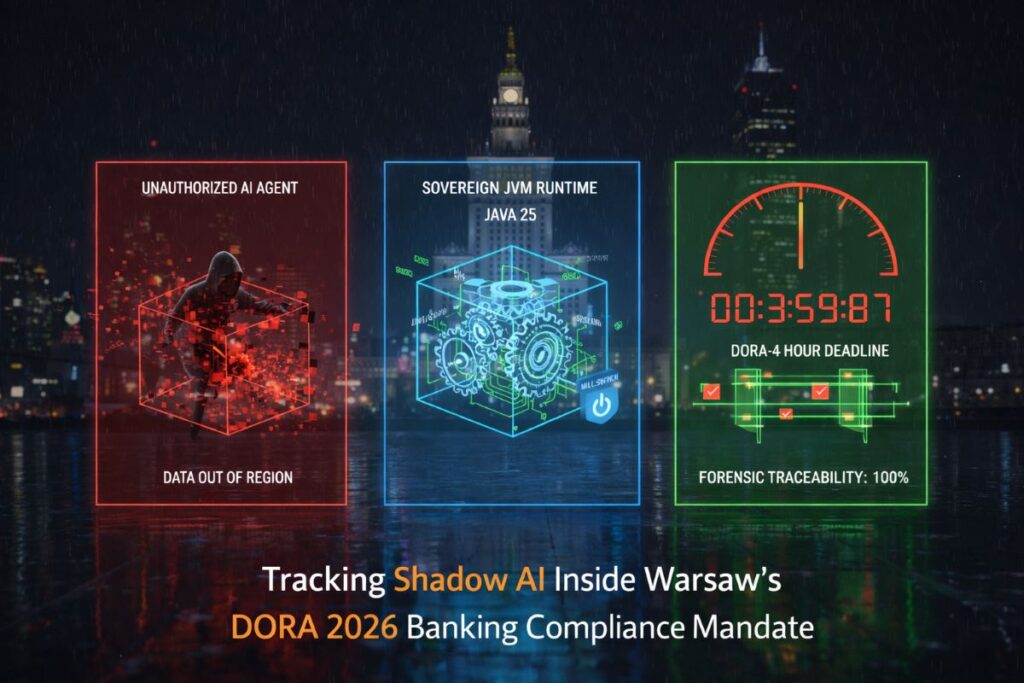

During a simulated DORA audit in late 2025, a Warsaw-based bank was asked to demonstrate how it would trace the origin of a data-processing anomaly inside its AI credit scoring system.

The bank could identify the model.

It could identify the dataset.

But it could not immediately identify the thread that initiated an outbound API call.

Under DORA, this matters.

As outlined in the DORA 2026 audit framework for Warsaw banking systems, major ICT-related incidents must be triaged and reported within hours, not days, with an initial reporting window of 4 hours.

https://techplustrends.com/dora-2026-audit-warsaw-banking-java-25/

https://techplustrends.com/shadow-ai-liability-trap-b2b-contractors/

Shadow AI turns that 4-hour window into an impossible forensic race.
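The gap in this scenario (the model and dataset are known, the initiating thread is not) can be narrowed by recording the calling thread at the moment an outbound request is made. The following is a minimal sketch, assuming JDK 21 or later for virtual threads; `OutboundCallAudit`, the agent ID, and the endpoint are hypothetical names introduced for illustration only.

```java
import java.net.URI;
import java.time.Instant;
import java.util.List;
import java.util.concurrent.CopyOnWriteArrayList;

/** Hypothetical audit log that ties each outbound call to the thread that made it. */
final class OutboundCallAudit {

    /** One entry per outbound call attempt. */
    record Entry(String agentId, String threadName, long threadId,
                 boolean virtual, String host, Instant at) {}

    private final List<Entry> entries = new CopyOnWriteArrayList<>();

    /** Call on the thread that is about to send the request. */
    Entry log(String agentId, URI target) {
        Thread current = Thread.currentThread();
        Entry entry = new Entry(agentId, current.getName(), current.threadId(),
                current.isVirtual(), target.getHost(), Instant.now());
        entries.add(entry);
        return entry;
    }

    /** Immutable snapshot for auditors or incident responders. */
    List<Entry> entries() {
        return List.copyOf(entries);
    }
}
```

Attribution only works if threads carry meaningful names, for example `Thread.ofVirtual().name("agent-credit-scoring-1").start(task)`. An unnamed virtual thread would leave the `threadName` field empty and push the forensic work back onto thread IDs.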

Technical Forensic Check: What DORA Auditors Actually Verify

This is where most banks quietly fail.

In a live DORA review, regulators do not ask whether AI was intended to run. They ask whether execution can be proven.

A DORA-ready forensic posture requires:

- Thread-Level Attribution: Can you show which JVM thread initiated an AI decision, not just which service?

- Human-Readable Thread Dumps: Can compliance teams (not just engineers) reconstruct execution paths within the 4-hour reporting window?

- Deterministic Kill Authority: Can unauthorized AI execution be terminated at runtime without disabling the entire system?

Reactive and asynchronous models obscure these answers.

Java 25’s structured concurrency (still a preview API in JDK 25) and virtual-thread traceability make them provable.

This is the difference between detecting an incident and surviving an audit.
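Deterministic kill authority, terminating one agent without disabling the whole system, can be sketched with a small registry of running tasks. This is an illustration under stated assumptions rather than a production design: it assumes JDK 21 or later, the class name `AgentKillSwitch` and the agent IDs are hypothetical, and cancellation is cooperative, so it only stops code that reacts to thread interruption.

```java
import java.util.HashMap;
import java.util.Map;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.Future;

/** Hypothetical registry that can cancel a single agent task by ID. */
final class AgentKillSwitch implements AutoCloseable {
    private final ExecutorService executor = Executors.newVirtualThreadPerTaskExecutor();
    private final Map<String, Future<?>> running = new HashMap<>();

    /** Starts the work on its own virtual thread and registers it under agentId. */
    synchronized Future<?> launch(String agentId, Runnable work) {
        Future<?> existing = running.get(agentId);
        if (existing != null && !existing.isDone()) {
            throw new IllegalStateException("Agent already running: " + agentId);
        }
        Future<?> future = executor.submit(work);
        running.put(agentId, future);
        return future;
    }

    /** Interrupts only the named agent; returns false if it was unknown or already finished. */
    synchronized boolean kill(String agentId) {
        Future<?> future = running.remove(agentId);
        return future != null && future.cancel(true);
    }

    /** Interrupts everything still running; intended for shutdown. */
    @Override
    public void close() {
        executor.shutdownNow();
    }
}
```

Calling `kill("shadow-agent")` leaves every other registered agent untouched, which is the property the checklist asks for. Work that ignores interruption would need a stronger mechanism, such as running it in a separate process.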

Who Benefits — and Who Gets Exposed — in 2026

| Group | Outcome in the Shadow AI Era |
| --- | --- |
| Banks with JVM-level fencing | Reduced audit risk, provable control |
| Java teams with runtime expertise | Elevated from developers to risk mitigators |
| Cloud-only security teams | Exposed by lack of execution visibility |
| Contractors outside sovereign perimeters | Increasingly disqualified |
| Engineers relocating into Warsaw hubs | Gain access to regulated, premium work |

The same gravitational forces pulling developers from Romania into Poland’s regulated banking ecosystem are now amplified by compliance, not just opportunity.

https://techplustrends.com/romanian-developers-moving-poland-2026/

Comparison Matrix

| Dimension | Sovereign JVM Enforcement (Warsaw) | Cloud-Only Controls (Elsewhere) |
| --- | --- | --- |
| Shadow AI detection | Runtime-level, deterministic | Network-level, probabilistic |
| Execution traceability | Thread-bound, auditable | Fragmented async traces |
| DORA readiness | Designed-in | Retro-fitted |
| Incident response | Minutes to attribution | Hours to uncertainty |
| Regulator confidence | High | Conditional |

CoE Framing (Center of Excellence Perspective)

Warsaw banks are formalizing Runtime Security Centers of Excellence—not SOCs.

These CoEs own:

- JVM configuration baselines

- Scoped-value policies for AI execution

- Resource quotas for GPU-bound threads

- Kill-switch authority at the runtime level

The JVM becomes a regulatory instrument, not just a performance layer.
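A resource quota of the kind listed above can be approximated inside the JVM with a permit counter. The sketch below is a simplified assumption of how such a quota might look: the JVM does not manage GPUs itself, so `GpuQuota` (a hypothetical name) only caps how many threads may submit GPU-bound work at once, and it rejects excess work instead of queueing it.

```java
import java.util.concurrent.Semaphore;

/** Hypothetical cap on concurrently running GPU-bound jobs. */
final class GpuQuota {
    private final Semaphore permits;

    GpuQuota(int maxConcurrentJobs) {
        this.permits = new Semaphore(maxConcurrentJobs);
    }

    /**
     * Runs the job on the calling thread if a permit is free.
     * Returns false without running it when the quota is exhausted.
     */
    boolean tryRun(Runnable job) {
        if (!permits.tryAcquire()) {
            return false; // deny rather than wait, so overuse is visible immediately
        }
        try {
            job.run();
        } finally {
            permits.release(); // always return the permit, even if the job throws
        }
        return true;
    }

    /** Permits currently free, useful for dashboards or audit snapshots. */
    int available() {
        return permits.availablePermits();
    }
}
```

Rejecting instead of blocking is a deliberate choice in this sketch: a refused job produces a signal a CoE can log and investigate, while a silently queued one does not.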

Strategic Implications for 2026

This is where DORA and NIS2 diverge.

NIS2 establishes regional resilience expectations across sectors.

DORA applies a sector-specific enforcement hammer to banks.

As detailed in NIS2 compliance across Poland and Romania, NIS2 asks whether systems are secure.

DORA asks whether systems are operationally controllable under stress.

https://techplustrends.com/techplustrends-com-nis2-compliance-poland-romania-2026/

Shadow AI fails the second test.

Why This Matters

Shadow AI changes:

- Procurement (runtime controls become contract requirements)

- Insurance (underwriters ask about unmanaged AI risk)

- Careers (runtime security knowledge outpaces framework fluency)

The cheapest AI experiment can become the most expensive audit failure.

What To Do Now

For banks:

- Inventory AI execution paths at the JVM level

- Implement deny-by-default external AI access

- Treat Shadow AI as an operational risk, not an HR issue
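As a starting point for the inventory step, a JVM can list its own live threads and their current stack position in a form a non-engineer can read. This is a rough sketch with assumptions stated plainly: `AgentThreadReport` and the `agent-` naming prefix are hypothetical, and `Thread.getAllStackTraces()` covers platform threads only, so virtual threads would need a separate dump (for example via `jcmd <pid> Thread.dump_to_file`).

```java
import java.util.ArrayList;
import java.util.Collections;
import java.util.List;
import java.util.Map;

/** Hypothetical one-line-per-thread summary of threads matching a naming convention. */
final class AgentThreadReport {

    /** Returns sorted lines such as "agent-x [WAITING] at com.example.Foo.bar(Foo.java:12)". */
    static List<String> describe(String namePrefix) {
        List<String> lines = new ArrayList<>();
        // Snapshot of live platform threads; virtual threads are not included here.
        for (Map.Entry<Thread, StackTraceElement[]> e : Thread.getAllStackTraces().entrySet()) {
            Thread thread = e.getKey();
            if (!thread.getName().startsWith(namePrefix)) {
                continue;
            }
            StackTraceElement[] stack = e.getValue();
            String top = stack.length > 0 ? stack[0].toString() : "(no frames)";
            lines.add(thread.getName() + " [" + thread.getState() + "] at " + top);
        }
        Collections.sort(lines);
        return lines;
    }
}
```

The report is only as good as the naming discipline behind it: threads started without the agreed prefix will not appear, which is itself a useful signal when reconciling the inventory against approved agents.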

For B2B Java professionals:

- Learn runtime fencing, not just agent frameworks

- Understand DORA incident reporting logic

- Position yourself as a runtime risk mitigator, not a coder

FAQs

1. Is Shadow AI illegal?

Ans: Not inherently, but unmanaged execution can violate DORA obligations.

2. Can policies alone stop Shadow AI?

Ans: No. Policies do not control threads.

3. Why Java 25 specifically?

Ans: Because it enables per-thread control and structured concurrency (the latter still a preview API in JDK 25).

4. Is this only a Warsaw problem?

Ans: No, but Warsaw is enforcing it first.

5. Does blocking Shadow AI reduce innovation?

Ans: No. It channels it into auditable paths.

6. Will regulators check this explicitly?

Ans: Increasingly, yes, during incident response.

Final Takeaway

In 2026 banking, the greatest AI risk is not what your agents do.

It is what you cannot prove they didn’t do.

Shadow AI is not a cultural issue.

It is a runtime failure.

Warsaw’s banks are responding by securing the JVM itself—and in doing so, redefining what “AI compliance” actually means.

Sources

- EU Digital Operational Resilience Act (DORA)

- ENISA guidance on ICT third-party risk

- OpenJDK Project Loom & Java 25 specifications

- European Banking Authority supervisory statements

Author Bio

Saameer Go is a senior technology journalist and analyst covering enterprise software, AI platforms, infrastructure, and EU technology regulation. With over 15 years of experience analyzing how policy, labor markets, and architecture decisions intersect, he focuses on long-term structural shifts rather than short-term hype.

Disclaimer: This article is provided for informational and educational purposes only. While every effort has been made to ensure the accuracy of the technical analysis regarding Java 25 and DORA 2026, the content does not constitute legal, financial, or professional compliance advice. Regulatory interpretations of DORA and NIS2 are subject to change by the European Supervisory Authorities (ESAs). Readers should consult with qualified legal counsel or certified compliance auditors to ve
