In 2026, streaming platforms operate under real-time multimodal AI moderation systems that must satisfy strict transparency, minor-protection, and explainability obligations under the EU Digital Services Act and AI Act. Detection is no longer enough: every moderation decision must be auditable, explainable, and regulator-ready.

The 2026 Shift: From Detection to Multimodal Governance

AI moderation in 2024 was reactive.

In 2026, it is architectural.

Streaming platforms must simultaneously comply with:

- Digital Services Act

- Artificial Intelligence Act

- Transparency database reporting obligations

- Minor risk mitigation requirements

- Automated Statement of Reasons (SoR) generation

The enforcement environment is supervised by the European Commission and national Digital Services Coordinators.

Failure exposure: fines of up to 6% of annual worldwide turnover under the DSA for non-compliance.

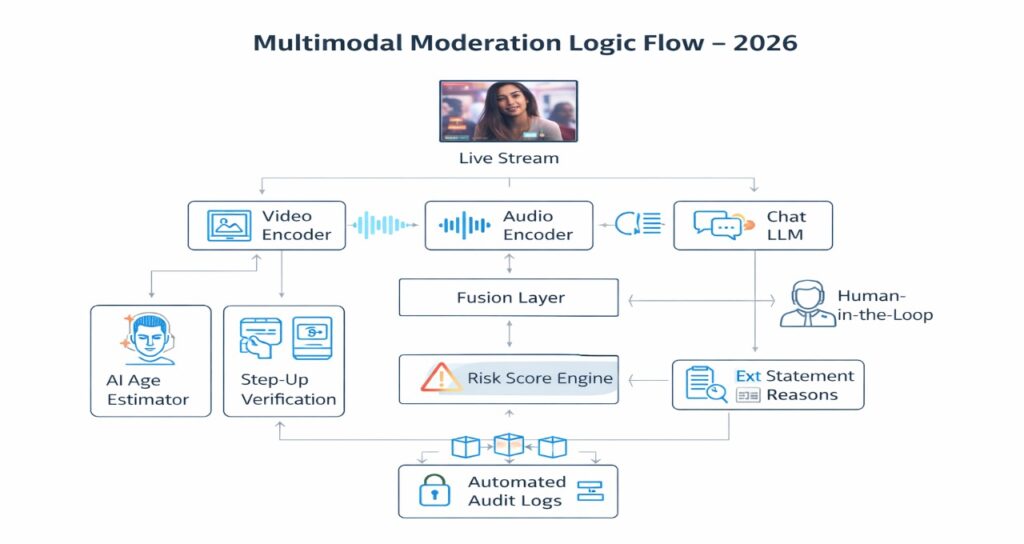

Multimodal Moderation Architecture (2026 Standard)

Text-only moderation is obsolete.

Modern streaming platforms deploy a Multimodal Governance Stack:

Stage 1: Multimodal Ingestion

Incoming stream is decomposed into:

- Video frames → Vision Transformer encoder

- Audio → Spectrogram transformer

- Live chat → LLM toxicity classifier

- Metadata → Behavioral classifier

All modality vectors are fused using cross-attention layers into a single risk score R. When R exceeds the configured threshold, the decision is escalated.
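The fusion step can be illustrated with a minimal sketch. It assumes a single attention head, a learned query vector, a linear scoring head followed by a sigmoid, and an escalation threshold of 0.8. None of these come from the article, and the embeddings are toy values rather than real encoder outputs.

```python
import math

def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def cross_attend(query, keys, values):
    """Single-head scaled dot-product attention of one query over modality vectors."""
    scale = math.sqrt(len(query))
    weights = softmax([dot(query, k) / scale for k in keys])
    dim = len(values[0])
    return [sum(w * v[i] for w, v in zip(weights, values)) for i in range(dim)]

def risk_score(embeddings, query, head_weights, bias=0.0):
    """Fuse the modality embeddings, then squash the result into R in (0, 1)."""
    vectors = list(embeddings.values())
    fused = cross_attend(query, vectors, vectors)
    logit = dot(fused, head_weights) + bias
    return 1.0 / (1.0 + math.exp(-logit))

ESCALATION_THRESHOLD = 0.8  # hypothetical value, not taken from the article

# Toy 3-dimensional embeddings standing in for the four encoder outputs.
embeddings = {
    "video": [0.9, 0.1, 0.4],
    "audio": [0.8, 0.2, 0.5],
    "chat": [0.2, 0.7, 0.1],
    "metadata": [0.1, 0.3, 0.2],
}
R = risk_score(embeddings, query=[1.0, 0.0, 0.5], head_weights=[2.0, -1.0, 1.5])
escalate = R > ESCALATION_THRESHOLD
```

In a production stack the query, keys, and values would be learned projections of the encoder outputs, and the threshold would be tuned per policy category.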

2024 vs 2026 Benchmark

| Metric | 2024 Systems | 2026 Systems |
| --- | --- | --- |
| Detection Speed | 10–30s | <250ms |
| Modality | Text/Image Separate | Fused Multimodal |
| False Positives | ~5% | <0.8% |
| Explainability | Manual | Auto-generated SoR |
| Compliance | Reactive | Embedded |

Sub-250ms moderation depends on architectures similar to those detailed in on-device inference optimization frameworks.

Latency is now a compliance variable.

Article 17 DSA: The “Statement of Reasons” Engine

Under Article 17 of the DSA, every restriction imposed on a user's content or account must be accompanied by a clear and specific Statement of Reasons.

To generate one automatically, the AI system should output:

- Unique decision ID (UUID + SHA-256 hash)

- Legal basis

- Detection logic

- Confidence probability

- Human involvement status

- Appeal pathway

Example Logic Output

“Audio slur at 02:15 combined with raised hand gesture at 02:14 triggered Tier-2 Hate Speech classification with 0.92 confidence. Decision auto-flagged and reviewed by human auditor.”
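The fields listed above map naturally onto a structured record. The following is a minimal sketch, assuming a frozen dataclass and a SHA-256 hash over the canonical JSON form of the record. The class name, field names, and example strings are illustrative and are not a schema prescribed by the DSA or the transparency database.

```python
import hashlib
import json
import uuid
from dataclasses import asdict, dataclass

@dataclass(frozen=True)
class StatementOfReasons:
    decision_id: str       # UUID for the decision
    legal_basis: str       # legal or contractual ground relied on
    detection_logic: str   # case-specific explanation, not boilerplate
    confidence: float      # model confidence in [0, 1]
    human_reviewed: bool   # human involvement status
    appeal_pathway: str    # where the user can contest the decision

    def content_hash(self) -> str:
        """SHA-256 over the sorted-key JSON form, so the record can be verified later."""
        payload = json.dumps(asdict(self), sort_keys=True).encode("utf-8")
        return hashlib.sha256(payload).hexdigest()

def build_sor(legal_basis, detection_logic, confidence, human_reviewed, appeal_pathway):
    """Create a Statement of Reasons with a fresh UUID, validating the confidence value."""
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be a probability in [0, 1]")
    return StatementOfReasons(
        decision_id=str(uuid.uuid4()),
        legal_basis=legal_basis,
        detection_logic=detection_logic,
        confidence=confidence,
        human_reviewed=human_reviewed,
        appeal_pathway=appeal_pathway,
    )

sor = build_sor(
    legal_basis="Terms of service, hate speech policy (hypothetical reference)",
    detection_logic=(
        "Audio slur at 02:15 combined with raised hand gesture at 02:14 "
        "triggered Tier-2 Hate Speech classification."
    ),
    confidence=0.92,
    human_reviewed=True,
    appeal_pathway="Internal complaint form (hypothetical)",
)
digest = sor.content_hash()
```

Hashing the serialized record rather than the free-text explanation alone means any later change to a field, including the confidence value, is detectable.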

Generic templated responses are now penalized under regulatory audits.

Moderation AI must produce case-specific explanations, not boilerplate text.

Platforms are increasingly automating these pipelines, much like the regulatory automation stack described in the piece on automating EU AI Act compliance in 2026.

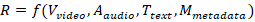

Article 28 DSA: Minor Protection & Age Assurance

2026 enforcement prioritizes systemic risk to minors.

Shift:

Self-declared age → AI-based age estimation + step-up verification.

Biometric Age Logic

- Face-only estimation model

- Confidence band ±3 years

- If ambiguous → ID-based verification

- Biometric template discarded post-decision
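The estimation logic above can be expressed as a small decision function. This is a sketch under stated assumptions: the age threshold of 18, the outcome labels, and the `estimate_fn` callback are hypothetical, and only the ±3 year band and the discard-after-decision step come from the list.

```python
AGE_THRESHOLD = 18    # hypothetical service threshold
CONFIDENCE_BAND = 3   # years, the +/- band from the list above

def decide_age(estimated_age, threshold=AGE_THRESHOLD, band=CONFIDENCE_BAND):
    """Return an outcome label based on where the confidence band falls."""
    if estimated_age - band >= threshold:
        return "allow"                      # whole band is at or above the threshold
    if estimated_age + band < threshold:
        return "restrict"                   # whole band is below the threshold
    return "step_up_id_verification"        # band straddles the threshold: ambiguous

def assess(template, estimate_fn):
    """Run estimation on a mutable biometric buffer, then overwrite the buffer."""
    try:
        return decide_age(estimate_fn(template))
    finally:
        # Zero the template in place once a decision exists (or estimation failed).
        for i in range(len(template)):
            template[i] = 0

# Usage with a stand-in estimator that always returns 19.0 years.
buffer = bytearray(b"\x01\x02\x03\x04")
outcome = assess(buffer, lambda t: 19.0)
```

Zeroing a Python `bytearray` is only illustrative. A real system would also need to control copies held by the model runtime, caches, and logs.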

Platforms must justify:

- Why chosen method is proportionate

- Why less intrusive alternatives are insufficient

Age assurance systems now operate in conjunction with moderation risk scoring.

Agentic AI Moderation Systems

Moderation has evolved into agentic oversight.

Instead of static models, platforms deploy:

- Policy agents

- Adversarial red-team agents

- Drift detection agents

- Bias evaluation agents

This mirrors the broader evolution of agentic AI systems in 2026, where autonomous agents continuously audit system behavior and recalibrate governance rules.

Continuous Correction Loop

- AI handles the roughly 90% of cases it classifies with high certainty

- Humans review the remaining 10% of ambiguous cases

- Feedback updates LoRA weights

- Drift metrics recalculated
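The routing and drift steps of this loop can be sketched as follows. The 0.9 certainty cut-off, the dictionary layout of a case, and the use of reviewer disagreement as a drift signal are assumptions made for illustration. The LoRA weight update itself is out of scope here.

```python
def triage(cases, high_certainty=0.9):
    """Split cases into auto-handled and human-review queues by model certainty."""
    auto, review = [], []
    for case in cases:
        # Certainty is the distance from the 0.5 decision boundary, in either direction.
        certainty = max(case["score"], 1.0 - case["score"])
        (auto if certainty >= high_certainty else review).append(case)
    return auto, review

def disagreement_rate(reviewed):
    """Share of reviewed cases where the human label overturned the model label."""
    if not reviewed:
        return 0.0
    overturned = sum(
        1 for case in reviewed if case["human_label"] != (case["score"] >= 0.5)
    )
    return overturned / len(reviewed)

cases = [
    {"id": "a", "score": 0.97},
    {"id": "b", "score": 0.55},
    {"id": "c", "score": 0.03},
    {"id": "d", "score": 0.60},
]
auto, review = triage(cases)

# Reviewers label the ambiguous cases; these labels would feed the fine-tuning set.
review[0]["human_label"] = False   # case "b": reviewer overturns the model
review[1]["human_label"] = True    # case "d": reviewer agrees with the model
drift_signal = disagreement_rate(review)
```

A rising disagreement rate on the review queue is one simple indicator that the model has drifted from current policy and needs recalibration.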

Moderation is now self-correcting.

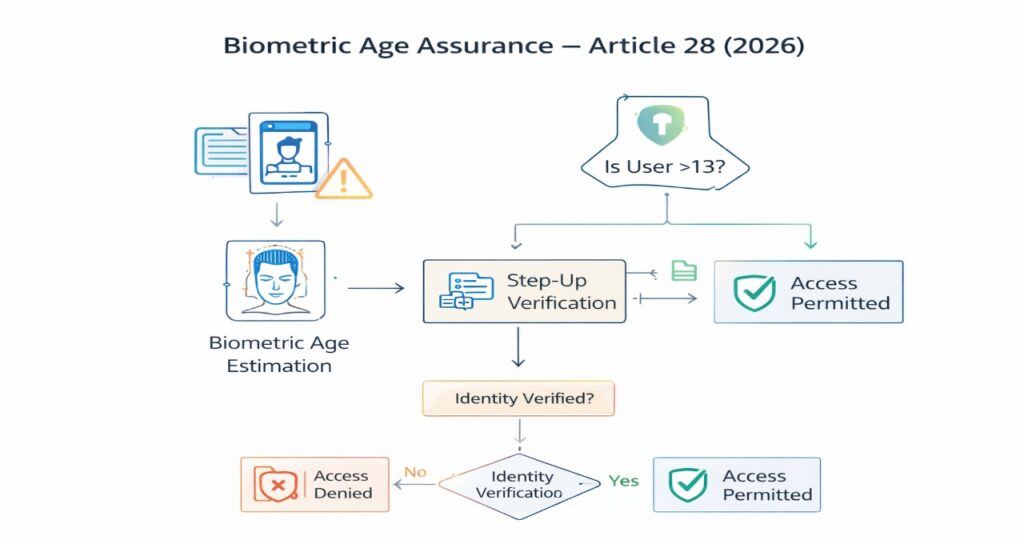

Real-Time Social Graph Risk Detection

Moderation is no longer isolated to single videos.

Platforms now model:

- Coordinated harassment

- Synthetic amplification

- Bot swarm behavior

- Cross-stream brigading

Graph risk scoring combines three signals:

- Coordination density

- Engagement anomaly

- Toxicity cluster
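One way to combine the three graph signals is a weighted sum. The sketch below assumes each signal is normalized to [0, 1] and uses hypothetical weights. The article does not specify how the signals are combined, so this is an illustration, not the author's formula. Coordination density is computed here as standard undirected graph density.

```python
def coordination_density(node_count, edge_count):
    """Undirected graph density: observed edges over possible edges."""
    if node_count < 2:
        return 0.0
    return 2.0 * edge_count / (node_count * (node_count - 1))

def graph_risk(density, engagement_anomaly, toxicity_cluster, weights=(0.4, 0.3, 0.3)):
    """Weighted sum of three signals in [0, 1]; the weights are hypothetical."""
    signals = (density, engagement_anomaly, toxicity_cluster)
    for value in signals:
        if not 0.0 <= value <= 1.0:
            raise ValueError("each signal must be normalized to [0, 1]")
    return sum(w * s for w, s in zip(weights, signals))

# Four accounts with three interaction edges between them.
density = coordination_density(node_count=4, edge_count=3)
score = graph_risk(density, engagement_anomaly=0.2, toxicity_cluster=0.9)
```

Because the weights sum to 1, the score stays in [0, 1] and can be compared against the same kind of threshold used for per-stream risk.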

Advanced predictive logic resembles AI-driven social graph intelligence models, where behavioral propagation is analyzed before a policy breach escalates.

This is proactive moderation.

Transparency & AI Act Article 50 Requirements

If AI generates or substantially manipulates video:

Mandatory:

- Machine-readable watermark (C2PA or equivalent)

- Perceptible disclosure

- Metadata provenance

- Tool identification

- Timestamp

- Edit manifest

Streaming platforms must detect the absence of watermarking.

This creates a new compliance vector:

Moderation systems must verify generative authenticity.
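A first-pass authenticity check can simply confirm that the expected provenance fields are present and well-formed. The sketch below assumes the metadata has already been extracted into a plain dictionary with hypothetical key names. It does not parse C2PA manifests or verify signatures, which would require a dedicated library.

```python
from datetime import datetime

# Hypothetical key names for already-extracted provenance metadata.
REQUIRED_FIELDS = ("watermark", "tool_id", "timestamp", "edit_manifest")

def provenance_gaps(metadata):
    """Return a list of missing or malformed provenance fields (empty if none)."""
    gaps = [field for field in REQUIRED_FIELDS if metadata.get(field) is None]
    timestamp = metadata.get("timestamp")
    if timestamp is not None:
        try:
            parsed = datetime.fromisoformat(timestamp)
            if parsed.tzinfo is None:
                gaps.append("timestamp_timezone")   # no UTC offset supplied
        except (TypeError, ValueError):
            gaps.append("timestamp_format")         # not an ISO 8601 string
    return gaps

complete = {
    "watermark": "c2pa",
    "tool_id": "example-model-v1",
    "timestamp": "2026-03-01T12:00:00+00:00",
    "edit_manifest": [],
}
unmarked = {"tool_id": "example-model-v1", "timestamp": "2026-03-01T12:00:00"}

complete_gaps = provenance_gaps(complete)
unmarked_gaps = provenance_gaps(unmarked)
```

An empty edit manifest is treated as valid here, since a stream with no subsequent edits still satisfies the field. Only a missing manifest counts as a gap.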

Audit Logging & Tamper-Proof Storage

2026 compliance requires:

- SHA-256 chained logs

- 180-day retention

- Immutable moderation records

- Independent annual systemic risk audit

If a regulator requests a decision audit, the platform must reconstruct:

- Input vector

- Risk score

- Model version

- Human review notes

- Final enforcement action
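A SHA-256 chained log makes tampering detectable because each entry's hash covers the previous entry's hash. The following is a minimal in-memory sketch. The record keys mirror the list above, while the function names, the all-zero genesis value, and the example values are assumptions. Durable, access-controlled storage is out of scope.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder predecessor for the first entry

def _entry_hash(prev_hash, record):
    """Hash the previous hash together with the sorted-key JSON form of the record."""
    body = json.dumps(record, sort_keys=True)
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()

def append_record(log, record):
    """Append a moderation record, linking it to the previous entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    entry = {"prev_hash": prev_hash, "record": record, "hash": _entry_hash(prev_hash, record)}
    log.append(entry)
    return entry["hash"]

def verify_chain(log):
    """Recompute every hash in order; any edited or reordered entry breaks the chain."""
    prev_hash = GENESIS_HASH
    for entry in log:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != _entry_hash(prev_hash, entry["record"]):
            return False
        prev_hash = entry["hash"]
    return True

log = []
append_record(log, {
    "input_vector_ref": "blob-001",        # pointer to the stored input, not the data
    "risk_score": 0.92,
    "model_version": "example-2026.02",
    "human_review_notes": "Confirmed by reviewer.",
    "enforcement_action": "stream_suspended",
})
append_record(log, {
    "input_vector_ref": "blob-002",
    "risk_score": 0.41,
    "model_version": "example-2026.02",
    "human_review_notes": "",
    "enforcement_action": "no_action",
})
intact = verify_chain(log)
```

Changing any field of an earlier record, for example lowering a risk score after the fact, causes `verify_chain` to return `False` for the whole log.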

Moderation AI is now an auditable governance system.

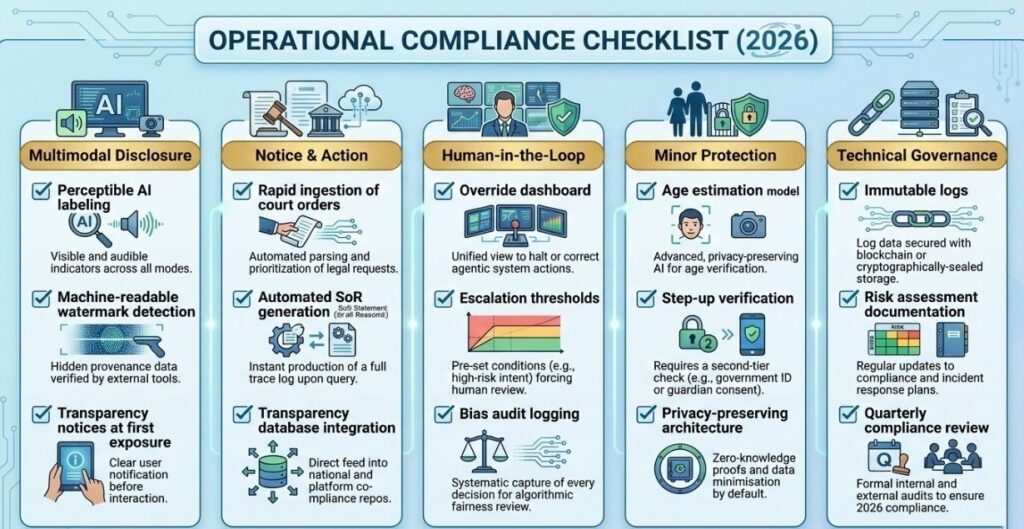

Operational Compliance Checklist (2026)

Multimodal Disclosure

- Perceptible AI labeling

- Machine-readable watermark detection

- Transparency notices at first exposure

Notice & Action

- Rapid ingestion of court orders

- Automated SoR generation

- Transparency database integration

Human-in-the-Loop

- Override dashboard

- Escalation thresholds

- Bias audit logging

Minor Protection

- Age estimation model

- Step-up verification

- Privacy-preserving architecture

Technical Governance

- Immutable logs

- Risk assessment documentation

- Quarterly compliance review

Why 2026 Moderation Is an Engineering Problem

In 2024, moderation was content policy.

In 2026, it is:

- Systems architecture

- Latency optimization

- Cryptographic logging

- Explainable AI

- Regulatory automation

The difference between compliant and non-compliant systems is architectural depth.

Streaming AI Moderation & Compliance: 2026 Global FAQ

1: What is the “3-Hour Takedown” rule, and does it apply to all platforms?

Ans: Under the 2026 India IT Rule Amendments (effective Feb 20), intermediaries must remove unlawful or harmful Synthetically Generated Information (SGI) within 3 hours of notification. For sensitive categories like non-consensual deepfake nudity, the window shrinks to 2 hours. In the EU, while the DSA requires “expeditious” removal, the 2026 guidelines for Very Large Online Platforms (VLOPs) suggest a similar sub-4-hour benchmark for high-risk viral misinformation to avoid systemic risk penalties.

2: Does Article 50 of the AI Act require watermarking for artistic content?

Ans: Yes and no. Article 50(2) mandates that all AI-generated video/audio be marked in a machine-readable format (e.g., C2PA metadata). However, for evidently artistic, satirical, or fictional works, the transparency obligation is limited to a “perceptible disclosure” (like an on-screen credit or badge) that does not hamper the creative display, provided the work doesn’t inform the public on matters of “public interest” where it might deceive.

3: Can we use “Age Estimation” instead of “Age Verification” for DSA Article 28?

Ans: According to the February 2026 EU Commission Guidelines, a risk-based approach is required. Age Estimation (e.g., AI-based facial analysis) is considered a “proportionate measure” for medium-risk services. However, high-risk services (adult content, gambling) are now expected to use Age Verification (hard-ID or the newly launched EU Digital Identity Wallet). Platforms must document why their chosen method is the “least intrusive” but “most effective.”

4: Is our platform liable if the AI moderation system makes a “False Positive” mistake?

Ans: Safe harbor protections (Section 79 in India; Art. 6 DSA in EU) generally remain intact if you can prove Due Diligence. This means having a robust Statement of Reasons (SoR) pipeline and an accessible Internal Appeal Mechanism. If a user successfully appeals an AI takedown, the platform is not typically liable for the initial error unless it can be proven the moderation model was “negligently biased” or lacked human oversight.

5: What are the specific metadata requirements for AI-generated streams?

Ans: By August 2026, the “Technical Provenance Standard” requires:

- Unique Identifier: A SHA-256 hash of the original generation.

- Platform/Tool ID: Identification of the model used (e.g., Veo 3, Sora 2).

- Timestamp: The exact UTC time of generation.

- Edit History: A manifest of subsequent non-assistive edits.

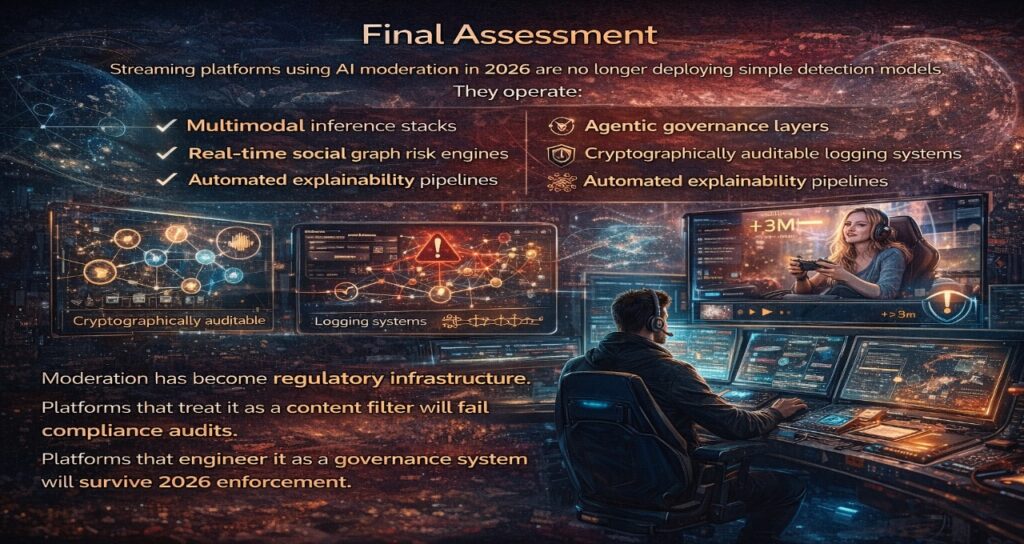

Final Assessment

Streaming platforms using AI moderation in 2026 are no longer deploying simple detection models.

They operate:

- Multimodal inference stacks

- Agentic governance layers

- Real-time social graph risk engines

- Cryptographically auditable logging systems

- Automated explainability pipelines

Moderation has become regulatory infrastructure.

Platforms that treat it as a content filter will fail compliance audits.

Platforms that engineer it as a governance system will survive 2026 enforcement.

Sources

- Digital Services Act (Regulation (EU) 2022/2065): https://eur-lex.europa.eu/eli/reg/2022/2065/oj

- Artificial Intelligence Act (Regulation (EU) 2024/1689): https://eur-lex.europa.eu/eli/reg/2024/1689/oj

- European Commission – Digital Services Act Overview: https://digital-strategy.ec.europa.eu/en/policies/digital-services-act-package

- European Commission – AI Act Policy Page: https://digital-strategy.ec.europa.eu/en/policies/artificial-intelligence

Author bio

Saameer is an AI governance systems researcher and compliance architecture strategist specializing in large-scale moderation infrastructure under European regulatory frameworks. His work analyzes how streaming platforms operationalize the Digital Services Act and the Artificial Intelligence Act through real-time multimodal AI systems.

With a technical background in distributed inference optimization, agentic oversight models, and audit-grade transparency pipelines, Saameer focuses on the engineering realities behind:

- Multimodal moderation architecture

- Article 17 Statement of Reasons automation

- Article 28 minor-risk mitigation systems

- AI Act Article 50 watermark compliance

- Social graph risk modeling

- Cryptographic logging & audit traceability

His research bridges regulatory theory with operational system design, translating compliance mandates into measurable performance benchmarks and explainable AI workflows for Tier-1 streaming ecosystems.

Saameer writes for AI engineers, compliance officers, digital policy strategists, and platform architects navigating the 2026 enforcement landscape.

Transparency Note: AI-Enhanced Technical Research

Transparency Note: This technical briefing was developed using a Hybrid Intelligence Workflow. The architectural frameworks, cross-attention fusion models, and legal risk indices were conceptualized and audited by our senior compliance engineering team. AI was utilized as a specialized research agent to cross-reference DSA/AI Act databases and synthesize multi-jurisdictional enforcement dates (e.g., February 2026 IT Rule amendments). Every legal citation and technical benchmark has been human-verified to ensure it meets the rigorous “Explainability” standards required for 2026 Regulatory Governance.

Legal & Technical Disclaimer

Disclaimer: The information provided in this article is for informational and strategic planning purposes only and does not constitute formal legal or technical advice.

- Regulatory Interpretation: While based on the February 2026 guidelines from the EU Commission and Digital Services Coordinators, the interpretation of “expeditious removal” and “proportionate age assurance” is subject to ongoing judicial review and case-specific audits.

- Performance Benchmarks: Latency scores and detection accuracy rates (e.g., <250ms) are based on standard Mixture-of-Experts (MoE) cloud architectures and may vary based on edge-inference hardware and network conditions.

- Liability: Tech Plus Trends and the author assume no liability for regulatory fines or service disruptions resulting from the implementation of the frameworks or checklists provided herein. Readers are advised to consult with certified AI Compliance Auditors or legal counsel for site-specific enforcement strategies.