In 2026, AI watermarking is no longer about copyright.

It’s about regulatory survival, algorithmic trust, and liability insulation.

Under Article 50 of the EU AI Act, creators who publish AI-assisted content in a professional capacity can qualify as “deployers.” This means you carry legal disclosure obligations, even if you’re a solo creator in Berlin, Paris, or Warsaw. These rules become legally binding on August 2, 2026.

Just like CISOs now face personal liability under NIS2 (see our breakdown of CISO personal liability in Germany 2026), creators are facing a new compliance frontier: machine-readable AI transparency.

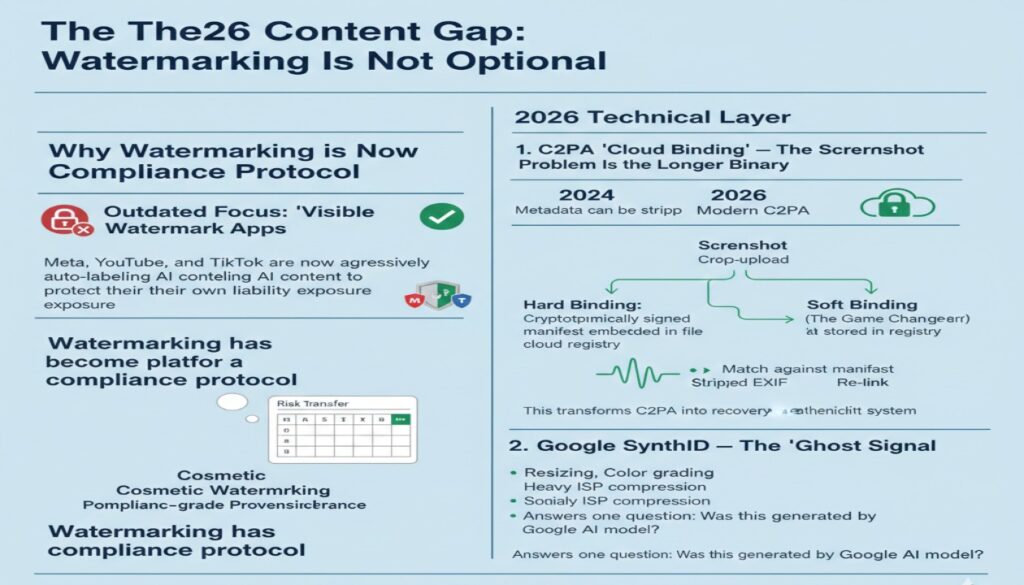

The 2026 Content Gap: Watermarking Is Not Optional

Most current content still focuses on “visible watermark apps” or “protecting photos from theft.” That is outdated. The real 2026 question is: Can your content survive platform detection systems and regulatory audits? Meta, YouTube, and TikTok are now aggressively auto-labeling AI content to protect their own liability exposure. This mirrors the shifts we see in Shadow AI Audit Fees: 2026 Pricing Matrix — where risk transfer, not time, drives pricing.

Watermarking has become a compliance protocol.

2026 Technical Layer

1. C2PA “Cloud Binding” — The Screenshot Problem Is No Longer Binary

Most guides say:

“Metadata can be stripped.”

That was true in 2024.

In 2026, modern C2PA implementations use Hard Binding + Soft Binding.

Hard Binding

Cryptographically signed manifest embedded in the file.
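To make the hard-binding idea concrete, here is a minimal sketch under a simplifying assumption: real C2PA manifests are signed with certificate-based signatures, while this example substitutes an HMAC with a made-up key purely for illustration. The names `DEMO_KEY`, `make_manifest`, and `verify_manifest` are hypothetical and not part of any C2PA tool.

```python
import hashlib
import hmac
import json

# Hypothetical demo key. Real C2PA signing relies on certificates, not a shared secret.
DEMO_KEY = b"demo-signing-key"


def make_manifest(asset_bytes: bytes, claim: dict) -> dict:
    """Bind a claim to the exact bytes of an asset and sign the result."""
    payload = {
        "asset_sha256": hashlib.sha256(asset_bytes).hexdigest(),
        "claim": claim,
    }
    serialized = json.dumps(payload, sort_keys=True).encode("utf-8")
    signature = hmac.new(DEMO_KEY, serialized, hashlib.sha256).hexdigest()
    return {"payload": payload, "signature": signature}


def verify_manifest(asset_bytes: bytes, manifest: dict) -> bool:
    """Return True only if the signature is valid and the asset bytes are unchanged."""
    serialized = json.dumps(manifest["payload"], sort_keys=True).encode("utf-8")
    expected = hmac.new(DEMO_KEY, serialized, hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, manifest["signature"]):
        return False
    current_hash = hashlib.sha256(asset_bytes).hexdigest()
    return manifest["payload"]["asset_sha256"] == current_hash
```

Because the manifest is tied to a hash of the exact file bytes, any re-encode or screenshot produces different bytes and the check fails. That fragility is the gap soft binding is meant to cover.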

Soft Binding (The Game Changer)

Perceptual hash stored in a cloud registry.

Even if someone:

- Takes a screenshot

- Crops the image

- Re-uploads via Telegram

- Strips EXIF metadata

Platforms can:

- Generate a perceptual hash

- Match it against the original manifest

- Re-link the image to verified credentials

This transforms C2PA into a recovery-based authenticity system.
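The matching step can be sketched with a simple average hash. This is an assumption for illustration: production registries use more robust fingerprints, and the article does not specify which algorithm platforms run. Images are modeled as plain lists of grayscale rows to keep the example dependency-free, and `find_match`, the registry layout, and the distance threshold are all hypothetical.

```python
from typing import Dict, List, Optional

Pixels = List[List[int]]  # grayscale image as rows of 0-255 values


def average_hash(pixels: Pixels, size: int = 8) -> int:
    """Shrink to size x size by block averaging, then threshold each cell at the mean.

    Assumes the image is at least size x size pixels.
    """
    height, width = len(pixels), len(pixels[0])
    cells = []
    for row in range(size):
        for col in range(size):
            # Average the block of source pixels that maps onto this cell.
            y0, y1 = row * height // size, (row + 1) * height // size
            x0, x1 = col * width // size, (col + 1) * width // size
            block = [pixels[y][x] for y in range(y0, y1) for x in range(x0, x1)]
            cells.append(sum(block) / len(block))
    mean = sum(cells) / len(cells)
    bits = 0
    for value in cells:
        bits = (bits << 1) | (1 if value > mean else 0)
    return bits


def hamming_distance(a: int, b: int) -> int:
    """Count the bits that differ between two hashes."""
    return bin(a ^ b).count("1")


def find_match(candidate: Pixels, registry: Dict[str, int], max_distance: int = 10) -> Optional[str]:
    """Return the manifest ID with the closest stored hash, or None if nothing is near."""
    candidate_hash = average_hash(candidate)
    best_id, best_distance = None, max_distance + 1
    for manifest_id, stored_hash in registry.items():
        distance = hamming_distance(candidate_hash, stored_hash)
        if distance < best_distance:
            best_id, best_distance = manifest_id, distance
    return best_id
```

The design point is that the lookup tolerates small differences (a brightness shift, a re-encode) instead of demanding byte-identical files, which is what lets a stripped copy be re-linked to its manifest.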

For serious creators, this is the difference between cosmetic watermarking and compliance-grade provenance.

2. Google SynthID — The “Ghost Signal”

SynthID embeds a statistical signature directly into pixels or audio waveforms.

It survives:

- Resizing

- Color grading

- Heavy lossy compression

- Social media re-encoding

It does not show an “Ingredient List.”

It answers one question:

Was this generated by a Google AI model?

In 2026, the strategic move is:

C2PA for transparency.

SynthID for forensic resilience.
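For intuition about how a pixel-level signal can be detected without any metadata, here is a toy spread-spectrum sketch. It is not SynthID's algorithm, which works differently and is not reproduced here. The key, the `strength` value, and the detection threshold are assumptions chosen only to make the idea visible on a flat list of grayscale values.

```python
import random
from typing import List, Sequence


def keyed_pattern(key: int, length: int) -> List[int]:
    """Pseudo-random +1/-1 sequence derived from a secret key."""
    rng = random.Random(key)
    return [rng.choice((-1, 1)) for _ in range(length)]


def embed(pixels: Sequence[int], key: int, strength: int = 4) -> List[int]:
    """Nudge each pixel up or down by `strength`, following the keyed pattern."""
    pattern = keyed_pattern(key, len(pixels))
    return [max(0, min(255, p + strength * w)) for p, w in zip(pixels, pattern)]


def detect(pixels: Sequence[float], key: int, strength: int = 4) -> bool:
    """Correlate the image with the keyed pattern; a high score suggests the mark is present."""
    pattern = keyed_pattern(key, len(pixels))
    mean = sum(pixels) / len(pixels)
    correlation = sum((p - mean) * w for p, w in zip(pixels, pattern)) / len(pixels)
    return correlation > strength / 2
```

Because detection relies on a statistical correlation spread across every pixel, a uniform brightness or contrast change weakens the score without erasing it. That is the general property the article means by forensic resilience.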

2026 Comparison: C2PA Metadata vs. Google SynthID

| Feature | C2PA (Content Credentials) | Google SynthID (Pixel-level) |
| --- | --- | --- |
| Technical Logic | “The Signed Envelope”: Attaches a cryptographically signed manifest (metadata) to the file. | “The Woven Thread”: Injects an imperceptible, statistical signal directly into pixels or audio waves. |
| Visibility | Invisible by default, but reveals an “i” icon on supported platforms (Instagram, YouTube). | Entirely invisible to the human eye; requires a specific detector tool (Gemini/Vertex AI). |
| Resilience | Vulnerable on its own: embedded metadata can be “stripped” by simple screenshots or some chat apps (WhatsApp/Signal) unless soft binding re-links it. | High: Designed to survive cropping, resizing, color filters, and aggressive lossy compression. |
| Information Depth | High: Shows the “Ingredient List”: which AI tool was used, which human edits were made, and timestamps. | Low: Only answers a binary question: “Was this generated by a Google AI model?” |
| Best For | Professional Authority: Proving a photo is “Real” or “Human-Edited” to maintain trust. | Discovery Protection: Ensuring your content is machine-detectable even if the file is tampered with. |
| Primary Adopters | Adobe, Microsoft, Nikon, Leica, OpenAI, Meta. | Google (YouTube, Gemini, Vertex AI, Pixel devices). |

The 2026 Winning Strategy: Use Both

Think of it this way:

- C2PA = Transparency Layer

- SynthID = Forensic Layer

If someone:

- Screenshots your content

- Strips metadata

- Reposts it as “real”

The pixel-level watermark survives.

This dual-layer defense is now becoming the de facto compliance standard for high-end creators.

Strategic Layer: Why This Matters for Monetization

In 2026, social media platforms are under regulatory pressure.

If they fail to label AI content, they risk fines of up to €15M or 3% of worldwide annual turnover, the AI Act's penalty tier for transparency breaches.

So, they are auto-tagging aggressively.

If your content lacks machine-readable proof of human edits, it may be:

- Shadow-banned

- Reduced in reach

- Flagged as “AI-generated”

What platforms tend to reward instead:

- Authenticated content

- Human-reviewed outputs

- Traceable provenance

Just as cybersecurity consultants optimize financial positioning under EU tax structures like those explained in Cybersecurity Net Pay Europe 2026 or Polish B2B frameworks like Ryczałt vs IP Box analysis, creators must now optimize for regulatory architecture — not just creativity.

Compliance is becoming a ranking factor.

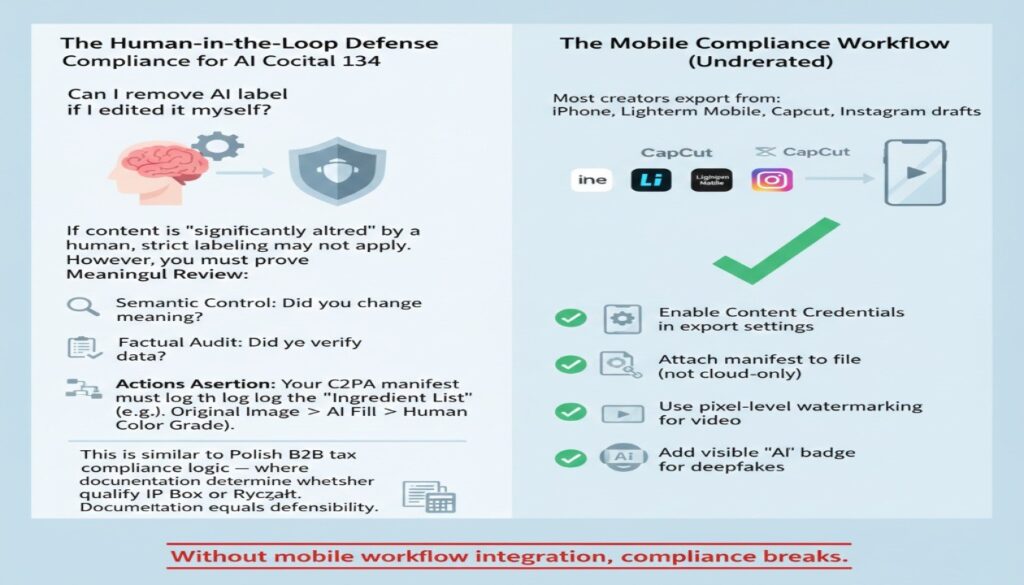

The Human-in-the-Loop Defense (Recital 134)

Many creators ask: Can I remove the label if I edited it myself? Under Recital 134, if content is “significantly altered” by a human, strict labeling may not apply. However, you must prove Meaningful Review:

- Semantic Control: Did you change the meaning?

- Factual Audit: Did you verify the data?

- Actions Assertion: Your C2PA manifest must log the “Ingredient List” (e.g., Original Image -> AI Fill -> Human Color Grade).

This is similar to Polish B2B tax compliance logic — where documentation determines whether you qualify for IP Box or Ryczałt (see our analysis: Poland 2026 Ryczałt vs IP Box Calculator).

Documentation equals defensibility.

The Mobile Compliance Workflow (Underrated)

Most creators export from:

- iPhone

- Lightroom Mobile

- CapCut

- Instagram drafts

You must:

✔ Enable Content Credentials in export settings

✔ Attach manifest to file (not cloud-only)

✔ Use pixel-level watermarking for video

✔ Add visible “AI” badge for deepfakes

Without mobile workflow integration, compliance breaks.

EU Regulatory Reality Check (February 2026)

| Requirement | Standard AI Content | Deepfakes (Art. 50(4)) |
| --- | --- | --- |
| Visible Label | Recommended | Mandatory |
| Machine-Readable | Mandatory | Mandatory |
| Human Review Exception | Possible | Not exempt |
| Fine Risk | Up to €15M or 3% of turnover | Up to €15M or 3% of turnover |
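The labeling rows of this table can be encoded as a small lookup, which is handy if you batch-process exports. This is one reading of the table rather than an official rule set: the `Obligations` type, the function name, and the "possibly exempt" wording are assumptions made for the sketch.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Obligations:
    machine_readable: str
    visible_label: str


def obligations_for(is_deepfake: bool, documented_human_review: bool = False) -> Obligations:
    """Map the table's labeling rows onto a lookup (an interpretation of the table)."""
    if is_deepfake:
        # Deepfakes: both layers are mandatory, and human review does not exempt them.
        return Obligations(machine_readable="mandatory", visible_label="mandatory")
    if documented_human_review:
        # Standard AI content with documented human review may fall under the exception.
        return Obligations(machine_readable="mandatory", visible_label="possibly exempt")
    return Obligations(machine_readable="mandatory", visible_label="recommended")
```

Note that the machine-readable layer stays mandatory in every branch. Only the visible label varies with content type and review status.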

Platforms are losing Safe Harbor protection if they fail to label AI content.

Meaning:

They will aggressively auto-tag.

Your watermarking strategy determines whether your content is:

- Trusted

- Flagged

- Downranked

- Shadow-banned

The “Common Icon” Mandate (2026 Consensus)

The EU AI Office Draft Code of Practice is pushing toward a unified badge system to prevent label fatigue.

Interim Common Icon:

- “AI” (General EU)

- “KI” (Germany)

- “IA” (France)

Placement Strategy (Technical Insight)

Platforms now use visual classifiers to detect:

- Presence of label

- Consistency of position

- Obstruction attempts

2026 consensus:

Place the badge in the top-right corner.

Inconsistent placement increases false positives.
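Consistent placement is easiest to guarantee when the position is computed rather than eyeballed. A minimal sketch follows, assuming a margin of 3% of the shorter image side. That ratio is an illustrative choice, not a figure from any EU guidance, and `badge_box` is a hypothetical helper.

```python
from typing import Tuple


def badge_box(
    image_width: int,
    image_height: int,
    badge_width: int,
    badge_height: int,
    margin_ratio: float = 0.03,  # assumed margin, relative to the shorter side
) -> Tuple[int, int, int, int]:
    """Return (left, top, right, bottom) for a badge anchored in the top-right corner."""
    margin = round(min(image_width, image_height) * margin_ratio)
    right = image_width - margin
    left = right - badge_width
    top = margin
    bottom = top + badge_height
    if left < 0 or bottom > image_height:
        raise ValueError("badge does not fit inside the image")
    return left, top, right, bottom
```

For a 1080x1920 vertical video frame and a 120x60 badge, this returns `(928, 32, 1048, 92)`, so every export places the label at the same relative position.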

This is no longer branding.

It is machine-alignment.

The 2026 “Ingredient List” Defense

C2PA supports an Actions Assertion that logs transformation history.

Example:

Original Image

→ AI Generative Fill

→ Human Retouch

→ Human Color Grade

→ Final Export

This “Ingredient List” proves:

- AI assistance was partial

- Human agency was decisive

- The output is not a synthetic deepfake

Without this:

You risk default “AI-generated” classification.

With it:

You qualify for Human-Edited exemption.
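Here is roughly what such a log could look like as data, plus a check that human edits were recorded after the last AI step. The action labels and IPTC source-type URIs are the ones I understand the C2PA and IPTC vocabularies to define, but the exact field layout (for example `description`) is illustrative and should be verified against the current specification. Treating any step without an AI source type as a human edit is an assumption of this sketch.

```python
# IPTC digital source type URIs that indicate generative AI involvement.
AI_SOURCE_TYPES = {
    "http://cv.iptc.org/newscodes/digitalsourcetype/trainedAlgorithmicMedia",
    "http://cv.iptc.org/newscodes/digitalsourcetype/compositeWithTrainedAlgorithmicMedia",
}

# Actions this sketch counts as human edits (an assumption for illustration).
HUMAN_EDIT_ACTIONS = {"c2pa.edited", "c2pa.color_adjustments", "c2pa.cropped"}

# Hypothetical assertion mirroring: Original -> AI Fill -> Retouch -> Color Grade.
ingredient_list = {
    "label": "c2pa.actions",
    "data": {
        "actions": [
            {"action": "c2pa.opened", "description": "Original image"},
            {
                "action": "c2pa.edited",
                "digitalSourceType": "http://cv.iptc.org/newscodes/digitalsourcetype/compositeWithTrainedAlgorithmicMedia",
                "description": "AI generative fill",
            },
            {"action": "c2pa.edited", "description": "Human retouch"},
            {"action": "c2pa.color_adjustments", "description": "Human color grade"},
        ]
    },
}


def human_edits_follow_ai(assertion: dict) -> bool:
    """True if at least one human edit is logged after the last AI-tagged step."""
    actions = assertion["data"]["actions"]
    ai_indexes = [
        i for i, step in enumerate(actions)
        if step.get("digitalSourceType") in AI_SOURCE_TYPES
    ]
    if not ai_indexes:
        return False  # no AI step logged, so there is nothing to qualify
    later_steps = actions[ai_indexes[-1] + 1:]
    return any(step["action"] in HUMAN_EDIT_ACTIONS for step in later_steps)
```

Calling `human_edits_follow_ai(ingredient_list)` returns `True` for the example chain, while a manifest that contains only the AI step returns `False`.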

The 2026 Creator Compliance Checklist

Before publishing AI-assisted content, verify:

- Does your tool support C2PA (Hard + Soft Binding)?

- Does the watermark survive recompression?

- Does your workflow log Actions assertions?

- Is your visible label placed consistently (top-right)?

- Are you compliant with Article 50 machine-readability?

- If Deepfake: Is visible labeling enabled?
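If you publish at volume, the checklist can be turned into a pre-publish gate. The sketch below simply mirrors the six questions above. The field names and the `failed_items` helper are hypothetical, and the answers still have to come from you or your tooling.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class PublishCheck:
    c2pa_hard_and_soft_binding: bool
    watermark_survives_recompression: bool
    actions_assertions_logged: bool
    label_top_right: bool
    machine_readable_marker: bool
    is_deepfake: bool = False
    visible_label_enabled: bool = False


def failed_items(check: PublishCheck) -> List[str]:
    """Return the checklist items that are not yet satisfied."""
    required = {
        "C2PA hard + soft binding": check.c2pa_hard_and_soft_binding,
        "Watermark survives recompression": check.watermark_survives_recompression,
        "Actions assertions logged": check.actions_assertions_logged,
        "Visible label placed top-right": check.label_top_right,
        "Article 50 machine-readable marker": check.machine_readable_marker,
    }
    if check.is_deepfake:
        # The visible-label question only applies to deepfakes.
        required["Visible labeling enabled (deepfake)"] = check.visible_label_enabled
    return [name for name, ok in required.items() if not ok]
```

An empty list means every item passed. Anything else is a list of what to fix before the export leaves your device.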

FAQ: AI Watermarking Tools for Content Creators (EU 2026)

1. Is AI watermarking legally mandatory in the EU in 2026?

Ans: Yes, in many cases.

Under Article 50 of the EU AI Act, deployers of AI-generated content must ensure outputs are machine-readable and detectable. For deepfakes, visible labeling is mandatory.

If your content is significantly altered by a human (Recital 134), stricter labeling may not apply — but you must prove human oversight through metadata or edit logs.

2. What is the difference between C2PA and invisible watermarking like SynthID?

Ans: C2PA attaches a signed metadata manifest showing how the content was created and edited.

SynthID embeds an imperceptible signal directly into pixels or audio that survives cropping and compression.

In 2026, best practice is to use both:

- C2PA for transparency

- Pixel-level watermarking for forensic resilience

3. Can AI watermarks survive screenshots or WhatsApp compression?

Ans: Metadata-based watermarks (C2PA) can be stripped by screenshots or certain messaging apps.

Pixel-level watermarking (e.g., SynthID, Steg.AI, Digimarc) is designed to survive:

- Cropping

- Re-encoding

- Resizing

- Lossy compression

For full protection, creators should combine metadata and embedded watermarking.

4. How do I avoid my content being shadow-banned as “unlabeled AI”?

Ans: To reduce algorithmic suppression risk:

- Embed C2PA metadata

- Use pixel-level watermarking

- Add visible labels when required (deepfakes)

- Log human edits

- Maintain export integrity (don’t re-export via metadata-stripping apps)

Platforms are aggressively auto-detecting AI content in 2026 due to regulatory pressure.

5. When can I remove the “AI-generated” label after editing?

Ans: Only when you satisfy the “Meaningful Human Review” test under Recital 134.

In 2026, the EU AI Office distinguishes between AI-assisted and AI-generated. You may legally remove the visible label (e.g., the “AI” icon) if your human intervention substantially alters the semantics, meaning, or factual accuracy of the output.

To safely remove a label, you must have an “Audit-Ready” trail:

- Actions Assertions: Your C2PA manifest must log specific human actions (e.g., c2pa.edited, c2pa.color_adjustments) on top of the initial c2pa.created (AI) action.

- Editorial Responsibility: You (or your entity) must be legally accountable for the content.

- The “Vibe Check” vs. The Law: Simple “prompt engineering” or “minor retouching” is NOT enough. If the core subject matter was generated by AI, the machine-readable requirement (Article 50(2)) remains even if the visible label is removed.

2026 Risk Note: Even if you legally remove your own label, platform algorithms (Meta/Google) may still Auto-Tag your content as “AI” if they detect pixel-level watermarks. Having a signed C2PA “Ingredient List” is the only way to successfully appeal these automated flags.

6. Are visible watermarks enough for compliance?

Ans: No.

Visible text overlays (e.g., “AI”) are not machine-readable.

EU compliance requires:

- Detectable metadata

- Embedded signals

- Platform-readable provenance markers

Visibility alone does not meet Article 50 requirements.

7. What is “machine-readable disclosure” under Article 50?

Ans: It is a “Multi-Layered” digital signal that software can detect without human eyes.

As of August 2026, “Machine-Readable” is no longer just a buzzword; it is a technical standard defined by the EU AI Office. A simple text overlay saying “Made with AI” does not comply. Compliance requires at least two of the following:

- Hard Binding: Cryptographically signed C2PA metadata embedded directly into the file header.

- Soft Binding (Cloud Re-linking): A perceptual hash (digital fingerprint) stored in a global registry. This allows platforms to identify AI content even if the file was screenshotted or the metadata was stripped.

- Provenance Certificates (For Text): Since text cannot easily hold watermarks, 2026 standards rely on “Provenance Certificates”—digitally signed manifests issued by the AI provider that formally guarantee the origin and degree of human review.

- Interwoven Signals: Pixel-level or waveform-level statistical “noise” (like Google SynthID) that survives heavy compression.
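The “at least two of the following” rule described above reduces to a simple count. The sketch assumes the four layer names from this list. The identifiers and the `meets_two_layer_rule` helper are hypothetical, and the threshold is taken from this article rather than quoted from a regulatory text.

```python
from typing import Iterable

# The four layers named in the list above.
RECOGNIZED_LAYERS = {
    "hard_binding",
    "soft_binding",
    "provenance_certificate",
    "interwoven_signal",
}


def meets_two_layer_rule(present: Iterable[str]) -> bool:
    """True if at least two distinct recognized layers are present."""
    recognized = set(present) & RECOGNIZED_LAYERS
    return len(recognized) >= 2
```

A file with `hard_binding` and `interwoven_signal` passes, while `hard_binding` plus a visible text overlay does not, because the overlay is not one of the recognized machine-readable layers.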

8. Do I need watermarking if I only use AI for minor edits?

Ans: Possibly.

If AI meaningfully contributes to the output, machine-readable disclosure may still be required.

The key threshold is whether AI materially shaped the final output — not just whether it was used at all.

When in doubt, document your human edits.

Final Strategic Verdict

In 2026:

- C2PA is your Passport.

- SynthID is your DNA.

- Documentation is your Shield.

AI watermarking is no longer optional.

It is the compliance layer that protects:

- Reach

- Revenue

- Reputation

- Legal standing

Just as CISOs adapted to NIS2 liability, creators must adapt to Article 50 transparency.

The creators who understand this shift will not only stay compliant.

They will dominate distribution.

Author Bio

Saameer is a European cybersecurity market analyst and B2B strategy writer focused on regulatory risk, AI compliance, and cross-border tax optimization for security professionals. His 2026 research covers EU AI Act enforcement, NIS2 liability exposure, Shadow AI governance, and the evolving economics of boutique security consulting across Germany, France, and Central Europe.

Saameer writes for senior CISOs, independent consultants, and digital creators navigating the intersection of technology, compliance, and financial strategy in the European market.

The Transparency Note

EU AI Act Transparency Disclosure (Art. 50): This technical analysis was developed using a Hybrid Editorial Workflow. While AI systems (ChatGPT, Gemini 3 Flash) assisted in data synthesis and cross-referencing EU regulatory drafts, the final output has undergone Meaningful Human Review.

Human-in-the-Loop (Recital 134) Audit:

- Editorial Responsibility: Saameer / Tech Plus Trends

- Fact-Check Status: Verified against Feb 2026 EU AI Office Guidelines.

- Originality: Strategic insights, local market comparisons (e.g., Poland/Germany), and the “Personal Liability” thesis are 100% human-authored.

The Legal Disclaimer

Professional Disclaimer: The information provided on techplustrends.com regarding the EU AI Act, NIS2, and AI watermarking tools is for informational and educational purposes only.

Not Legal Advice: While we strive for 2026 regulatory accuracy, AI laws are evolving rapidly. This content does not constitute legal, financial, or compliance advice. Under no circumstances shall the author or Tech Plus Trends be liable for any regulatory fines or loss of revenue incurred through the use of this information.

Technical Accuracy: Marking techniques (C2PA, SynthID) are subject to “State of the Art” (SOTA) limitations. Resilience against re-compression is not guaranteed. We recommend consulting with a qualified EU digital forensics or legal professional for specific compliance audits.